Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/yangguosb/article/details/87111511

Purpose

The Metastore stores metadata about databases and tables, such as schema definitions, privileges, and statistics.

The Metastore stores schema information (table definitions, including their location in HDFS, SerDe, columns, comments, types, partition definitions, views, access permissions, etc.) and statistics.

Deployment Modes

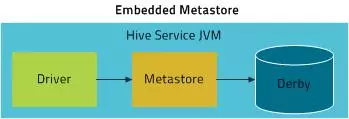

Embedded Mode

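- Characteristics: the Metastore service, the database, and HiveServer all run in the same process. HiveServer starts these services when it launches and uses the default embedded Derby database;

- Use case: experiments such as personal study and debugging (embedded Derby allows only one active session at a time);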

Local Mode

- Characteristics: the Metastore service runs in the same process as HiveServer, while the database runs as a separate process;

Remote Mode

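- Characteristics: the Metastore, HiveServer, and the database each run in their own process;

- Use case: production environments;

- Communication: HS2, Impala, HCatalog, and other clients talk to the Metastore through its Thrift API, and the Metastore talks to the database over JDBC;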
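Which of the three modes a client ends up in is driven by configuration. As a rough sketch: a non-empty `hive.metastore.uris` (for example `thrift://metastore-host:9083`) points the client at a standalone Metastore process (Remote Mode); otherwise the Metastore runs in-process and `javax.jdo.option.ConnectionURL` decides whether the database is embedded Derby (Embedded Mode) or a separate server (Local Mode). The two property names and the Derby default URL are real Hive settings; the `MetastoreModeGuesser` class is hypothetical and only a heuristic, not logic that Hive itself exposes.

```java
import java.util.Properties;

// Hypothetical heuristic: guesses the Metastore deployment mode from two real
// Hive configuration keys. Not Hive's own code.
class MetastoreModeGuesser {
    enum Mode { EMBEDDED, LOCAL, REMOTE }

    static Mode guess(Properties conf) {
        // A non-empty hive.metastore.uris means the client talks to a separate
        // Metastore process over Thrift.
        String uris = conf.getProperty("hive.metastore.uris", "").trim();
        if (!uris.isEmpty()) {
            return Mode.REMOTE;
        }
        // Otherwise the Metastore runs in the client's process; the backing
        // database comes from javax.jdo.option.ConnectionURL (Hive's default
        // is an embedded Derby database named metastore_db).
        String jdbcUrl = conf.getProperty("javax.jdo.option.ConnectionURL",
                "jdbc:derby:;databaseName=metastore_db;create=true");
        // "jdbc:derby:" without "//host" is Derby's embedded driver (same JVM);
        // MySQL, networked Derby, etc. run as separate database processes.
        boolean embeddedDerby = jdbcUrl.startsWith("jdbc:derby:")
                && !jdbcUrl.startsWith("jdbc:derby://");
        return embeddedDerby ? Mode.EMBEDDED : Mode.LOCAL;
    }
}
```

For example, an empty configuration is classified as EMBEDDED, pointing `javax.jdo.option.ConnectionURL` at a MySQL server gives LOCAL, and additionally setting `hive.metastore.uris` gives REMOTE.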

Supported Databases

Metadata Storage Tables

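- DBS: stores basic information about every database in Hive;

- DATABASE_PARAMS: stores database parameters, i.e. those specified with WITH DBPROPERTIES (property_name=property_value, …) in CREATE DATABASE;

- TBLS: stores basic information about Hive tables, views, and index tables;

- TABLE_PARAMS: stores table/view properties;

- TBL_PRIVS: stores table/view privilege grants;

- SDS: stores basic file storage information, such as INPUT_FORMAT, OUTPUT_FORMAT, and whether the data is compressed;

- SD_PARAMS: stores Hive storage properties;

- SERDES: stores the classes used for serialization/deserialization (SerDe);

- SERDE_PARAMS: stores SerDe properties and format information, such as row and column delimiters;

- COLUMNS_V2: stores the column definitions of tables;

- PARTITIONS: stores basic information about table partitions;

- PARTITION_KEYS: stores the partition key columns;

- PARTITION_KEY_VALS: stores partition key values;

- PARTITION_PARAMS: stores partition properties;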
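To see how these tables link together, here is a read-only sketch that queries the Metastore's backing RDBMS directly: DBS joins TBLS on DB_ID, TBLS joins SDS on SD_ID (giving the storage location), and SDS joins COLUMNS_V2 on CD_ID (giving the columns). The column names (DB_ID, SD_ID, CD_ID, LOCATION, NAME, TBL_NAME, COLUMN_NAME, TYPE_NAME, INTEGER_IDX) are assumptions based on the standard Hive schema scripts and may differ between Hive versions. The SQL also assumes MySQL-style unquoted identifiers (PostgreSQL needs quoted names such as "DBS"). The class and method names are hypothetical. Hive stores database and table names in lower case, and partition columns live in PARTITION_KEYS rather than COLUMNS_V2. The Thrift client in the next example remains the supported way to read and, above all, write metadata.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical read-only helper for the Metastore's backing database.
// Assumes the standard (MySQL-style) Hive metastore schema.
class MetastoreSql {
    // DBS.DB_ID -> TBLS.DB_ID, then TBLS.SD_ID -> SDS.SD_ID gives the location.
    static final String TABLE_LOCATION_SQL =
            "SELECT s.LOCATION FROM DBS d "
            + "JOIN TBLS t ON t.DB_ID = d.DB_ID "
            + "JOIN SDS s ON s.SD_ID = t.SD_ID "
            + "WHERE d.NAME = ? AND t.TBL_NAME = ?";

    // SDS.CD_ID -> COLUMNS_V2.CD_ID gives the (non-partition) columns in order.
    static final String TABLE_COLUMNS_SQL =
            "SELECT c.COLUMN_NAME, c.TYPE_NAME FROM DBS d "
            + "JOIN TBLS t ON t.DB_ID = d.DB_ID "
            + "JOIN SDS s ON s.SD_ID = t.SD_ID "
            + "JOIN COLUMNS_V2 c ON c.CD_ID = s.CD_ID "
            + "WHERE d.NAME = ? AND t.TBL_NAME = ? "
            + "ORDER BY c.INTEGER_IDX";

    /** Returns the storage location of db.table, or null if no row matches. */
    static String tableLocation(Connection conn, String db, String table) throws SQLException {
        try (PreparedStatement ps = conn.prepareStatement(TABLE_LOCATION_SQL)) {
            ps.setString(1, db);
            ps.setString(2, table);
            try (ResultSet rs = ps.executeQuery()) {
                return rs.next() ? rs.getString(1) : null;
            }
        }
    }

    /** Returns "name type" for each column of db.table, in column order. */
    static List<String> tableColumns(Connection conn, String db, String table) throws SQLException {
        List<String> cols = new ArrayList<>();
        try (PreparedStatement ps = conn.prepareStatement(TABLE_COLUMNS_SQL)) {
            ps.setString(1, db);
            ps.setString(2, table);
            try (ResultSet rs = ps.executeQuery()) {
                while (rs.next()) {
                    cols.add(rs.getString(1) + " " + rs.getString(2));
                }
            }
        }
        return cols;
    }
}
```

Given a JDBC `Connection` to the metastore database, `tableLocation(conn, "lrp_t1", "lrp_tt1")` would look up the same path that `getLocation` in the client example reads through the Thrift API.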

Client Usage Example

```java
import org.apache.hadoop.hive.conf.HiveConf;
import org.apache.hadoop.hive.metastore.IMetaStoreClient;
import org.apache.hadoop.hive.metastore.RetryingMetaStoreClient;
import org.apache.hadoop.hive.metastore.api.Database;
import org.apache.hadoop.hive.metastore.api.FieldSchema;
import org.apache.hadoop.hive.metastore.api.MetaException;
import org.apache.hadoop.hive.metastore.api.Table;
import org.apache.thrift.TException;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import java.util.List;

public class HiveClient {

    private static final Logger logger = LoggerFactory.getLogger(HiveClient.class);

    static final IMetaStoreClient client;

    static {
        try {
            // Load the connection settings (e.g. hive.metastore.uris) from hive-site.xml.
            HiveConf hiveConf = new HiveConf();
            hiveConf.addResource("hive-site.xml");
            // Wrap the Metastore client in a proxy that retries failed calls.
            client = RetryingMetaStoreClient.getProxy(hiveConf);
        } catch (MetaException ex) {
            // Fail fast instead of leaving client null and hitting NullPointerExceptions later.
            throw new ExceptionInInitializerError(ex);
        }
    }

    /** Returns all database names, or null on failure. */
    public static List<String> getAllDatabases() {
        try {
            return client.getAllDatabases();
        } catch (TException ex) {
            logger.error("Failed to list databases", ex);
            return null;
        }
    }

    /** Returns the database object, or null on failure. */
    public static Database getDatabase(String db) {
        try {
            return client.getDatabase(db);
        } catch (TException ex) {
            logger.error("Failed to get database " + db, ex);
            return null;
        }
    }

    /** Returns the table schema, or null on failure. */
    public static List<FieldSchema> getSchema(String db, String table) {
        try {
            return client.getSchema(db, table);
        } catch (TException ex) {
            logger.error("Failed to get schema of " + db + "." + table, ex);
            return null;
        }
    }

    /** Returns all table names in a database, or null on failure. */
    public static List<String> getAllTables(String db) {
        try {
            return client.getAllTables(db);
        } catch (TException ex) {
            logger.error("Failed to list tables in " + db, ex);
            return null;
        }
    }

    /** Returns the table's storage location (e.g. an HDFS path), or null on failure. */
    public static String getLocation(String db, String table) {
        try {
            return client.getTable(db, table).getSd().getLocation();
        } catch (TException ex) {
            logger.error("Failed to get location of " + db + "." + table, ex);
            return null;
        }
    }

    public static void main(String[] args) throws Exception {
        // Print every database together with its tables:
        // for (String dbName : client.getAllDatabases()) {
        //     System.out.println(dbName + " : " + client.getAllTables(dbName));
        // }

        // Create a database:
        // Database db1 = new Database("lrp_t1", "test", "/user/hive/warehouse/lrp_t1.db", null);
        // client.createDatabase(db1);

        // Create a table (also needs imports for StorageDescriptor, SerDeInfo,
        // LinkedList, HashMap and Map):
        // int now = (int) (System.currentTimeMillis() / 1000); // Hive stores times in seconds
        // StorageDescriptor sd = new StorageDescriptor();
        // // Table columns ("varchar" would need a length, e.g. "varchar(64)")
        // List<FieldSchema> cols = new LinkedList<>();
        // cols.add(new FieldSchema("col1", "string", null));
        // sd.setCols(cols);
        // // Storage location
        // sd.setLocation("/user/hive/warehouse/lrp_t1.db/lrp_tt1");
        // sd.setSerdeInfo(new SerDeInfo());
        // List<FieldSchema> partitionKeys = new LinkedList<>();
        // Map<String, String> parameters = new HashMap<>();
        // parameters.put("k1", "v1");
        // Table tbl = new Table("lrp_tt1", "lrp_t1", "luruipeng", now, now, 0, sd,
        //         partitionKeys, parameters, null, null, "MANAGED_TABLE");
        // client.createTable(tbl);
        // client.getAllTables("lrp_t1").forEach(System.out::println);

        Table table = client.getTable("lrp_t1", "lrp_tt1");
        System.out.println(table);
        client.close();
    }
}
```
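`RetryingMetaStoreClient.getProxy`, used in the static initializer above, does not return a plain client: `RetryingMetaStoreClient` implements `java.lang.reflect.InvocationHandler` and hands back a dynamic proxy around the real Thrift client, so that failed calls can be retried and the connection re-established. The sketch below illustrates only the general dynamic-proxy retry pattern with the JDK's `Proxy` API. `RetryingProxy` is a hypothetical class; the real Hive implementation additionally filters by exception type, waits between attempts, and reconnects.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Method;
import java.lang.reflect.Proxy;

// Hypothetical, simplified retrying proxy. Not Hive code.
final class RetryingProxy implements InvocationHandler {
    private final Object target;
    private final int maxRetries;

    private RetryingProxy(Object target, int maxRetries) {
        this.target = target;
        this.maxRetries = maxRetries;
    }

    /** Wraps target so every interface call is retried up to maxRetries extra times. */
    @SuppressWarnings("unchecked")
    static <T> T wrap(Class<T> iface, T target, int maxRetries) {
        return (T) Proxy.newProxyInstance(iface.getClassLoader(),
                new Class<?>[] {iface}, new RetryingProxy(target, maxRetries));
    }

    @Override
    public Object invoke(Object proxy, Method method, Object[] args) throws Throwable {
        int attempt = 0;
        while (true) {
            try {
                return method.invoke(target, args);
            } catch (InvocationTargetException e) {
                // Reflection wraps the target's exception; unwrap it.
                Throwable cause = e.getCause();
                if (attempt++ >= maxRetries) {
                    throw cause;
                }
                // A real client would check the exception type, sleep, and reconnect here.
            }
        }
    }
}
```

For example, wrapping a `Supplier` that throws twice before succeeding with `maxRetries = 3` makes `get()` return the value on the third attempt, while a `Supplier` that always throws fails after `maxRetries + 1` calls.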

References:

- Configuration parameters: https://cwiki.apache.org/confluence/display/Hive/AdminManual+Metastore+Administration#AdminManualMetastoreAdministration-MetastoreSchemaConsistencyandUpgrades

- Cloudera CDH 5.8 Hive Metastore configuration: https://www.cloudera.com/documentation/enterprise/5-8-x/topics/cdh_ig_hive_metastore_configure.html

- Metadata table structure: http://lxw1234.com/archives/2015/07/378.htm

- RetryingMetaStoreClient source: https://github.com/BUPTAnderson/apache-hive-2.1.1-src/blob/master/metastore/src/java/org/apache/hadoop/hive/metastore/RetryingMetaStoreClient.java