Loading the handwritten digit dataset (MNIST)

import keras

from keras import layers

import matplotlib.pyplot as plt

%matplotlib inline

import keras.datasets.mnist as mnist

(train_image, train_label), (test_image, test_label) = mnist.load_data()

train_image.shape

OUT:

(60000, 28, 28)

That is, the training set holds 60,000 images, each 28×28 pixels.

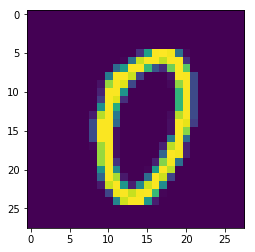

Use indexing to pull out a single image and display it

plt.imshow(train_image[0])

train_label[0]

OUT:

5

Training the model

model = keras.Sequential()

model.add(layers.Flatten()) # (60000, 28, 28) ---> (60000, 28*28)

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dense(10, activation='softmax'))

model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['acc'])

model.fit(train_image, train_label, epochs=50, batch_size=512)
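To make the pieces of this model more concrete, here is a minimal NumPy sketch of what `Flatten`, the `softmax` activation, and the `sparse_categorical_crossentropy` loss compute. It is only an illustration under simplifying assumptions: the helper names are hypothetical, the data is a stand-in rather than MNIST, and Keras's own implementation differs in details such as clipping probabilities before taking the log.

```python
import numpy as np

def flatten(images):
    """Reshape (N, 28, 28) images to (N, 784), one row per sample."""
    return images.reshape(images.shape[0], -1)

def softmax(logits):
    """Row-wise softmax, shifted by the row max for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def sparse_categorical_crossentropy(probs, labels):
    """Mean negative log-probability assigned to the true integer labels."""
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.log(picked).mean())

# Stand-in data (not MNIST): 4 blank "images" and hypothetical logits.
images = np.zeros((4, 28, 28), dtype=np.uint8)
flat = flatten(images)             # shape (4, 784)
logits = np.zeros((4, 10))         # equal scores for all 10 classes
probs = softmax(logits)            # every row is uniform: 0.1 per class
labels = np.array([5, 0, 4, 1])    # integer labels, no one-hot encoding
loss = sparse_categorical_crossentropy(probs, labels)  # equals ln(10)
```

The "sparse" in the loss name refers to the labels being plain integers, which is why `train_label` can be passed to `fit` without one-hot encoding.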

Evaluating the model

model.evaluate(train_image, train_label)

OUT:

[0.30629094220472769, 0.97965000000000002]

model.evaluate(test_image, test_label)

OUT:

[0.46858452253225152, 0.96919999999999995]

Predicting with NumPy

import numpy as np

np.argmax(model.predict(test_image[:10]),axis=1)

test_label[:10]

OUT:

array([7, 2, 1, 0, 4, 1, 4, 9, 6, 9], dtype=int64)

array([7, 2, 1, 0, 4, 1, 4, 9, 5, 9], dtype=uint8)
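The two arrays above can be compared element by element to see where the model is wrong. The sketch below copies the values from those outputs; the variable names are only illustrative.

```python
import numpy as np

# Values copied from the two outputs above.
predicted = np.array([7, 2, 1, 0, 4, 1, 4, 9, 6, 9])
actual = np.array([7, 2, 1, 0, 4, 1, 4, 9, 5, 9], dtype=np.uint8)

matches = predicted == actual
accuracy = matches.mean()                  # fraction of correct predictions
wrong_indices = np.flatnonzero(~matches)   # positions where they disagree
```

On these ten samples the model gets nine right; the only miss is at index 8, where it predicts 6 for a digit labeled 5.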

Optimizing the model

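- Increase the network's capacity until it overfits

- Take measures to suppress the overfitting

- Keep increasing the capacity until it overfits again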
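One rough way to decide when a model has started to overfit is to watch for the point where the validation loss stops improving. The sketch below assumes you have a list of per-epoch validation losses, such as the `val_loss` entry in the history returned by `fit` when `validation_data` is passed. The function name, the `patience` threshold, and the numbers are made up for illustration and are not results from this post.

```python
def overfit_epoch(val_loss, patience=3):
    """Return the 1-based epoch with the lowest validation loss if at least
    `patience` later epochs failed to improve on it, otherwise None."""
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    if len(val_loss) - 1 - best_epoch >= patience:
        return best_epoch + 1
    return None

# Made-up numbers for illustration only.
val_loss = [0.50, 0.40, 0.35, 0.36, 0.38, 0.41]
epoch = overfit_epoch(val_loss)   # validation loss bottoms out at epoch 3
```

A `None` result means the validation loss was still improving near the end of training, so there is room to add capacity or train longer.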

Increasing the network's capacity

# Add a few more layers

model = keras.Sequential()

model.add(layers.Flatten()) # (60000, 28, 28) ---> (60000, 28*28)

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dense(10, activation='softmax'))

model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['acc'])

model.fit(train_image, train_label, epochs=50, batch_size=512, validation_data=(test_image, test_label))
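"Capacity" can be made concrete by counting trainable parameters. The sketch below uses a hypothetical helper (not a Keras function) to count the weights and biases in a stack of `Dense` layers, then compares the original one-hidden-layer model with the deeper one defined here.

```python
def dense_param_count(layer_sizes):
    """Count weights and biases for a stack of Dense layers.

    layer_sizes lists the input width followed by each layer's units.
    """
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

small = dense_param_count([28 * 28, 64, 10])           # one hidden layer
large = dense_param_count([28 * 28, 64, 64, 64, 10])   # three hidden layers
```

This gives 50,890 parameters for the first model and 59,210 for the deeper one. Most of the parameters sit in the first layer, so adding 64-unit hidden layers increases depth more than it increases the total count.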

Optimizing the model further

# Add Dropout layers

model = keras.Sequential()

model.add(layers.Flatten()) # (60000, 28, 28) ---> (60000, 28*28)

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dropout(0.5))

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dropout(0.5))

model.add(layers.Dense(10, activation='softmax'))

model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['acc'])

model.fit(train_image, train_label, epochs=200, batch_size=512, validation_data=(test_image, test_label))
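To illustrate what `Dropout(0.5)` does, here is a minimal NumPy sketch of inverted dropout: during training each unit is zeroed with probability `rate` and the survivors are scaled by `1 / (1 - rate)`, while at inference the input passes through unchanged. The function and the stand-in activations are illustrative assumptions, not the Keras implementation.

```python
import numpy as np

def dropout(x, rate, training, rng):
    """Inverted dropout: zero each unit with probability `rate` during
    training and scale survivors by 1 / (1 - rate); identity otherwise."""
    if not training or rate == 0.0:
        return x
    keep_mask = rng.random(x.shape) >= rate
    return x * keep_mask / (1.0 - rate)

rng = np.random.default_rng(0)       # fixed seed so the sketch is repeatable
activations = np.ones((1000, 64))    # stand-in for a Dense layer's output
train_out = dropout(activations, rate=0.5, training=True, rng=rng)
infer_out = dropout(activations, rate=0.5, training=False, rng=rng)
```

With `rate=0.5`, each training-time output is either 0 or twice its input, so the expected activation stays the same as at inference. Because dropout is only active during training, the training accuracy reported by `fit` is measured with units dropped, while the validation accuracy is measured without dropout.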
