Cleaned data on the cluster:

Data model:

baidu CN E 20160715153139 4.82.54.2 v2.go2yd.com http://v1.go2yd.com/user_upload/1531633977627104fdecdc68fe7a2c4b96b2226fd3f4c.mp4_bd.mp4 5826

Start Hive and create the corresponding partitioned external table

Create the table

create external table g6_access(

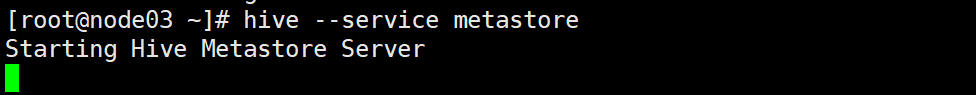

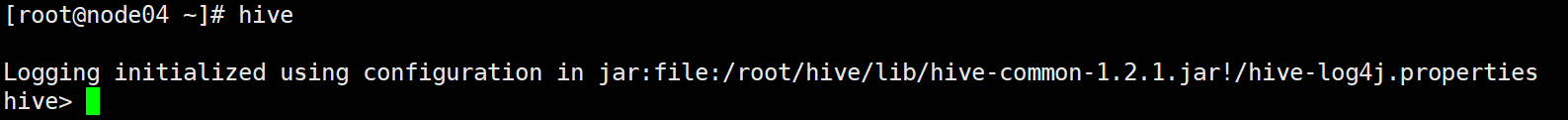

cdn string,

region string,

level string,

time string,

ip string,

domain string,

url string,

traffic bigint

) partitioned by (day string)

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t'

LOCATION '/g6/hadoop/access/clear'将相关的数据源复制到 Hive表对应的Location里面

hadoop fs -cp /g6/hadoop/access/output/day=20190325 /g6/hadoop/access/clear/day=20190325

Note: a partition is a directory that contains the data files, and the table's LOCATION holds that entire partition directory.
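The directory layout can be checked without leaving the Hive CLI, using its built-in `dfs` command. This is only a sketch: it assumes the copy has already succeeded and reuses the paths from this post.

```sql
-- List the table's LOCATION: it should contain the partition directory day=20190325
dfs -ls /g6/hadoop/access/clear;

-- List the partition directory: it should contain the data files themselves
dfs -ls /g6/hadoop/access/clear/day=20190325;
```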

Finally, update the table so that Hive registers the new partition

alter table g6_access add if not exists partition(day='20190325');
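To confirm that the partition was registered, list the table's partitions. As an alternative to adding each partition by hand, `msck repair table` scans the table's LOCATION and registers any partition directories the metastore does not yet know about. The sketch below assumes the table and paths used in this post.

```sql
-- Confirm that the metastore now knows about the partition
show partitions g6_access;

-- Alternative to ALTER TABLE ... ADD PARTITION:
-- discover partition directories that exist under LOCATION but are missing from the metastore
msck repair table g6_access;
```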

Query the table
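A couple of example queries against the new partition. They are illustrative only: the column names come from the table definition in this post, and the aggregation is an assumed use case rather than something the original data set requires.

```sql
-- Look at a few rows of the partition to confirm the fields were parsed correctly
select * from g6_access where day = '20190325' limit 10;

-- Total traffic per domain for that day
select domain, sum(traffic) as total_traffic
from g6_access
where day = '20190325'
group by domain;
```

Filtering on the partition column `day` lets Hive read only that partition's directory instead of scanning the whole table.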