Key Concepts

Machine Learning [7]: Dimensionality Reduction with the PCA Algorithm (Handwritten Notes)

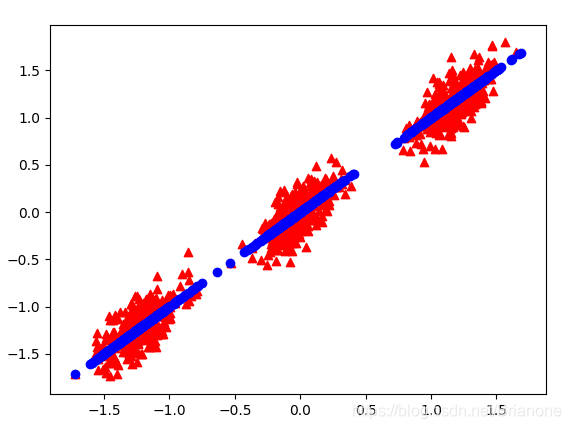

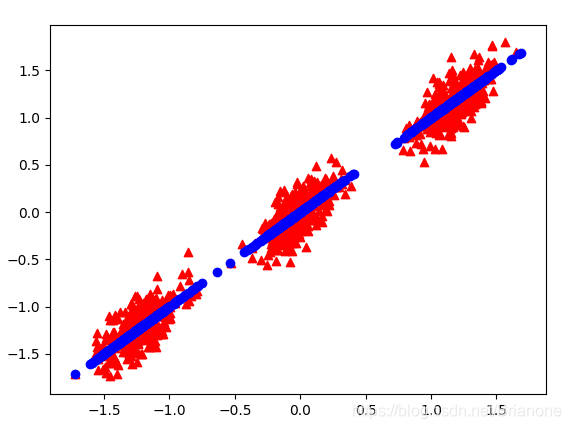
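As a text companion to the handwritten notes, the quantities computed by the `pca` function listed further down can be summarized roughly as follows. The notation ($Z$, $V_k$, $\lambda_i$, $n$ for the number of examples) is assumed here for illustration and may differ from the notes.

$$
\begin{aligned}
Z &= \frac{X - \mu}{\sigma} && \text{(standardize each feature)} \\
\Sigma &= \frac{1}{n-1} Z^{\top} Z && \text{(covariance matrix of the standardized data)} \\
\Sigma v_i &= \lambda_i v_i, \quad \lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_d && \text{(eigendecomposition)} \\
Y &= Z V_k, \quad V_k = [v_1, \dots, v_k] && \text{(reduced data)} \\
\hat{Z} &= Y V_k^{\top} && \text{(reconstruction)} \\
\text{loss} &= 1 - \frac{\sum_{i=1}^{k} \lambda_i}{\sum_{j=1}^{d} \lambda_j} && \text{(share of variance discarded)}
\end{aligned}
$$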

Results

Code and Dataset Download

Principal Component Analysis (PCA) + Test Data

Program

    import numpy as np
    import matplotlib.pyplot as plt

    def pca(npArr, k, show=False):
        '''
        :param npArr: shape=(n_examples, n_features)
        :param k: to keep k components
        :param show: True to show figure about origData and reconData
        :return: LowNpArr, loss
        '''
        # Preprocessing: standardize each feature to zero mean and unit variance
        mean = np.average(npArr, axis=0)
        std = np.std(npArr, axis=0)  # assumes no feature is constant (std != 0)
        StdMeanNpArr = (npArr - mean) / std

        # PCA
        sigma = np.cov(StdMeanNpArr, rowvar=False)  # covariance matrix; columns are features
        # eigh suits the symmetric covariance matrix and always returns real eigenvalues
        eigValue, eigVects = np.linalg.eigh(sigma)
        eigValInd = np.argsort(eigValue)  # indices that sort the eigenvalues in ascending order
        eigValInd = eigValInd[:-(k+1):-1]  # walk backwards from the end to take the k largest
        redEigVects = eigVects[:, eigValInd]  # eigenvectors for the selected indices
        LowNpArr = np.dot(StdMeanNpArr, redEigVects)  # project the data onto the selected eigenvectors
        reconNpArr = np.dot(LowNpArr, redEigVects.T)  # reconstruct the data in the standardized space
        redEigValue = eigValue[eigValInd]
        loss = 1 - np.sum(redEigValue) / np.sum(eigValue)  # share of variance lost by the reconstruction

        if show:
            # Plot the first two features only: standardized data (red) vs. reconstruction (blue)
            fig = plt.figure()
            ax = fig.add_subplot(111)
            ax.scatter(StdMeanNpArr[:, 0], StdMeanNpArr[:, 1], marker='^', c='red')
            ax.scatter(reconNpArr[:, 0], reconNpArr[:, 1], marker='o', c='blue')
            plt.show()

        return LowNpArr, loss
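To make the meaning of `loss` concrete, here is a minimal sketch that repeats the same steps on synthetic data and checks that the relative squared reconstruction error matches the eigenvalue-based loss. The helper name `pca_sketch` and the random data are made up for illustration; they are not part of the original program or its test dataset.

```python
import numpy as np

def pca_sketch(X, k):
    """Hypothetical compact restatement of the steps in pca(), without plotting."""
    # Standardize each feature (assumes no constant feature)
    Z = (X - X.mean(axis=0)) / X.std(axis=0)
    # Eigendecomposition of the symmetric covariance matrix
    eig_val, eig_vec = np.linalg.eigh(np.cov(Z, rowvar=False))
    # Indices of the k largest eigenvalues, largest first
    top = np.argsort(eig_val)[::-1][:k]
    low = Z @ eig_vec[:, top]        # reduced data
    recon = low @ eig_vec[:, top].T  # reconstruction in the standardized space
    loss = 1 - eig_val[top].sum() / eig_val.sum()
    return Z, low, recon, loss

# Synthetic, strongly correlated 2-D data (illustrative only)
rng = np.random.default_rng(0)
t = rng.normal(size=200)
X = np.column_stack([t, 2 * t + 0.1 * rng.normal(size=200)])

Z, low, recon, loss = pca_sketch(X, k=1)

# Squared reconstruction error relative to the total squared norm of Z
rel_err = np.sum((Z - recon) ** 2) / np.sum(Z ** 2)

print(low.shape)                  # (200, 1)
print(np.isclose(rel_err, loss))  # True
```

The two numbers agree because the discarded eigenvalues are exactly the variance along the directions that the projection removes, so a small `loss` means the reconstructed points lie close to the standardized originals.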