Cluster environment

master:192.168.230.10

slave1:192.168.230.11

slave2:192.168.230.12

Runtime environment

spark:2.0.2

scala:2.11.4

Installing Scala

1. On the master node, extract the archive in /usr/local/src/scala:

tar zxvf scala-2.11.4.tgz

2. Set the SCALA_HOME environment variable in ~/.bashrc:

export SCALA_HOME=/usr/local/src/scala/scala-2.11.4

export PATH=$PATH:$HADOOP_HOME/bin:$SCALA_HOME/bin
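
Appending these lines to ~/.bashrc by hand is easy to repeat by accident, which leaves duplicate entries. A minimal sketch of an idempotent variant follows; the helper names append_once and configure_scala_env are illustrative assumptions, not part of the original setup.

```shell
# Hypothetical helper: append a line to a file only if it is not already there.
append_once() {
  touch "$2"
  # -x: whole-line match, -F: fixed string, -q: no output
  grep -qxF -- "$1" "$2" || printf '%s\n' "$1" >> "$2"
}

# Hypothetical helper: add the two Scala-related lines to the given rc file.
# Single quotes keep $PATH and friends unexpanded until the rc file is sourced.
configure_scala_env() {
  append_once 'export SCALA_HOME=/usr/local/src/scala/scala-2.11.4' "$1"
  append_once 'export PATH=$PATH:$HADOOP_HOME/bin:$SCALA_HOME/bin' "$1"
}
```

It could be called as configure_scala_env ~/.bashrc, followed by source ~/.bashrc to apply the change in the current shell.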

3. Copy the scala directory to slave1 and slave2:

[root@master src]# scp -r scala root@slave1:/usr/local/src

[root@master src]# scp -r scala root@slave2:/usr/local/src

Installing Spark

1. On the master node, extract the archive in /usr/local/src/spark:

[root@master spark]# tar zxvf spark-2.0.2-bin-hadoop2.6.tgz

2. Edit the Spark configuration files

In /usr/local/src/spark/spark-2.0.2-bin-hadoop2.6/conf:

[root@master conf]# vi spark-env.sh

export JAVA_HOME=/usr/local/src/java/jdk1.8.0_172

export SCALA_HOME=/usr/local/src/scala/scala-2.11.4

export HADOOP_HOME=/usr/local/src/hadoop/hadoop-2.6.5

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

SPARK_MASTER_IP=master

SPARK_LOCAL_DIRS=/usr/local/src/spark/spark-2.0.2-bin-hadoop2.6

[root@master conf]# vi slaves

slave1

slave2
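
The two files above can also be written non-interactively instead of through vi. The following is a minimal sketch under that assumption; the function name write_spark_conf is hypothetical, and the file contents are copied from the settings listed above.

```shell
# Hypothetical helper: write spark-env.sh and slaves into the given conf directory.
write_spark_conf() {
  conf_dir="$1"
  mkdir -p "$conf_dir"
  # The quoted 'EOF' keeps $HADOOP_HOME literal, so it is expanded only
  # when Spark sources spark-env.sh.
  cat > "$conf_dir/spark-env.sh" <<'EOF'
export JAVA_HOME=/usr/local/src/java/jdk1.8.0_172
export SCALA_HOME=/usr/local/src/scala/scala-2.11.4
export HADOOP_HOME=/usr/local/src/hadoop/hadoop-2.6.5
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
SPARK_MASTER_IP=master
SPARK_LOCAL_DIRS=/usr/local/src/spark/spark-2.0.2-bin-hadoop2.6
EOF
  # One worker hostname per line.
  printf '%s\n' slave1 slave2 > "$conf_dir/slaves"
}
```

For this installation it would be invoked as write_spark_conf /usr/local/src/spark/spark-2.0.2-bin-hadoop2.6/conf.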

3. Distribute the Spark directory to slave1 and slave2:

[root@master src]# scp -r spark root@slave1:/usr/local/src

[root@master src]# scp -r spark root@slave2:/usr/local/src
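
The scala and spark directories are copied to each worker with the same scp pattern, so the copies can be driven by a loop. A minimal sketch, assuming passwordless SSH for root is already set up; the function name distribute and the SRC_ROOT and DRY_RUN variables are hypothetical.

```shell
# Assumed base directory, matching the paths used in this guide.
SRC_ROOT="${SRC_ROOT:-/usr/local/src}"

# Hypothetical helper: copy one directory under SRC_ROOT to every listed host.
# With DRY_RUN=1 the scp commands are printed instead of executed.
distribute() {
  dir="$1"
  shift
  for host in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp -r $SRC_ROOT/$dir root@$host:$SRC_ROOT"
    else
      scp -r "$SRC_ROOT/$dir" "root@$host:$SRC_ROOT"
    fi
  done
}
```

Setting DRY_RUN=1 and calling distribute spark slave1 slave2 prints the two scp commands shown above without running them, which is a cheap way to check the paths first.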

Starting the cluster

In /usr/local/src/spark/spark-2.0.2-bin-hadoop2.6/sbin:

[root@master sbin]# ./start-all.sh

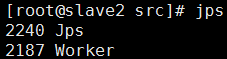

On slave1 and slave2, a Worker process should be running.
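
One way to check for the expected process on a node is to scan the output of the JDK's jps tool. This is a minimal sketch that assumes jps is on the PATH and prints one "<pid> <name>" pair per line; the function name has_process is hypothetical.

```shell
# Hypothetical helper: succeed if the given process name appears in
# jps-style output ("<pid> <name>" per line) read from standard input.
has_process() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit (found ? 0 : 1) }'
}
```

On a worker it could be used as jps | has_process Worker && echo "Worker is running", and on the master with Master in place of Worker.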

Web UI

master:8080

Verification

1. Local mode

[root@master spark-2.0.2-bin-hadoop2.6]# ./bin/spark-submit --class org.apache.spark.examples.SparkPi --master local examples/jars/spark-examples_2.11-2.0.2.jar

2. Standalone cluster mode

[root@master spark-2.0.2-bin-hadoop2.6]# ./bin/spark-submit --class org.apache.spark.examples.SparkPi --master spark://192.168.230.10:7077 examples/jars/spark-examples_2.11-2.0.2.jar

Here, the IP in --master spark://192.168.230.10:7077 is the address of the host configured as SPARK_MASTER_IP in spark-env.sh.

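3. YARN cluster mode

First go to the Hadoop directory and run ./start-all.sh. The master node then shows the ResourceManager, NameNode, SecondaryNameNode, and Master processes, and slave1 and slave2 show DataNode, Worker, and NodeManager. Master and Worker belong to the Spark standalone cluster started earlier; YARN mode does not depend on them, so the Spark cluster does not need to be started for this step.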

[root@master spark-2.0.2-bin-hadoop2.6]# ./bin/spark-submit --class org.apache.spark.examples.SparkPi --master yarn-cluster examples/jars/spark-examples_2.11-2.0.2.jar
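
The submissions in this section, including the YARN client mode that follows, differ only in their --master arguments. A minimal sketch that maps a mode name to those arguments is given here; the function name master_args, the mode names, and the MASTER_HOST variable are illustrative assumptions. For YARN it uses --master yarn together with --deploy-mode, because spark-submit in Spark 2.0 warns that the yarn-cluster and yarn-client master values are deprecated.

```shell
# Assumed default: the master address from the cluster environment above.
MASTER_HOST="${MASTER_HOST:-192.168.230.10}"

# Hypothetical helper: print the spark-submit master arguments for a mode.
master_args() {
  case "$1" in
    local)        echo "--master local" ;;
    standalone)   echo "--master spark://$MASTER_HOST:7077" ;;
    yarn-cluster) echo "--master yarn --deploy-mode cluster" ;;
    yarn-client)  echo "--master yarn --deploy-mode client" ;;
    *)            echo "unknown mode: $1" >&2; return 1 ;;
  esac
}
```

A submission would then look like ./bin/spark-submit --class org.apache.spark.examples.SparkPi $(master_args yarn-cluster) examples/jars/spark-examples_2.11-2.0.2.jar, with the substitution left unquoted so that the arguments are split into separate words.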

4. YARN client mode

[root@master spark-2.0.2-bin-hadoop2.6]# ./bin/spark-submit --class org.apache.spark.examples.SparkPi --master yarn-client examples/jars/spark-examples_2.11-2.0.2.jar

Running this command fails with an exception:

org.apache.spark.SparkException: Could not find CoarseGrainedScheduler.