Environment planning

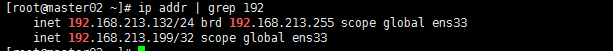

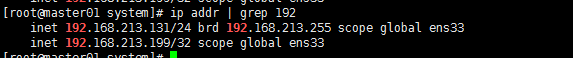

master01:192.168.213.131

master02:192.168.213.132

VIP : 192.168.213.199

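Regenerate the master certificate on the jump host and copy it to the masters

Update the certificate signing request so that it lists both masters and the VIP

cd /server/ssl

cat k8s-csr.json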

    {
      "CN": "kubernetes",
      "hosts": [
        "127.0.0.1",
        "192.168.213.131",
        "192.168.213.132",
        "192.168.213.199",
        "10.254.0.1",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "Hangzhou",
          "L": "Hangzhou",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }

Regenerate the master certificate and private key

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes k8s-csr.json | cfssljson -bare kubernetes
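
Before copying the files, it can help to confirm that the new certificate really carries the added addresses. The sketch below assumes `openssl` is installed on the jump host and that the command above wrote `kubernetes.pem` into the current directory; `has_san` is a hypothetical helper name introduced here for illustration, not part of cfssl.

```shell
# has_san NAME: read `openssl x509 -text` output on stdin and succeed
# if the Subject Alternative Name list contains NAME as an IP or DNS entry.
has_san() {
  tr ',' '\n' \
    | sed 's/^[[:space:]]*//; s/[[:space:]]*$//' \
    | grep -Fxq -e "IP Address:$1" -e "DNS:$1"
}

# Check the two masters and the VIP (only if the certificate is present).
if [ -f kubernetes.pem ]; then
  for san in 192.168.213.131 192.168.213.132 192.168.213.199; do
    if openssl x509 -in kubernetes.pem -noout -text | has_san "$san"; then
      echo "ok      $san"
    else
      echo "MISSING $san"
    fi
  done
fi
```

Matching whole entries rather than substrings avoids a false positive where, for example, 192.168.213.19 would appear to match 192.168.213.199.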

Copy the certificate to master01

scp kubernetes*.pem master01:/opt/kubernetes/ssl/

Master configuration and components

On master01, copy the kube-apiserver, kube-scheduler, and kube-controller-manager binaries to master02

cd /opt/kubernetes/bin/

scp kube* master02:/opt/kubernetes/bin/

On master01, copy the certificates to master02

scp /opt/kubernetes/ssl/* master02:/opt/kubernetes/ssl/

Edit the kube-apiserver systemd unit file on master01

vi /usr/lib/systemd/system/kube-apiserver.service

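Set --advertise-address=0.0.0.0 and --bind-address=0.0.0.0 so that the apiserver listens on all addresses and the same unit file can be reused on master02

On master01, copy the systemd unit files for kube-apiserver, kube-scheduler, and kube-controller-manager to master02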

cd /usr/lib/systemd/system

scp kube-* master02:/usr/lib/systemd/system/

Restart kube-apiserver on master01

systemctl daemon-reload

systemctl restart kube-apiserver

systemctl status kube-apiserver

Enable and start the services on master02

systemctl enable kube-apiserver

systemctl enable kube-controller-manager

systemctl enable kube-scheduler

systemctl start kube-apiserver

systemctl start kube-controller-manager

systemctl start kube-scheduler

systemctl status kube-apiserver

systemctl status kube-controller-manager

systemctl status kube-scheduler
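
Reading three `systemctl status` screens by eye is easy to get wrong, so a compact check can be useful here. The following is a minimal sketch that relies only on `systemctl is-active --quiet`; `inactive_units` is a hypothetical helper name, and the unit names are the ones used in this walkthrough.

```shell
# inactive_units UNIT...: print the name of every unit that systemd
# does not report as active. Prints nothing when all units are up.
inactive_units() {
  for unit in "$@"; do
    systemctl is-active --quiet "$unit" || echo "$unit"
  done
}

# Run on master02 after starting the services (skipped where systemd is absent).
if command -v systemctl >/dev/null 2>&1; then
  inactive_units kube-apiserver kube-controller-manager kube-scheduler
fi
```

An empty result means all three control-plane services are running; any name that is printed is worth inspecting with `systemctl status` or `journalctl -u`.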

Install nginx as a proxy for kube-apiserver

Install nginx on master01 and master02

yum install nginx -y

systemctl start nginx

systemctl enable nginx

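Edit the nginx configuration file on master01 and master02

    stream {
        upstream k8s_proxy {
            # Passive health check: 2 failed attempts within 20s mark the server as down for 20s
            server 192.168.213.131:6443 max_fails=2 fail_timeout=20s;
            server 192.168.213.132:6443 max_fails=2 fail_timeout=20s;
        }
        server {
            listen 8443;
            proxy_connect_timeout 10s;  # timeout for connecting to a backend server
            proxy_timeout 60s;          # idle timeout between two successive reads or writes; the nginx default is 10m
            proxy_pass k8s_proxy;
        }
    }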
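
The `stream` block belongs at the top level of `nginx.conf`, outside any `http { }` block, and on some distributions the stream module is packaged separately, so it is worth checking the result before moving on. The sketch below assumes `ss` from iproute2 is available; `listening_on` is a hypothetical helper that only parses `ss -lnt` output.

```shell
# listening_on PORT: read `ss -lnt` output on stdin and succeed if some
# socket is in LISTEN state on PORT (on any local address).
listening_on() {
  awk -v p=":$1" '
    $1 == "LISTEN" && substr($4, length($4) - length(p) + 1) == p { found = 1 }
    END { exit !found }
  '
}

# Check the proxy port on this master (skipped where ss is absent).
if command -v ss >/dev/null 2>&1; then
  if ss -lnt | listening_on 8443; then
    echo "nginx proxy port 8443 is listening"
  else
    echo "nothing is listening on 8443"
  fi
fi
```

Running `nginx -t` before `systemctl reload nginx` catches syntax errors in the edited file, and the check above then confirms that the listener on 8443 actually came up.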

Install and configure keepalived

Install keepalived on master01 and master02

yum install keepalived -y

systemctl start keepalived

systemctl enable keepalived

The keepalived configuration file on master01 is as follows:

    global_defs {
        router_id MASTER
    }

    vrrp_script check_nginx {
        script "systemctl status nginx"
        interval 3
        weight -20
    }

    vrrp_instance VI_1 {
        state MASTER
        interface ens33
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass redhat
        }
        virtual_ipaddress {
            192.168.213.199
        }
        track_script {
            check_nginx
        }
    }

The keepalived configuration file on master02 is as follows:

    global_defs {
        router_id BACKUP
    }

    vrrp_script check_nginx {
        script "systemctl status nginx"
        interval 3
        weight -20
    }

    vrrp_instance VI_1 {
        state BACKUP
        interface ens33
        virtual_router_id 51
        priority 99
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass redhat
        }
        virtual_ipaddress {
            192.168.213.199
        }
        track_script {
            check_nginx
        }
    }
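
With these two files the failover works by priority arithmetic: while nginx is healthy master01 advertises priority 100 against 99 on master02, and when `check_nginx` fails on master01 the negative weight lowers it to 100 - 20 = 80, so master02 takes over the VIP. A simple way to see which node currently owns the address is sketched below, assuming the `ip` tool from iproute2 and the `ens33` interface named in the configuration; `holds_vip` is a hypothetical helper.

```shell
# holds_vip ADDR: read `ip -o -4 addr show` output on stdin and succeed
# if ADDR is one of the IPv4 addresses listed.
holds_vip() {
  awk -v vip="$1" '
    $3 == "inet" { split($4, a, "/"); if (a[1] == vip) found = 1 }
    END { exit !found }
  '
}

# Run on each master after restarting keepalived (skipped where ip is absent).
if command -v ip >/dev/null 2>&1; then
  if ip -o -4 addr show dev ens33 2>/dev/null | holds_vip 192.168.213.199; then
    echo "this node holds the VIP"
  else
    echo "this node does not hold the VIP"
  fi
fi
```

Exactly one of the two masters should report that it holds the VIP at any given time.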

Update the configuration on the worker nodes

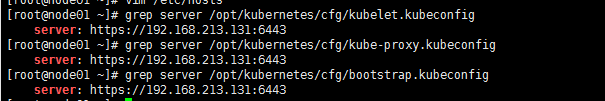

Check the current configuration

grep server /opt/kubernetes/cfg/kubelet.kubeconfig

grep server /opt/kubernetes/cfg/kube-proxy.kubeconfig

grep server /opt/kubernetes/cfg/bootstrap.kubeconfig

Replace the master01 address with the VIP and the port exposed by the nginx proxy

sed -i 's/192\.168\.213\.131:6443/192.168.213.199:8443/g' /opt/kubernetes/cfg/*.kubeconfig

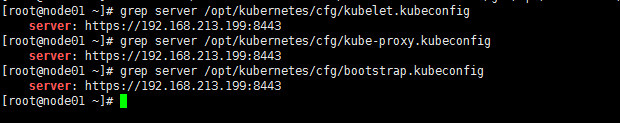

Verify on the node that the change took effect

grep server /opt/kubernetes/cfg/kubelet.kubeconfig

grep server /opt/kubernetes/cfg/kube-proxy.kubeconfig

grep server /opt/kubernetes/cfg/bootstrap.kubeconfig
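
The three `grep` commands can also be folded into one check that reports only the files still pointing somewhere else. This is a sketch under the assumption that every kubeconfig has a single `server:` line, as in this setup; `stale_kubeconfigs` is a hypothetical helper name.

```shell
# stale_kubeconfigs DIR ENDPOINT: print every DIR/*.kubeconfig that has no
# "server: https://ENDPOINT" line. Prints nothing when all files are updated.
stale_kubeconfigs() {
  dir=$1
  want="https://$2"
  for f in "$dir"/*.kubeconfig; do
    [ -e "$f" ] || continue
    awk -v want="$want" '
      $1 == "server:" && $2 == want { ok = 1 }
      END { exit !ok }
    ' "$f" || echo "$f"
  done
}

# Check the node's kubeconfig files against the VIP and proxy port.
stale_kubeconfigs /opt/kubernetes/cfg 192.168.213.199:8443
```

Comparing the value as a plain string, instead of using it as a regular expression, means the dots in the address are matched literally.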

Restart kube-proxy and kubelet on the node

systemctl daemon-reload

systemctl restart kube-proxy

systemctl restart kubelet

systemctl status kube-proxy

systemctl status kubelet

Update the kubectl client configuration file

sed -i 's/192\.168\.213\.131:6443/192.168.213.199:8443/g' /root/.kube/config

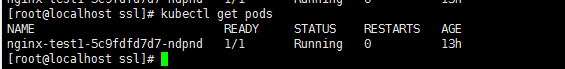

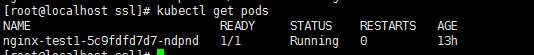

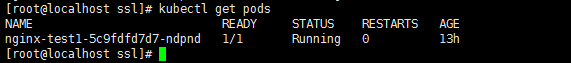

Verification

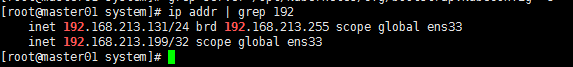

The VIP is currently on master01

The kubectl client connects to the apiserver normally

Stop the kube-apiserver service on master01 and on master02 in turn, one at a time

systemctl stop kube-apiserver

The kubectl client can still connect to the apiserver

Stop the nginx service on master01

systemctl stop nginx

The VIP floats to master02

The kubectl client can still connect to the apiserver

Start the nginx service on master01 again

systemctl start nginx

The VIP floats back to master01

The kubectl client can still connect to the apiserver
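
To put a rough number on "can still connect" during these tests, a probe can be left running in a second terminal while services are stopped and started. The sketch below is one way to do that; `count_failures` and the `PROBE_INTERVAL` variable are hypothetical names introduced here, and `kubectl get nodes` stands in for any command that has to reach the apiserver through the VIP.

```shell
# Seconds to wait between probes (override by setting PROBE_INTERVAL).
PROBE_INTERVAL=${PROBE_INTERVAL:-1}

# count_failures COUNT CMD...: run CMD COUNT times, PROBE_INTERVAL seconds
# apart, and print how many of the runs exited with a non-zero status.
count_failures() {
  count=$1
  shift
  failures=0
  i=0
  while [ "$i" -lt "$count" ]; do
    "$@" >/dev/null 2>&1 || failures=$((failures + 1))
    i=$((i + 1))
    sleep "$PROBE_INTERVAL"
  done
  echo "$failures"
}

# Example: probe the apiserver for about 30 seconds while stopping nginx
# on master01 in another terminal.
#   count_failures 30 kubectl get nodes
```

A small number of failed probes around the moment the VIP moves is plausible, since keepalived only runs `check_nginx` every 3 seconds; a count that keeps growing suggests the failover did not happen.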