Copyright notice: This is an original article by the blogger ([email protected]). Do not reproduce it without the blogger's permission. https://blog.csdn.net/z_feng12489/article/details/90031981

6.2 Activation Functions and Their Gradients

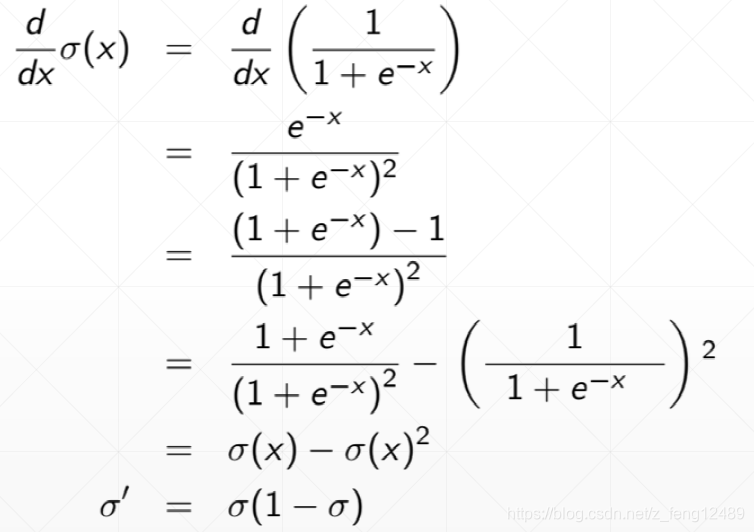

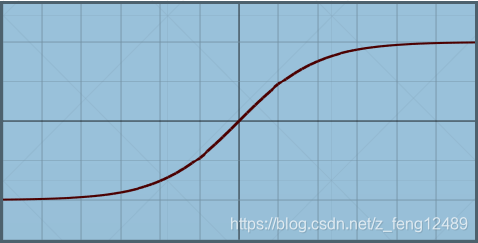

sigmoid

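    import tensorflow as tf

    # 10 evenly spaced float32 points in [-10, 10]
    a = tf.linspace(-10., 10., 10)

    with tf.GradientTape() as tape:
        # `a` is a plain tensor, not a tf.Variable, so it must be watched explicitly
        tape.watch(a)
        y = tf.sigmoid(a)

    # The sources are passed as a list, so the gradients come back as a list
    grads = tape.gradient(y, [a])
    print('x:', a.numpy())
    print('y:', y.numpy())
    print('grad:', grads[0].numpy())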

    x: [-10. -7.7777777 -5.5555553 -3.333333 -1.1111107 1.1111116
     3.333334 5.5555563 7.7777786 10. ]
    y: [4.5388937e-05 4.1878223e-04 3.8510561e-03 3.4445226e-02 2.4766389e-01
     7.5233626e-01 9.6555483e-01 9.9614894e-01 9.9958128e-01 9.9995458e-01]
    grad: [4.5386874e-05 4.1860685e-04 3.8362255e-03 3.3258751e-02 1.8632649e-01
     1.8632641e-01 3.3258699e-02 3.8362255e-03 4.1854731e-04 4.5416677e-05]
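The printed gradients can be cross-checked against the closed-form derivative of the sigmoid, σ'(x) = σ(x)(1 − σ(x)). The following is a minimal sketch in plain Python (no TensorFlow), assuming the same 10 evenly spaced points. The helper names `sigmoid` and `sigmoid_grad` are illustrative and do not come from the original post.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid: 1 / (1 + e^(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x: float) -> float:
    """Closed-form derivative: sigma(x) * (1 - sigma(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

# Same grid as tf.linspace(-10., 10., 10): 10 points, step 20/9
xs = [-10.0 + 20.0 * i / 9 for i in range(10)]

for x in xs:
    print(f"x={x:+.4f}  y={sigmoid(x):.6e}  grad={sigmoid_grad(x):.6e}")
```

The values should agree with the TensorFlow output to roughly float32 precision. The gradient peaks at 0.25 for x = 0 and shrinks toward zero at both ends of the range, which is the saturation behaviour usually associated with vanishing gradients.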

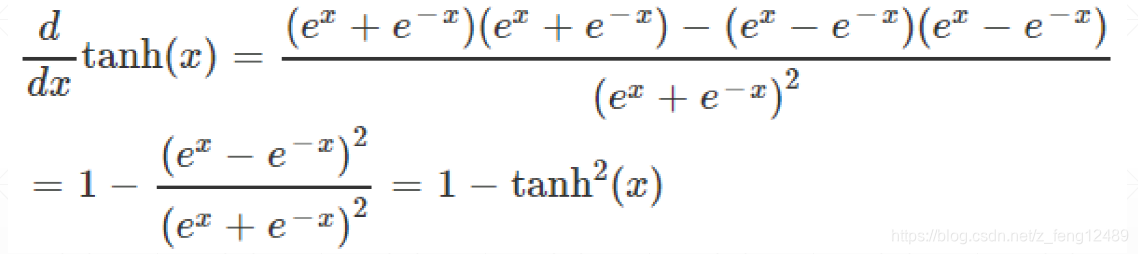

tanh

    # Float endpoints keep the result float32, consistent with the output below
    a = tf.linspace(-10., 10., 10)
    print(tf.tanh(a))
    # tf.Tensor(
    # [-1. -0.99999964 -0.99997014 -0.997458 -0.8044547 0.804455
    # 0.997458 0.99997014 0.99999964 1. ], shape=(10,), dtype=float32)
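The tanh example above prints only forward values. Its gradient has the closed form d/dx tanh(x) = 1 − tanh²(x). The sketch below, in plain Python, compares that formula with a central finite difference. The helper names `tanh_grad` and `numeric_grad` and the step size `eps` are assumptions made for illustration.

```python
import math

def tanh_grad(x: float) -> float:
    """Closed-form derivative of tanh: 1 - tanh(x)^2."""
    t = math.tanh(x)
    return 1.0 - t * t

def numeric_grad(f, x: float, eps: float = 1e-6) -> float:
    """Central finite-difference approximation of f'(x)."""
    return (f(x + eps) - f(x - eps)) / (2.0 * eps)

for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    analytic = tanh_grad(x)
    numeric = numeric_grad(math.tanh, x)
    print(f"x={x:+.1f}  analytic={analytic:.8f}  numeric={numeric:.8f}")
```

The two columns should agree closely. The derivative equals 1 at x = 0 and decays toward zero as |x| grows, so tanh saturates in the same way as the sigmoid, but its output is centred on zero.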

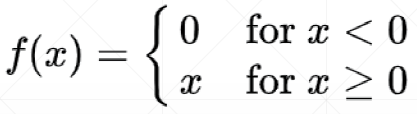

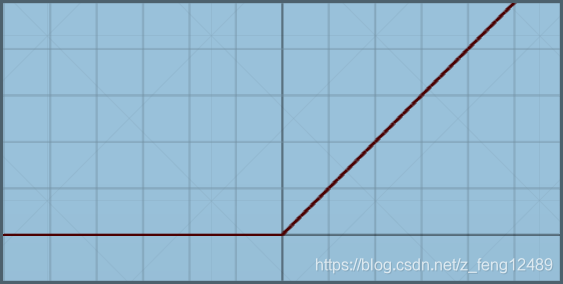

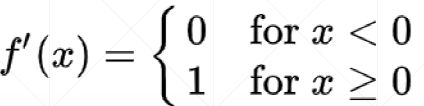

Rectified Linear Unit

    a = tf.linspace(-10., 10., 10)

    # ReLU zeroes every negative input and passes positive inputs through
    print(tf.nn.relu(a))
    # tf.Tensor(
    # [ 0. 0. 0. 0. 0. 1.1111116
    # 3.333334 5.5555563 7.7777786 10. ], shape=(10,), dtype=float32)

    # Leaky ReLU scales negative inputs by alpha (default 0.2) instead of zeroing them
    print(tf.nn.leaky_relu(a))
    # tf.Tensor(
    # [-2. -1.5555556 -1.111111 -0.6666666 -0.22222213 1.1111116
    # 3.333334 5.5555563 7.7777786 10. ], shape=(10,), dtype=float32)
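The printed values correspond to the piecewise definitions of the two functions. Below is a minimal plain-Python sketch of both functions and their gradients, assuming a negative slope of `alpha=0.2`, which is consistent with the output above (−10 maps to −2). The function names are illustrative, and treating the gradient at exactly x = 0 as the negative-side value is a convention chosen here.

```python
def relu(x: float) -> float:
    """ReLU: max(0, x)."""
    return max(0.0, x)

def relu_grad(x: float) -> float:
    """Gradient of ReLU: 1 for x > 0, else 0 (convention at x == 0)."""
    return 1.0 if x > 0 else 0.0

def leaky_relu(x: float, alpha: float = 0.2) -> float:
    """Leaky ReLU: x for x > 0, else alpha * x."""
    return x if x > 0 else alpha * x

def leaky_relu_grad(x: float, alpha: float = 0.2) -> float:
    """Gradient of Leaky ReLU: 1 for x > 0, else alpha."""
    return 1.0 if x > 0 else alpha

# Same grid as tf.linspace(-10., 10., 10)
xs = [-10.0 + 20.0 * i / 9 for i in range(10)]
for x in xs:
    print(f"x={x:+8.4f}  relu={relu(x):8.4f}  d_relu={relu_grad(x):.1f}  "
          f"leaky={leaky_relu(x):8.4f}  d_leaky={leaky_relu_grad(x):.1f}")
```

For positive inputs both gradients are a constant 1, so neither function saturates on that side. For negative inputs ReLU passes back a gradient of 0, while Leaky ReLU keeps a small constant slope.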