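1. I built this cluster on three CentOS 7 machines, so start by preparing three CentOS 7 virtual machines. (All links in this post point to my own resources.)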

CentOS 7 download (you can also get it from the official site): https://pan.baidu.com/s/1Y_EVLDuLwpKv2hU3HSiPDA extraction code: 05mi

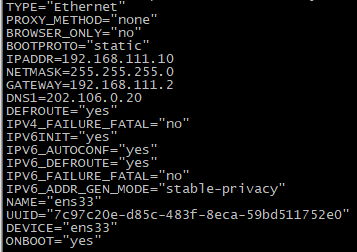

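2. After installation, change the IP of each machine to a static address

vi /etc/sysconfig/network-scripts/ifcfg-ens33 (the file may not be named ifcfg-ens33 on your machine; check your own interface name). The notes that follow reflect my configuration; use them as a reference.

BOOTPROTO must be set to static; set NETMASK, GATEWAY, and BROADCAST according to your own virtual network editor.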
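For reference, a static-IP interface file for the master node might look like the sketch below. The IP address comes from this post; the NETMASK, GATEWAY, and DNS1 values are assumptions for a typical NAT virtual network and must be replaced with the values from your own virtual network editor. The snippet writes to a scratch file (ifcfg-ens33.example, a hypothetical name) instead of the live config, so it can be reviewed first.

```shell
#!/bin/sh
# Sketch: generate a static-IP config for review before copying it into
# /etc/sysconfig/network-scripts/. Lines marked "assumed" are not from the post.
OUT="${OUT:-./ifcfg-ens33.example}"   # hypothetical scratch file

cat > "$OUT" <<'EOF'
TYPE=Ethernet
BOOTPROTO=static
NAME=ens33
DEVICE=ens33
ONBOOT=yes
IPADDR=192.168.111.10
# assumed: /24 network, gateway and DNS on .2 (check your virtual network editor)
NETMASK=255.255.255.0
GATEWAY=192.168.111.2
DNS1=192.168.111.2
EOF

echo "wrote $OUT"
```

Once the real file is in place, systemctl restart network applies the change on CentOS 7. Repeat with 192.168.111.11 and 192.168.111.12 on the two slaves.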

All three virtual machines need this configuration. My three machines are:

master:192.168.111.10 master

slave1:192.168.111.11 slave1

slave2:192.168.111.12 slave2

Once the addresses are set, ping the machines to check the network.
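The check can be scripted roughly as follows. This is a sketch that assumes the hostnames are resolved through /etc/hosts, which the post does not show; hosts_entries and check_nodes are hypothetical helper names. The IP and hostname pairs are the ones listed above.

```shell
#!/bin/sh
# Node list taken from the post: "IP hostname" pairs, one per line.
NODES="192.168.111.10 master
192.168.111.11 slave1
192.168.111.12 slave2"

# Print the lines to append to /etc/hosts on every machine (assumed setup).
hosts_entries() {
    printf '%s\n' "$NODES"
}

# Ping each node once and report which ones answer.
# -c 1 sends a single packet, -W 2 waits at most two seconds (Linux ping).
check_nodes() {
    printf '%s\n' "$NODES" | while read -r ip name; do
        if ping -c 1 -W 2 "$ip" >/dev/null 2>&1; then
            echo "$name ($ip): reachable"
        else
            echo "$name ($ip): NOT reachable"
        fi
    done
}

hosts_entries
```

Run check_nodes on each of the three machines; every node should be reported as reachable before you continue.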

3. Change the hostname

https://www.cnblogs.com/zhangjiahao/p/10990093.html

4. Install the Java environment (JDK)

https://www.cnblogs.com/zhangjiahao/p/8551362.html

5. Set up mutual trust between the virtual machines (passwordless SSH login)

https://www.cnblogs.com/zhangjiahao/p/10989245.html

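6. Install and configure Hadoop

(1) Download

Link: https://pan.baidu.com/s/1m0IXN1up0nk2rxDUMgEC-g

Extraction code: mhxa

(2) Upload and extract

rz, then select your file to upload it

tar -zxvf followed by the package you just uploaded, to extract it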
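The extraction step could be wrapped like this. It is a minimal sketch: unpack_hadoop is a hypothetical helper, and the archive name in the example comment is an assumption, since the post does not give the file name.

```shell
#!/bin/sh
# unpack_hadoop TARBALL DEST: extract a .tar.gz archive into DEST.
unpack_hadoop() {
    tarball="$1"
    dest="$2"
    [ -f "$tarball" ] || { echo "missing archive: $tarball" >&2; return 1; }
    mkdir -p "$dest" || return 1
    # -z gunzip, -x extract, -f archive file, -C target directory
    tar -zxf "$tarball" -C "$dest"
}

# Example (archive name is an assumption):
# unpack_hadoop hadoop-2.6.1.tar.gz /usr/local/src
```

Extracting into /usr/local/src matches the /usr/local/src/hadoop-2.6.1 paths used in the configuration files later in this post.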

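(3) Configure

Open the etc/hadoop directory under the Hadoop installation, find hadoop-env.sh and yarn-env.sh, and add export JAVA_HOME=/usr/local/src/java1.8.0_172 to each (use your own JDK install path).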
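One way to make that edit repeatable is sketched below. set_java_home is a hypothetical helper; it appends the export line only when an identical line is not already there, so running it twice is harmless.

```shell
#!/bin/sh
# set_java_home FILE JAVA_HOME: ensure FILE contains "export JAVA_HOME=...".
# Appends the line only if an identical one is not already present.
set_java_home() {
    env_file="$1"
    java_home="$2"
    line="export JAVA_HOME=$java_home"
    if grep -qxF "$line" "$env_file" 2>/dev/null; then
        return 0
    fi
    printf '%s\n' "$line" >> "$env_file"
}

# Example, using the paths from this post:
# cd /usr/local/src/hadoop-2.6.1/etc/hadoop
# set_java_home hadoop-env.sh /usr/local/src/java1.8.0_172
# set_java_home yarn-env.sh   /usr/local/src/java1.8.0_172
```

Because the line is appended at the end of the file, it overrides any earlier JAVA_HOME assignment in the same script.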

Edit core-site.xml:

<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://master:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/usr/local/src/hadoop-2.6.1/tmp</value>
  </property>
</configuration>

Edit hdfs-site.xml:

<configuration>
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>master:9091</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/usr/local/src/hadoop-2.6.1/dfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/usr/local/src/hadoop-2.6.1/dfs/data</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
</configuration>

Edit mapred-site.xml (in Hadoop 2.x, copy it from mapred-site.xml.template if the file does not exist yet):

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>

Edit yarn-site.xml:

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>master:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address</name>
    <value>master:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address</name>
    <value>master:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>master:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>master:8088</value>
  </property>
</configuration>
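The post does not show it, but in Hadoop 2.x the start scripts read the worker hostnames from etc/hadoop/slaves, one per line, and the shipped file contains only localhost. A minimal sketch for writing that file follows; write_slaves is a hypothetical helper, and listing only slave1 and slave2 as workers is an assumption about the intended layout.

```shell
#!/bin/sh
# write_slaves CONF_DIR HOST...: write one worker hostname per line
# into CONF_DIR/slaves, replacing the previous contents.
write_slaves() {
    conf_dir="$1"
    shift
    printf '%s\n' "$@" > "$conf_dir/slaves"
}

# Example with the hostnames from this post (worker list is an assumption):
# write_slaves /usr/local/src/hadoop-2.6.1/etc/hadoop slave1 slave2
```

Add master to the list as well if the master node should also run a DataNode and NodeManager.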

7. Distribute the configured Hadoop directory to every node

scp -r /usr/local/src/hadoop-2.6.1 root@slave1:/usr/local/src

scp -r /usr/local/src/hadoop-2.6.1 root@slave2:/usr/local/src

8. Distribute the environment variable configuration to every node

scp /etc/profile root@slave1:/etc/profile

scp /etc/profile root@slave2:/etc/profile
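Steps 7 and 8 can be folded into one loop, sketched here under the assumption that Hadoop was unpacked to /usr/local/src/hadoop-2.6.1 (the path used in the configuration files) and that passwordless SSH for root is already working. The run and distribute helpers and the DRY_RUN switch are hypothetical; with the default DRY_RUN=1 the script only prints the commands it would execute.

```shell
#!/bin/sh
# Copy the Hadoop directory and /etc/profile to each worker.
# With DRY_RUN=1 (the default here) the commands are only printed.
HADOOP_DIR="${HADOOP_DIR:-/usr/local/src/hadoop-2.6.1}"
WORKERS="${WORKERS:-slave1 slave2}"
DRY_RUN="${DRY_RUN:-1}"

# Either echo the command or execute it, depending on DRY_RUN.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

distribute() {
    for host in $WORKERS; do
        run scp -r "$HADOOP_DIR" "root@$host:/usr/local/src" || return 1
        run scp /etc/profile "root@$host:/etc/profile" || return 1
    done
}

distribute
```

Review the printed commands, then rerun with DRY_RUN=0 to perform the copy.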

9. Apply the configuration by running source /etc/profile on every node
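The post does not show what was added to /etc/profile. A typical set of lines, given here purely as an assumption, exports JAVA_HOME and HADOOP_HOME and puts the Hadoop bin and sbin directories on PATH so that the hadoop command and the start scripts are found. profile_lines is a hypothetical helper that prints those lines for review.

```shell
#!/bin/sh
# profile_lines JAVA_HOME HADOOP_HOME: print export lines for /etc/profile.
# The heredoc expands $1 and $2 now; \$PATH etc. stay literal in the output.
profile_lines() {
    cat <<EOF
export JAVA_HOME=$1
export HADOOP_HOME=$2
export PATH=\$PATH:\$JAVA_HOME/bin:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin
EOF
}

profile_lines /usr/local/src/java1.8.0_172 /usr/local/src/hadoop-2.6.1
```

After checking the output, append it to /etc/profile as root and run source /etc/profile in each open shell.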

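10. Initialize the cluster on the master node

cd /usr/local/src/hadoop-2.6.1/sbin

hadoop namenode -format

Hadoop 2.x prints a deprecation warning for this form; hdfs namenode -format is the equivalent current command.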

11. Start the cluster and check that it is running normally

./start-dfs.sh

./start-yarn.sh
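A quick way to check that the daemons came up is to compare the output of jps (shipped with the JDK) against the processes each node should run. The sketch below uses a hypothetical helper, missing_daemons, and the expected daemon lists in the comments assume master runs only the management daemons while the slaves run the workers.

```shell
#!/bin/sh
# missing_daemons NAME...: read `jps` output on stdin and print every
# expected daemon name that does not appear in it.
missing_daemons() {
    jps_out=$(cat)
    for name in "$@"; do
        # jps prints "<pid> <ClassName>"; compare the second column exactly
        # so that NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_out" |
            awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            echo "$name"
        fi
    done
}

# On master:  jps | missing_daemons NameNode SecondaryNameNode ResourceManager
# On a slave: jps | missing_daemons DataNode NodeManager
```

Empty output means every expected daemon is running; any name printed points to a log file under the Hadoop logs directory worth reading.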

12. Verify

hadoop fs -ls /

Move to a local directory to create a test file (I used cd /etc/; any writable directory works)

touch 1.txt

echo 123456 > 1.txt

cat 1.txt

hadoop fs -put 1.txt /