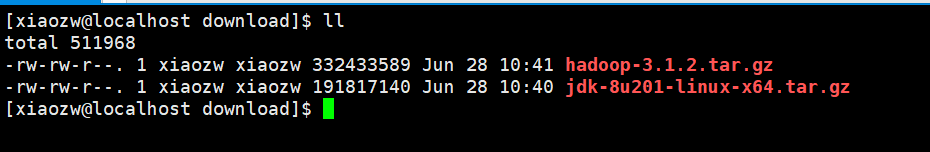

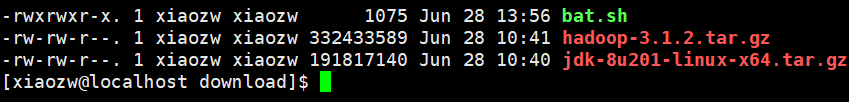

1. Download the Hadoop and JDK installation packages to a directory of your choice, then install the Java environment.

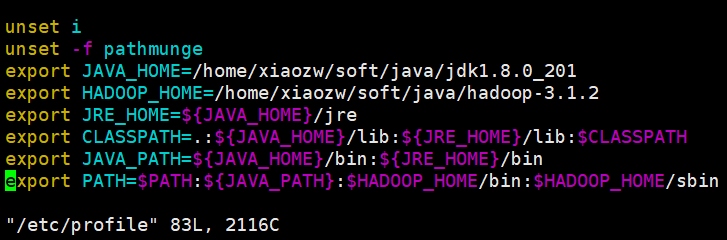

2. Extract Hadoop to the target directory, then configure the environment variables by editing /etc/profile:

vim /etc/profile

export JAVA_HOME=/home/xiaozw/soft/java/jdk1.8.0_201

export HADOOP_HOME=/home/xiaozw/soft/java/hadoop-3.1.2

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib:$CLASSPATH

export JAVA_PATH=${JAVA_HOME}/bin:${JRE_HOME}/bin

export PATH=$PATH:${JAVA_PATH}:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
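
After saving /etc/profile, the variables take effect in a new login shell or after running `source /etc/profile`. The sketch below is one way to confirm that they point at real directories. The function name `check_env_dirs` is made up for this example and is not part of Hadoop or the JDK.

```shell
# Hypothetical helper: report whether each named variable is set and
# points to an existing directory. Prints one line per variable.
check_env_dirs() {
    status=0
    for name in "$@"; do
        eval "value=\${$name:-}"    # read the variable whose name is stored in $name
        if [ -z "$value" ]; then
            echo "$name: not set"
            status=1
        elif [ ! -d "$value" ]; then
            echo "$name: $value (directory not found)"
            status=1
        else
            echo "$name: $value"
        fi
    done
    return "$status"
}

# Example call; a non-zero status only means something is missing.
check_env_dirs JAVA_HOME HADOOP_HOME || echo "environment incomplete"
```

If both lines show the paths exported above, `java -version` and `hadoop version` should also be found on the PATH.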

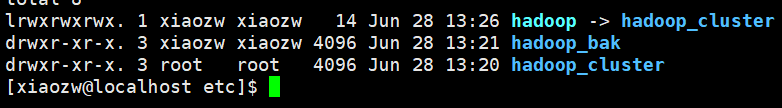

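3. Copy the configuration directory to a new folder so that the original can be kept as a backup. Run these commands from the etc directory of the Hadoop installation (soft/java/hadoop-3.1.2/etc).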

cp -r hadoop hadoop_cluster

Rename the original configuration directory:

mv hadoop hadoop_bak

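Create a symbolic link named hadoop that points to hadoop_cluster (the link target comes first, the link name second):

ln -s hadoop_cluster hadoop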
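
The copy, rename, and link sequence can be rehearsed safely in a scratch directory before touching the real etc folder. This is only a sketch: the scratch directory and the placeholder file are stand-ins, not part of the actual installation.

```shell
# Work in a scratch directory so the real etc/ directory is untouched.
scratch=$(mktemp -d)
mkdir "$scratch/hadoop"
echo "placeholder" > "$scratch/hadoop/core-site.xml"

(
    cd "$scratch" || exit 1
    cp -r hadoop hadoop_cluster     # copy that will hold the cluster settings
    mv hadoop hadoop_bak            # keep the original files as a backup
    ln -s hadoop_cluster hadoop     # ln -s TARGET LINK_NAME
    readlink hadoop                 # prints the link target: hadoop_cluster
    ls hadoop/                      # lists the files inside hadoop_cluster
)
```

Because Hadoop reads etc/hadoop by default, pointing that name at hadoop_cluster makes the cluster settings the active ones, and switching back to the backup only requires re-creating the link.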

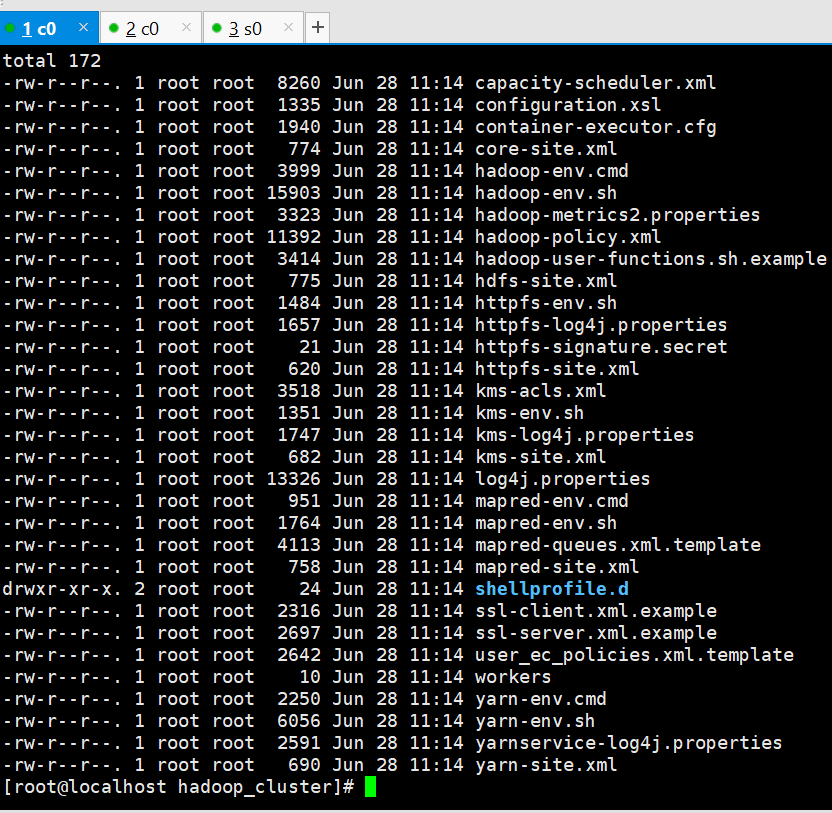

Edit the configuration files under soft/java/hadoop-3.1.2/etc/hadoop_cluster/.

Modify each of the following files.

core-site.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://c0:9000/</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/xiaozw/soft/tmp/hadoop-${user.name}</value>

</property>
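
The property blocks shown for each file belong inside the file's single `<configuration>` root element. As a rough sketch, the complete core-site.xml could be written as follows. It writes into a temporary directory that stands in for etc/hadoop_cluster, so nothing real is overwritten.

```shell
conf_dir=$(mktemp -d)    # stand-in for etc/hadoop_cluster

# The quoted 'EOF' stops the shell from expanding ${user.name};
# Hadoop resolves that placeholder itself when it loads the file.
cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://c0:9000/</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/xiaozw/soft/tmp/hadoop-${user.name}</value>
  </property>
</configuration>
EOF

grep -c "<property>" "$conf_dir/core-site.xml"   # counts the property blocks: 2
```

The other three files follow the same layout, each with its own properties inside `<configuration>`.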

hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>c3:9868</value>

</property>

mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>c3</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>
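
Hadoop silently ignores property names it does not recognize, so a misspelled name is easy to miss. The key it reads for the ResourceManager host is `yarn.resourcemanager.hostname`. The sketch below looks up a property in a site file with awk. `get_prop` is a hypothetical helper, and the sample file is a shortened stand-in for yarn-site.xml.

```shell
get_prop() {
    # $1 = path to a *-site.xml file, $2 = property name to look up
    awk -v key="$2" '
        index($0, "<name>" key "</name>") { found = 1 }
        found && /<value>/ {
            sub(/.*<value>/, "")
            sub(/<\/value>.*/, "")
            print
            exit
        }
    ' "$1"
}

sample=$(mktemp)
cat > "$sample" <<'EOF'
<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>c3</value>
  </property>
  <property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>604800</value>
  </property>
</configuration>
EOF

get_prop "$sample" yarn.resourcemanager.hostname          # prints c3
get_prop "$sample" yarn.log-aggregation.retain-seconds    # prints 604800 (7 days)
```

A lookup that prints nothing means the name is not present in the file as typed. On a machine with Hadoop installed, `hdfs getconf -confKey fs.defaultFS` shows the value Hadoop actually resolves for a key.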

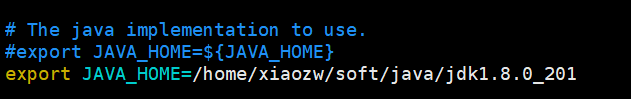

Edit hadoop_cluster/hadoop-env.sh and set JAVA_HOME explicitly:

export JAVA_HOME=/home/xiaozw/soft/java/jdk1.8.0_201

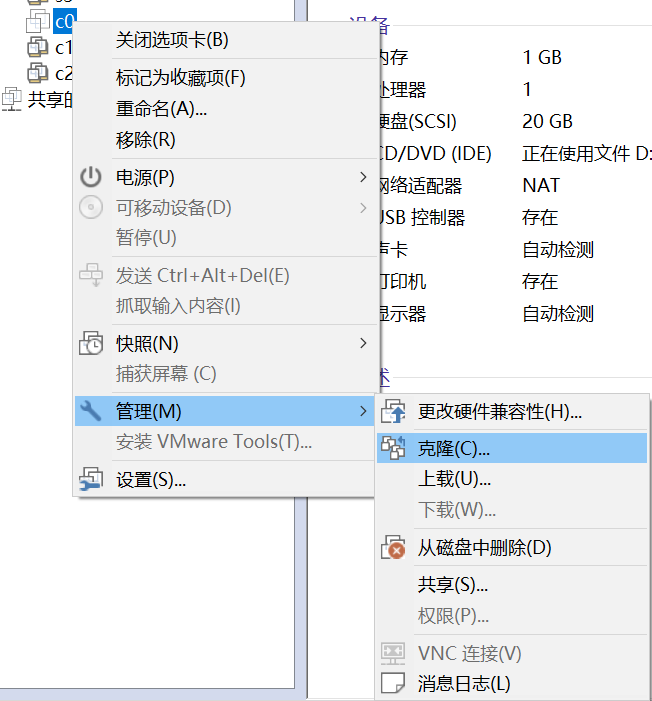

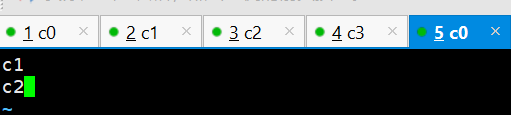

4. Clone the virtual machine several times and change the hostname of each clone.

Set the hostname on each machine individually (c0 on the first machine, then c1, c2, and c3 on the others):

vim /etc/hostname

c0

Use the same hosts configuration on every machine:

vim /etc/hosts

192.168.132.143 c0

192.168.132.144 c1

192.168.132.145 c2

192.168.132.146 c3
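
A quick way to confirm that every hostname maps to the intended address is to look each one up in the hosts file. The sketch below does this against a temporary copy of the four entries. `host_ip` is a hypothetical helper name.

```shell
host_ip() {
    # $1 = hosts-format file, $2 = hostname; prints the matching IP address
    awk -v name="$2" '
        /^[[:space:]]*#/ { next }                      # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    ' "$1"
}

hosts_file=$(mktemp)      # stand-in for /etc/hosts
cat > "$hosts_file" <<'EOF'
192.168.132.143 c0
192.168.132.144 c1
192.168.132.145 c2
192.168.132.146 c3
EOF

for h in c0 c1 c2 c3; do
    echo "$h -> $(host_ip "$hosts_file" "$h")"
done
```

On the real machines, `getent hosts c0` performs the same lookup through the system resolver, and `ping -c 1 c1` confirms that the address is reachable.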

All four servers need passwordless SSH login to one another.
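
One common way to set this up is to generate a key pair and copy the public key to every host with `ssh-copy-id`. The sketch below assumes the user xiaozw exists on all four machines. The `run` and `setup_ssh_keys` helpers and the `DRY_RUN` switch are made up for this example. By default it only prints the commands it would run.

```shell
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_ssh_keys() {
    key="$HOME/.ssh/id_rsa"
    # Create a key pair without a passphrase unless one already exists.
    [ -f "$key" ] || run ssh-keygen -t rsa -N "" -f "$key"
    # Append the public key to authorized_keys on every host passed as an argument.
    for host in "$@"; do
        run ssh-copy-id "xiaozw@$host"
    done
}

setup_ssh_keys c0 c1 c2 c3
```

Running this with `DRY_RUN=0` on each machine in turn gives every server key-based access to the others. `ssh-copy-id` asks for the account password once per host.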

Configure two of the servers as DataNodes. Go to the configuration directory and edit the workers file:

cd soft/java/hadoop-3.1.2/etc/hadoop_cluster/

sudo vim workers
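
The workers file lists one hostname per line, and each listed host runs a DataNode and a NodeManager. The sketch below writes such a file into a temporary directory. The choice of c1 and c2 is an assumption made for illustration, so substitute whichever two machines should store data.

```shell
conf_dir=$(mktemp -d)     # stand-in for etc/hadoop_cluster

# Assumption: c1 and c2 are the two DataNodes; substitute the real hostnames.
printf '%s\n' c1 c2 > "$conf_dir/workers"

cat "$conf_dir/workers"
```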

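Create a script to make it easier to copy files to the other servers. Uncomment the scp lines for whichever files have changed.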

bat.sh

for((i=1;i<=3;i++))

{

#scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/hadoop-env.sh xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/hadoop-env.sh

#scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/hdfs-site.xml xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/hdfs-site.xml

#scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/core-site.xml xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/core-site.xml

#scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/mapred-site.xml xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/mapred-site.xml

#scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/yarn-site.xml xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/yarn-site.xml

scp /home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/workers xiaozw@c$i:/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster/workers

ssh xiaozw@c$i rm -rf /home/xiaozw/soft/tmp/

#scp /etc/hosts xiaozw@c$i:/etc/hosts

}

Make the script executable:

chmod a+x bat.sh
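
Once it is executable, the script is run with `./bat.sh` from the directory that contains it. As a variation, the sketch below takes the file names as arguments instead of relying on commented-out lines. `sync_conf` and `DRY_RUN` are hypothetical names, and by default the commands are only printed so they can be reviewed first.

```shell
CONF=/home/xiaozw/soft/java/hadoop-3.1.2/etc/hadoop_cluster
DRY_RUN="${DRY_RUN:-1}"     # 1 = print the scp commands only, 0 = run them

sync_conf() {
    # Arguments: file names inside $CONF to copy to c1, c2 and c3.
    for host in c1 c2 c3; do
        for file in "$@"; do
            if [ "$DRY_RUN" = "1" ]; then
                echo "scp $CONF/$file xiaozw@$host:$CONF/$file"
            else
                scp "$CONF/$file" "xiaozw@$host:$CONF/$file"
            fi
        done
    done
}

sync_conf workers core-site.xml
```

With two files and three hosts this prints six scp commands. Setting `DRY_RUN=0` performs the copies, which relies on passwordless SSH being in place.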

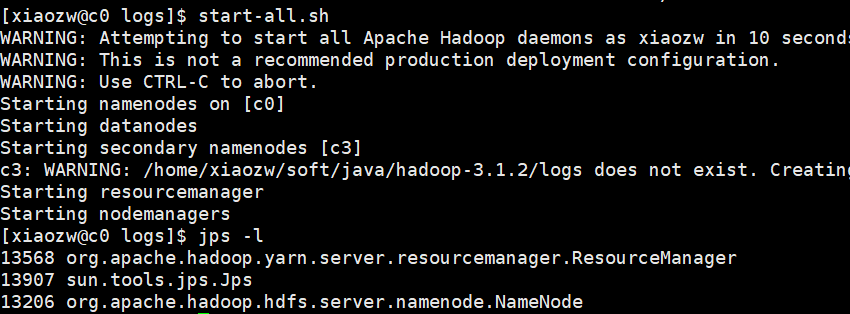

5. Start Hadoop

start-all.sh
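
After start-all.sh returns, the `jps` tool from the JDK lists the Java processes on a node, which shows whether the expected daemons came up. The sketch below compares a jps listing with a list of expected daemon names. `missing_daemons` is a hypothetical helper, and the sample listing with its PIDs is made up for illustration.

```shell
missing_daemons() {
    # stdin: output of `jps`; arguments: daemon names that should be running.
    listing=$(cat)
    for daemon in "$@"; do
        printf '%s\n' "$listing" \
            | awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }' \
            || echo "$daemon"
    done
}

# Sample jps output standing in for a real node (the PIDs are made up).
sample="2101 NameNode
2544 Jps"
printf '%s\n' "$sample" | missing_daemons NameNode ResourceManager   # prints ResourceManager
```

On a real node the check would be `jps | missing_daemons NameNode` on the NameNode host, or `jps | missing_daemons DataNode NodeManager` on a worker. If NameNode is missing on a brand-new cluster, one common cause is that HDFS has never been formatted with `hdfs namenode -format`.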

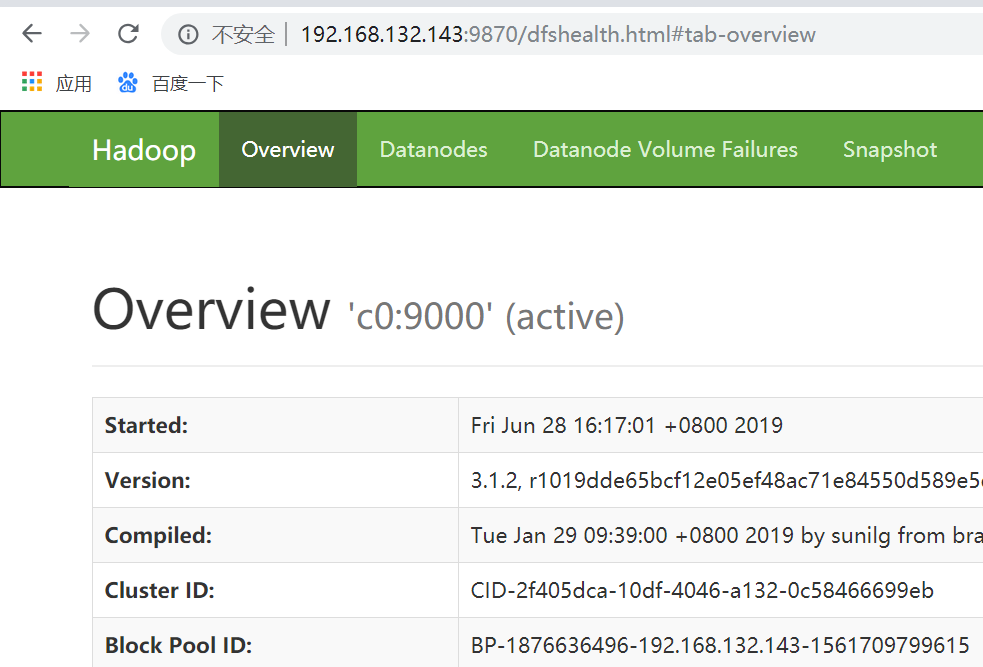

NameNode web UI: http://192.168.132.143:9870/dfshealth.html#tab-overview

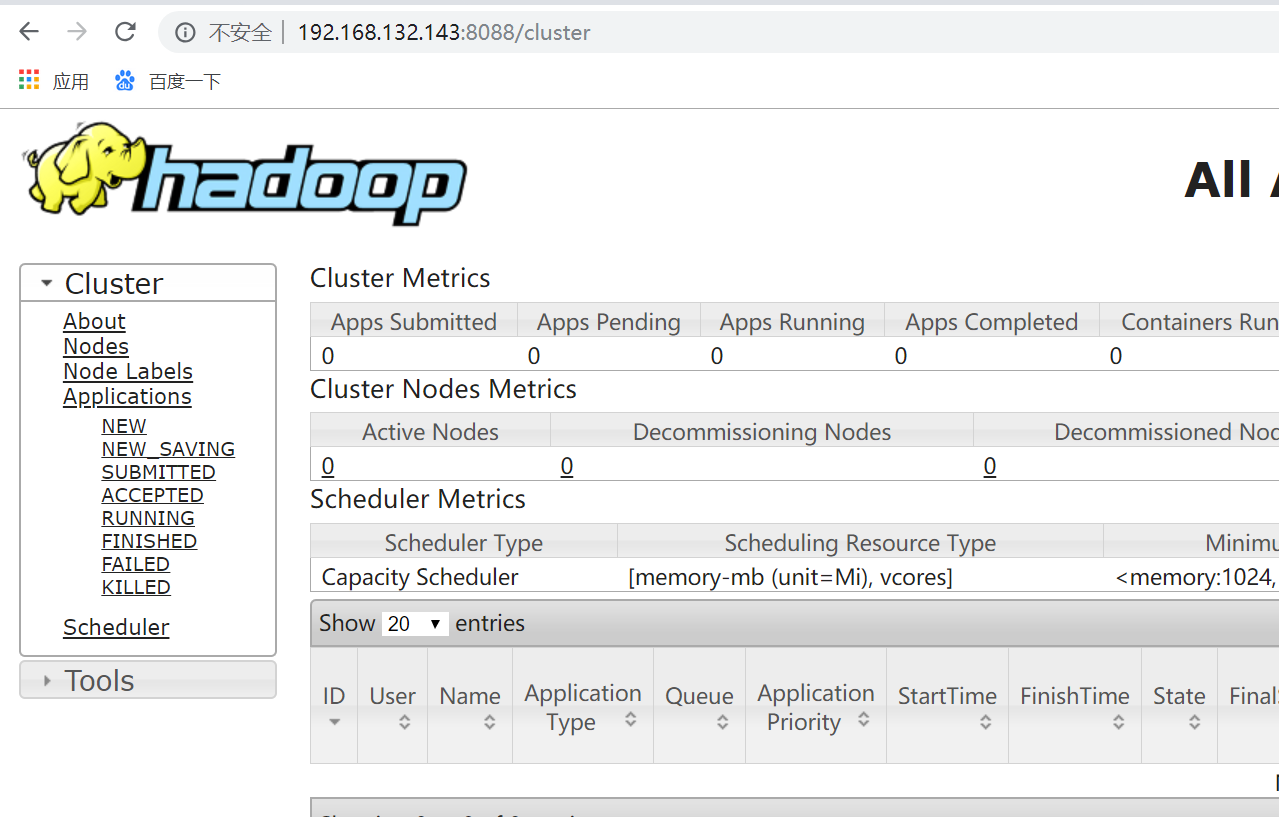

YARN ResourceManager web UI: http://192.168.132.143:8088/cluster

Configuration file downloads:

https://files.cnblogs.com/files/xiaozw/yarn-site.xml

https://files.cnblogs.com/files/xiaozw/mapred-site.xml

https://files.cnblogs.com/files/xiaozw/hdfs-site.xml

https://files.cnblogs.com/files/xiaozw/core-site.xml

https://files.cnblogs.com/files/xiaozw/bat.sh