。 - 实践一个视觉里程计前端

- 理解slam软件框架

- 调试程序

怎么管理地图点,如何处理误匹配,如何选择关键帧。从简单到复杂实现一个框架。

- /bin : 二进制

- /include/myshlam:当把引用自己的头文件时需要写 include “myslam/xxx.h”,和别的库不容易混淆

- /src:.cpp文件

- /test: 测试用文件

- /lib: 存放编译好的库文件,libmyslam.so

- /config: 配置文件

- /cmak_modules:第三方库cmake文件

基本数据结构

设计好数据单元,程序的处理流程。

- 帧 :把我们认为重要的帧保存下来,认为相机的轨迹可以用这些关键帧来描述。

- 路标: 就是相机中的特征点,把所有land_mark放在地图中,帧的位姿和路标位置估计相当于局部的slam问题。

- 配置文件:每次运行的参数在这里读取就行

%YAML:1.0

# data

# the tum dataset directory, change it to yours!

dataset_dir: /home/ciang/dataset/rgbd_dataset_freiburg1_xyz

# camera intrinsics

# fr1

camera.fx: 517.3

camera.fy: 516.5

camera.cx: 325.1

camera.cy: 249.7

camera.depth_scale: 5000

- 坐标变换: 坐标系之间的变换,定义一个类,把所有的变换操作都放在这里:世界->相机;相机->归一化的相机坐标(去深度后);归一化后相机坐标->像素坐标。

具体实现:

#include "myslam/camera.h"

namespace myslam

{

Camera::Camera()

{

}

//传入:世界系的坐标点,是一个Vector3d 向量代表空间点(xyz)

// SE3 变换矩阵,注意T_c_w 的写法,从世界转换到相机,是吧c写在前面,和公式推到时相同

Vector3d Camera::world2camera ( const Vector3d& p_w, const SE3& T_c_w )

{

return T_c_w*p_w;

}

//反过来之后是SE3的inverse逆矩阵

Vector3d Camera::camera2world ( const Vector3d& p_c, const SE3& T_c_w )

{

return T_c_w.inverse() *p_c;

}

//相机坐标到像素点坐标,fx_ fy_cx_cy_是相机内参,根据公式得p_c ( 0,0 )

Vector2d Camera::camera2pixel ( const Vector3d& p_c )

{

return Vector2d (

fx_ * p_c ( 0,0 ) / p_c ( 2,0 ) + cx_,

fy_ * p_c ( 1,0 ) / p_c ( 2,0 ) + cy_

);

}

//回顾下公式吧

Vector3d Camera::pixel2camera ( const Vector2d& p_p, double depth )

{

return Vector3d (

( p_p ( 0,0 )-cx_ ) *depth/fx_,

( p_p ( 1,0 )-cy_ ) *depth/fy_,

depth

);

}

//连续变换世界到相机再到像素

Vector2d Camera::world2pixel ( const Vector3d& p_w, const SE3& T_c_w )

{

return camera2pixel ( world2camera ( p_w, T_c_w ) );

}

Vector3d Camera::pixel2world ( const Vector2d& p_p, const SE3& T_c_w, double depth )

{

return camera2world ( pixel2camera ( p_p, depth ), T_c_w );

}

}

为了关注算法的正确实现,c++使用的比较简单,用C的方式写c++

开始写

Config ,Frame,Camera,MapPoint,Map

类的关系

Camera

内参外参,相机坐标系,像素坐标系,世界坐标系之间tf树。外参值得就是输入,就是我们的数据。

//宏定义。。。。保证纸杯定义一次

#ifndef CAMERA_H

#define CAMERA_H

#include "myslam/common_include.h"

//命名空间

namespace myslam

{

// Pinhole RGBD camera model

class Camera

{

public:

typedef std::shared_ptr<Camera> Ptr;

float fx_, fy_, cx_, cy_, depth_scale_; // Camera intrinsics

Camera();

Camera ( float fx, float fy, float cx, float cy, float depth_scale=0 ) :

fx_ ( fx ), fy_ ( fy ), cx_ ( cx ), cy_ ( cy ), depth_scale_ ( depth_scale )

{}

// coordinate transform: world, camera, pixel

Vector3d world2camera( const Vector3d& p_w, const SE3& T_c_w );

Vector3d camera2world( const Vector3d& p_c, const SE3& T_c_w );

Vector2d camera2pixel( const Vector3d& p_c );

Vector3d pixel2camera( const Vector2d& p_p, double depth=1 );

Vector3d pixel2world ( const Vector2d& p_p, const SE3& T_c_w, double depth=1 );

Vector2d world2pixel ( const Vector3d& p_w, const SE3& T_c_w );

};

}

#endif // CAMERA_H

Camera实现

#include "myslam/camera.h"

namespace myslam

{

Camera::Camera()

{

}

Vector3d Camera::world2camera ( const Vector3d& p_w, const SE3& T_c_w )

{

return T_c_w*p_w;

}

Vector3d Camera::camera2world ( const Vector3d& p_c, const SE3& T_c_w )

{

return T_c_w.inverse() *p_c;

}

Vector2d Camera::camera2pixel ( const Vector3d& p_c )

{

return Vector2d (

fx_ * p_c ( 0,0 ) / p_c ( 2,0 ) + cx_,

fy_ * p_c ( 1,0 ) / p_c ( 2,0 ) + cy_

);

}

Vector3d Camera::pixel2camera ( const Vector2d& p_p, double depth )

{

return Vector3d (

( p_p ( 0,0 )-cx_ ) *depth/fx_,

( p_p ( 1,0 )-cy_ ) *depth/fy_,

depth

);

}

Vector2d Camera::world2pixel ( const Vector3d& p_w, const SE3& T_c_w )

{

return camera2pixel ( world2camera ( p_w, T_c_w ) );

}

Vector3d Camera::pixel2world ( const Vector2d& p_p, const SE3& T_c_w, double depth )

{

return camera2world ( pixel2camera ( p_p, depth ), T_c_w );

}

}

Fram定义

#ifndef FRAME_H

#define FRAME_H

#include "myslam/common_include.h"

#include "myslam/camera.h"

namespace myslam

{

// forward declare

class MapPoint;

class Frame

{

//头指针

//帧数

//时间戳

//变换矩阵

//相机内参

//图像点云数据

public:

typedef std::shared_ptr<Frame> Ptr; //

unsigned long id_; // id of this frame

double time_stamp_; // when it is recorded

SE3 T_c_w_; // transform from world to camera

Camera::Ptr camera_; // Pinhole RGBD Camera model

Mat color_, depth_; // color and depth image

public: // data members

Frame();

Frame( long id, double time_stamp=0, SE3 T_c_w=SE3(), Camera::Ptr camera=nullptr, Mat color=Mat(), Mat depth=Mat() );

~Frame();

//产生一个静态的frame 并把头指针返回

//寻找深度

//根据公式判断是否在合理的旋转变换范围之内

// factory function

static Frame::Ptr createFrame();

// find the depth in depth map

double findDepth( const cv::KeyPoint& kp );

// Get Camera Center

Vector3d getCamCenter() const;

// check if a point is in this frame

bool isInFrame( const Vector3d& pt_world );

};

}

#endif // FRAME_H

实现:

#ifndef FRAME_H

#define FRAME_H

#include "myslam/common_include.h"

#include "myslam/camera.h"

namespace myslam

{

// forward declare 前向声明,还不确定的类

class MapPoint;

class Frame

{

public:

typedef std::shared_ptr<Frame> Ptr;

unsigned long id_; // id of this frame

double time_stamp_; // when it is recorded时间戳

SE3 T_c_w_; // transform from world to camera这一帧从world到camera的se3

Camera::Ptr camera_; // Pinhole RGBD Camera model 相机内参

Mat color_, depth_; // color and depth image 这帧里存储的图片点云信息

public: // data members

Frame();

//SE3 T_c_w=SE3() 不理解为什么这么写

Frame( long id, double time_stamp=0, SE3 T_c_w=SE3(), Camera::Ptr camera=nullptr, Mat color=Mat(), Mat depth=Mat() );

~Frame();

// factory function

static Frame::Ptr createFrame();

// find the depth in depth map

double findDepth( const cv::KeyPoint& kp );

// Get Camera Center

Vector3d getCamCenter() const;

// check if a point is in this frame

bool isInFrame( const Vector3d& pt_world );

};

}

#endif // FRAME_H

MapPoint

表示路标点,估计其世界坐标,拿到当前帧提取到的特征点与地图中的路标点匹配估计相机运动,所以呢描述子树不可少的,此外记录一个点的被观测数和被匹配的次数,作为评价好坏程度指标。

0.1/include/myslam/frame.h

#ifndef MAPPOINT_H

#define MAPPOINT_H

namespace myslam

{

class Frame;

class MapPoint

{

public:

typedef shared_ptr<MapPoint> Ptr;

unsigned long id_; // ID

Vector3d pos_; // Position in world

Vector3d norm_; // Normal of viewing direction

Mat descriptor_; // Descriptor for matching

int observed_times_; // being observed by feature matching algo.

int correct_times_; // being an inliner in pose estimation

MapPoint();

MapPoint( long id, Vector3d position, Vector3d norm );

// factory function

static MapPoint::Ptr createMapPoint();

};

}

#endif // MAPPOINT_H

实现:

#include "myslam/common_include.h"

#include "myslam/mappoint.h"

namespace myslam

{

MapPoint::MapPoint()

: id_(-1), pos_(Vector3d(0,0,0)), norm_(Vector3d(0,0,0)), observed_times_(0), correct_times_(0)

{

}

//带参数构造

MapPoint::MapPoint ( long id, Vector3d position, Vector3d norm )

: id_(id), pos_(position), norm_(norm), observed_times_(0), correct_times_(0)

{

}

MapPoint::Ptr MapPoint::createMapPoint()

{

static long factory_id = 0;

return MapPoint::Ptr(

new MapPoint( factory_id++, Vector3d(0,0,0), Vector3d(0,0,0) )

);

}

}

Map类:

Map 类管理着所有的路标点,并负责添加新路标、删除不好的路标等工作。 VO 的匹配过程只需要和 Map 打交道即可

Map 类中实际存储了各个关键帧和路标点,既需要随机访问,又需要随时插入和删除,因此我们使用散列(Hash)来存储它们。

Map类的实现:

#include "myslam/map.h"

namespace myslam

{

//声明处:unordered_map<unsigned long, Frame::Ptr > keyframes_; // all key-frames

void Map::insertKeyFrame ( Frame::Ptr frame )

{

cout<<"Key frame size = "<<keyframes_.size()<<endl;

if ( keyframes_.find(frame->id_) == keyframes_.end() )

{ //[参见STL make_pair的用法](https://www.cnblogs.com/ranjiewen/p/5901296.html)

keyframes_.insert( make_pair(frame->id_, frame) );

//存入对应的键&键值,由make_pair维护

}

else

{

keyframes_[ frame->id_ ] = frame;

}

}

//管理点云,数据结构是硬伤。。。2019/05

void Map::insertMapPoint ( MapPoint::Ptr map_point )

{

if ( map_points_.find(map_point->id_) == map_points_.end() )

{

map_points_.insert( make_pair(map_point->id_, map_point) );

}

else

{

map_points_[map_point->id_] = map_point;

}

}

}

Config:

我们把构造函数声明为私有,防止这个类的对象在别处建立,它只能在 setParameterFile 时构造。实际构造的对象是 Config 的智能指针: static shared_ptrconfig_。

#ifndef CONFIG_H

#define CONFIG_H

#include "myslam/common_include.h"

namespace myslam

{

class Config

{

private:

static std::shared_ptr<Config> config_;

// static std::shared_ptr<Config> config_;

cv::FileStorage file_; //cv中管理XML增删改查的类

Config () {} // private constructor makes a singleton

public:

~Config(); // close the file when deconstructing

// set a new config file

static void setParameterFile( const std::string& filename );

// access the parameter values

template< typename T >

static T get( const std::string& key )

{

return T( Config::config_->file_[key] );//file是具体的管理xml

}

};

}

#endif // CONFIG_H

Config实现:

namespace myslam

{

void Config::setParameterFile( const std::string& filename )

{

if ( config_ == nullptr )

config_ = shared_ptr<Config>(new Config);

if(config_== nullptr)

config_== shared_ptr<Config>(new Config);

//config_中的 管理器file

///主要功能的一行

config_->file_ = cv::FileStorage( filename.c_str(), cv::FileStorage::READ );

if ( config_->file_.isOpened() == false )

{

std::cerr<<"parameter file "<<filename<<" does not exist."<<std::endl;

config_->file_.release();

return;

}

}

至此,我们定义了 SLAM 程序的基本数据结构,书写了若干个基本类。这好比是造房 子的砖头和水泥。你可以调用 cmake 编译这个 0.1版,尽管它还没有实质性的功能。接下 来我们来考虑把前面讲过的 VO 算法加到工程中,并做一些测试来调整各算法的性能。注 意,我会刻意地暴露某些设计的问题,所以你看到的实现不见得就是最好的(或者足够好 的)。

第一次改进

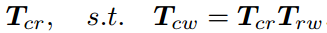

假设参考帧相对世界坐标的变换矩阵为 ,当前帧与世界坐标系间为 Tcw,则待估计的运动与这两个帧的变换矩阵构成左乘关系:

VisualOdometry 类给出了上述算法的实现

#ifndef VISUALODOMETRY_H

#define VISUALODOMETRY_H

#include "myslam/common_include.h"

#include "myslam/map.h"

#include <opencv2/features2d/features2d.hpp>

namespace myslam

{

class VisualOdometry

{

public:

typedef shared_ptr<VisualOdometry> Ptr;

//枚举典型用法:只能多选一时候

enum VOState {

INITIALIZING=-1,

OK=0,

LOST

};

VOState state_; // current VO status

Map::Ptr map_; // map with all frames and map points 实现MAP那些

Frame::Ptr ref_; // reference frame 参考帧

Frame::Ptr curr_; // current frame 当前帧 这个类的原材料

//Ptr is similar to boost::shared_ptr that is part of the Boost library

cv::Ptr<cv::ORB> orb_; // orb detector and computer cv::ORB 类型的cv下的Ptr智能指针

//

vector<cv::Point3f> pts_3d_ref_; // 3d points in reference frame

//

vector<cv::KeyPoint> keypoints_curr_; // keypoints in current frame

//

Mat descriptors_curr_; // descriptor in current frame

Mat descriptors_ref_; // descriptor in reference frame

//

vector<cv::DMatch> feature_matches_;

//根据上面一堆得到的结果

SE3 T_c_r_estimated_; // the estimated pose of current frame

//判断好坏

int num_inliers_; // number of inlier features in icp

int num_lost_; // number of lost times

// parameters

int num_of_features_; // number of features

double scale_factor_; // scale in image pyramid

int level_pyramid_; // number of pyramid levels

float match_ratio_; // ratio for selecting good matches

int max_num_lost_; // max number of continuous lost times

int min_inliers_; // minimum inliers

double key_frame_min_rot; // minimal rotation of two key-frames

double key_frame_min_trans; // minimal translation of two key-frames

public: // functions

VisualOdometry();

~VisualOdometry();

bool addFrame( Frame::Ptr frame ); // add a new frame

protected:

// inner operation

void extractKeyPoints();

void computeDescriptors();

void featureMatching();

void poseEstimationPnP();

void setRef3DPoints();

void addKeyFrame();

bool checkEstimatedPose();

bool checkKeyFrame();

};

}

#endif // VISUALODOMETRY_H