Proxying this service through a service gateway is a fairly typical setup.

I got it working today, so here are my notes.

1. nginx configuration

upstream ai_ambassador {
    ip_hash;
    server 1.2.3.4:30080;
}

server {
    listen 8080;
    server_name localhost;
    client_max_body_size 500m;
    proxy_connect_timeout 600;
    proxy_read_timeout 600;
    proxy_send_timeout 600;
    add_header 'Access-Control-Allow-Origin' '*';
    proxy_http_version 1.1;

    location / {
        proxy_pass http://ai_ambassador;
        # proxy_set_header is inherited from the server level only when the
        # location sets none of its own, so Connection has to be set here.
        proxy_set_header Connection "";
        proxy_set_header Host $host;
        proxy_set_header X-Real-Scheme $scheme;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }
}
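To make the forwarding headers concrete, here is a minimal Python sketch of what the location block sends upstream. It follows nginx's documented behavior for $proxy_add_x_forwarded_for (the client's X-Forwarded-For with $remote_addr appended, or just $remote_addr when the header is absent). The function name and the sample addresses are made up for illustration.

```python
from typing import Dict, Optional


def upstream_headers(host: str, scheme: str, remote_addr: str,
                     incoming_xff: Optional[str] = None) -> Dict[str, str]:
    """Model the proxy_set_header lines of the location block (illustrative only)."""
    # $proxy_add_x_forwarded_for: the existing header plus ", <remote_addr>",
    # or just <remote_addr> when the client sent no X-Forwarded-For.
    xff = f"{incoming_xff}, {remote_addr}" if incoming_xff else remote_addr
    return {
        "Host": host,
        "X-Real-Scheme": scheme,
        "X-Real-IP": remote_addr,
        "X-Forwarded-For": xff,
    }


if __name__ == "__main__":
    # Hypothetical client 10.0.0.7 whose request already passed through 203.0.113.5.
    print(upstream_headers("localhost", "http", "10.0.0.7", "203.0.113.5"))
```

A backend behind Ambassador can read X-Real-IP or the last X-Forwarded-For entry to recover the address nginx saw.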

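2. zipkin

Remember the part marked in red in the original post (presumably the rewrite: /zipkin/ line in the Mapping); otherwise nginx complains that zipkin redirects too many times, because the service behind /zipkin itself responds with a 302 redirect.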

---
apiVersion: v1
kind: Service
metadata:
  name: zipkin
  annotations:
    getambassador.io/config: |
      ---
      apiVersion: ambassador/v1
      kind: TracingService
      name: tracing
      service: zipkin:9411
      driver: zipkin
      ---
      apiVersion: ambassador/v1
      kind: Mapping
      name: zipkin_mapping
      prefix: /zipkin
      rewrite: /zipkin/
      service: zipkin:9411
spec:
  selector:
    app: zipkin
  ports:
  - port: 9411
    name: http
    targetPort: 9411
    # nodePort: 32764
  # type: NodePort

---
# Note: extensions/v1beta1 Deployments were removed in Kubernetes 1.16;
# on newer clusters use apps/v1 and add a spec.selector.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: zipkin
spec:
  replicas: 1
  strategy:
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: zipkin
    spec:
      containers:
      - name: zipkin
        image: harbor.xxx.cn/3rd_part/openzipkin/zipkin:2.16
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 9411
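One plausible way the "too many redirects" symptom can arise is sketched below in Python. This is a toy model, not Ambassador or Zipkin code: rewrite_path imitates a Mapping that swaps the matched prefix for the rewrite string, and toy_backend is a hypothetical stand-in for a service that serves its UI under /zipkin/ and answers everything else with a 302 to that path. All function names are invented for the example.

```python
from typing import Optional, Tuple


class TooManyRedirects(Exception):
    """Raised when the toy client gives up following redirects."""


def rewrite_path(path: str, prefix: str, rewrite: str) -> str:
    """Swap the matched prefix for the rewrite string (toy model of a Mapping)."""
    if not path.startswith(prefix):
        raise ValueError(f"{path!r} does not match prefix {prefix!r}")
    remainder = path[len(prefix):].lstrip("/")
    return rewrite.rstrip("/") + "/" + remainder


def toy_backend(path: str) -> Tuple[int, Optional[str]]:
    """Hypothetical backend: serves under /zipkin/, redirects anything else there."""
    if path.startswith("/zipkin/"):
        return 200, None
    return 302, "/zipkin/"


def follow(path: str, prefix: str, rewrite: str, max_hops: int = 10) -> int:
    """Return how many redirects were followed before a 200, or raise."""
    for hops in range(max_hops + 1):
        status, location = toy_backend(rewrite_path(path, prefix, rewrite))
        if status == 200:
            return hops
        path = location  # the client retries at the redirect target
    raise TooManyRedirects(f"gave up after {max_hops} redirects")


if __name__ == "__main__":
    print(follow("/zipkin/", "/zipkin", "/zipkin/"))  # prefix preserved: no loop
    try:
        follow("/zipkin/", "/zipkin", "/")  # prefix stripped: endless 302s
    except TooManyRedirects as exc:
        print("loop:", exc)
```

In this model, stripping the prefix means the backend never sees a path under /zipkin/, so every response is another redirect; keeping the prefix in the rewrite breaks the cycle.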

3. Pin the NodePort of the ambassador service.

---
apiVersion: v1
kind: Service
metadata:
  name: ambassador
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    targetPort: 8080
    protocol: TCP
    nodePort: 30080
  selector:
    service: ambassador
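The pinned nodePort has to agree with the port in the nginx upstream (30080 in both places here), and it has to fall inside the cluster's NodePort range. A small Python sanity check, assuming the Kubernetes default range of 30000-32767 (a cluster may be configured differently); the function name is hypothetical:

```python
from typing import List

# Kubernetes default NodePort range; an assumption, clusters can override it.
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)


def check_node_port(node_port: int, upstream_port: int,
                    allowed: range = DEFAULT_NODE_PORT_RANGE) -> List[str]:
    """Return a list of human-readable problems (empty when everything lines up)."""
    problems = []
    if node_port not in allowed:
        problems.append(
            f"nodePort {node_port} is outside {allowed.start}-{allowed.stop - 1}")
    if node_port != upstream_port:
        problems.append(
            f"nginx upstream port {upstream_port} does not match nodePort {node_port}")
    return problems


if __name__ == "__main__":
    print(check_node_port(30080, 30080))
```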

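4. Since the MLflow tracking data is to be stored in MySQL, first create a MySQL service (heh, because of a spelling slip I did not keep "mlflow tracking" and "mlflow tracing" apart, hence the names below). This is only a test, so the password is arbitrary and neither the data nor the configuration is mounted out of the container.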

apiVersion: apps/v1
kind: Deployment
metadata:
  name: mlflow-tracing-mysql
spec:
  replicas: 1
  selector:
    matchLabels:
      name: mlflow-tracing-mysql
  template:
    metadata:
      labels:
        name: mlflow-tracing-mysql
    spec:
      containers:
      - name: mysql
        image: harbor.xxx.cn/3rd_part/mysql:5.7.24
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3306
        env:
        - name: MYSQL_ROOT_PASSWORD
          value: "xxxx"
---
kind: Service
apiVersion: v1
metadata:
  name: mlflow-tracing-mysql
spec:
  type: NodePort
  ports:
  - name: mlflow-tracing-mysql
    port: 3306
    targetPort: 3306
    protocol: TCP
  selector:
    name: mlflow-tracing-mysql

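After this step you probably need to confirm that the root user can connect remotely. I also seem to have created the mlflow database by hand; it probably does not work without it, since MLflow uses the database directly.

If you change settings such as the HDFS location, it is best to recreate the database as well, because those settings are written into the database on the first connection. Alternatively, go into the database and edit them yourself.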
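The manual database step can be scripted. The Python sketch below builds the --backend-store-uri value and a CREATE DATABASE IF NOT EXISTS statement; ensure_database then runs that statement through pymysql, which is imported lazily so the pure helpers work without it. The helper names are hypothetical, and the host and credentials are placeholders in the spirit of the configs above.

```python
import re
from urllib.parse import quote_plus


def backend_store_uri(user: str, password: str, host: str,
                      port: int = 3306, database: str = "mlflow") -> str:
    """Build the SQLAlchemy-style URI passed to `mlflow server --backend-store-uri`."""
    # Percent-encode the credentials so characters such as '@' cannot break the URI.
    return (f"mysql+pymysql://{quote_plus(user)}:{quote_plus(password)}"
            f"@{host}:{port}/{database}")


def create_database_sql(database: str = "mlflow") -> str:
    """Return an idempotent CREATE DATABASE statement for a plain identifier."""
    if not re.fullmatch(r"[A-Za-z0-9_]+", database):
        raise ValueError(f"unsafe database name: {database!r}")
    return f"CREATE DATABASE IF NOT EXISTS `{database}`"


def ensure_database(host: str, user: str, password: str,
                    port: int = 3306, database: str = "mlflow") -> None:
    """Create the database if it is missing (requires the pymysql package)."""
    import pymysql  # third-party; imported lazily on purpose

    conn = pymysql.connect(host=host, port=port, user=user, password=password)
    try:
        with conn.cursor() as cur:
            cur.execute(create_database_sql(database))
        conn.commit()
    finally:
        conn.close()


if __name__ == "__main__":
    print(create_database_sql())
    print(backend_store_uri("root", "xxxx", "mlflow-tracing-mysql"))
```

If ensure_database connects successfully from another pod, that also confirms the remote-access question for the chosen user.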

5. The mlflow tracking configuration. This is the main event.

apiVersion: apps/v1
kind: Deployment
metadata:
  name: mlflow-tracking
spec:
  replicas: 1
  selector:
    matchLabels:
      name: mlflow-tracking
  template:
    metadata:
      labels:
        name: mlflow-tracking
    spec:
      imagePullSecrets:
      - name: harborsecret
      containers:
      - name: mlflow-tracing
        image: harbor.xxx.cn/mlflow/mlflow-tracing:v1.2
        imagePullPolicy: IfNotPresent
        # command: ['sh', '-c', 'echo Hello Kubernetes! && sleep 360000']
        command: ['sh', '-c', 'mlflow server --backend-store-uri mysql+pymysql://root:xxx@mlflow-tracing-mysql:3306/mlflow --default-artifact-root hdfs://xxxx:8020/ml_model/mlflow --host 0.0.0.0']
        ports:
        - containerPort: 5000
        env:
        - name: MLFRACKING_TOKEN
          value: "no use"

---
kind: Service
apiVersion: v1
metadata:
  name: mlflow-tracking
  annotations:
    getambassador.io/config: |
      ---
      apiVersion: ambassador/v1
      kind: Mapping
      name: mlflow_tracking_mapping
      prefix: /mlflow-tracking/
      # rewrite: /
      service: mlflow-tracking:5000
spec:
  type: NodePort
  ports:
  - name: mlflow-tracking
    port: 5000
    targetPort: 5000
    protocol: TCP
    # nodePort: 30080
  selector:
    name: mlflow-tracking

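6. So how is the Dockerfile for mlflow tracking written? Look: if your environment requires it, go through an HTTP proxy, and if overseas sources are slow, point pip at a domestic (China) mirror.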

FROM harbor.xxx.cn/3rd_part/continuumio/miniconda3:4.7.10
# -y keeps conda from prompting during a non-interactive build.
RUN export http_proxy=http://xxx.local:8080 \
    && export https_proxy=http://xxx.local:8080 \
    && export ftp_proxy=xxx.local:8080 \
    && pip install pymysql mlflow -i http://pypi.douban.com/simple --trusted-host pypi.douban.com \
    && conda install -y hdfs3 -c conda-forge \
    && conda clean -y --all \
    && rm -rf ~/.cache/pip
ENV MLFLOW_HDFS_DRIVER=libhdfs3
# EXPOSE takes container ports only, not a host:container pair.
EXPOSE 5000

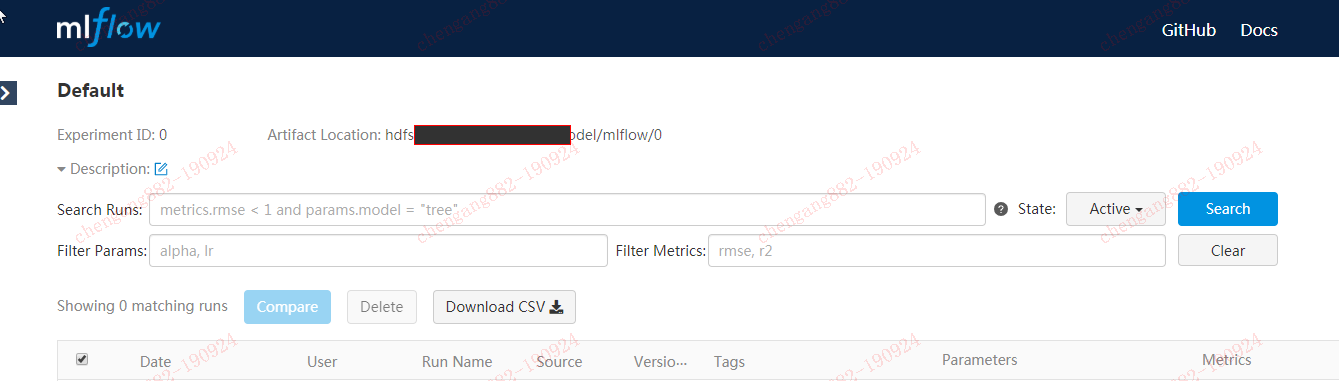

7. Once all seven stars are aligned, the mlflow tracking service proxied through nginx is reachable.
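A quick way to confirm the whole chain from the client side is to point the MLflow Python client at the proxied prefix and log a single parameter. This is a minimal sketch under a few assumptions: the host name nginx.example.internal is a placeholder, the client machine has the mlflow package installed, and the client accepts a tracking URI that carries the /mlflow-tracking path prefix. Only a parameter is logged, so no HDFS access is needed on the client.

```python
def tracking_uri(proxy_host: str, proxy_port: int = 8080,
                 prefix: str = "/mlflow-tracking/") -> str:
    """Build the tracking URI that goes through nginx and the Ambassador Mapping."""
    return f"http://{proxy_host}:{proxy_port}/{prefix.strip('/')}"


def smoke_test(uri: str) -> None:
    """Log one parameter through the proxy (requires the mlflow package)."""
    import mlflow  # third-party; imported lazily on purpose

    mlflow.set_tracking_uri(uri)
    with mlflow.start_run():
        mlflow.log_param("smoke_test", "ok")


if __name__ == "__main__":
    # nginx.example.internal is a hypothetical host name for the nginx box.
    uri = tracking_uri("nginx.example.internal")
    print(uri)
    # smoke_test(uri)  # uncomment on a machine that can reach the proxy
```

If the run shows up in the web UI under the same prefix, nginx, Ambassador, the tracking server and MySQL are all wired together.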