Today I used Selenium to write an automated crawler for someone.

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from selenium.common.exceptions import TimeoutException
from pyquery import PyQuery as pq
import json
import re
import time
import os
import csv

# Start Firefox and share one explicit wait (10-second timeout) across all functions.
browser = webdriver.Firefox()
browser.maximize_window()
wait = WebDriverWait(browser, 10)

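def search():
    # Open the JD.com home page and search for "电脑" (computers).
    browser.get("https://www.jd.com/")
    # Named search_box rather than input so the builtin input() is not shadowed.
    search_box = wait.until(
        EC.presence_of_element_located((By.CSS_SELECTOR, "#key"))
    )
    submit = wait.until(
        EC.element_to_be_clickable((By.CSS_SELECTOR, "#search > div > div.form > button > i"))
    )
    search_box.send_keys("电脑")
    submit.click()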

def next_page(page_number):
    # Type the page number into the "skip to page" box at the bottom, then parse the result.
    page_box = wait.until(
        EC.presence_of_element_located((By.CSS_SELECTOR, "#J_bottomPage > span.p-skip > input"))
    )
    submit = wait.until(
        EC.element_to_be_clickable((By.CSS_SELECTOR, "#J_bottomPage > span.p-skip > a"))
    )
    page_box.clear()
    page_box.send_keys(str(page_number))
    submit.click()
    jiexi_page()

def write_to_file(content):

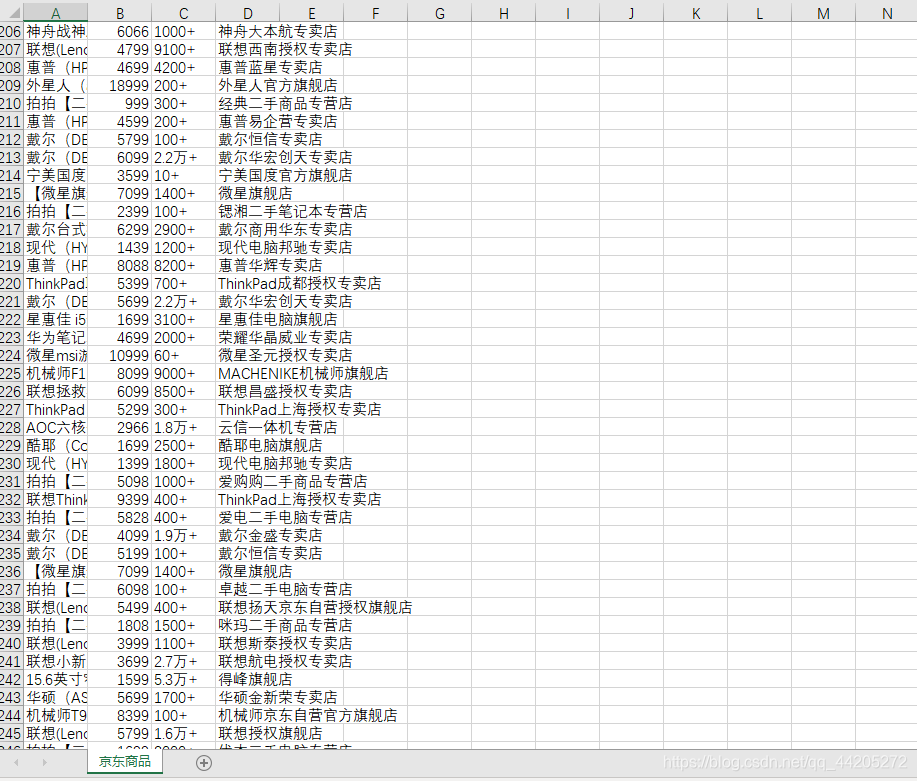

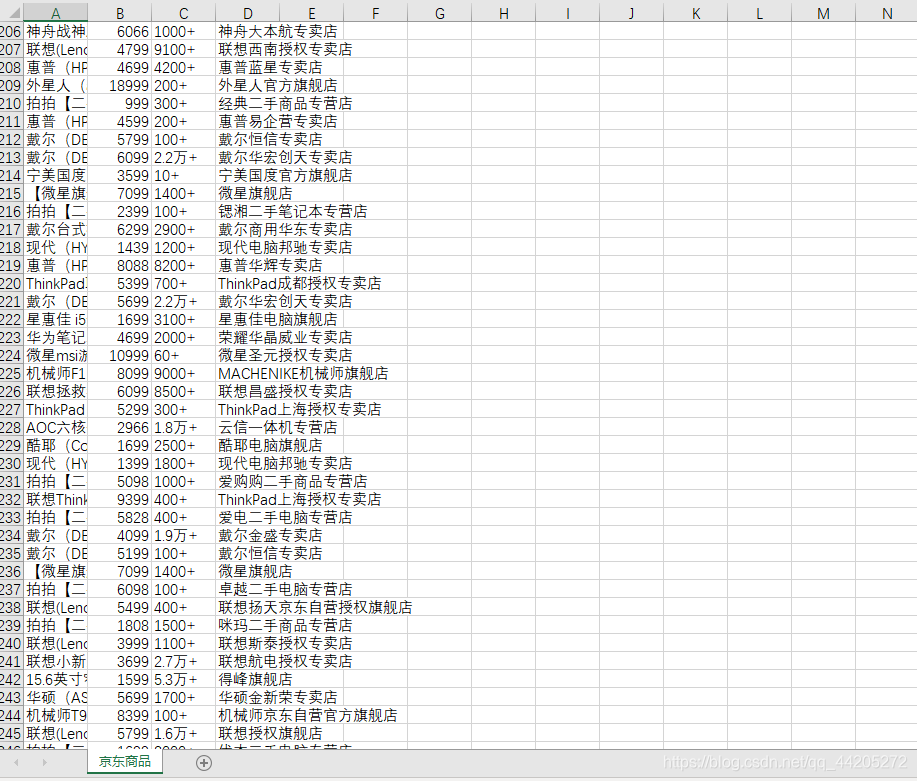

f = open('京东商品.csv', 'a', encoding='utf-8', newline='')

writer = csv.writer(f)

writer.writerow(content)

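def jiexi_page():
    # "jiexi" is pinyin for "parse": extract every product on the current results page.
    wait.until(
        EC.presence_of_element_located((By.CSS_SELECTOR, "#J_searchWrap .gl-item"))
    )
    html = browser.page_source
    doc = pq(html)
    items = doc("#J_searchWrap .gl-item").items()
    for item in items:
        product = {
            'name': item.find(".p-name.p-name-type-2").text().replace("\n", ""),
            # [1:] drops the leading currency symbol
            'price': item.find(".p-price").text()[1:].replace("\n", ""),
            # 评价 = reviews; [:-3] drops the trailing three-character label
            '评价': item.find(".p-commit").text()[:-3],
            'shop': item.find(".p-shop").text()
        }
        # Only the values are written, in the order name, price, reviews, shop.
        product_list = list(product.values())
        print(product_list)
        write_to_file(product_list)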
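The slices `[1:]` and `[:-3]` in `jiexi_page` assume that the price always starts with exactly one currency symbol and that the review text always ends with exactly three label characters. A more tolerant alternative, sketched here, pulls out the numeric part with a regular expression. The helper names `clean_price` and `clean_review_count` are hypothetical, and the sample strings in the usage note are assumptions about what the page renders, not captured output.

```python
import re

# Hypothetical helpers; they are not part of the original script.
_PRICE_RE = re.compile(r"\d+(?:\.\d+)?")
_COUNT_RE = re.compile(r"\d+(?:\.\d+)?万?\+?")


def clean_price(text):
    """Return the first decimal number found in text, or '' if there is none."""
    match = _PRICE_RE.search(text.replace(",", ""))
    return match.group(0) if match else ""


def clean_review_count(text):
    """Return the first count found, such as '2万+' or '500+', or '' if there is none."""
    match = _COUNT_RE.search(text)
    return match.group(0) if match else ""
```

Under those assumptions, `clean_price("¥4999.00")` yields `"4999.00"` and `clean_review_count("2万+条评价")` yields `"2万+"`; an empty string passes through as `""` instead of raising.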

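def main():
    try:
        search()
        time.sleep(1)
        # Page 1 is entered explicitly so that it is parsed like every other page.
        next_page(1)
        for i in range(2, 101):
            next_page(i)
            # Pause between pages to avoid hammering the site.
            time.sleep(2)
    finally:
        # Close the browser even if a page times out.
        browser.quit()

if __name__ == "__main__":
    main()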
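The script imports `TimeoutException` but never catches it, so a single slow page ends the whole 100-page run. One possible way to soften that is a small retry wrapper, sketched here. `retry` is a hypothetical helper, not a Selenium API; it is written generically so that it runs without a browser, and the parameter names and defaults are illustrative assumptions.

```python
import time


def retry(action, exceptions, attempts=3, delay=2.0):
    """Call action() until it succeeds or the attempts run out.

    Hypothetical helper: `exceptions` is the exception type (or tuple of
    types) worth retrying; any other exception propagates immediately.
    """
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except exceptions:
            if attempt == attempts:
                raise  # out of attempts: let the caller see the failure
            time.sleep(delay)
```

In the crawler, the loop body could presumably become `retry(lambda: next_page(i), TimeoutException)`, so that a page which times out is requested again before the run gives up.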