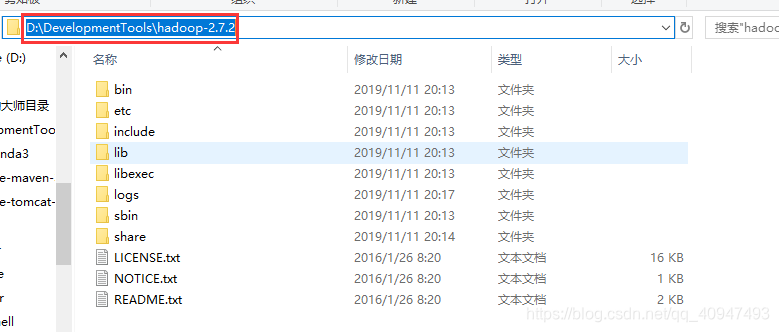

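1. Download Hadoop and extract it to a path that contains no Chinese characters

- Download link: https://hadoop.apache.org/releases.html

- Extract the downloaded archive to a path that contains no Chinese characters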

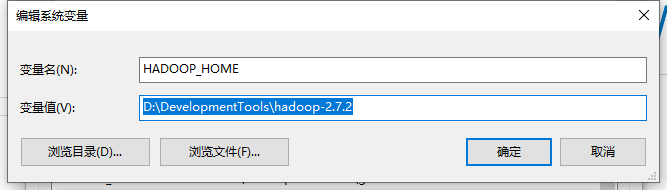

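2. Configure the Hadoop environment variables

- Set the HADOOP_HOME environment variable to the directory where Hadoop was extracted

- Add Hadoop's bin directory (typically %HADOOP_HOME%\bin) to the Path environment variable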
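To confirm that the variable is visible to Java programs, a small check along the following lines may help. This is only a sketch: `HadoopEnvCheck` is a hypothetical helper, not part of Hadoop, and it assumes that a standard Hadoop distribution has a `bin` directory directly under HADOOP_HOME.

```java
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Hypothetical helper (not part of Hadoop): sanity-checks the HADOOP_HOME setup. */
final class HadoopEnvCheck {

    /** Returns true when every character of the path is plain ASCII. */
    static boolean isAsciiOnly(String path) {
        return StandardCharsets.US_ASCII.newEncoder().canEncode(path);
    }

    /** Returns a human-readable verdict for the given HADOOP_HOME value. */
    static String check(String hadoopHome) {
        if (hadoopHome == null || hadoopHome.trim().isEmpty()) {
            return "HADOOP_HOME is not set";
        }
        if (!isAsciiOnly(hadoopHome)) {
            return "HADOOP_HOME contains non-ASCII characters: " + hadoopHome;
        }
        // Assumption: the extracted distribution has a bin directory at its root.
        Path bin = Paths.get(hadoopHome, "bin");
        if (!Files.isDirectory(bin)) {
            return "No bin directory under " + hadoopHome;
        }
        return "OK: " + hadoopHome;
    }

    public static void main(String[] args) {
        // Reads the variable exactly as a Hadoop client started from this shell would see it.
        System.out.println(check(System.getenv("HADOOP_HOME")));
    }
}
```

Open a new terminal or restart the IDE before running it, since processes that were already running do not pick up changes to environment variables.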

3. Create a Maven project

- Create a Maven project named `HdfsClientDemo`

- Add the required dependencies to `pom.xml`:

  ```xml
  <dependencies>
      <dependency>
          <groupId>junit</groupId>
          <artifactId>junit</artifactId>
          <version>RELEASE</version>
      </dependency>
      <dependency>
          <groupId>org.apache.logging.log4j</groupId>
          <artifactId>log4j-core</artifactId>
          <version>2.8.2</version>
      </dependency>
      <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-common</artifactId>
          <version>2.7.2</version>
      </dependency>
      <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-client</artifactId>
          <version>2.7.2</version>
      </dependency>
      <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-hdfs</artifactId>
          <version>2.7.2</version>
      </dependency>
      <dependency>
          <groupId>jdk.tools</groupId>
          <artifactId>jdk.tools</artifactId>
          <version>1.8</version>
          <scope>system</scope>
          <systemPath>${JAVA_HOME}/lib/tools.jar</systemPath>
      </dependency>
  </dependencies>
  ```

- In the project's `src/main/resources` directory, create a file named `log4j.properties` with the following content:

  ```properties
  log4j.rootLogger=INFO, stdout
  log4j.appender.stdout=org.apache.log4j.ConsoleAppender
  log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
  log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
  log4j.appender.logfile=org.apache.log4j.FileAppender
  log4j.appender.logfile.File=target/spring.log
  log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
  log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n
  ```

- Create the package `com.easysir.hdfs`

- Create the `HDFSClient` class:

  ```java
  package com.easysir.hdfs;

  import org.apache.hadoop.conf.Configuration;
  import org.apache.hadoop.fs.FileSystem;
  import org.apache.hadoop.fs.Path;

  import java.io.IOException;
  import java.net.URI;
  import java.net.URISyntaxException;

  /**
   * description
   *
   * @author Hu.Wang 2020/02/02 11:09
   */
  public class HDFSClient {

      public static void main(String[] args) throws IOException, URISyntaxException, InterruptedException {

          Configuration conf = new Configuration();

          // 1. Get the HDFS client object, connecting as user "hadoop".
          //    Note: if SZMaster01 is not mapped in the Windows hosts file,
          //    use the NameNode's IP address in its place.
          FileSystem fs = FileSystem.get(new URI("hdfs://SZMaster01:9000"), conf, "hadoop");

          // 2. Create a directory on HDFS
          fs.mkdirs(new Path("/0202/mydir"));

          // 3. Release resources
          fs.close();

          System.out.println("over");
      }
  }
  ```

- Run the program. It prints `over` once the directory has been created and the client has been closed.
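The comment in the client code says to use an address in place of `SZMaster01` when the Windows hosts file has no entry for it. One way to handle that choice might look like the sketch below. `NameNodeUri` is a hypothetical helper, and `192.168.1.101` is a placeholder that must be replaced with the real NameNode address; only JDK classes are used.

```java
import java.net.InetAddress;
import java.net.URI;
import java.net.UnknownHostException;

/** Hypothetical helper: builds the hdfs:// URI that is passed to FileSystem.get. */
final class NameNodeUri {

    /** Builds hdfs://host:port from a hostname or an IP address. */
    static URI build(String host, int port) {
        return URI.create("hdfs://" + host + ":" + port);
    }

    /** Returns true when this machine can resolve the hostname (hosts file or DNS). */
    static boolean resolves(String host) {
        try {
            InetAddress.getByName(host);
            return true;
        } catch (UnknownHostException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String host = "SZMaster01";           // hostname used in the client code
        String fallbackIp = "192.168.1.101";  // placeholder: replace with your NameNode's IP
        String target = resolves(host) ? host : fallbackIp;
        System.out.println(build(target, 9000));
    }
}
```

The resulting URI can be passed to `FileSystem.get` in place of the hard-coded `new URI("hdfs://SZMaster01:9000")`.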