This post continues the tour of the other optimization solvers that MATLAB provides. It covers:

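- fminbnd, which finds the minimum of a single-variable function on a fixed interval

- fminsearch, which finds the minimum of an unconstrained multivariable function using a derivative-free method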

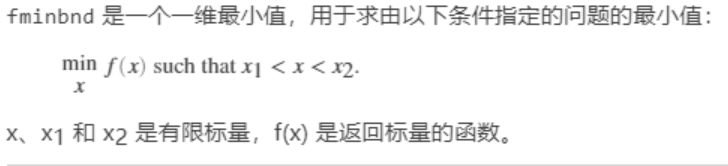

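fminbnd is used much like fminunc. The difference is that fminunc is more powerful and can search for the optimum of a multivariable function, whereas fminbnd handles only single-variable problems on a bounded interval.

The typical problem is to minimize f(x) subject to x1 < x < x2, where x, x1, and x2 are finite scalars and f(x) returns a scalar.

The function accepts only lower and upper bounds on the optimization variable; no other constraints are allowed. The available call syntaxes are: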

x = fminbnd(fun,x1,x2)

x = fminbnd(fun,x1,x2,options)

x = fminbnd(problem)

[x,fval] = fminbnd(___)

[x,fval,exitflag] = fminbnd(___)

[x,fval,exitflag,output] = fminbnd(___)
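To make the call forms above concrete, here is a minimal sketch. The objective (sin on the interval [0, 2*pi]) and the tightened TolX value are illustrative choices, not part of the original example.

```matlab
% Illustrative objective: sin(x) has its minimum on [0, 2*pi] at x = 3*pi/2.
fun = @sin;

% optimset builds the options structure accepted by fminbnd.
% 'TolX' is tightened here only to show how an option is passed.
options = optimset('Display','off','TolX',1e-8);

% Request all four outputs: minimizer, objective value, exit flag, run details.
[x, fval, exitflag, output] = fminbnd(fun, 0, 2*pi, options);

fprintf('x = %.6f (3*pi/2 = %.6f), fval = %.6f, exitflag = %d\n', ...
        x, 3*pi/2, fval, exitflag);
```

An exitflag of 1 means the solver stopped because the step in x fell below TolX; the output structure carries the iteration and function-evaluation counts.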

As an example, we minimize the following function:

function f = scalarobjective(x)

f = 0;

for k = -10:10

f = f + (k+1)^2*cos(k*x)*exp(-k^2/2);

end

The main script is:

%% the use of fminbnd

options = optimset('Display','iter','PlotFcns',@optimplotfval);

[x,fval,exitflag,output] = fminbnd(@scalarobjective,1,3,options)

The output is:

Func-count x f(x) Procedure

1 1.76393 -0.589643 initial

2 2.23607 -0.627273 golden

3 2.52786 -0.47707 golden

4 2.05121 -0.680212 parabolic

5 2.03127 -0.68196 parabolic

6 1.99608 -0.682641 parabolic

7 2.00586 -0.682773 parabolic

8 2.00618 -0.682773 parabolic

9 2.00606 -0.682773 parabolic

10 2.0061 -0.682773 parabolic

11 2.00603 -0.682773 parabolic

Optimization terminated:

the current x satisfies the termination criteria using OPTIONS.TolX of 1.000000e-04

x =

2.0061

fval =

-0.6828

exitflag =

1

output =

struct with fields:

iterations: 10

funcCount: 11

algorithm: 'golden section search, parabolic interpolation'

message: 'Optimization terminated:↵ the current x satisfies the termination criteria using OPTIONS.TolX of 1.000000e-04 ↵'

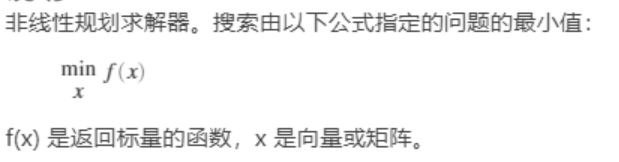
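The Procedure column in the log shows fminbnd mixing golden-section steps with parabolic interpolation. The sketch below implements only the golden-section part, as a simplified illustration rather than the actual fminbnd code, and uses the same objective rewritten as a vectorized anonymous function. The tolerance and variable names are assumptions made for this example. Its first two trial points match the first two x values in the log (1.76393 and 2.23607), and the final estimate should land close to the 2.0061 reported above, only with more function evaluations than fminbnd needs.

```matlab
% Objective from the example above, written as a vectorized anonymous function.
k = -10:10;
f = @(x) sum((k+1).^2 .* cos(k*x) .* exp(-k.^2/2));

a = 1; b = 3;                 % search interval used in the article
r = (sqrt(5) - 1)/2;          % golden ratio conjugate, about 0.618
tol = 1e-4;                   % interval-width tolerance (illustrative choice)

x1 = b - r*(b - a);           % lower interior trial point
x2 = a + r*(b - a);           % upper interior trial point
f1 = f(x1); f2 = f(x2);
first_points = [x1, x2];      % kept for comparison with the solver log

while (b - a) > tol
    if f1 < f2
        % The minimum lies in [a, x2]: shrink from the right, reuse x1.
        b = x2; x2 = x1; f2 = f1;
        x1 = b - r*(b - a); f1 = f(x1);
    else
        % The minimum lies in [x1, b]: shrink from the left, reuse x2.
        a = x1; x1 = x2; f1 = f2;
        x2 = a + r*(b - a); f2 = f(x2);
    end
end

xmin = (a + b)/2;             % midpoint of the final bracket
fprintf('first trial points: %.5f, %.5f\n', first_points);
fprintf('x = %.4f, f(x) = %.4f\n', xmin, f(xmin));
```

Each pass evaluates the objective once and shrinks the bracket by the factor r, which is why the pure method converges only linearly; the parabolic steps in fminbnd are what speed up the final iterations in the log.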

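The standard mathematical form for fminsearch is to minimize f(x), where x is a vector or matrix and f(x) returns a scalar.

The function does not even accept bounds on the optimization variables; it performs unconstrained optimization only. The available call syntaxes are likewise limited to: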

x = fminsearch(fun,x0)

x = fminsearch(fun,x0,options)

x = fminsearch(problem)

[x,fval] = fminsearch(___)

[x,fval,exitflag] = fminsearch(___)

[x,fval,exitflag,output] = fminsearch(___)

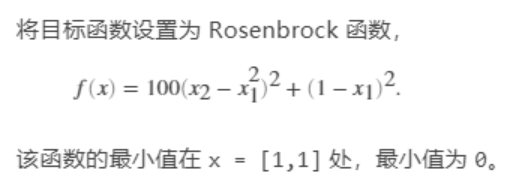
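A minimal sketch of the basic call form follows. The quadratic objective and the starting point are hypothetical, chosen only because the minimizer (1, -2) is known in advance.

```matlab
% Hypothetical two-variable quadratic with its minimum at (1, -2).
fun = @(x) (x(1) - 1)^2 + (x(2) + 2)^2;

x0 = [0, 0];                           % starting point (required: no bounds are given)
options = optimset('Display','off');   % suppress the iteration log

% Request all four outputs: minimizer, objective value, exit flag, run details.
[x, fval, exitflag, output] = fminsearch(fun, x0, options);

fprintf('x = [%.4f, %.4f], fval = %.2e, exitflag = %d\n', ...
        x(1), x(2), fval, exitflag);
```

Unlike fminbnd, the only problem data here are the objective and the starting point x0, so the result can depend on where the search begins.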

As an example, we minimize the Rosenbrock function used in the MATLAB documentation:

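%% the use of fminsearch

clc

clear all

% TolCon is a constraint tolerance and has no effect in fminsearch, so it is not set; the default TolX and TolFun of 1e-4 apply

options = optimset('Display','iter','PlotFcns',@optimplotfval);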

fun = @(x)100*(x(2) - x(1)^2)^2 + (1 - x(1))^2;

x0 = [-1.2,1];

[x,fval,exitflag,output] = fminsearch(fun,x0,options)

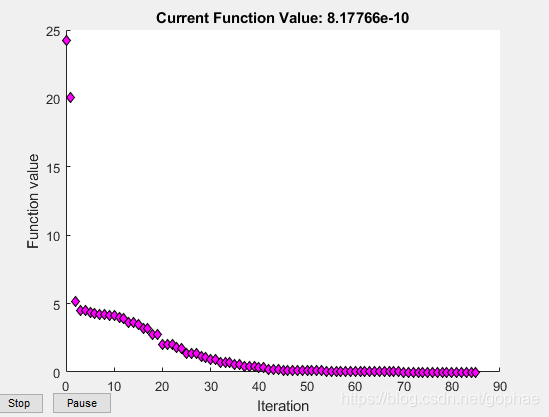

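Since fminunc and fminsearch both solve unconstrained multivariable problems, a small comparison is worthwhile. fminsearch is the older function and offers only one search method, the Nelder-Mead simplex direct search. Let's see how it compares with the 'quasi-newton' or 'trust-region' algorithms offered by fminunc:

We solve the same Rosenbrock function and compare the iteration counts: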

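%% the use of fminsearch

clc

clear all

% TolCon is a constraint tolerance and has no effect in fminsearch, so it is not set; the default TolX and TolFun of 1e-4 apply

options = optimset('Display','iter','PlotFcns',@optimplotfval);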

fun = @(x)100*(x(2) - x(1)^2)^2 + (1 - x(1))^2;

x0 = [-1.2,1];

[x,fval,exitflag,output] = fminsearch(fun,x0,options)

%% comparison between fminsearch and fminunc

clc

clear all

options = optimoptions(@fminunc,'Display','iter','Algorithm','quasi-newton','PlotFcns',@optimplotfval);

fun = @(x)100*(x(2) - x(1)^2)^2 + (1 - x(1))^2;

x0 = [-1.2,1];

[x,fval,exitflag,output] = fminunc(fun,x0,options)

The results are:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

fminsearch result:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Optimization terminated:

the current x satisfies the termination criteria using OPTIONS.TolX of 1.000000e-04

and F(X) satisfies the convergence criteria using OPTIONS.TolFun of 1.000000e-04

x =

1.0000 1.0000

fval =

8.1777e-10

exitflag =

1

output =

struct with fields:

iterations: 85

funcCount: 159

algorithm: 'Nelder-Mead simplex direct search'

message: 'Optimization terminated:↵ the current x satisfies the termination criteria using OPTIONS.TolX of 1.000000e-04 ↵ and F(X) satisfies the convergence criteria using OPTIONS.TolFun of 1.000000e-04 ↵'

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

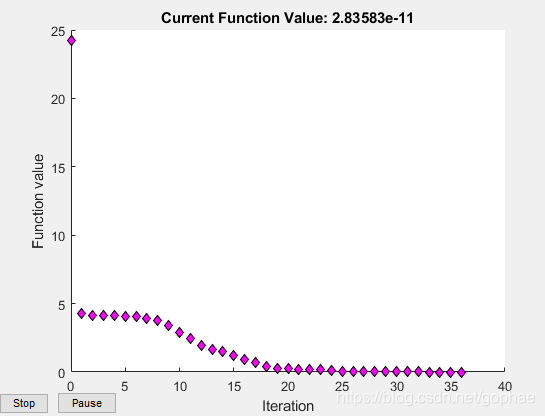

fminunc result:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

x =

1.0000 1.0000

fval =

2.8358e-11

exitflag =

1

output =

struct with fields:

iterations: 36

funcCount: 138

stepsize: 2.3879e-04

lssteplength: 1

firstorderopt: 1.8950e-05

algorithm: 'quasi-newton'

message: '↵Local minimum found.↵↵Optimization completed because the size of the gradient is less than↵the value of the optimality tolerance.↵↵<stopping criteria details>↵↵Optimization completed: The first-order optimality measure, 8.748957e-08, is less ↵than options.OptimalityTolerance = 1.000000e-06.↵↵'

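fminunc needs far fewer iterations (36 versus 85), although the function-evaluation counts are closer (138 versus 159). Note also that the default stopping tolerance is 1e-4 for fminsearch but 1e-6 for fminunc, so fminunc is held to the stricter stopping criterion.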
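Because the two solvers stop at different default tolerances, one way to make the comparison fairer is to rerun fminsearch with TolX and TolFun tightened to 1e-6. The sketch below does that; the variable names are illustrative, and no particular counts are claimed since they depend on the MATLAB version.

```matlab
% Rosenbrock function and starting point from the article.
fun = @(x)100*(x(2) - x(1)^2)^2 + (1 - x(1))^2;
x0 = [-1.2,1];

% Default tolerances (TolX = TolFun = 1e-4) versus tightened ones.
opts_default = optimset('Display','off');
opts_tight   = optimset('Display','off','TolX',1e-6,'TolFun',1e-6);

[xd, fd, ~, out_default] = fminsearch(fun, x0, opts_default);
[xt, ft, ~, out_tight]   = fminsearch(fun, x0, opts_tight);

fprintf('default: fval = %.3e, iterations = %d, funcCount = %d\n', ...
        fd, out_default.iterations, out_default.funcCount);
fprintf('tight:   fval = %.3e, iterations = %d, funcCount = %d\n', ...
        ft, out_tight.iterations, out_tight.funcCount);
```

The tightened run can only stop at the same point or later than the default run, so its counts give a more comparable baseline against the fminunc figures.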

The iteration curves are plotted below:

fminunc

fminsearch

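This shows how well the quasi-Newton method works. Note that fminunc cannot use the interior-point method, because the problem has no constraints; the interior-point method becomes available in fmincon.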