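Tools and Software

1. MyEclipse 10.8

2. Hadoop 2.6.0 plugin for Eclipse

1. Place the plugin in the MyEclipse directory MyEclipse\MyEclipse 10\dropins

2. If necessary, delete the org.eclipse.update folder under the configuration directory so that MyEclipse rescans its plugins on the next start

3. The first time Eclipse starts, it asks for a workspace directory; all projects created later are stored under it.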

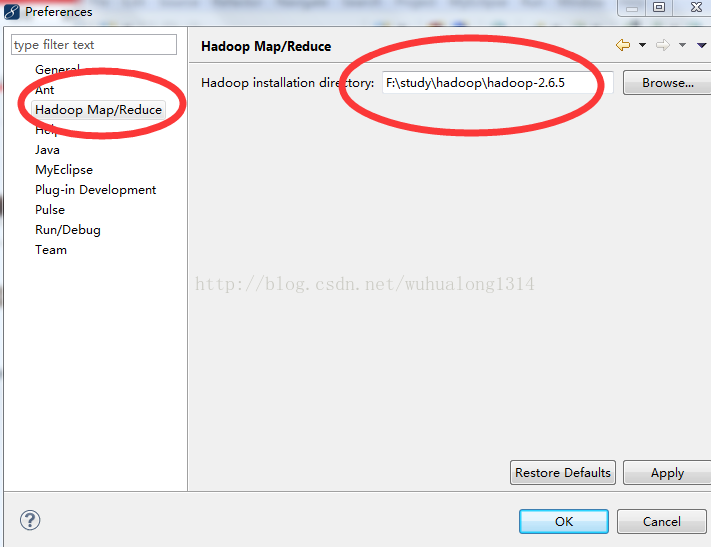

Once inside, open the settings from the menu Window -> Preferences:

Click Browse, select the Hadoop directory, then click OK

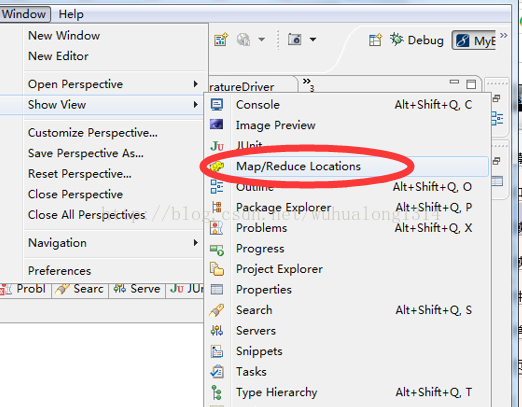

4. In MyEclipse, go to Window >> Show View; MapReduce Tools >> Map/Reduce Locations now appears. Open it.

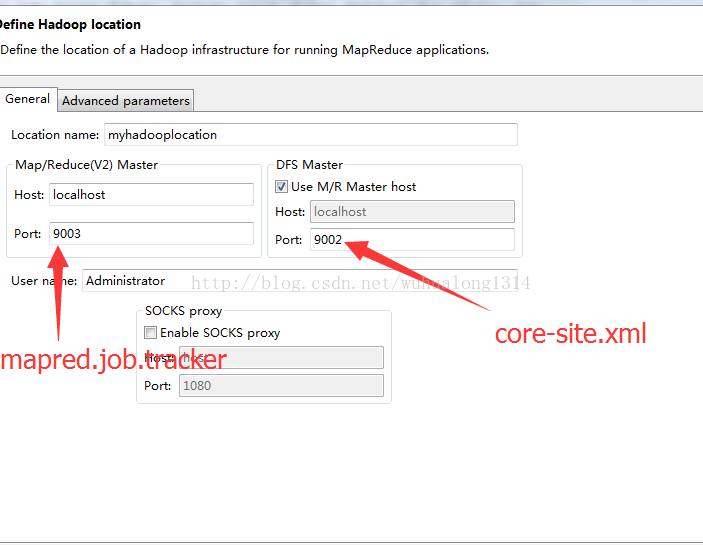

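Configuring the Hadoop runtime environment

When configuring the Hadoop location in Eclipse, the port numbers must match those in core-site.xml and mapred-site.xml under the conf folder. The core-site.xml port goes into DFS Master, and the mapred-site.xml port goes into Map/Reduce Master.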
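A quick way to read the host and port out of the file-system address is sketched below. It assumes the address in core-site.xml is hdfs://localhost:9002, the one used in the commands later in this post; the class and method names (LocationCheck, hostAndPort, matches) are made up for illustration and are not part of Hadoop or the plugin.

```java
import java.net.URI;

// Minimal sketch: split a file-system URI into the host and port that
// have to be typed into the DFS Master fields of the plugin dialog.
class LocationCheck {

    // Returns "host:port" for a URI such as hdfs://localhost:9002.
    static String hostAndPort(String fsUri) {
        URI uri = URI.create(fsUri);
        return uri.getHost() + ":" + uri.getPort();
    }

    // True when the values entered in the dialog agree with the URI.
    static boolean matches(String fsUri, String host, int port) {
        URI uri = URI.create(fsUri);
        return host.equals(uri.getHost()) && port == uri.getPort();
    }

    public static void main(String[] args) {
        // Assumed value; replace it with the address from your own core-site.xml.
        String fsUri = "hdfs://localhost:9002";
        System.out.println(hostAndPort(fsUri));
        System.out.println(matches(fsUri, "localhost", 9002));
    }
}
```

If the dialog values and the configured address disagree, the DFS tree in Project Explorer will typically fail to connect.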

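Once this is configured, the files in DFS can be browsed in Project Explorer.

Writing the first MR program (word count)

In the Eclipse menu, New Project now includes a Map/Reduce option:

Create a new Map/Reduce project

Create a new class, WordCount.java

The code is as follows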

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.util.GenericOptionsParser;

    public class WordCount {

        // Mapper: emits (word, 1) for every token in an input line.
        public static class TokenizerMapper extends
                Mapper<Object, Text, Text, IntWritable> {

            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                // Debug output: the key is the byte offset of the line, the value is the line.
                System.out.println("key=" + key.toString());
                System.out.println("Value=" + value.toString());
                StringTokenizer itr = new StringTokenizer(value.toString());
                while (itr.hasMoreTokens()) {
                    word.set(itr.nextToken());
                    context.write(word, one);
                }
            }
        }

        // Reducer (also used as combiner): sums the counts for each word.
        public static class IntSumReducer extends
                Reducer<Text, IntWritable, Text, IntWritable> {

            private IntWritable result = new IntWritable();

            @Override
            public void reduce(Text key, Iterable<IntWritable> values,
                    Context context) throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            // fs.defaultFS replaces the deprecated fs.default.name key in Hadoop 2.x.
            System.out.println("url:" + conf.get("fs.defaultFS"));
            String[] otherArgs = new GenericOptionsParser(conf, args)
                    .getRemainingArgs();
            if (otherArgs.length != 2) {
                System.err.println("Usage: wordcount <in> <out>");
                System.exit(2);
            }
            // Job.getInstance replaces the deprecated Job constructor.
            Job job = Job.getInstance(conf, "word count");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(IntSumReducer.class);
            job.setReducerClass(IntSumReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
            FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

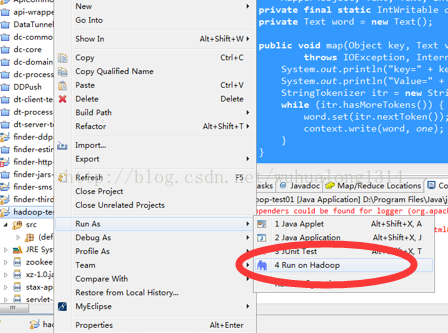
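To see what the mapper and reducer compute without a cluster, the same logic can be sketched in plain Java. This is only an illustration under simplifying assumptions: it runs in one process, uses no Hadoop classes, and the class name LocalWordCount is hypothetical.

```java
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Plain-Java sketch of the word count logic: tokenizing plays the role of
// the map step, and merging the per-word counts plays the role of the reduce step.
class LocalWordCount {

    static Map<String, Integer> count(String... lines) {
        // TreeMap keeps the words sorted, similar to the sorted keys a reducer sees.
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                // Equivalent to emitting (word, 1) and summing per word.
                counts.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // prints {hadoop=1, hello=2, world=1}
        System.out.println(count("hello hadoop", "hello world"));
    }
}
```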

Next, run the class directly in MyEclipse with Run on Hadoop.

If it fails with an error such as

(null) entry in command string: null chmod 0700

copy hadoop.dll from hadoop\bin to the c:\windows\system32 directory.

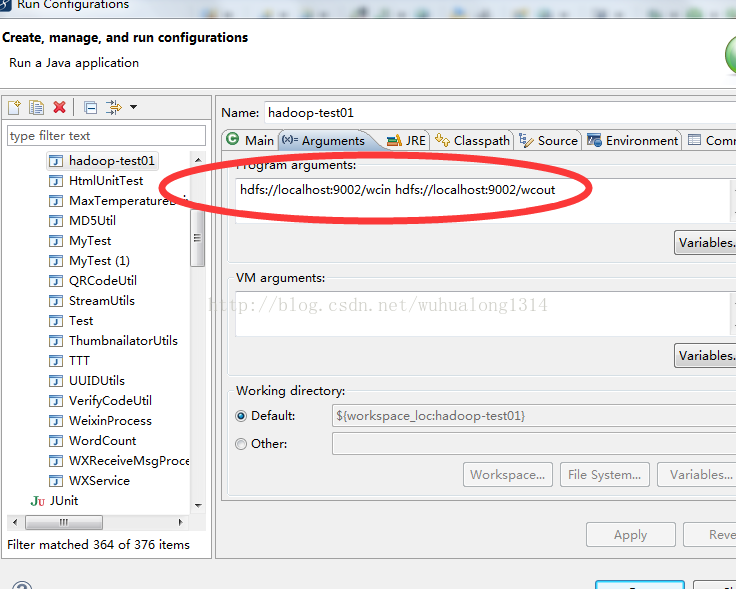
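Before copying files around, it can help to check which native files are actually present. The sketch below is an assumption-laden diagnostic, not part of Hadoop: the class name NativeFilesCheck is hypothetical, it reads the HADOOP_HOME environment variable, and it also looks for winutils.exe, which is not mentioned in the steps above but is commonly needed by Hadoop on Windows.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Diagnostic sketch: report which Windows native files are missing
// from <hadoopHome>\bin.
class NativeFilesCheck {

    static List<String> missing(Path hadoopHome) {
        List<String> result = new ArrayList<>();
        // winutils.exe is an assumption; only hadoop.dll appears in the original steps.
        for (String name : new String[] {"hadoop.dll", "winutils.exe"}) {
            if (!Files.isRegularFile(hadoopHome.resolve("bin").resolve(name))) {
                result.add(name);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        String home = System.getenv("HADOOP_HOME");
        if (home == null) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        System.out.println("Missing from bin: " + missing(Paths.get(home)));
    }
}
```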

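Running the WordCount above also requires two runtime arguments: the input folder and the output folder.

Run Configuration

First create the wcin directory and upload several small .txt files into it: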

F:\study\hadoop\hadoop-2.6.5\sbin>hadoop fs -mkdir hdfs://localhost:9002/wcin

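F:\study\hadoop\hadoop-2.6.5\sbin>hadoop fs -put c:\a.txt hdfs://localhost:9002/wcin/wc.txt

The wcout directory does not need to be created. It is created automatically when the job runs, and the job fails if it already exists.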
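If there are no small text files at hand, a few can be generated locally and then uploaded with hadoop fs -put as shown above. The following is a minimal sketch; the class name SampleFiles, the file names wc1.txt, wc2.txt, and so on, and the sample sentences are all invented for illustration.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

// Sketch: write a handful of small .txt files into a local directory
// so there is something to upload into wcin.
class SampleFiles {

    static List<Path> write(Path dir, String... contents) throws IOException {
        Files.createDirectories(dir);
        List<Path> written = new ArrayList<>();
        for (int i = 0; i < contents.length; i++) {
            // Hypothetical naming scheme: wc1.txt, wc2.txt, ...
            Path file = dir.resolve("wc" + (i + 1) + ".txt");
            Files.write(file, contents[i].getBytes(StandardCharsets.UTF_8));
            written.add(file);
        }
        return written;
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("wcin");
        for (Path p : write(dir, "hello hadoop", "hello world", "hadoop mapreduce")) {
            System.out.println(p);
        }
    }
}
```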

The result is shown in the screenshot.

Open wcout

Download the output file and open it: it contains the count for each word.
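With the default text output format, each line of the output file (typically named part-r-00000) holds a word and its count separated by a tab. A downloaded file can be read back as sketched below; the class name OutputParser and the sample lines are illustrative assumptions, not actual results from this job.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Sketch: parse "word<TAB>count" lines from a downloaded output file.
class OutputParser {

    static Map<String, Integer> parse(List<String> lines) {
        // LinkedHashMap preserves the order in which lines appear in the file.
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue; // skip blank or malformed lines
            }
            counts.put(line.substring(0, tab),
                    Integer.parseInt(line.substring(tab + 1).trim()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample lines in the same shape as the job output.
        List<String> lines = Arrays.asList("hadoop\t1", "hello\t2", "world\t1");
        System.out.println(parse(lines));
    }
}
```

To parse a real file, the lines could be loaded with Files.readAllLines from java.nio.file and passed to parse.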

Viewing the file system in Eclipse