Prepare the input file:

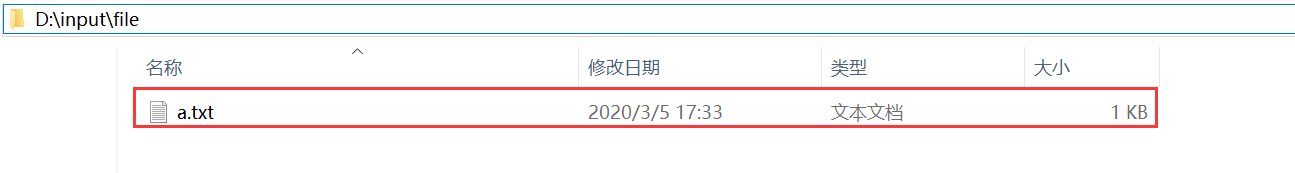

On the D: drive, create a text file named a.txt in the D:\input\file directory.

Edit a.txt so that it contains the following:

hadoop

flume

spark

hive

flink
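If you would rather create the input file from code than by hand, the following is a minimal sketch using only the JDK. The class name `PrepareInputFile` and the helper `writeSampleFile` are hypothetical names introduced for illustration, and the sketch assumes the file holds one word per line.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper, not part of the original tutorial code.
class PrepareInputFile {

    // Creates the directory if needed, writes the five sample words
    // (one per line, an assumption) to <dir>/a.txt, and returns the file path.
    static Path writeSampleFile(Path dir) throws IOException {
        Files.createDirectories(dir);
        List<String> words = Arrays.asList("hadoop", "flume", "spark", "hive", "flink");
        return Files.write(dir.resolve("a.txt"), words, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        // Defaults to the directory used in this tutorial; pass another path as the first argument.
        Path dir = Paths.get(args.length > 0 ? args[0] : "D:\\input\\file");
        System.out.println("Wrote " + writeSampleFile(dir));
    }
}
```

Running it with no arguments targets D:\input\file, so it only makes sense as-is on a Windows machine with a D: drive.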

The code implementation:

    package xuwei.tech;

    import org.apache.flink.api.common.functions.FlatMapFunction;
    import org.apache.flink.api.java.ExecutionEnvironment;
    import org.apache.flink.api.java.operators.AggregateOperator;
    import org.apache.flink.api.java.operators.DataSource;
    import org.apache.flink.api.java.tuple.Tuple2;
    import org.apache.flink.util.Collector;

    /**
     * Batch word count implemented in Java.
     */
    public class BatchWordCountJava {

        public static void main(String[] args) throws Exception {
            // Get the Flink execution environment
            ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

            // Input directory and output path
            String inputpath = "D:\\input\\file";
            String outPut = "D:\\input\\result";

            // Read the file contents
            DataSource<String> text = env.readTextFile(inputpath);

            // Emit (word, 1) pairs, group by the word (field 0), and sum the counts (field 1)
            AggregateOperator<Tuple2<String, Integer>> counts = text.flatMap(new Tockenizer()).groupBy(0).sum(1);

            // Write one record per line, with a space between the word and its count
            counts.writeAsCsv(outPut, "\n", " ");

            env.execute("batch word count");
        }

        public static class Tockenizer implements FlatMapFunction<String, Tuple2<String, Integer>> {

            @Override
            public void flatMap(String value, Collector<Tuple2<String, Integer>> out) throws Exception {
                // Lowercase the line and split it on runs of non-word characters
                String[] tokens = value.toLowerCase().split("\\W+");
                for (String token : tokens) {
                    // Skip the empty token produced when a line starts with a separator
                    if (token.length() > 0) {
                        out.collect(new Tuple2<>(token, 1));
                    }
                }
            }
        }
    }

Run the program. The console prints the following:

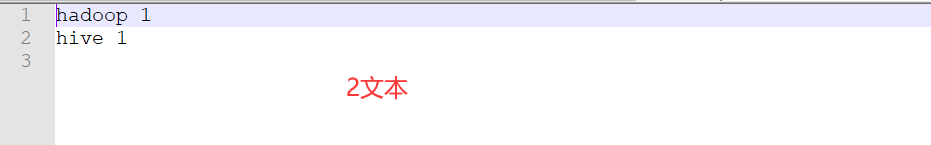

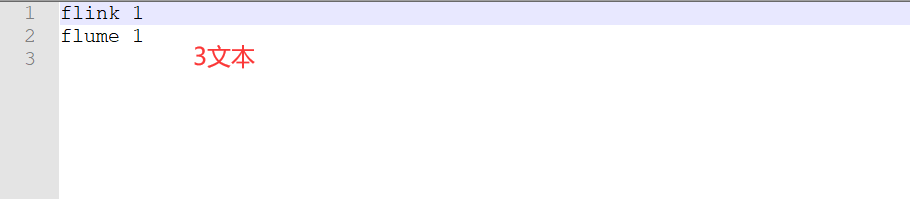

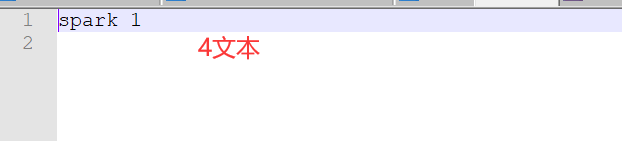

The job writes the result data to the output file.

Notice that the data in the output has also been sorted.
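To cross-check the result without a Flink runtime, the tokenizing and counting logic can be reproduced in plain Java. This is only a sketch: `LocalWordCountSketch` and `countWords` are hypothetical names, and the sorted order here comes from the `TreeMap` chosen for this example, which is not an explanation of why Flink's output is sorted.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-in for the Flink job, for checking expected counts locally.
class LocalWordCountSketch {

    // Applies the same tokenization as Tockenizer.flatMap, then sums a count
    // of 1 per token, which is what groupBy(0).sum(1) computes in the job.
    static Map<String, Integer> countWords(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // TreeMap iterates keys in sorted order
        for (String line : lines) {
            for (String token : line.toLowerCase().split("\\W+")) {
                if (token.length() > 0) {
                    counts.merge(token, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Assumes a.txt holds the five sample words, one per line.
        List<String> lines = Arrays.asList("hadoop", "flume", "spark", "hive", "flink");
        // Prints one "word count" pair per line, the layout writeAsCsv(outPut, "\n", " ") uses.
        countWords(lines).forEach((word, count) -> System.out.println(word + " " + count));
    }
}
```

For the sample input this prints `flink 1`, `flume 1`, `hadoop 1`, `hive 1`, and `spark 1`, each on its own line.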