Updated: 2019-10-1

(1) NCCL no longer needs to be specified explicitly.

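(2) The Bazel version must be lower than 0.27 (written as 2.27 in the original note, which does not match Bazel's 0.x release numbering at the time).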

(3) The gcc version must be higher than 4.8.5 and lower than 7.1.
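A bound like the one in note (3) can be checked before starting a long build. The sketch below is only an illustration: `version_lt` and `version_between` are hypothetical helper names, and the comparison assumes GNU `sort -V` is available. The same helpers could be applied to the Bazel version with whatever upper bound applies to your TensorFlow release.

```shell
# Succeeds if $1 is strictly lower than $2 under version ordering.
# Assumes GNU sort, whose -V option sorts version strings.
version_lt() {
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

# Succeeds if low < version < high. Arguments: version, low, high.
version_between() {
  version_lt "$2" "$1" && version_lt "$1" "$3"
}

# Check the gcc found on PATH against the bounds from note (3), if gcc exists.
if command -v gcc >/dev/null 2>&1; then
  # Newer gcc prints only the major version for -dumpversion, so ask for the
  # full version first and fall back to -dumpversion on older compilers.
  gcc_version="$(gcc -dumpfullversion -dumpversion 2>/dev/null || true)"
  if [ -n "$gcc_version" ] && version_between "$gcc_version" 4.8.5 7.1; then
    echo "gcc $gcc_version is within (4.8.5, 7.1)"
  else
    echo "gcc ${gcc_version:-unknown} is outside (4.8.5, 7.1)"
  fi
fi
```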

Updated: 2019-6-10


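TensorFlow has been updated to 1.13. The official Linux binaries are built against glibc 2.23, whereas CentOS only provides glibc 2.17, so after installing with pip install tensorflow-gpu, importing TensorFlow fails with: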

ImportError: /lib64/libc.so.6: version `GLIBC_2.23' not found (required by /usr/local/python3.6/lib/python3.6/site-packages/tensorflow/python/_pywrap_tensorflow.so)

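Upgrading glibc is not an option: after the upgrade the system may no longer boot. The only way out is to build TensorFlow from source, which avoids the error.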
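To confirm that the mismatch really is the glibc version before committing to a source build, the system's glibc can be compared with the version named in the ImportError. This is a minimal sketch: it assumes a glibc-based system where `getconf GNU_LIBC_VERSION` is supported and GNU `sort -V` is available; the variable names are illustrative.

```shell
# glibc version required by the official wheel, taken from the ImportError above.
required_glibc="2.23"

# Ask the C library for its version; on glibc systems this prints e.g. "glibc 2.17".
# Falls back to "unknown" where getconf does not know GNU_LIBC_VERSION.
system_glibc="$(getconf GNU_LIBC_VERSION 2>/dev/null | awk '{print $2}' || true)"
system_glibc="${system_glibc:-unknown}"

# Version-aware comparison: with sort -V the lower version sorts first.
lowest="$(printf '%s\n%s\n' "$required_glibc" "$system_glibc" | sort -V | head -n 1)"

if [ "$system_glibc" = "unknown" ]; then
  echo "could not determine the system glibc version"
elif [ "$lowest" = "$required_glibc" ]; then
  echo "glibc $system_glibc satisfies the required $required_glibc"
else
  echo "glibc $system_glibc is older than the required $required_glibc"
fi
```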

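The source build process is described below; the version is 1.13, the latest at the time:

For NCCL, CUDA, and cuDNN, use the release just before the latest one. For example, if the latest CUDA is 10.1, use 10.0.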

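1. Prepare CUDA

This step needs little explanation, since there are many tutorials online. I used CUDA 10.0 and cuDNN 7.5.0.

For reference: https://www.jianshu.com/p/a201b91b3d96

Note: be sure to remember your CUDA and cuDNN versions and the CUDA install location, because they are needed later.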
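One way to record these values is to read them from the installation itself. The sketch below assumes the CUDA prefix is in `CUDA_HOME` (defaulting to `/usr/local/cuda`, which may not match your system) and that cuDNN 7.x keeps its version macros in `include/cudnn.h`; `cudnn_version_from_header` is a hypothetical helper name.

```shell
# Print the cuDNN version (major.minor.patch) from the macros in a cudnn.h header.
cudnn_version_from_header() {
  awk '/^#define CUDNN_MAJOR /      {major = $3}
       /^#define CUDNN_MINOR /      {minor = $3}
       /^#define CUDNN_PATCHLEVEL / {patch = $3}
       END { if (major != "") print major "." minor "." patch }' "$1"
}

# CUDA_HOME is an assumed install prefix; replace it with your own location.
CUDA_HOME="${CUDA_HOME:-/usr/local/cuda}"

# nvcc reports the toolkit release on the line containing "release".
if [ -x "$CUDA_HOME/bin/nvcc" ]; then
  "$CUDA_HOME/bin/nvcc" --version | grep release || true
fi

# Report cuDNN only if the header is where this sketch expects it.
if [ -f "$CUDA_HOME/include/cudnn.h" ]; then
  echo "cuDNN $(cudnn_version_from_header "$CUDA_HOME/include/cudnn.h")"
fi
```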

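2. Prepare NCCL

NCCL is required by the GPU build of TensorFlow. The current version is 2.4.2; download it from: https://developer.nvidia.com/nccl/nccl-download

The download should be an rpm file. Install it with: rpm -ivh nccl-repo-rhel7-2.4.2-ga-cuda10.0-1-1.x86_64.rpm

Oddly, this does not install NCCL itself; it only unpacks three rpm files. Running rpm -qpl nccl-repo-rhel7-2.4.2-ga-cuda10.0-1-1.x86_64.rpm

shows where those files were placed:

Go to that folder and install the three rpm files. They should install to /usr/lib64 by default; if you are unsure, check the install location with rpm -qpl xxx.rpm.

Note: remember the NCCL version and install location.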
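After installing the three packages, it helps to confirm where the header and library actually landed, since the configure step needs that location. The sketch below searches the directories mentioned above; `find_nccl` is a hypothetical helper, and `-maxdepth` assumes GNU find.

```shell
# Search the given directories for the NCCL header and shared library.
# Prints every match, one path per line; prints nothing if none is found.
find_nccl() {
  for dir in "$@"; do
    [ -d "$dir" ] || continue
    find "$dir" -maxdepth 1 \( -name 'nccl.h' -o -name 'libnccl.so*' \) 2>/dev/null
  done
}

# Directories from the text: the rpm files are expected to install under /usr.
find_nccl /usr/lib64 /usr/include
```

If nothing is printed, `rpm -qa | grep -i nccl` shows whether any NCCL package is installed at all.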

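3. Install Bazel

Bazel is Google's build tool, and TensorFlow is built with it, so it must be installed.

Download link: https://github.com/bazelbuild/bazel/releases

Choose the latest release and download its -dist.zip source archive:

After downloading, create a folder named bazel, move the file into it, and unzip it: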

unzip bazel-0.24.1-dist.zip

After unzipping, compile:

./compile.sh

After a while it reports success. The compiled binary is in the bazel/output directory:

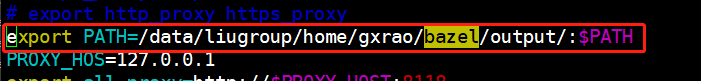

Add that directory to PATH in ~/.bashrc:

The directory must be exactly right. Then run: source ~/.bashrc
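The lines added to ~/.bashrc might look like the sketch below. `BAZEL_OUTPUT_DIR` is an assumed variable, and its default of `$HOME/bazel/output` is only a guess at where the bazel folder was created; point it at the output directory of your own build.

```shell
# BAZEL_OUTPUT_DIR is an assumed location: the output directory inside the
# folder where compile.sh was run. Adjust it to your own path.
BAZEL_OUTPUT_DIR="${BAZEL_OUTPUT_DIR:-$HOME/bazel/output}"

# Prepend the directory to PATH only if it is not already there, so that
# sourcing ~/.bashrc repeatedly does not keep growing PATH.
case ":$PATH:" in
  *":$BAZEL_OUTPUT_DIR:"*) ;;
  *) export PATH="$BAZEL_OUTPUT_DIR:$PATH" ;;
esac

# Show which bazel binary the shell now resolves, if any.
command -v bazel || echo "bazel not found; check BAZEL_OUTPUT_DIR"
```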

Type bazel on the command line; output like the following means it succeeded:

4. Install TensorFlow

git clone https://github.com/tensorflow/tensorflow.git

cd tensorflow

Start configuring the build:

./configure

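Note: answer y to the CUDA- and NCCL-related options and n to all the others. Prompts that ask for a value (versions, paths, the gcc location, optimization flags) expect that value rather than y/n; press Enter to accept the default: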

Found possible Python library paths:

/DATA/119/gxrao2/usr/local/conda3/lib/python3.7/site-packages

Please input the desired Python library path to use. Default is [/DATA/119/gxrao2/usr/local/conda3/lib/python3.7/site-packages]

Do you wish to build TensorFlow with XLA JIT support? [Y/n]: n

No XLA JIT support will be enabled for TensorFlow.

Do you wish to build TensorFlow with OpenCL SYCL support? [y/N]: n

No OpenCL SYCL support will be enabled for TensorFlow.

Do you wish to build TensorFlow with ROCm support? [y/N]: n

No ROCm support will be enabled for TensorFlow.

Do you wish to build TensorFlow with CUDA support? [y/N]: y

CUDA support will be enabled for TensorFlow.

Do you wish to build TensorFlow with TensorRT support? [y/N]: n

No TensorRT support will be enabled for TensorFlow.

Could not find any cuda.h matching version '' in any subdirectory:

''

'include'

'include/cuda'

'include/*-linux-gnu'

'extras/CUPTI/include'

'include/cuda/CUPTI'

of:

'/lib64'

'/usr'

'/usr/lib'

'/usr/lib64'

'/usr/lib64/mysql'

'/usr/lib64/qt-3.3/lib'

'/usr/lib64/xulrunner'

Asking for detailed CUDA configuration...

Please specify the CUDA SDK version you want to use. [Leave empty to default to CUDA 10]: 10.0

Please specify the cuDNN version you want to use. [Leave empty to default to cuDNN 7]: 7.5

Please specify the locally installed NCCL version you want to use. [Leave empty to use http://github.com/nvidia/nccl]: 2.4

Please specify the comma-separated list of base paths to look for CUDA libraries and headers. [Leave empty to use the default]: /DATA/119/gxrao2/usr/local/cuda-10.0,/usr

Found CUDA 10.0 in:

/DATA/119/gxrao2/usr/local/cuda-10.0/lib64

/DATA/119/gxrao2/usr/local/cuda-10.0/include

Found cuDNN 7 in:

/DATA/119/gxrao2/usr/local/cuda-10.0/lib64

/DATA/119/gxrao2/usr/local/cuda-10.0/include

Found NCCL 2 in:

/usr/lib64

/usr/include

Please specify a list of comma-separated CUDA compute capabilities you want to build with.

You can find the compute capability of your device at: https://developer.nvidia.com/cuda-gpus.

Please note that each additional compute capability significantly increases your build time and binary size, and that TensorFlow only supports compute capabilities >= 3.5 [Default is: 6.1,6.1,6.1,6.1,6.1,6.1,6.1,6.1]:

Do you want to use clang as CUDA compiler? [y/N]: n

nvcc will be used as CUDA compiler.

Please specify which gcc should be used by nvcc as the host compiler. [Default is /usr/local/bin/gcc]: y

Invalid gcc path. y cannot be found.

Please specify which gcc should be used by nvcc as the host compiler. [Default is /usr/local/bin/gcc]: /usr/bin/gcc

Do you wish to build TensorFlow with MPI support? [y/N]: n

No MPI support will be enabled for TensorFlow.

Please specify optimization flags to use during compilation when bazel option "--config=opt" is specified [Default is -march=native -Wno-sign-compare]: n

Would you like to interactively configure ./WORKSPACE for Android builds? [y/N]: n

Not configuring the WORKSPACE for Android builds.

Preconfigured Bazel build configs. You can use any of the below by adding "--config=<>" to your build command. See .bazelrc for more details.

--config=mkl # Build with MKL support.

--config=monolithic # Config for mostly static monolithic build.

--config=gdr # Build with GDR support.

--config=verbs # Build with libverbs support.

--config=ngraph # Build with Intel nGraph support.

--config=numa # Build with NUMA support.

--config=dynamic_kernels # (Experimental) Build kernels into separate shared objects.

Preconfigured Bazel build configs to DISABLE default on features:

--config=noaws # Disable AWS S3 filesystem support.

--config=nogcp # Disable GCP support.

--config=nohdfs # Disable HDFS support.

--config=noignite # Disable Apache Ignite support.

--config=nokafka # Disable Apache Kafka support.

--config=nonccl # Disable NVIDIA NCCL support.

Configuration finished
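The same answers can usually be supplied up front through environment variables, which makes the configuration repeatable. The sketch below mirrors the answers in the transcript above; the variable names are the ones read by TensorFlow 1.x's configure script as far as I know, so treat them as assumptions and check configure.py in your checkout. The paths are the author's and will differ on your machine.

```shell
# Features: enable CUDA, disable everything else, as in the transcript.
export TF_NEED_CUDA=1
export TF_ENABLE_XLA=0
export TF_NEED_OPENCL_SYCL=0
export TF_NEED_ROCM=0
export TF_NEED_TENSORRT=0
export TF_NEED_MPI=0
export TF_CUDA_CLANG=0
export TF_SET_ANDROID_WORKSPACE=0

# Versions recorded in steps 1 and 2.
export TF_CUDA_VERSION=10.0
export TF_CUDNN_VERSION=7.5
export TF_NCCL_VERSION=2.4

# Base paths searched for CUDA, cuDNN, and NCCL (the author's paths).
export TF_CUDA_PATHS=/DATA/119/gxrao2/usr/local/cuda-10.0,/usr

# GPU compute capability and host compiler, as chosen in the transcript.
export TF_CUDA_COMPUTE_CAPABILITIES=6.1
export GCC_HOST_COMPILER_PATH=/usr/bin/gcc

# Optimization flags: the default offered by the prompt.
export CC_OPT_FLAGS="-march=native -Wno-sign-compare"
```

With these exported in the shell, running ./configure from the source root should skip the corresponding prompts.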

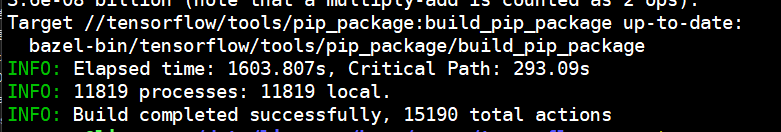

(base) gxrao2@mic119:/DATA/119/gxrao2/tf_install/tensorflow$ bazel build --config=opt --config=cuda //tensorflow/tools/pip_package:build_pip_package

WARNING: Output base '/home/gxrao2/.cache/bazel/_bazel_gxrao2/6db07e2ca57d5a7ff7347aece9f0c169' is on NFS. This may lead to surprising failures and undetermined behavior.

Starting local Bazel server and connecting to it...

Note: build with the following command:

bazel build --config=opt --config=cuda //tensorflow/tools/pip_package:build_pip_package

Then wait for it to finish, which takes a while. If it succeeds, the hard part is done.
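The build log above warns that the Bazel output base is on NFS. If the build fails in odd ways, one option is to move Bazel's output to a local disk with the `--output_user_root` startup option. The sketch below wraps the build command from the text; `BAZEL_LOCAL_ROOT` and `build_tf` are hypothetical names, and the default directory under /tmp is only an assumption about where local storage lives.

```shell
# BAZEL_LOCAL_ROOT is an assumed directory on a local (non-NFS) disk.
BAZEL_LOCAL_ROOT="${BAZEL_LOCAL_ROOT:-/tmp/bazel-$(id -un)}"

# Wrap the build command from the text. --output_user_root is a Bazel startup
# option, so it must come before the "build" subcommand.
build_tf() {
  bazel --output_user_root="$BAZEL_LOCAL_ROOT" build \
    --config=opt --config=cuda \
    //tensorflow/tools/pip_package:build_pip_package
}
```

Call `build_tf` from the root of the TensorFlow checkout after ./configure has finished.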

Package the build output as a whl file:

bazel-bin/tensorflow/tools/pip_package/build_pip_package /tmp/tensorflow_pkg

Install the file with pip:

pip install /tmp/tensorflow_pkg/*.whl
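A quick import test confirms that the wheel works and that the glibc error is gone. This is a minimal sketch for TensorFlow 1.x: `check_tf` is a hypothetical helper, and it assumes `python` on PATH is the interpreter the wheel was installed into (pass another interpreter as the first argument otherwise).

```shell
# Import TensorFlow with the given interpreter (default: python) and print its
# version plus whether a GPU is visible. Returns non-zero if the import fails.
check_tf() {
  "${1:-python}" -c '
import tensorflow as tf
print("TensorFlow", tf.__version__)
print("GPU available:", tf.test.is_gpu_available())
'
}
```

Run `check_tf` from a directory outside the TensorFlow source tree, because importing from inside the checkout picks up the source directory instead of the installed package.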

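5. Troubleshooting a failed build

- Bazel version: TensorFlow only builds with certain Bazel versions. When this was first written, the latest TensorFlow built fine with the latest Bazel; see the update at the top for the upper bound that applied later.

- The install locations and versions of CUDA, cuDNN, and NCCL must be correct, and must be given correctly during configuration. Check the NCCL install location in particular, otherwise configuration will not find it.

- Do not enable options you do not need, and make sure every answer in the configuration step is correct.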