On a freshly deployed hadoop-2.6.0-cdh5.16.2 cluster on CentOS 7.4, startup prints a warning: the native Hadoop library is missing.

[hadoop@node131 sbin]$ start-all.sh

20/03/07 18:27:03 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

A command found online confirms that no native libraries are loaded:

[hadoop@node131 sbin]$ hadoop checknative -a

20/03/07 18:36:04 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Native library checking:

hadoop: false

zlib: false

snappy: false

lz4: false

bzip2: false

openssl: false

20/03/07 18:36:04 INFO util.ExitUtil: Exiting with status 1
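The report above can also be summarized mechanically. The following is only a minimal sketch: the function name missing_native_libs is hypothetical (not part of Hadoop), and it assumes the "name: false" line format shown in the output above.

```shell
# Hypothetical helper: reads `hadoop checknative -a` output on stdin and
# prints the name of every library that is reported as "false".
# Assumes the "name: true|false ..." line format shown in the transcript.
missing_native_libs() {
  awk '/^[a-z0-9]+: +false/ { sub(/:$/, "", $1); print $1 }'
}
```

One possible use is `hadoop checknative -a 2>&1 | missing_native_libs`, which would list one library name per line.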

The build procedure in the official documentation looks fairly simple:

https://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-common/NativeLibraries.html

http://archive.cloudera.com/cdh5/cdh/5/hadoop/hadoop-project-dist/hadoop-common/NativeLibraries.html

The documentation states: "It is mandatory to install both the zlib and gzip development packages on the target platform in order to build the native hadoop library."

First install JDK 1.8 and Maven, then add both to the environment variables and PATH:

vi ~/.bashrc

export JAVA_HOME=/usr/java/jdk1.8.0_162

export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH

export CLASSPATH=.:${JAVA_HOME}/lib:${JAVA_HOME}/jre/lib:$CLASSPATH

export PATH=$PATH:/home/hadoop/apache-maven-3.3.9/bin

Then install the required build tools:

yum install -y gcc autoconf automake libtool zlib-devel
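Several of the failures later in this post come down to a tool that is not on the PATH. A small pre-flight check can catch those before a long Maven run. This is a sketch only; missing_tools is a hypothetical helper name, and the tool list in the usage note is an assumption drawn from the tools this post ends up needing.

```shell
# Hypothetical helper: prints each named command that cannot be found on PATH.
# Prints nothing when every tool is available.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}
```

For example, `missing_tools java mvn gcc g++ autoconf automake libtool cmake protoc` would print only the names that still need to be installed.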

Start the build.

1. JDK dependency problem

[hadoop@node131 src]$ mvn package -Pdist,native -DskipTests -Dtar -X

[ERROR] location: class ExcludePrivateAnnotationsStandardDoclet

[ERROR] /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-annotations/src/main/java/org/apache/hadoop/classification/tools/ExcludePrivateAnnotationsStandardDoclet.java:[56,11] error: cannot find symbol

[ERROR] -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:2.5.1:compile (default-compile) on project hadoop-annotations: Compilation failure

The offending code:

import com.sun.javadoc.RootDoc;

....

public static boolean validOptions(String[][] options, DocErrorReporter reporter) {
  StabilityOptions.validOptions(options, reporter);
  String[][] filteredOptions = StabilityOptions.filterOptions(options);
  return Standard.validOptions(filteredOptions, reporter);  // line 56
}

This code imports classes from the com.sun packages, so the location of the local tools.jar has to be declared explicitly. Edit the POM as follows:

vi hadoop-common-project/hadoop-annotations/pom.xml

Add the local tools.jar as a dependency:

<dependency>
  <groupId>com.sun</groupId>
  <artifactId>tools</artifactId>
  <version>1.8.0</version>
  <scope>system</scope>
  <systemPath>${java.home}/../lib/tools.jar</systemPath>
</dependency>

2. Javadoc tag problem (1)

[hadoop@node131 src]$ mvn package -Pdist,native -DskipTests -Dtar -X

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-javadoc-plugin:2.8.1:jar (module-javadocs) on project hadoop-annotations: MavenReportException: Error while creating archive:

[ERROR] Exit code: 1 - /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-annotations/src/main/java/org/apache/hadoop/classification/InterfaceStability.java:27: error: unexpected end tag: </ul>

[ERROR] * </ul>

[ERROR] ^

[ERROR]

[ERROR] Command line was: /usr/java/jdk1.8.0_162/jre/../bin/javadoc @options @packages

/**

* Annotation to inform users of how much to rely on a particular package,

* class or method not changing over time.

* </ul>

*/

Change the comment above to:

/**

* Annotation to inform users of how much to rely on a particular package,

* class or method not changing over time.

*<ul> </ul>

*/

That is, add the opening <ul> that the markup rules require. Sigh...

3. Missing protobuf

[hadoop@node131 src]$ mvn package -Pdist,native -DskipTests -Dtar -X

[ERROR] Failed to execute goal org.apache.hadoop:hadoop-maven-plugins:2.6.0-cdh5.16.2:protoc (compile-protoc) on project hadoop-common: org.apache.maven.plugin.MojoExecutionException: 'protoc --version' did not return a version -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.hadoop:hadoop-maven-plugins:2.6.0-cdh5.16.2:protoc (compile-protoc) on project hadoop-common: org.apache.maven.plugin.MojoExecutionException: 'protoc --version' did not return a version

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:212)

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:153)

This version requires downloading and installing protobuf-2.5.0.tar.gz.

Download: https://pan.baidu.com/s/1pJlZubT

(Reference: https://www.cnblogs.com/cenzhongman/p/7191094.html)

tar -zxvf protobuf-2.5.0.tar.gz

[root@node131 protobuf-2.5.0]# ./configure --prefix=/usr/local/protobuf/

configure: error: in `/home/hadoop/protobuf-2.5.0':

configure: error: C++ preprocessor "/lib/cpp" fails sanity check

See `config.log' for more details

configure: error: C++ preprocessor "/lib/cpp" fails sanity check

The C++ compiler and headers are missing:

yum install -y glibc-headers gcc-c++

Continue:

[root@node131 protobuf-2.5.0]# ./configure --prefix=/usr/local/protobuf/

...

checking whether -pthread is sufficient with -shared... yes

configure: creating ./config.status

config.status: creating Makefile

config.status: creating scripts/gtest-config

config.status: creating build-aux/config.h

config.status: executing depfiles commands

config.status: executing libtool commands

[root@node131 protobuf-2.5.0]# make && make install

....

/zero_copy_stream_impl.h google/protobuf/io/zero_copy_stream_impl_lite.h '/usr/local/protobuf/include/google/protobuf/io'

make[3]: Leaving directory `/home/hadoop/protobuf-2.5.0/src'

make[2]: Leaving directory `/home/hadoop/protobuf-2.5.0/src'

make[1]: Leaving directory `/home/hadoop/protobuf-2.5.0/src'

Add protoc to the PATH and verify:

[root@node131 protobuf-2.5.0]# vi /etc/profile

export PATH=/usr/local/protobuf/bin:$PATH

source /etc/profile

[root@node131 protobuf-2.5.0]# protoc --version

libprotoc 2.5.0
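Since this Hadoop version needs exactly protobuf 2.5.0, the version check above can be turned into a guard before starting the build. This is a sketch under the assumption that `protoc --version` prints a single "libprotoc X.Y.Z" line, as in the output above; check_protoc_version is a hypothetical name.

```shell
# Hypothetical helper: reads `protoc --version` output on stdin and succeeds
# only if the reported version equals the expected one (first argument).
check_protoc_version() {
  expected=$1
  actual=$(awk '{ print $2; exit }')   # second field of "libprotoc 2.5.0"
  [ "$actual" = "$expected" ]
}
```

It could be used as `protoc --version | check_protoc_version 2.5.0 || echo "wrong protoc version"`.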

4. Missing cmake and related tools

[hadoop@node131 src]$ mvn package -Pdist,native -DskipTests -Dtar -X

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-common: An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program "cmake" (in directory "/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/native"): error=2, No such file or directory

[ERROR] around Ant part ...<exec failonerror="true" dir="/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/native" executable="cmake">... @ 4:143 in /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/antrun/build-main.xml

[ERROR] -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-common: An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program "cmake" (in directory "/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/native"): error=2, No such file or directory

around Ant part ...<exec failonerror="true" dir="/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/native" executable="cmake">... @ 4:143 in /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-common/target/antrun/build-main.xml

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:212)

yum install -y cmake

5. Javadoc tag problem (2)

[hadoop@node131 src]$ mvn package -Pdist,native -DskipTests -Dtar -X

...

^

/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/src/main/java/org/apache/hadoop/oncrpc/RpcProgram.java:68: error: @param name not found

* @param DatagramSocket registrationSocket if not null, use this socket to

^

Command line was: /usr/java/jdk1.8.0_162/jre/../bin/javadoc @options @packages

Refer to the generated Javadoc files in '/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/target' dir.

at org.apache.maven.plugin.javadoc.AbstractJavadocMojo.executeJavadocCommandLine(AbstractJavadocMojo.java:5077)

at org.apache.maven.plugin.javadoc.AbstractJavadocMojo.executeReport(AbstractJavadocMojo.java:1997)

at org.apache.maven.plugin.javadoc.JavadocJar.execute(JavadocJar.java:179)

... 22 more

[ERROR]

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <goals> -rf :hadoop-nfs

[hadoop@node131 src]$

[hadoop@node131 src]$ ls ~/.m2/repository/org/apache/maven/plugins/maven-javadoc-plugin/2.9.1

maven-javadoc-plugin-2.9.1.jar

[hadoop@node131 src]$ vi /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/pom.xml

Inside the <build> <plugins> section, add:

<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-javadoc-plugin</artifactId>
  <version>2.9.1</version>
  <executions>
    <execution>
      <id>attach-javadocs</id>
      <goals>
        <goal>jar</goal>
      </goals>
      <configuration>
        <additionalparam>-Xdoclint:none</additionalparam>
      </configuration>
    </execution>
  </executions>
</plugin>

This had no effect:

cd /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/

mvn package -Pdist,native -DskipTests -Dtar -X

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 03:59 min

[INFO] Finished at: 2020-03-04T14:02:24+08:00

[INFO] Final Memory: 117M/333M

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-javadoc-plugin:2.9.1:jar (module-javadocs) on project hadoop-nfs: MavenReportException: Error while creating archive:

[ERROR] Exit code: 1 - /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/src/main/java/org/apache/hadoop/mount/MountdBase.java:51: warning: no description for @param

[ERROR] * @param program

[ERROR] ^

[ERROR] /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/src/main/java/org/apache/hadoop/mount/MountdBase.java:52: warning: no description for @throws

[ERROR] * @throws IOException

[ERROR] ^

......

[ERROR] Command line was: /usr/java/jdk1.8.0_162/jre/../bin/javadoc @options @packages

[ERROR]

[ERROR] Refer to the generated Javadoc files in '/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/target' dir.

[ERROR] -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-javadoc-plugin:2.9.1:jar (module-javadocs) on project hadoop-nfs: MavenReportException: Error while creating archive:

Exit code: 1 - /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/src/main/java/org/apache/hadoop/mount/MountdBase.java:51: warning: no description for @param

* @param program

^

/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-common-project/hadoop-nfs/src/main/java/org/apache/hadoop/mount/MountdBase.java:52: warning: no description for @throws

* @throws IOException

Let's just skip Javadoc.

-Dmaven.javadoc.skip=true tells Maven to skip Javadoc generation:

mvn package -Pdist,native -DskipTests -Dtar -Dmaven.javadoc.skip=true -X

[INFO] Apache Hadoop Main ................................. SUCCESS [ 3.119 s]

[INFO] Apache Hadoop Build Tools .......................... SUCCESS [ 1.818 s]

[INFO] Apache Hadoop Project POM .......................... SUCCESS [ 2.572 s]

[INFO] Apache Hadoop Annotations .......................... SUCCESS [ 4.089 s]

[INFO] Apache Hadoop Assemblies ........................... SUCCESS [ 3.028 s]

[INFO] Apache Hadoop Project Dist POM ..................... SUCCESS [ 6.993 s]

[INFO] Apache Hadoop Maven Plugins ........................ SUCCESS [ 51.139 s]

[INFO] Apache Hadoop MiniKDC .............................. SUCCESS [ 10.368 s]

[INFO] Apache Hadoop Auth ................................. SUCCESS [ 9.901 s]

[INFO] Apache Hadoop Auth Examples ........................ SUCCESS [ 4.333 s]

[INFO] Apache Hadoop Common ............................... SUCCESS [ 45.225 s]

[INFO] Apache Hadoop NFS .................................. SUCCESS [ 5.386 s]

[INFO] Apache Hadoop KMS .................................. SUCCESS [ 22.447 s]

[INFO] Apache Hadoop Common Project ....................... SUCCESS [ 0.200 s]

[INFO] Apache Hadoop HDFS ................................. FAILURE [ 01:17 h]

[INFO] Apache Hadoop HttpFS ............................... SKIPPED

[INFO] Apache Hadoop HDFS BookKeeper Journal .............. SKIPPED

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:2.5.1:compile (default-compile) on project hadoop-yarn-common: Compilation failure: Compilation failure:

[ERROR] /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java:[101,10] error: unreported exception Throwable; must be caught or declared to be thrown

[ERROR] /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java:[104,10] error: unreported exception Throwable; must be caught or declared to be thrown

[ERROR] /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java:[107,10] error: unreported exception Throwable; must be caught or declared to be thrown

[ERROR] -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:2.5.1:compile (default-compile) on project hadoop-yarn-common: Compilation failure

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:212)

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:153)

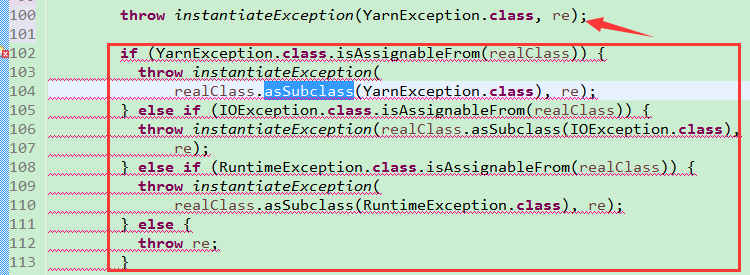

6. Exception-handling code problem

cp /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java.back

vi /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common/src/main/java/org/apache/hadoop/yarn/ipc/RPCUtil.java

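JDK 1.8 is in use here. To get the build to pass, I simply commented out the code as shown in the screenshot (image 1), so that the exception is thrown: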

7. Missing dependencies

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-pipes: An Ant BuildException has occured: exec returned: 1

[ERROR] around Ant part ...<exec failonerror="true" dir="/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-tools/hadoop-pipes/target/native" executable="cmake">... @ 5:133 in /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-tools/hadoop-pipes/target/antrun/build-main.xml

[ERROR] -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-pipes: An Ant BuildException has occured: exec returned: 1

around Ant part ...<exec failonerror="true" dir="/home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-tools/hadoop-pipes/target/native" executable="cmake">... @ 5:133 in /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-tools/hadoop-pipes/target/antrun/build-main.xml

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:212)

at org.apache.maven.lifecycle.internal.MojoExecutor.execute(MojoExecutor.java:153)

yum install -y zlib zlib-devel openssl openssl-devel
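After fixing a failure like this one, the whole reactor does not have to be rebuilt: the Maven error output earlier in this post suggests resuming with `-rf :<module>`. The helper below only prints such a command. It is a sketch; resume_build_cmd is a hypothetical name, and the flags are the ones already used in this post.

```shell
# Hypothetical helper: prints the build command used in this post, extended
# with Maven's -rf (resume from) option for the given module artifactId.
resume_build_cmd() {
  module=$1
  printf 'mvn package -Pdist,native -DskipTests -Dtar -Dmaven.javadoc.skip=true -rf :%s\n' "$module"
}
```

For the failure above, `resume_build_cmd hadoop-pipes` prints a command that resumes at the hadoop-pipes module. Printing instead of executing leaves room to review the command first.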

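8. JAR downloads are very slow, and Maven keeps switching between several overseas repositories: http://dynamodb-local.s3-website-us-west-2.amazonaws.com/release/com/amazonaws/aws-java-sdk-bundle/1.11.134/aws-java-sdk-bundle-1.11.134.jar

The download kept switching repositories and eventually failed.

Switch to a different repository: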

vi pom.xml

<repositories>
  <repository>
    <id>aliyun-repos</id>
    <name>aliyun-repos</name>
    <url>http://maven.aliyun.com/nexus/content/groups/public/</url>
    <releases>
      <enabled>true</enabled>
    </releases>
    <snapshots>
      <enabled>false</enabled>
    </snapshots>
  </repository>
</repositories>

main:

[exec] $ tar cf hadoop-2.6.0-cdh5.16.2.tar hadoop-2.6.0-cdh5.16.2

[exec] $ gzip -f hadoop-2.6.0-cdh5.16.2.tar

[exec]

[exec] Hadoop dist tar available at: /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-dist/target/hadoop-2.6.0-cdh5.16.2.tar.gz

[exec]

[INFO] Executed tasks

[INFO]

[INFO] --- maven-javadoc-plugin:2.8.1:jar (module-javadocs) @ hadoop-dist ---

[INFO] Skipping javadoc generation

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary:

[INFO]

[INFO] Apache Hadoop Main ................................. SUCCESS [ 2.478 s]

[INFO] Apache Hadoop Build Tools .......................... SUCCESS [ 1.348 s]

[INFO] Apache Hadoop Project POM .......................... SUCCESS [ 2.095 s]

[INFO] Apache Hadoop Annotations .......................... SUCCESS [ 1.334 s]

[INFO] Apache Hadoop Assemblies ........................... SUCCESS [ 0.716 s]

[INFO] Apache Hadoop Project Dist POM ..................... SUCCESS [ 2.975 s]

[INFO] Apache Hadoop Maven Plugins ........................ SUCCESS [ 4.385 s]

[INFO] Apache Hadoop MiniKDC .............................. SUCCESS [ 6.470 s]

[INFO] Apache Hadoop Auth ................................. SUCCESS [ 6.039 s]

[INFO] Apache Hadoop Auth Examples ........................ SUCCESS [ 2.419 s]

[INFO] Apache Hadoop Common ............................... SUCCESS [01:27 min]

[INFO] Apache Hadoop NFS .................................. SUCCESS [ 5.808 s]

[INFO] Apache Hadoop KMS .................................. SUCCESS [ 8.945 s]

[INFO] Apache Hadoop Common Project ....................... SUCCESS [ 0.158 s]

[INFO] Apache Hadoop HDFS ................................. SUCCESS [01:16 min]

[INFO] Apache Hadoop HttpFS ............................... SUCCESS [ 9.751 s]

[INFO] Apache Hadoop HDFS BookKeeper Journal .............. SUCCESS [ 2.181 s]

[INFO] Apache Hadoop HDFS-NFS ............................. SUCCESS [ 3.381 s]

[INFO] Apache Hadoop HDFS Project ......................... SUCCESS [ 0.153 s]

[INFO] hadoop-yarn ........................................ SUCCESS [ 0.136 s]

[INFO] hadoop-yarn-api .................................... SUCCESS [ 12.136 s]

[INFO] hadoop-yarn-common ................................. SUCCESS [ 10.451 s]

[INFO] hadoop-yarn-server ................................. SUCCESS [ 0.128 s]

[INFO] hadoop-yarn-server-common .......................... SUCCESS [ 3.963 s]

[INFO] hadoop-yarn-server-nodemanager ..................... SUCCESS [ 23.185 s]

[INFO] hadoop-yarn-server-web-proxy ....................... SUCCESS [ 1.355 s]

[INFO] hadoop-yarn-server-applicationhistoryservice ....... SUCCESS [ 1.946 s]

[INFO] hadoop-yarn-server-resourcemanager ................. SUCCESS [ 4.257 s]

[INFO] hadoop-yarn-server-tests ........................... SUCCESS [ 2.411 s]

[INFO] hadoop-yarn-client ................................. SUCCESS [ 1.441 s]

[INFO] hadoop-yarn-applications ........................... SUCCESS [ 0.093 s]

[INFO] hadoop-yarn-applications-distributedshell .......... SUCCESS [ 1.293 s]

[INFO] hadoop-yarn-applications-unmanaged-am-launcher ..... SUCCESS [ 1.094 s]

[INFO] hadoop-yarn-site ................................... SUCCESS [ 0.105 s]

[INFO] hadoop-yarn-registry ............................... SUCCESS [ 1.982 s]

[INFO] hadoop-yarn-project ................................ SUCCESS [ 7.104 s]

[INFO] hadoop-mapreduce-client ............................ SUCCESS [ 0.288 s]

[INFO] hadoop-mapreduce-client-core ....................... SUCCESS [ 8.545 s]

[INFO] hadoop-mapreduce-client-common ..................... SUCCESS [ 4.466 s]

[INFO] hadoop-mapreduce-client-shuffle .................... SUCCESS [ 1.544 s]

[INFO] hadoop-mapreduce-client-app ........................ SUCCESS [ 3.016 s]

[INFO] hadoop-mapreduce-client-hs ......................... SUCCESS [ 2.688 s]

[INFO] hadoop-mapreduce-client-jobclient .................. SUCCESS [ 4.295 s]

[INFO] hadoop-mapreduce-client-hs-plugins ................. SUCCESS [ 1.264 s]

[INFO] hadoop-mapreduce-client-nativetask ................. SUCCESS [ 5.796 s]

[INFO] Apache Hadoop MapReduce Examples ................... SUCCESS [ 2.006 s]

[INFO] hadoop-mapreduce ................................... SUCCESS [ 5.838 s]

[INFO] Apache Hadoop MapReduce Streaming .................. SUCCESS [ 3.664 s]

[INFO] Apache Hadoop Distributed Copy ..................... SUCCESS [ 4.593 s]

[INFO] Apache Hadoop Archives ............................. SUCCESS [ 0.601 s]

[INFO] Apache Hadoop Archive Logs ......................... SUCCESS [ 0.706 s]

[INFO] Apache Hadoop Rumen ................................ SUCCESS [ 0.890 s]

[INFO] Apache Hadoop Gridmix .............................. SUCCESS [ 0.924 s]

[INFO] Apache Hadoop Data Join ............................ SUCCESS [ 0.565 s]

[INFO] Apache Hadoop Ant Tasks ............................ SUCCESS [ 0.492 s]

[INFO] Apache Hadoop Extras ............................... SUCCESS [ 0.642 s]

[INFO] Apache Hadoop Pipes ................................ SUCCESS [ 1.844 s]

[INFO] Apache Hadoop OpenStack support .................... SUCCESS [ 4.137 s]

[INFO] Apache Hadoop Amazon Web Services support .......... SUCCESS [02:19 min]

[INFO] Apache Hadoop Azure support ........................ SUCCESS [ 8.889 s]

[INFO] Apache Hadoop Client ............................... SUCCESS [ 9.275 s]

[INFO] Apache Hadoop Mini-Cluster ......................... SUCCESS [ 2.590 s]

[INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 7.943 s]

[INFO] Apache Hadoop Azure Data Lake support .............. SUCCESS [ 31.357 s]

[INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 10.671 s]

[INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.048 s]

[INFO] Apache Hadoop Distribution ......................... SUCCESS [ 21.582 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 09:54 min

[INFO] Finished at: 2020-03-07T16:51:55+08:00

[INFO] Final Memory: 173M/523M

[INFO] ------------------------------------------------------------------------

OK, the build succeeded.

Copy the libraries into the installation directory and verify:

[hadoop@node131 sbin]$ ls /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-dist/target/hadoop-2.6.0-cdh5.16.2/lib/native/

libhadoop.a libhadooppipes.a libhadoop.so libhadoop.so.1.0.0 libhadooputils.a libhdfs.a libhdfs.so libhdfs.so.0.0.0 libnativetask.a libnativetask.so libnativetask.so.1.0.0

[hadoop@node131 sbin]$ cp /home/hadoop/hadoop-2.6.0-cdh5.16.2/src/hadoop-dist/target/hadoop-2.6.0-cdh5.16.2/lib/native/* /home/hadoop/hadoop-2.6.0-cdh5.16.2/lib/native/

[hadoop@node131 sbin]$ ls /home/hadoop/hadoop-2.6.0-cdh5.16.2/lib/native/

libhadoop.a libhadooppipes.a libhadoop.so libhadoop.so.1.0.0 libhadooputils.a libhdfs.a libhdfs.so libhdfs.so.0.0.0 libnativetask.a libnativetask.so libnativetask.so.1.0.0
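One detail about the copy step: a plain `cp` follows symbolic links, so if libhadoop.so is a symlink to libhadoop.so.1.0.0 in the build output (an assumption; the listing above does not show it), the target directory ends up with two full copies. A variant that keeps links as links might look like the sketch below; install_native_libs is a hypothetical helper name.

```shell
# Hypothetical helper: copies everything from a build's lib/native directory
# (first argument) into the target directory (second argument), keeping
# symbolic links as links instead of duplicating the files they point to.
install_native_libs() {
  src=$1
  dst=$2
  mkdir -p "$dst" || return 1
  cp -R -P "$src"/* "$dst"/
}
```

Under that assumption it would be called with the hadoop-dist/target/.../lib/native directory as the source and the installation's lib/native directory as the target.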

[hadoop@node131 sbin]$

[hadoop@node131 sbin]$ hadoop checknative -a

20/03/07 19:07:46 WARN bzip2.Bzip2Factory: Failed to load/initialize native-bzip2 library system-native, will use pure-Java version

20/03/07 19:07:46 INFO zlib.ZlibFactory: Successfully loaded & initialized native-zlib library

Native library checking:

hadoop: true /home/hadoop/hadoop-2.6.0-cdh5.16.2/lib/native/libhadoop.so

zlib: true /lib64/libz.so.1

snappy: false

lz4: true revision:10301

bzip2: false

openssl: true /lib64/libcrypto.so

20/03/07 19:07:47 INFO util.ExitUtil: Exiting with status 1

[hadoop@node131 sbin]$

To be continued...