I. Quickly configure and start Hadoop (single-node setup, to get started quickly):

Hadoop download address: http://mirror.bit.edu.cn/apache/hadoop/

1. Edit the configuration files:

core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
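All of the *-site.xml files in this step share one shape: a configuration element holding property entries, each with a name and a value. As a rough illustration of that structure, the following sketch reads such a document with the JDK's built-in XML parser and splits the fs.defaultFS value into scheme, host and port. The class SiteConfigReader and its method readProperties are hypothetical names made up for this example; Hadoop itself loads these files through its own Configuration class, which is not used here.

```java
import java.io.ByteArrayInputStream;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilder;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper for illustration only; not part of Hadoop.
public class SiteConfigReader {

    // Collects every <property><name>/<value> pair of a Hadoop-style
    // configuration document into a map, in document order.
    public static Map<String, String> readProperties(String xml) {
        try {
            DocumentBuilder builder =
                DocumentBuilderFactory.newInstance().newDocumentBuilder();
            Document doc = builder.parse(
                new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            Map<String, String> props = new LinkedHashMap<>();
            NodeList nodes = doc.getElementsByTagName("property");
            for (int i = 0; i < nodes.getLength(); i++) {
                Element property = (Element) nodes.item(i);
                String name = property.getElementsByTagName("name")
                    .item(0).getTextContent().trim();
                String value = property.getElementsByTagName("value")
                    .item(0).getTextContent().trim();
                props.put(name, value);
            }
            return props;
        } catch (Exception e) {
            // Wrap parser and I/O errors so callers need no checked exceptions.
            throw new IllegalArgumentException("Not a valid configuration document", e);
        }
    }

    public static void main(String[] args) {
        String coreSite =
            "<configuration>"
            + "<property><name>fs.defaultFS</name>"
            + "<value>hdfs://localhost:9000</value></property>"
            + "</configuration>";
        Map<String, String> props = readProperties(coreSite);

        // fs.defaultFS is a URI: scheme = file system type, host and port = NameNode address.
        URI defaultFs = URI.create(props.get("fs.defaultFS"));
        System.out.println(defaultFs.getScheme() + " "
            + defaultFs.getHost() + " " + defaultFs.getPort());
        // prints "hdfs localhost 9000"
    }
}
```

The same reader works for the other site files in this step, since they differ only in the property names and values they carry.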

hdfs-site.xml

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>

mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>

yarn-site.xml

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
</configuration>

hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_121

2. Format the file system (run from the bin directory of the Hadoop installation):

./hdfs namenode -format

3. Start the NameNode and DataNode daemons (run from the Hadoop installation directory):

./sbin/start-dfs.sh

Start the ResourceManager and NodeManager daemons:

./sbin/start-yarn.sh

Or use a single script that starts everything:

./sbin/start-all.sh

II. Testing

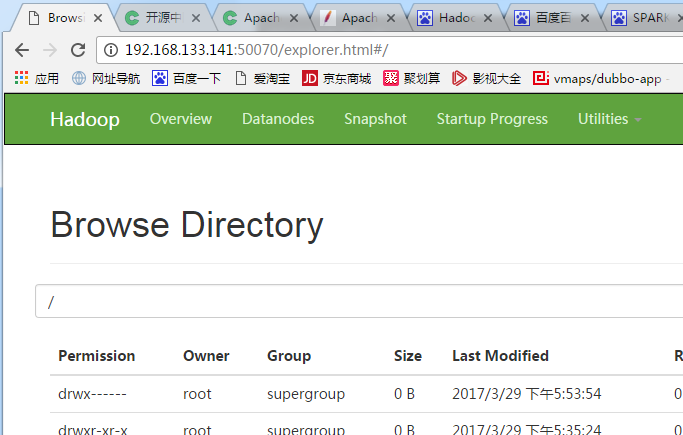

2.1 HDFS test

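Use a browser to view the HDFS directory. With the localhost setup above, the NameNode web UI is at http://localhost:50070 (50070 is the Hadoop 2.x default; Hadoop 3.x uses 9870).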

Download the materials for this exercise:

https://pan.baidu.com/s/1hs62YTe

Go to the bin directory under the extracted Hadoop directory and create the HDFS directories:

./hdfs dfs -mkdir /wordcount

./hdfs dfs -mkdir /wordcount/result

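The job fails if its output directory already exists, so remove /wordcount/result before running it (-rmr is deprecated in favor of -rm -r):

./hadoop fs -rm -r /wordcount/result

Copy the input folder to the HDFS directory: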

./hdfs dfs -put /opt/input /wordcount

List the files:

./hadoop fs -ls /wordcount/input

2.2 MapReduce test

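This follows the wordcount experiment in the official documentation. Compile and package the wordcount code, then put the jar in a directory on the server's local file system (/opt/testsource), not in an HDFS directory.

The files to be counted go into the /wordcount/input directory on HDFS.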
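Before submitting the job, it can help to see what wordcount computes without a cluster. The sketch below imitates the three phases in plain Java: map emits a (word, 1) pair per token, the grouping step stands in for the shuffle, and reduce sums the values of each word. LocalWordCount and its method names are made up for this illustration and use no Hadoop classes, so this only approximates what the real job does on HDFS data.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical single-process imitation of the wordcount job; no Hadoop involved.
public class LocalWordCount {

    // Map phase: emit (word, 1) for every whitespace-separated token of one line.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        StringTokenizer tokenizer = new StringTokenizer(line);
        while (tokenizer.hasMoreTokens()) {
            pairs.add(new AbstractMap.SimpleEntry<>(tokenizer.nextToken(), 1));
        }
        return pairs;
    }

    // Reduce phase: add up all values collected for one word.
    public static int reduce(List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    public static Map<String, Integer> countWords(List<String> lines) {
        // Stand-in for the shuffle: group the emitted values by word, sorted by word.
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (Map.Entry<String, Integer> pair : map(line)) {
                grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>())
                       .add(pair.getValue());
            }
        }
        // One reduce call per distinct word.
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            counts.put(entry.getKey(), reduce(entry.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        System.out.println(countWords(Arrays.asList("hello hadoop", "hello hdfs")));
        // prints {hadoop=1, hdfs=1, hello=2}
    }
}
```

Running the same input lines through this class gives a quick reference to compare against the contents of /wordcount/result, at least for small files.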

Run the Hadoop job:

./hadoop jar /opt/testsource/learning.jar hadoop.WordCount /wordcount/input /wordcount/result

Check the result:

./hdfs dfs -cat /wordcount/result/*

Appendix: wordcount code:

package hadoop;

/**
 * Created by BD-PC11 on 2017/3/29.
 */

import java.io.IOException;
import java.util.*;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.conf.*;
import org.apache.hadoop.io.*;
import org.apache.hadoop.mapred.*;
import org.apache.hadoop.util.*;

public class WordCount {

    // Mapper: splits each input line into tokens and emits (word, 1) per token.
    public static class Map extends MapReduceBase
            implements Mapper<LongWritable, Text, Text, IntWritable> {

        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        public void map(LongWritable key, Text value, OutputCollector<Text, IntWritable> output,
                        Reporter reporter) throws IOException {
            String line = value.toString();
            StringTokenizer tokenizer = new StringTokenizer(line);
            while (tokenizer.hasMoreTokens()) {
                word.set(tokenizer.nextToken());
                output.collect(word, one);
            }
        }
    }

    // Reducer (also used as the combiner): sums the counts of one word.
    public static class Reduce extends MapReduceBase
            implements Reducer<Text, IntWritable, Text, IntWritable> {

        public void reduce(Text key, Iterator<IntWritable> values,
                           OutputCollector<Text, IntWritable> output,
                           Reporter reporter) throws IOException {
            int sum = 0;
            while (values.hasNext()) {
                sum += values.next().get();
            }
            output.collect(key, new IntWritable(sum));
        }
    }

    // Driver: args[0] is the HDFS input path, args[1] the HDFS output path.
    public static void main(String[] args) throws Exception {
        JobConf conf = new JobConf(WordCount.class);
        conf.setJobName("wordcount");

        conf.setOutputKeyClass(Text.class);
        conf.setOutputValueClass(IntWritable.class);

        conf.setMapperClass(Map.class);
        conf.setCombinerClass(Reduce.class);
        conf.setReducerClass(Reduce.class);

        conf.setInputFormat(TextInputFormat.class);
        conf.setOutputFormat(TextOutputFormat.class);

        FileInputFormat.setInputPaths(conf, new Path(args[0]));
        FileOutputFormat.setOutputPath(conf, new Path(args[1]));

        JobClient.runJob(conf);
    }
}
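The driver in the code above registers the same Reduce class as both combiner and reducer. That is safe for wordcount because addition gives the same total whether the counts are summed once at the end or pre-summed per mapper first. The sketch below checks this in plain Java. CombinerSketch and its methods are hypothetical names for illustration only, and each inner list stands in for the tokens one mapper would emit.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical illustration of why a summing reducer can double as a combiner.
public class CombinerSketch {

    // Combiner step: pre-aggregate the tokens of a single mapper locally.
    public static Map<String, Integer> combine(List<String> mapperTokens) {
        Map<String, Integer> local = new TreeMap<>();
        for (String token : mapperTokens) {
            local.merge(token, 1, Integer::sum);
        }
        return local;
    }

    // Reduce over pre-aggregated mapper outputs: sums partial counts per word.
    public static Map<String, Integer> reduceCombined(List<List<String>> mappers) {
        Map<String, Integer> totals = new TreeMap<>();
        for (List<String> tokens : mappers) {
            for (Map.Entry<String, Integer> partial : combine(tokens).entrySet()) {
                totals.merge(partial.getKey(), partial.getValue(), Integer::sum);
            }
        }
        return totals;
    }

    // Reduce without a combiner: every single (word, 1) pair reaches the reducer.
    public static Map<String, Integer> reduceRaw(List<List<String>> mappers) {
        Map<String, Integer> totals = new TreeMap<>();
        for (List<String> tokens : mappers) {
            for (String token : tokens) {
                totals.merge(token, 1, Integer::sum);
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        List<List<String>> mappers = Arrays.asList(
            Arrays.asList("a", "b", "a"),   // tokens seen by a first mapper
            Arrays.asList("b", "c"));       // tokens seen by a second mapper
        System.out.println(reduceCombined(mappers).equals(reduceRaw(mappers)));
        // prints true
    }
}
```

The results match, but the combined path sends fewer pairs from mapper to reducer: in this toy input the first mapper forwards two pairs instead of three. A reducer that computes something non-associative, such as an average, could not be reused as a combiner this way.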