Preface

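Good news: a new post at last! Last time I covered how to pull a stream with ffmpeg + rtmp; if you are not familiar with that, read the previous post first. Today's goal is to pull an RTMP stream with ffmpeg, extract the H.264 video from the resulting FLV stream, decode it to YUV, convert that to RGB images, and finally process the images with OpenCV. What I need in the end is the RGB image, so that I can run image processing on it.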

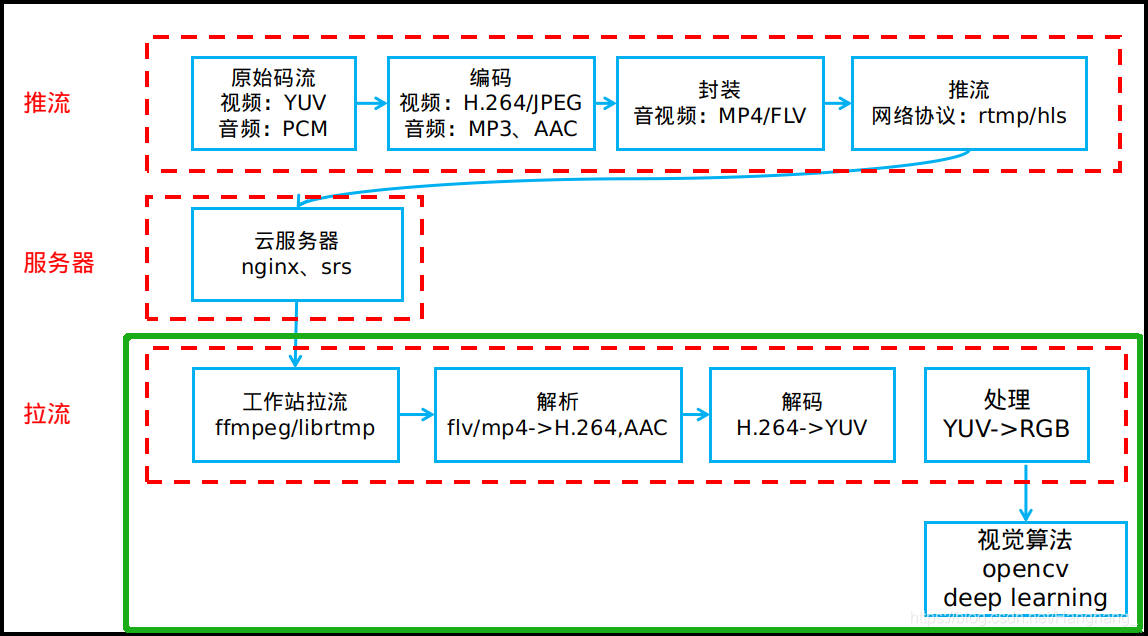

Overall pipeline: RTMP pull -> FLV -> H.264 -> YUV -> RGB -> OpenCV processing

Architecture diagram: this post implements the part inside the green box in the figure.

Source code

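A note first: the filter used here is the h264_mp4toannexb bitstream filter. FLV multiplexes H.264 and AAC; av_read_frame hands back the packets stream by stream, and the filter rewrites the H.264 packets into Annex B byte-stream form, so what comes out is a plain H.264 stream. The filter still goes through the old API, so compilation emits deprecation warnings, but it works on FFmpeg releases that still ship that API (it was removed in FFmpeg 5.0). I will update the code when I have time. As before, the stream pulled here is Hunan TV (Mango TV).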
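As a rough illustration of what that conversion involves: in FLV, each H.264 NAL unit is stored behind a length prefix, whereas the Annex B byte stream used by a raw .h264 file separates NAL units with start codes. The sketch below only rewrites the prefixes. The function name avccToAnnexB is made up for illustration, a fixed 4-byte length field is assumed, and the SPS/PPS handling that the real h264_mp4toannexb filter performs using the stream's extradata is left out.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper (not part of FFmpeg): rewrite 4-byte length-prefixed
// NAL units as Annex B, i.e. replace each length field with a start code.
// Returns an empty vector if the input is truncated.
std::vector<uint8_t> avccToAnnexB(const std::vector<uint8_t>& in)
{
    static const uint8_t kStartCode[4] = {0x00, 0x00, 0x00, 0x01};
    std::vector<uint8_t> out;
    std::size_t pos = 0;
    while (pos < in.size()) {
        if (in.size() - pos < 4) return {};      // truncated length field
        // Big-endian 32-bit NAL unit length
        const uint32_t len = (uint32_t(in[pos]) << 24) | (uint32_t(in[pos + 1]) << 16) |
                             (uint32_t(in[pos + 2]) << 8) | uint32_t(in[pos + 3]);
        pos += 4;
        if (len > in.size() - pos) return {};    // truncated payload
        out.insert(out.end(), kStartCode, kStartCode + 4);
        out.insert(out.end(), in.begin() + pos, in.begin() + pos + len);
        pos += len;
    }
    return out;
}
```

Feeding it a buffer that holds a 2-byte and a 1-byte NAL unit yields the same payloads, each preceded by 00 00 00 01 instead of its length.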

main.cpp:

#include <iostream>

#include <cstdio>

#include <cstdlib>

#include <cstring>

extern "C"

{

#include "libavformat/avformat.h"

#include <libavutil/mathematics.h>

#include <libavutil/time.h>

#include <libavutil/samplefmt.h>

#include <libavcodec/avcodec.h>

};

#include "opencv2/core.hpp"

#include<opencv2/opencv.hpp>

void AVFrame2Img(AVFrame *pFrame, cv::Mat& img);

void Yuv420p2Rgb32(const uchar *yuvBuffer_in, const uchar *rgbBuffer_out, int width, int height);

using namespace std;

using namespace cv;

int main(int argc, char* argv[])

{

AVFormatContext *ifmt_ctx = NULL;

AVPacket pkt;

AVFrame *pframe = NULL;

int ret, i;

int videoindex=-1;

AVCodecContext *pCodecCtx;

AVCodec *pCodec;

const char *in_filename = "rtmp://58.200.131.2:1935/livetv/hunantv"; // RTMP address of Hunan TV (Mango TV)

const char *out_filename_v = "test.h264"; //Output file URL

//Register

av_register_all();

//Network

avformat_network_init();

//Input

if ((ret = avformat_open_input(&ifmt_ctx, in_filename, 0, 0)) < 0)

{

printf( "Could not open input file.");

return -1;

}

if ((ret = avformat_find_stream_info(ifmt_ctx, 0)) < 0)

{

printf( "Failed to retrieve input stream information");

return -1;

}

videoindex=-1;

for(i=0; i<ifmt_ctx->nb_streams; i++)

{

if(ifmt_ctx->streams[i]->codec->codec_type==AVMEDIA_TYPE_VIDEO)

{

videoindex=i;

}

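}

// Stop early if the input has no video stream

if (videoindex < 0)

{

printf("Could not find a video stream.\n");

return -1;

}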

//Find H.264 Decoder

pCodec = avcodec_find_decoder(AV_CODEC_ID_H264);

if(pCodec==NULL){

printf("Couldn't find Codec.\n");

return -1;

}

pCodecCtx = avcodec_alloc_context3(pCodec);

if (!pCodecCtx) {

fprintf(stderr, "Could not allocate video codec context\n");

exit(1);

}

if(avcodec_open2(pCodecCtx, pCodec,NULL)<0){

printf("Couldn't open codec.\n");

return -1;

}

pframe=av_frame_alloc();

if(!pframe)

{

printf("Could not allocate video frame\n");

exit(1);

}

FILE *fp_video=fopen(out_filename_v,"wb+"); // used to save the H.264 stream

cv::Mat image_test;

AVBitStreamFilterContext* h264bsfc = av_bitstream_filter_init("h264_mp4toannexb");

while(av_read_frame(ifmt_ctx, &pkt)>=0)

{

if (pkt.stream_index == videoindex) {

av_bitstream_filter_filter(h264bsfc, ifmt_ctx->streams[videoindex]->codec, NULL, &pkt.data, &pkt.size,

pkt.data, pkt.size, 0);

printf("Write Video Packet. size:%d\tpts:%lld\n", pkt.size, (long long)pkt.pts);

// Save as H.264; this call is for testing only

//fwrite(pkt.data, 1, pkt.size, fp_video);

// Decode AVPacket

if(pkt.size)

{

ret = avcodec_send_packet(pCodecCtx, &pkt);

if (ret < 0) { // AVERROR(EAGAIN) and AVERROR_EOF are negative too

std::cout << "avcodec_send_packet: " << ret << std::endl;

av_packet_unref(&pkt); // release the packet before skipping it

continue;

}

//Get AVframe

ret = avcodec_receive_frame(pCodecCtx, pframe);

if (ret < 0) { // no frame available yet (EAGAIN), end of stream, or a decode error

std::cout << "avcodec_receive_frame: " << ret << std::endl;

av_packet_unref(&pkt); // release the packet before skipping it

continue;

}

//AVframe to rgb

AVFrame2Img(pframe,image_test);

}

}

//Free AvPacket

av_packet_unref(&pkt);

}

//Close filter

av_bitstream_filter_close(h264bsfc);

if (fp_video) fclose(fp_video);

av_frame_free(&pframe);

avcodec_free_context(&pCodecCtx);

avformat_close_input(&ifmt_ctx);

if (ret < 0 && ret != AVERROR_EOF)

{

printf( "Error occurred.\n");

return -1;

}

return 0;

}

void Yuv420p2Rgb32(const uchar *yuvBuffer_in, const uchar *rgbBuffer_out, int width, int height)

{

uchar *yuvBuffer = (uchar *)yuvBuffer_in;

uchar *rgb32Buffer = (uchar *)rgbBuffer_out;

int channels = 3;

for (int y = 0; y < height; y++)

{

for (int x = 0; x < width; x++)

{

// Offsets into the packed buffer: Y plane, then U plane, then V plane

int indexY = y * width + x;

int indexU = width * height + (y / 2) * (width / 2) + x / 2;

int indexV = width * height + (width / 2) * (height / 2) + (y / 2) * (width / 2) + x / 2;

uchar Y = yuvBuffer[indexY];

uchar U = yuvBuffer[indexU];

uchar V = yuvBuffer[indexV];

int R = Y + 1.402 * (V - 128);

int G = Y - 0.34413 * (U - 128) - 0.71414*(V - 128);

int B = Y + 1.772*(U - 128);

R = (R < 0) ? 0 : R;

G = (G < 0) ? 0 : G;

B = (B < 0) ? 0 : B;

R = (R > 255) ? 255 : R;

G = (G > 255) ? 255 : G;

B = (B > 255) ? 255 : B;

rgb32Buffer[(y*width + x)*channels + 2] = uchar(R);

rgb32Buffer[(y*width + x)*channels + 1] = uchar(G);

rgb32Buffer[(y*width + x)*channels + 0] = uchar(B);

}

}

}

void AVFrame2Img(AVFrame *pFrame, cv::Mat& img)

{

int frameHeight = pFrame->height;

int frameWidth = pFrame->width;

int channels = 3;

// Allocate the output image

img = cv::Mat::zeros(frameHeight, frameWidth, CV_8UC3);

Mat output = cv::Mat::zeros(frameHeight, frameWidth,CV_8U);

// Buffer that holds the packed YUV data

uchar* pDecodedBuffer = (uchar*)malloc(frameHeight*frameWidth * sizeof(uchar)*channels);

// Copy the YUV420p planes out of the AVFrame into the buffer

int i, j, k;

// Copy the Y plane

for (i = 0; i < frameHeight; i++)

{

memcpy(pDecodedBuffer + frameWidth*i,

pFrame->data[0] + pFrame->linesize[0] * i,

frameWidth);

}

// Copy the U plane

for (j = 0; j < frameHeight / 2; j++)

{

memcpy(pDecodedBuffer + frameWidth*i + frameWidth / 2 * j,

pFrame->data[1] + pFrame->linesize[1] * j,

frameWidth / 2);

}

// Copy the V plane

for (k = 0; k < frameHeight / 2; k++)

{

memcpy(pDecodedBuffer + frameWidth*i + frameWidth / 2 * j + frameWidth / 2 * k,

pFrame->data[2] + pFrame->linesize[2] * k,

frameWidth / 2);

}

// Convert the YUV420p data in the buffer to a 3-channel image (stored in OpenCV's BGR order)

Yuv420p2Rgb32(pDecodedBuffer, img.data, frameWidth, frameHeight);

// Simple processing: convert to grayscale, then run Canny edge detection

Mat gray;

cvtColor(img, gray, cv::COLOR_BGR2GRAY);

Canny(gray, output, 50, 50*2);

imshow("test",output);

waitKey(10);

// For testing

// imwrite("test.jpg",img);

// Free the buffer; img is left populated so the caller can keep using it

free(pDecodedBuffer);

output.release();

}
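The two steps in AVFrame2Img, packing the planes and then converting pixels, can also be looked at in isolation without FFmpeg or OpenCV. The sketch below is only an illustration under stated assumptions: packPlane, pixelAt, clampToByte and the Bgr struct are hypothetical names, width and height are assumed to be even, and the coefficients are the same ones used in Yuv420p2Rgb32 above (the full-range, JPEG-style BT.601 values). It shows why the copy goes row by row: each source row may be padded to linesize bytes, so only the first width bytes of each row are kept.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical helper: copy one plane row by row, dropping the padding
// that follows the first `width` bytes of every `linesize`-byte row.
void packPlane(const uint8_t* src, int linesize, int width, int height, uint8_t* dst)
{
    for (int row = 0; row < height; ++row)
        std::memcpy(dst + row * width, src + row * linesize, width);
}

struct Bgr { uint8_t b, g, r; };

// Clamp to [0, 255] and truncate, matching the integer clamping in the listing
static uint8_t clampToByte(double v)
{
    return v < 0 ? 0 : (v > 255 ? 255 : uint8_t(v));
}

// Read pixel (x, y) from a tightly packed YUV420p buffer (Y plane, then U,
// then V) and convert it to BGR. Assumes even width and height.
Bgr pixelAt(const uint8_t* yuv, int width, int height, int x, int y)
{
    const int chromaWidth = width / 2;
    const uint8_t* uPlane = yuv + width * height;
    const uint8_t* vPlane = uPlane + chromaWidth * (height / 2);
    const double Y = yuv[y * width + x];
    // One U and one V sample are shared by each 2x2 block of pixels
    const double U = uPlane[(y / 2) * chromaWidth + x / 2] - 128.0;
    const double V = vPlane[(y / 2) * chromaWidth + x / 2] - 128.0;
    return Bgr{clampToByte(Y + 1.772 * U),
               clampToByte(Y - 0.34413 * U - 0.71414 * V),
               clampToByte(Y + 1.402 * V)};
}
```

With neutral chroma (U = V = 128) every channel equals Y, which is a quick sanity check for the plane offsets. Note that broadcast H.264 is often limited-range, so the colors produced by these coefficients may be slightly off; for the edge-detection test in this post that does not matter.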

CMakeLists.txt:

cmake_minimum_required(VERSION 3.10)

project(version1_0)

set(CMAKE_CXX_STANDARD 11)

set(CMAKE_CXX_FLAGS "-D__STDC_CONSTANT_MACROS")

find_package( OpenCV REQUIRED )

include_directories( ${OpenCV_INCLUDE_DIRS} )

find_path(AVCODEC_INCLUDE_DIR libavcodec/avcodec.h)

find_library(AVCODEC_LIBRARY avcodec)

find_path(AVFORMAT_INCLUDE_DIR libavformat/avformat.h)

find_library(AVFORMAT_LIBRARY avformat)

find_path(AVUTIL_INCLUDE_DIR libavutil/avutil.h)

find_library(AVUTIL_LIBRARY avutil)

find_path(AVDEVICE_INCLUDE_DIR libavdevice/avdevice.h)

find_library(AVDEVICE_LIBRARY avdevice)

add_executable(version1_0 main.cpp)

target_include_directories(version1_0 PRIVATE ${AVCODEC_INCLUDE_DIR} ${AVFORMAT_INCLUDE_DIR} ${AVUTIL_INCLUDE_DIR} ${AVDEVICE_INCLUDE_DIR})

target_link_libraries(version1_0 PRIVATE ${AVCODEC_LIBRARY} ${AVFORMAT_LIBRARY} ${AVUTIL_LIBRARY} ${AVDEVICE_LIBRARY} ${OpenCV_LIBS})

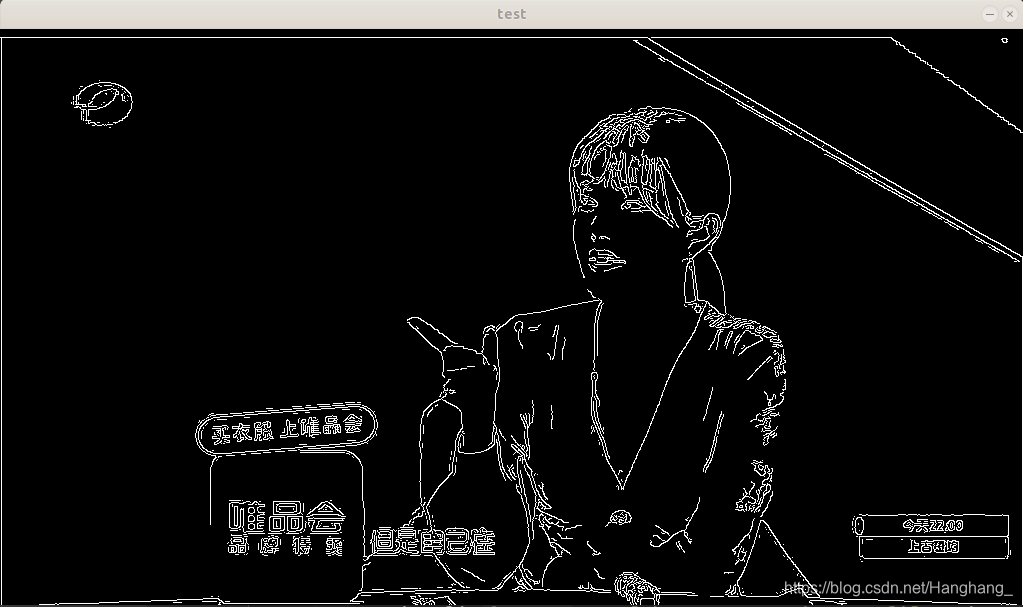

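Result: Canny edge detection is applied to each frame, which produces a binary edge map. The purpose is to test the stability of OpenCV and of the code.

In testing, the program ran for more than half an hour without crashing, so the memory handling should be largely fine.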

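Problems:

The network side is less encouraging. I cannot tell whether it is my home network or the Mango TV server, but the stream I pull keeps stalling and is not smooth. Testing around five or six in the afternoon was painfully choppy; evenings were better, though stalls still happened at times. I also tried playing the same stream in VLC, and it stuttered there as well, so my guess is that the server is the cause.

To work around this, I plan to set up my own nginx server, push a stream locally, and pull it locally, with pushing and pulling running at the same time. To find out how that goes, see you in the next post. The day will come when we can build a multi-user wireless video-transmission SLAM system.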

If this post helped you, feel free to like, comment, and follow!

References:

ffmpeg example: decode_video.cpp

https://www.cnblogs.com/riddick/p/7719298.html

https://blog.csdn.net/hiwubihe/article/details/82346759