1. 参考说明

参考文档:

https://cwiki.apache.org/confluence/display/Hive/GettingStarted

2. 安装环境说明

2.1. 环境说明

CentOS7.4+ Hadoop2.7.5的伪分布式环境

| 主机名 |

NameNode |

SecondaryNameNode

扫描二维码关注公众号,回复:

988708 查看本文章

|

DataNodes |

| centoshadoop.smartmap.com |

192.168.1.80 |

192.168.1.80 |

192.168.1.80 |

|

|

|

|

|

Hadoop的安装目录为:/opt/hadoop/hadoop-2.7.5

3. 安装

3.1. Hive下载

https://hive.apache.org/downloads.html

3.2. Hive解压

将下载的apache-hive-2.3.3-bin.tar.gz解压到/opt/hadoop/hive-2.3.3目录下

4. 配置

4.1. 修改profile文件

vi /etc/profile

export HIVE_HOME=/opt/hadoop/hive-2.3.3

export PATH=$PATH:$HIVE_HOME/bin

export CLASSPATH=$CLASSPATH:$HIVE_HOME/lib

4.2. 将JDK升级为1.8版本

将JDK切换成1.8的版本,并修改所有与JAVA_HOME相关的变量

4.3. 安装MySQL数据库

4.3.1. 下载MySQL源

[root@centoshadoop soft]# wget http://repo.mysql.com/mysql57-community-release-el7-8.noarch.rpm

4.3.2. 安装MySQL源

[root@centoshadoop soft]# yum install mysql57-community-release-el7-8.noarch.rpm

4.3.3. 安装MySQL

[root@centoshadoop soft]# yum install mysql-server

4.3.4. 启动mysql服务

[root@centoshadoop soft]# systemctl start mysqld

[root@centoshadoop soft]# systemctl enable mysqld

4.3.5. 重置root密码

MySQL5.7会在安装后为root用户生成一个随机密码, MySQL为root用户生成的随机密码通过mysqld.log文件可以查找到

[root@centoshadoop soft]# grep 'temporary password' /var/log/mysqld.log

2018-05-22T09:23:43.115820Z 1 [Note] A temporary password is generated for root@localhost: 2&?SYJpBOdwo

[root@centoshadoop soft]#

[ambari@master opt]$ mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 2

Server version: 5.7.22

…....

mysql> set global validate_password_policy=0;

Query OK, 0 rows affected (0.00 sec)

mysql> set global validate_password_length=3;

Query OK, 0 rows affected (0.00 sec)

mysql> set global validate_password_mixed_case_count=0;

Query OK, 0 rows affected (0.00 sec)

mysql> set global validate_password_number_count=0;

Query OK, 0 rows affected (0.00 sec)

mysql> set global validate_password_special_char_count=0;

Query OK, 0 rows affected (0.00 sec)

mysql> alter user 'root'@'localhost' identified by 'gis123';

Query OK, 0 rows affected (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.01 sec)

mysql> SHOW VARIABLES LIKE 'validate_password%';

+--------------------------------------+-------+

| Variable_name | Value |

+--------------------------------------+-------+

| validate_password_check_user_name | OFF |

| validate_password_dictionary_file | |

| validate_password_length | 4 |

| validate_password_mixed_case_count | 0 |

| validate_password_number_count | 0 |

| validate_password_policy | LOW |

| validate_password_special_char_count | 0 |

+--------------------------------------+-------+

7 rows in set (0.01 sec)

mysql> set global validate_password_length=3;

Query OK, 0 rows affected (0.00 sec)

mysql> alter user 'root'@'localhost' identified by 'gis';

Query OK, 0 rows affected (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

mysql> quit

Bye

[ambari@master opt]$ mysql -u root -p

Enter password:

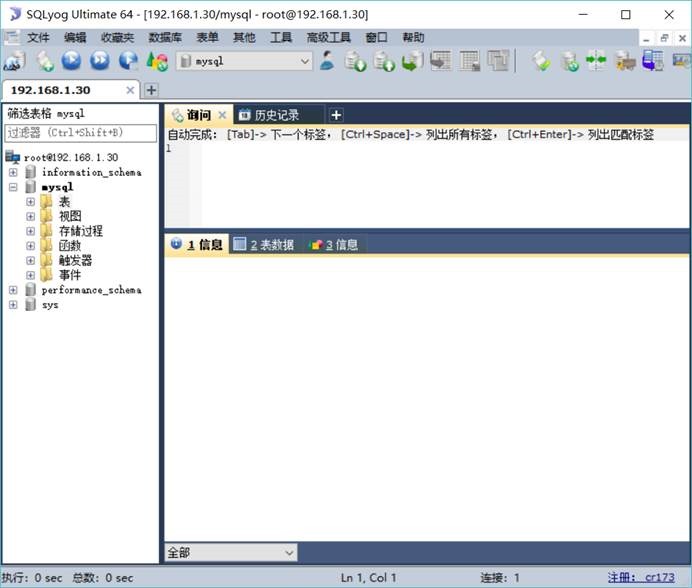

4.3.6. 开放数据库访问权限

[root@localsource ~]# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

……

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY 'gis' WITH GRANT OPTION;

Query OK, 0 rows affected, 1 warning (0.00 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> quit

4.3.7. 安装mysql jdbc驱动

4.3.7.1. 上传软件包到/opt/java/目录下

上传软件包mysql-connector-java-5.1.46.jar到/opt/java/jdk1.8.0_171/lib/目录下

4.3.7.2. 测试

import java.sql.*;

public class SqlTest {

public static void main(String[] args) throws Exception {

try {

String driver="com.mysql.jdbc.Driver";

String url="jdbc:mysql://127.0.0.1:3306/mysql?serverTimezone=Asia/Shanghai&useUnicode=true&characterEncoding=utf8&useSSL=false";

String user="root";

String password="gis";

Class.forName(driver);

Connection conn=DriverManager.getConnection(url,user,password);

Statement stmt=conn.createStatement();

System.out.println("mysql test successful!");

stmt.close();

conn.close();

} catch (Exception e) {

e.printStackTrace();

System.out.println("mysql test fail!");

}

}

}

编译执行

javac SqlTest.java

java SqlTest

4.4. 修改Hive的配置文件

cd /opt/hadoop/hive-2.3.3/conf/

cp hive-env.sh.template hive-env.sh

4.5. 配置Hive的Metastore

[root@centoshadoop conf]# cp /opt/hadoop/hive-2.3.3/conf/hive-default.xml.template /opt/hadoop/hive-2.3.3/conf/hive-site.xml

[root@centoshadoop conf]# vi /opt/hadoop/hadoop-2.7.5/etc/hadoop/mapred-site.xml

[root@centoshadoop conf]# mkdir -p /opt/hadoop/hive-2.3.3/temp/hadoopUser

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://127.0.0.1:3306/hive?createDatabaseIfNotExist=true&serverTimezone=Asia/Shanghai&useUnicode=true&characterEncoding=utf8&useSSL=false</value>

<description>

JDBC connect string for a JDBC metastore.

</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>Username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>gis</value>

<description>password to use against metastore database</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

<description>location of default database for the warehouse</description>

</property>

<property>

<name>hive.exec.local.scratchdir</name>

<value>/opt/hadoop/hive-2.3.3/temp/${system:user.name}</value>

<description>Local scratch space for Hive jobs</description>

</property>

<property>

<name>hive.downloaded.resources.dir</name>

<value>/opt/hadoop/hive-2.3.3/temp/${hive.session.id}_resources</value>

<description>Temporary local directory for added resources in the remote file system.</description>

</property>

<property>

<name>hive.querylog.location</name>

<value>/opt/hadoop/hive-2.3.3/temp/${system:user.name}</value>

<description>Location of Hive run time structured log file</description>

</property>

<property>

<name>hive.server2.logging.operation.log.location</name>

<value>/opt/hadoop/hive-2.3.3/temp/${system:user.name}/operation_logs</value>

<description>Top level directory where operation logs are stored if logging functionality is enabled</description>

</property>

5. 启动Hadoop

5.1. 启动YARN与HDFS

cd /opt/hadoop/hadoop-2.7.5/sbin

start-all.sh

5.2. 启动historyserver

cd /opt/hadoop/hadoop-2.7.5/sbin

mr-jobhistory-daemon.sh start historyserver

6. 初始化元数据

[root@centoshadoop bin]# cp /opt/java/jdk1.8.0_171/lib/mysql-connector-java-5.1.46.jar /opt/hadoop/hive-2.3.3/lib/

[root@centoshadoop bin]# schematool -dbType mysql -initSchema

7. 应用Hive工具

7.1. 启动运行Hive的交互式Shell环境

cd /opt/hadoop/hive-2.3.3/bin

hive

7.2. 列出表格

hive> show tables;

7.3. 创建表格

hive> CREATE TABLE records (year STRING, temperature INT, quality INT) ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

OK

Time taken: 3.755 seconds

7.4. 加载数据

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/ncdc/micro-tab/sample.txt' OVERWRITE INTO TABLE records;

Loading data to table default.records

OK

Time taken: 1.412 seconds

[root@centoshadoop micro-tab]# hadoop fs -ls /user/hive/warehouse

Found 1 items

drwxr-xr-x - hadoop supergroup 0 2018-05-22 19:12 /user/hive/warehouse/records

[root@centoshadoop micro-tab]# hadoop fs -ls /user/hive/warehouse/records

Found 1 items

7.5. 查询数据

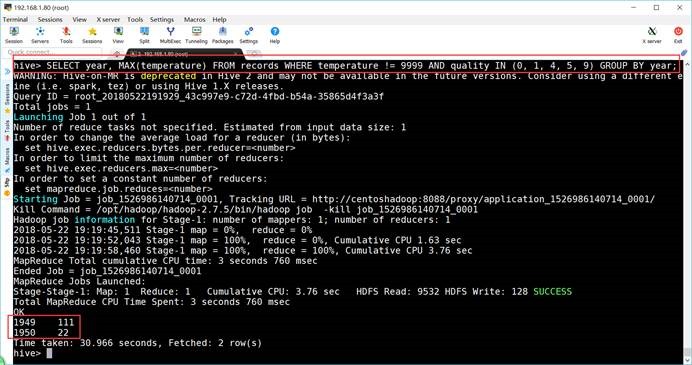

hive> SELECT year, MAX(temperature) FROM records WHERE temperature != 9999 AND quality IN (0, 1, 4, 5, 9) GROUP BY year;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20180522191929_43c997e9-c72d-4fbd-b54a-35865d4f3a3f

Total jobs = 1

Launching Job 1 out of 1

7.6. 退出

hive> exit;

7.7. 分区与桶

7.7.1. 分区

7.7.1.1. 创建分区表

hive> DROP TABLE IF EXISTS logs;

hive> CREATE TABLE logs (ts BIGINT, line STRING) PARTITIONED BY (dt STRING, country STRING);

7.7.1.2. 加载数据到分区表

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file1' INTO TABLE logs PARTITION (dt='2001-01-01', country='GB');

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file2' INTO TABLE logs PARTITION (dt='2001-01-01', country='GB');

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file3' INTO TABLE logs PARTITION (dt='2001-01-01', country='US');

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file4' INTO TABLE logs PARTITION (dt='2001-01-02', country='GB');

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file5' INTO TABLE logs PARTITION (dt='2001-01-02', country='US');

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/partitions/file6' INTO TABLE logs PARTITION (dt='2001-01-02', country='US');

7.7.1.3. 显示分区表的分区

hive> SHOW PARTITIONS logs;

OK

dt=2001-01-01/country=GB

dt=2001-01-01/country=US

dt=2001-01-02/country=GB

dt=2001-01-02/country=US

Time taken: 4.439 seconds, Fetched: 4 row(s)

7.7.1.4. 查询数据

hive> SELECT ts, dt, line FROM logs WHERE country='GB';

OK

1 2001-01-01 Log line 1

2 2001-01-01 Log line 2

4 2001-01-02 Log line 4

Time taken: 1.922 seconds, Fetched: 3 row(s)

7.7.2. 桶

7.7.2.1. 创建一般的表

hive> DROP TABLE IF EXISTS users;

hive> CREATE TABLE users (id INT, name STRING);

7.7.2.2. 为表加载数据

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/tables/users.txt' OVERWRITE INTO TABLE users;

hive> dfs -cat /user/hive/warehouse/users/users.txt;

0Nat

2Joe

3Kay

4Ann

hive>

7.7.2.3. 创建分桶表

hive> CREATE TABLE bucketed_users (id INT, name STRING) CLUSTERED BY (id) INTO 4 BUCKETS;

OK

Time taken: 0.081 seconds

hive> DROP TABLE bucketed_users;

OK

Time taken: 1.118 seconds

7.7.2.4. 创建分桶排序表

hive> CREATE TABLE bucketed_users (id INT, name STRING) CLUSTERED BY (id) SORTED BY (id) INTO 4 BUCKETS;

7.7.2.5. 为分桶排序表加载数据

hive> SELECT * FROM users;

OK

0 Nat

2 Joe

3 Kay

4 Ann

Time taken: 1.366 seconds, Fetched: 4 row(s)

hive> SET hive.enforce.bucketing=true;

hive> INSERT OVERWRITE TABLE bucketed_users SELECT * FROM users;

7.7.2.6. 查看分分桶排序表中的HDFS的文件

hive> dfs -ls /user/hive/warehouse/bucketed_users;

Found 4 items

-rwxr-xr-x 1 hadoop supergroup 12 2018-05-22 21:07 /user/hive/warehouse/bucketed_users/000000_0

-rwxr-xr-x 1 hadoop supergroup 0 2018-05-22 21:07 /user/hive/warehouse/bucketed_users/000001_0

-rwxr-xr-x 1 hadoop supergroup 6 2018-05-22 21:07 /user/hive/warehouse/bucketed_users/000002_0

-rwxr-xr-x 1 hadoop supergroup 6 2018-05-22 21:07 /user/hive/warehouse/bucketed_users/000003_0

hive> dfs -cat /user/hive/warehouse/bucketed_users/000000_0;

0Nat

4Ann

7.7.2.7. 从指定的桶中进行取样

hive> SELECT * FROM bucketed_users TABLESAMPLE(BUCKET 1 OUT OF 4 ON id);

OK

0 Nat

4 Ann

Time taken: 0.393 seconds, Fetched: 2 row(s)

hive> SELECT * FROM bucketed_users TABLESAMPLE(BUCKET 1 OUT OF 2 ON id);

OK

0 Nat

4 Ann

2 Joe

hive> SELECT * FROM users TABLESAMPLE(BUCKET 1 OUT OF 4 ON rand());

OK

Time taken: 0.072 seconds

7.8. 存贮格式

7.8.1. 创建一般的表

hive> DROP TABLE IF EXISTS users;

hive> CREATE TABLE users (id INT, name STRING);

7.8.2. 为表加载数据

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/hive/tables/users.txt' OVERWRITE INTO TABLE users;

7.8.3. SequenceFile文件

7.8.3.1. 创建SequenceFile文件与加载数据

hive> DROP TABLE IF EXISTS users_seqfile;

hive> SET hive.exec.compress.output=true;

hive> SET mapreduce.output.fileoutputformat.compress.codec=org.apache.hadoop.io.compress.DeflateCodec;

hive> SET mapreduce.output.fileoutputformat.compress.type=BLOCK;

hive> CREATE TABLE users_seqfile STORED AS SEQUENCEFILE AS SELECT id, name FROM users;

7.8.3.2. 查询数据

hive> SELECT * from users_seqfile;

OK

0 Nat

2 Joe

3 Kay

4 Ann

Time taken: 0.409 seconds, Fetched: 4 row(s)

7.8.4. Avro文件

7.8.4.1. 创建Avro文件

hive> DROP TABLE IF EXISTS users_avro;

hive> SET hive.exec.compress.output=true;

hive> SET avro.output.codec=snappy;

hive> CREATE TABLE users_avro (id INT, name STRING) STORED AS AVRO;

OK

Time taken: 0.234 seconds

7.8.4.2. 加载数据

hive> INSERT OVERWRITE TABLE users_avro SELECT * FROM users;

7.8.4.3. 查询数据

hive> SELECT * from users_avro;

OK

0 Nat

2 Joe

3 Kay

4 Ann

Time taken: 0.21 seconds, Fetched: 4 row(s)

7.8.5. Parquet文件

7.8.5.1. 创建Parquet文件

hive> DROP TABLE IF EXISTS users_parquet;

7.8.5.2. 创建Parquet文件与加载数据

hive> CREATE TABLE users_parquet STORED AS PARQUET AS SELECT * FROM users;

7.8.5.3. 查询数据

hive> SELECT * from users_parquet;

OK

SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".

SLF4J: Defaulting to no-operation (NOP) logger implementation

SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.

0 Nat

2 Joe

3 Kay

4 Ann

7.8.6. ORCFile文件

7.8.6.1. 创建ORCFile文件

hive> DROP TABLE IF EXISTS users_orc;

7.8.6.2. 创建ORCFile文件与加载数据

hive> CREATE TABLE users_orc STORED AS ORCFILE AS SELECT * FROM users;

7.8.6.3. 查询数据

hive> SELECT * from users_orc;

OK

0 Nat

2 Joe

3 Kay

4 Ann

Time taken: 0.086 seconds, Fetched: 4 row(s)

7.8.7. 定制系列化

7.8.7.1. 创建文件

hive> DROP TABLE IF EXISTS stations;

hive> CREATE TABLE stations (usaf STRING, wban STRING, name STRING)

ROW FORMAT SERDE 'org.apache.hadoop.hive.contrib.serde2.RegexSerDe'

WITH SERDEPROPERTIES (

"input.regex" = "(\\d{6}) (\\d{5}) (.{29}) .*"

);

7.8.7.2. 加载数据

hive> LOAD DATA LOCAL INPATH "/root/hapood/data/input/ncdc/metadata/stations-fixed-width.txt" INTO TABLE stations;

7.8.7.3. 查询数据

hive> SELECT * FROM stations LIMIT 4;

OK

010000 99999 BOGUS NORWAY

010003 99999 BOGUS NORWAY

010010 99999 JAN MAYEN

010013 99999 ROST

Time taken: 0.103 seconds, Fetched: 4 row(s)

hive>

7.9. 多表插入

7.9.1. 创建一般的表

hive> DROP TABLE IF exists records2;

hive> CREATE TABLE records2 (station STRING, year STRING, temperature INT, quality INT) ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

7.9.2. 为表加载数据

hive> LOAD DATA LOCAL INPATH '/root/hapood/data/input/ncdc/micro-tab/sample2.txt' OVERWRITE INTO TABLE records2;

7.9.3. 创建其它的多张表

hive> DROP TABLE IF exists stations_by_year;

OK

Time taken: 0.03 seconds

hive> DROP TABLE IF exists records_by_year;

OK

Time taken: 0.016 seconds

hive> DROP TABLE IF exists good_records_by_year;

OK

Time taken: 0.012 seconds

hive> CREATE TABLE stations_by_year (year STRING, num INT);

OK

Time taken: 0.101 seconds

hive> CREATE TABLE records_by_year (year STRING, num INT);

OK

Time taken: 0.166 seconds

hive> CREATE TABLE good_records_by_year (year STRING, num INT);

OK

Time taken: 0.073 seconds

7.9.4. 将一张表中的数据插入到其它多张表中

hive> FROM records2

INSERT OVERWRITE TABLE stations_by_year SELECT year, COUNT(DISTINCT station) GROUP BY year

INSERT OVERWRITE TABLE records_by_year SELECT year, COUNT(1) GROUP BY year

INSERT OVERWRITE TABLE good_records_by_year SELECT year, COUNT(1) WHERE temperature != 9999 AND quality IN (0, 1, 4, 5, 9) GROUP BY year;

7.9.4.1. 查询数据

hive> SELECT * FROM stations_by_year;

OK

1949 2

1950 2

Time taken: 0.207 seconds, Fetched: 2 row(s)

hive> SELECT * FROM records_by_year;

OK

1949 2

1950 3

Time taken: 0.133 seconds, Fetched: 2 row(s)

hive> SELECT * FROM good_records_by_year;

OK

1949 2

1950 3

Time taken: 0.091 seconds, Fetched: 2 row(s)

7.9.4.2. 多表联接查询数据

hive> SELECT stations_by_year.year, stations_by_year.num, records_by_year.num, good_records_by_year.num FROM stations_by_year

JOIN records_by_year ON (stations_by_year.year = records_by_year.year)

JOIN good_records_by_year ON (stations_by_year.year = good_records_by_year.year);

Stage-Stage-4: Map: 1 Cumulative CPU: 2.19 sec HDFS Read: 7559 HDFS Write: 133 SUCCESS

Total MapReduce CPU Time Spent: 2 seconds 190 msec

OK

1949 2 2 2

1950 2 3 3

Time taken: 29.217 seconds, Fetched: 2 row(s)