Understanding the Audio System, Part 3 [1]: AudioFlinger Startup Flow and Audio PatchPanel Initialization

- III. AudioFlinger
- 1. AudioFlinger startup
- 2. The AudioFlinger::AudioFlinger() constructor
- 3. AudioFlinger::onFirstRef()
- 4. The PatchPanel object: analysis of the Audio Patch mechanism
- 4.1 struct audio_patch
- 4.2 struct audio_port_config
- 4.3 PatchPanel interface functions in AudioFlinger
- 4.4 PatchPanel implementation functions
- 4.5 Creating an audio patch: PatchPanel::createAudioPatch()
- 4.6 Establishing patch connections: PatchPanel::createPatchConnections()
- 4.7 Clearing audio patch connections: PatchPanel::clearPatchConnections()
- 4.8 Releasing an audio patch: PatchPanel::releaseAudioPatch()
- 4.9 Configuring an audio port: PatchPanel::setAudioPortConfig()
- 4.10 Call relationships among the PatchPanel functions
- 4.11 Low-level implementation of create_audio_patch
- 5. Call-flow analysis of Audio Patch usage scenarios

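After many days of on-and-off writing, I finally finished "Understanding the Audio System, Part 2: audioserver & AudioPolicyService".

These articles are purely my own study notes. I have only just started learning and my knowledge has not yet formed a coherent system,

so there are parts I still do not fully understand. That cannot be helped for now; it will improve as I dig deeper.

Starting today, AudioPolicy is set aside for the time being and I am formally moving on to AudioFlinger.

The plan is to go through all of Audio once first, then update the earlier articles as my understanding improves.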

III. AudioFlinger

1. AudioFlinger startup

@ \src\frameworks\av\media\audioserver\main_audioserver.cpp

int main(int argc __unused, char **argv)

{

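// sm must be obtained before it is logged (this line is elided in the original excerpt)

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());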

AudioFlinger::instantiate();

AudioPolicyService::instantiate();

}

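AudioFlinger::instantiate() does the following:

- Registers the AudioFlinger service under the name "media.audio_flinger".

- Calls the AudioFlinger constructor.

- Triggers AudioFlinger::onFirstRef(), which runs when the first strong reference to the new object is taken.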

@ \src\frameworks\native\libs\binder\include\binder\BinderService.h

static void instantiate() { publish(); }

static status_t publish(bool allowIsolated = false) {

sp<IServiceManager> sm(defaultServiceManager());

return sm->addService(

String16(SERVICE::getServiceName()),

new SERVICE(), allowIsolated);

}

The registered service name is "media.audio_flinger":

static const char* getServiceName() ANDROID_API { return "media.audio_flinger"; }
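To make the registration pattern easier to see, here is a minimal sketch of the same shape without any binder code. Everything prefixed with Toy is hypothetical and stands in for the real classes: ToyServiceManager is just a name-to-object table, and ToyBinderService mimics how the template asks the derived class for its name, constructs the object, and registers it.

```cpp
#include <cassert>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical base type for anything that can be registered.
struct ToyService {
    virtual ~ToyService() = default;
};

// Hypothetical stand-in for the service manager: a name -> service table.
class ToyServiceManager {
public:
    static ToyServiceManager& instance() {
        static ToyServiceManager sm;
        return sm;
    }
    // Returns true when the name was free and the service was stored.
    bool addService(const std::string& name, std::shared_ptr<ToyService> service) {
        assert(service != nullptr);
        return mServices.emplace(name, std::move(service)).second;
    }
    std::shared_ptr<ToyService> getService(const std::string& name) const {
        auto it = mServices.find(name);
        if (it == mServices.end()) {
            return nullptr;
        }
        return it->second;
    }
private:
    std::map<std::string, std::shared_ptr<ToyService>> mServices;
};

// Same shape as the template above: the derived class supplies the name,
// the template constructs the object and registers it.
template <typename SERVICE>
class ToyBinderService {
public:
    static bool publish() {
        return ToyServiceManager::instance().addService(
                SERVICE::getServiceName(), std::make_shared<SERVICE>());
    }
    static void instantiate() { publish(); }
};

class ToyAudioFlinger : public ToyService, public ToyBinderService<ToyAudioFlinger> {
public:
    static const char* getServiceName() { return "media.audio_flinger"; }
};
```

Calling ToyAudioFlinger::instantiate() once makes the object reachable under "media.audio_flinger"; a second publish() under the same name is rejected by this toy table, which is a choice of the sketch and not a statement about the real service manager.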

2. The AudioFlinger::AudioFlinger() constructor

The constructor mainly performs initialization:

it creates the device factory interface mDevicesFactoryHal and the effects factory interface mEffectsFactoryHal.

@ \src\frameworks\av\services\audioflinger\AudioFlinger.cpp

AudioFlinger::AudioFlinger() :

BnAudioFlinger(),

mPrimaryHardwareDev(NULL), // mPrimaryHardwareDev = NULL

mAudioHwDevs(NULL), // mAudioHwDevs = NULL

mHardwareStatus(AUDIO_HW_IDLE), // mHardwareStatus = AUDIO_HW_IDLE

mMasterVolume(1.0f), // mMasterVolume = 1.0f

mMasterMute(false), // mMasterMute = false

// mNextUniqueId(AUDIO_UNIQUE_ID_USE_MAX),

mMode(AUDIO_MODE_INVALID), // mMode = AUDIO_MODE_INVALID

mBtNrecIsOff(false), // mBtNrecIsOff = false

mIsLowRamDevice(true), // mIsLowRamDevice = true

mIsDeviceTypeKnown(false), // mIsDeviceTypeKnown = false

mGlobalEffectEnableTime(0), // mGlobalEffectEnableTime = 0

mSystemReady(false) // mSystemReady = false

{

// unsigned instead of audio_unique_id_use_t, because ++ operator is unavailable for enum

for (unsigned use = AUDIO_UNIQUE_ID_USE_UNSPECIFIED; use < AUDIO_UNIQUE_ID_USE_MAX; use++) {

// zero ID has a special meaning, so unavailable

mNextUniqueIds[use] = AUDIO_UNIQUE_ID_USE_MAX;

}

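// Cache the PID of the current process

getpid_cached = getpid();

// Instantiate DevicesFactoryHalInterface, the interface used to open devices; the hardware-layer audio device interfaces are obtained through it

// @ \src\frameworks\av\media\libaudiohal\DevicesFactoryHalHybrid.cpp

mDevicesFactoryHal = DevicesFactoryHalInterface::create();

// Instantiate the effects interface; the hardware-layer effect interfaces are obtained through it

// @ \src\frameworks\av\media\libaudiohal\EffectsFactoryHalLocal.cpp

mEffectsFactoryHal = EffectsFactoryHalInterface::create();

// Start the MediaLogNotifier thread (a thread, not a process), which takes over part of AudioFlinger's binder-call work and reduces the log-related work AudioFlinger does itself.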

mMediaLogNotifier->run("MediaLogNotifier");

}
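The loop in the constructor seeds every per-use counter with AUDIO_UNIQUE_ID_USE_MAX so that ID 0 is never handed out. The sketch below shows one way such an allocator can work; it is modeled loosely on my understanding of nextUniqueId() and is not copied from the source. The enum values, ToyIdAllocator, and useOf() are all assumptions made for illustration.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstdint>

// Hypothetical subset of the "use" categories; the numeric values are assumptions.
enum ToyIdUse : uint32_t {
    TOY_USE_UNSPECIFIED = 0,
    TOY_USE_SESSION = 1,
    TOY_USE_PATCH = 4,
    TOY_USE_MAX = 8,  // a power of two, so the low bits can carry the use tag
};

class ToyIdAllocator {
public:
    ToyIdAllocator() {
        // Start every counter at TOY_USE_MAX, mirroring the constructor loop,
        // so that the value 0 is never produced.
        for (auto& counter : mNext) {
            counter.store(TOY_USE_MAX);
        }
    }

    uint32_t next(ToyIdUse use) {
        assert(use < TOY_USE_MAX);
        // Step by TOY_USE_MAX so the low bits stay free for the use tag.
        uint32_t base = mNext[use].fetch_add(TOY_USE_MAX);
        return base | use;
    }

    // Recover the use category from an ID produced by next().
    static ToyIdUse useOf(uint32_t id) {
        return static_cast<ToyIdUse>(id & (TOY_USE_MAX - 1));
    }

private:
    std::array<std::atomic<uint32_t>, TOY_USE_MAX> mNext;
};
```

With this layout, two consecutive patch IDs differ by TOY_USE_MAX, and the category can be read back from the low three bits.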

3. AudioFlinger::onFirstRef() (not a constructor: it runs when the first strong reference is taken)

@ \src\frameworks\av\services\audioflinger\AudioFlinger.cpp

void AudioFlinger::onFirstRef()

{

// Construct a PatchPanel object

mPatchPanel = new PatchPanel(this);

// Switch the mode to AUDIO_MODE_NORMAL

mMode = AUDIO_MODE_NORMAL;

gAudioFlinger = this;

}
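The ordering between the constructor and onFirstRef() can be illustrated with a tiny reference-counting sketch. ToyRefBase and ToyFlinger are hypothetical; they only imitate the one behavior that matters here, namely that onFirstRef() fires when the strong count goes from zero to one, after construction has finished.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical miniature of a ref-counted base: onFirstRef() fires on the
// first strong reference only.
class ToyRefBase {
public:
    virtual ~ToyRefBase() = default;

    void incStrong() {
        assert(mStrong >= 0);
        if (mStrong++ == 0) {
            onFirstRef();
        }
    }
    int strongCount() const { return mStrong; }

protected:
    virtual void onFirstRef() {}

private:
    int mStrong = 0;
};

class ToyFlinger : public ToyRefBase {
public:
    ToyFlinger() { events.push_back("constructor"); }

    // Records the order in which the two initialization steps ran.
    std::vector<std::string> events;

protected:
    // Work that needs a fully constructed object goes here.
    void onFirstRef() override { events.push_back("onFirstRef"); }
};
```

This is presumably why the PatchPanel is created in onFirstRef(): it is handed `this`, which is only safe to wrap in a strong or weak pointer once the object is fully constructed.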

4. The PatchPanel object: analysis of the Audio Patch mechanism

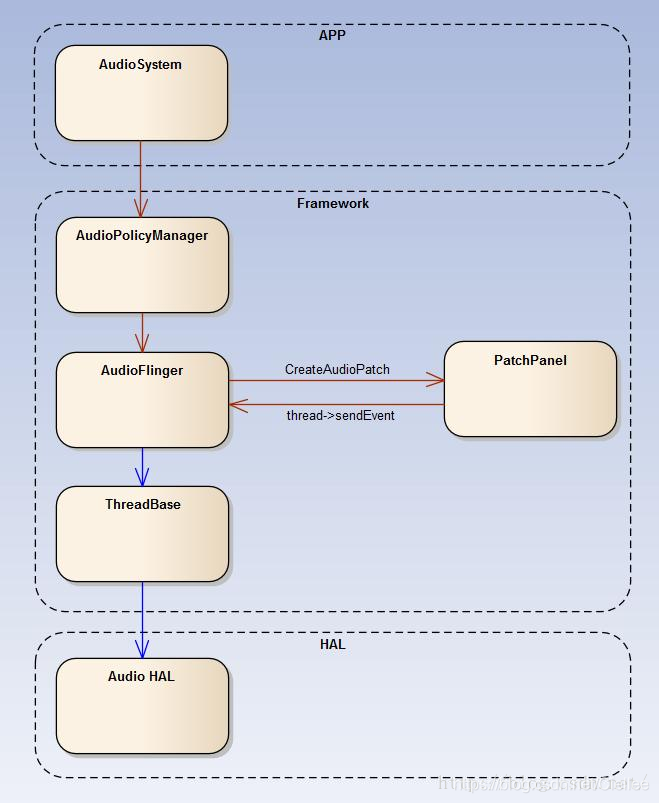

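First, where the Audio PatchPanel object sits in the system. I came across a very well written article online (see below),

and borrow its figure here (shown above).

As the figure shows, PatchPanel serves only AudioFlinger and is managed by AudioPolicyManager.

The overall flow is:

AudioSystem (app layer) ----> AudioPolicyManager (framework layer) ----> AudioFlinger.

AudioFlinger ----> createAudioPatch() ----> PatchPanel ----> Thread->sendEvent() ----> ThreadBase ----> hardware layer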

4.1 struct audio_patch

Let us first look at the audio_patch structure.

@ \src\system\media\audio\include\system\audio.h

/* An audio patch represents a connection between one or more source ports and

* one or more sink ports. Patches are connected and disconnected by audio policy manager or by

* applications via framework APIs.

* Each patch is identified by a handle at the interface used to create that patch. For instance,

* when a patch is created by the audio HAL, the HAL allocates and returns a handle.

* This handle is unique to a given audio HAL hardware module.

* But the same patch receives another system wide unique handle allocated by the framework.

* This unique handle is used for all transactions inside the framework.

*/

struct audio_patch {

audio_patch_handle_t id; /* patch unique ID */

// Number of audio sources, and the corresponding sources array

unsigned int num_sources; /* number of sources in following array */

struct audio_port_config sources[AUDIO_PATCH_PORTS_MAX];

// Number of audio sinks, and the corresponding sinks array

unsigned int num_sinks; /* number of sinks in following array */

struct audio_port_config sinks[AUDIO_PATCH_PORTS_MAX];

};

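As shown, an audio patch is a structure that describes a connection between one or more source ports and one or more sink ports.

It holds the number of sources and sinks in the patch, along with the corresponding arrays.

Each array entry is an audio_port_config structure, which carries all the configuration of that port: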

/* audio port configuration structure used to specify a particular configuration of

* an audio port */

struct audio_port_config {

audio_port_handle_t id; /* port unique ID */

audio_port_role_t role; /* sink or source */

audio_port_type_t type; /* device, mix ... */

unsigned int config_mask; /* e.g AUDIO_PORT_CONFIG_ALL */

unsigned int sample_rate; /* sampling rate in Hz */

audio_channel_mask_t channel_mask; /* channel mask if applicable */

audio_format_t format; /* format if applicable */

struct audio_gain_config gain; /* gain to apply if applicable */

union {

struct audio_port_config_device_ext device; /* device specific info */

struct audio_port_config_mix_ext mix; /* mix specific info */

struct audio_port_config_session_ext session; /* session specific info */

} ext;

};

Audio patches are connected and disconnected by the Audio Policy Manager, or by applications through framework APIs.
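As a concrete illustration of how these two structures fit together, the sketch below fills in a patch that describes "capture from an input device into a record mix". The types are deliberately simplified stand-ins, not the definitions from audio.h: ToyPatch, ToyPortConfig, the two size constants, and makeDeviceToMixPatch() are all hypothetical, and the 48000 Hz rate is an arbitrary example value.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Simplified, hypothetical stand-ins for the types in system/audio.h.
constexpr unsigned kToyPatchPortsMax = 16;
constexpr unsigned kToyAddressLen = 32;

enum ToyPortRole { TOY_ROLE_NONE, TOY_ROLE_SOURCE, TOY_ROLE_SINK };
enum ToyPortType { TOY_TYPE_NONE, TOY_TYPE_DEVICE, TOY_TYPE_MIX };

struct ToyPortConfig {
    int id;
    ToyPortRole role;
    ToyPortType type;
    unsigned sampleRate;
    union {
        struct { int hwModule; uint32_t deviceType; char address[kToyAddressLen]; } device;
        struct { int hwModule; int ioHandle; } mix;
    } ext;  // which member is valid depends on `type`
};

struct ToyPatch {
    int id;
    unsigned numSources;
    ToyPortConfig sources[kToyPatchPortsMax];
    unsigned numSinks;
    ToyPortConfig sinks[kToyPatchPortsMax];
};

// Describe "capture from an input device into a record mix".
ToyPatch makeDeviceToMixPatch(int hwModule, uint32_t deviceType,
                              const char* address, int ioHandle) {
    assert(address != nullptr && std::strlen(address) < kToyAddressLen);
    ToyPatch patch{};  // zero-initialize everything, including the unused slots

    patch.numSources = 1;
    ToyPortConfig& src = patch.sources[0];
    src.role = TOY_ROLE_SOURCE;
    src.type = TOY_TYPE_DEVICE;
    src.sampleRate = 48000;
    src.ext.device.hwModule = hwModule;
    src.ext.device.deviceType = deviceType;
    std::strcpy(src.ext.device.address, address);

    patch.numSinks = 1;
    ToyPortConfig& sink = patch.sinks[0];
    sink.role = TOY_ROLE_SINK;
    sink.type = TOY_TYPE_MIX;
    sink.sampleRate = 48000;
    sink.ext.mix.hwModule = hwModule;
    sink.ext.mix.ioHandle = ioHandle;
    return patch;
}
```

The point to notice is that the union member used for each port follows its type: a device port fills ext.device, a mix port fills ext.mix.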

4.2 struct audio_port_config

Next, let us look at the device-specific extension of struct audio_port_config, audio_port_config_device_ext.

@ \src\system\media\audio\include\system\audio.h

/* extension for audio port configuration structure when the audio port is a hardware device */

struct audio_port_config_device_ext {

audio_module_handle_t hw_module; /* module the device is attached to */

audio_devices_t type; /* device type (e.g AUDIO_DEVICE_OUT_SPEAKER) */

char address[AUDIO_DEVICE_MAX_ADDRESS_LEN]; /* device address. "" if N/A */

};

audio_port_config_device_ext ties the port to its hardware module, its device type (audio_devices_t), and the device address.

4.3 PatchPanel interface functions in AudioFlinger

AudioFlinger::PatchPanel provides a set of patch panel operations.

First, the AudioFlinger-side interface:

@ \frameworks\av\services\audioflinger\PatchPanel.cpp

// List all audio ports

/* List connected audio ports and their attributes */

status_t AudioFlinger::listAudioPorts(unsigned int *num_ports, struct audio_port *ports){

return mPatchPanel->listAudioPorts(num_ports, ports);

}

// Get the attributes of a given audio port

/* Get supported attributes for a given audio port */

status_t AudioFlinger::getAudioPort(struct audio_port *port){

return mPatchPanel->getAudioPort(port);

}

// Connect a patch

/* Connect a patch between several source and sink ports */

status_t AudioFlinger::createAudioPatch(const struct audio_patch *patch,audio_patch_handle_t *handle){

return mPatchPanel->createAudioPatch(patch, handle);

}

// Disconnect a patch

/* Disconnect a patch */

status_t AudioFlinger::releaseAudioPatch(audio_patch_handle_t handle){

return mPatchPanel->releaseAudioPatch(handle);

}

// List all audio patches currently in place

/* List connected audio patches and their attributes */

status_t AudioFlinger::listAudioPatches(unsigned int *num_patches, struct audio_patch *patches){

return mPatchPanel->listAudioPatches(num_patches, patches);

}

// Configure an audio port

/* Set audio port configuration */

status_t AudioFlinger::setAudioPortConfig(const struct audio_port_config *config){

return mPatchPanel->setAudioPortConfig(config);

}

4.4 PatchPanel implementation functions

As Section 4.3 shows, AudioFlinger is essentially a thin wrapper around PatchPanel.

Next, we look at the actual PatchPanel implementation:

@ \frameworks\av\services\audioflinger\PatchPanel.cpp

AudioFlinger::PatchPanel::PatchPanel(const sp<AudioFlinger>& audioFlinger): mAudioFlinger(audioFlinger)

{}

/* List connected audio ports and their attributes */

status_t AudioFlinger::PatchPanel::listAudioPorts(unsigned int *num_ports __unused, struct audio_port *ports __unused)

{}

/* Get supported attributes for a given audio port */

status_t AudioFlinger::PatchPanel::getAudioPort(struct audio_port *port __unused)

{}

/* Connect a patch between several source and sink ports */

status_t AudioFlinger::PatchPanel::createAudioPatch(const struct audio_patch *patch, audio_patch_handle_t *handle)

{

// ... see Section 4.5, Creating an audio patch: PatchPanel::createAudioPatch()

}

status_t AudioFlinger::PatchPanel::createPatchConnections(Patch *patch, const struct audio_patch *audioPatch)

{

// ... see Section 4.6, Establishing patch connections: PatchPanel::createPatchConnections()

}

void AudioFlinger::PatchPanel::clearPatchConnections(Patch *patch)

{

// ... see Section 4.7, Clearing audio patch connections: PatchPanel::clearPatchConnections()

}

/* Disconnect a patch */

status_t AudioFlinger::PatchPanel::releaseAudioPatch(audio_patch_handle_t handle)

{

// ... see Section 4.8, Releasing an audio patch: PatchPanel::releaseAudioPatch()

}

/* Set audio port configuration */

status_t AudioFlinger::PatchPanel::setAudioPortConfig(const struct audio_port_config *config)

{

// ... see Section 4.9, Configuring an audio port: PatchPanel::setAudioPortConfig()

}

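4.5 Creating an audio patch: PatchPanel::createAudioPatch()

The main goal is to create a Patch object and store it in mPatches.

The work breaks down as follows:

1. Obtain the AudioFlinger object.

2. Limit the number of sources: normally one; only AudioPolicyManager may request a patch with two sources.

3. If the caller passes the handle of an existing patch, find that patch in mPatches, release it and delete it. This releases the audio playback or capture threads and the patch on the audio hardware interface.

4. Create a new Patch object.

5. If the type of sources[0] is AUDIO_PORT_TYPE_DEVICE:

(5.1) If a software bridge is needed (two sources, or a device-to-device patch that crosses hardware modules, or an audio HAL that cannot create patches):

(5.1.1) Two sources means the caller is AudioPolicyManager, with source 0 of type AUDIO_PORT_TYPE_DEVICE and source 1 of type AUDIO_PORT_TYPE_MIX. Look up the playback thread of that mix and store it in mPlaybackThread.

(5.1.2) With a single source, set config to AUDIO_CONFIG_INITIALIZER and output to AUDIO_IO_HANDLE_NONE, call openOutput_l() to create a new output (playback) thread, and store it in mPlaybackThread.

Then take the input device type and address, take the source's sample rate, channel mask and format (falling back to the playback thread's values when the source does not specify them), create the input thread and store it in newPatch->mRecordThread, and call createPatchConnections() to build the patch connections. That completes creation.

(5.2) Otherwise:

(5.2.1) If the sink is a MIX, get the record thread and send it a create-audio-patch config event.

(5.2.2) If not, call the HAL's createAudioPatch() to create the audio patch.

6. If the type of sources[0] is AUDIO_PORT_TYPE_MIX: get the hardware module of the source mix, collect the device types of all sinks, and look up the playback thread of the source mix. If that thread is the primary playback thread, wrap the routing in an AudioParameter and broadcast it to the record threads. Finally send the thread a create-audio-patch config event.

7. If newPatch was created successfully, allocate a unique ID for it and add it to mPatches.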
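The branching in steps 5 and 6 is easier to follow as a small decision function. The sketch below is my own condensation of that logic, not code from PatchPanel.cpp: ToyPatchRequest keeps only the fields the decision reads, ToyRoute names the four outcomes, and classify() is a hypothetical helper. It assumes the request has already passed the source-count check.

```cpp
#include <cassert>

enum class ToyPortType { Device, Mix };

// Hypothetical, trimmed-down view of a patch request: only the fields the
// routing decision looks at.
struct ToyPatchRequest {
    unsigned numSources;
    ToyPortType sourceType;  // type of sources[0]
    int sourceModule;        // hw module of sources[0]
    ToyPortType sinkType;    // type of sinks[0]
    int sinkModule;          // hw module of sinks[0]
};

enum class ToyRoute {
    SoftwareBridge,       // open threads and call createPatchConnections()
    RecordThreadEvent,    // config event sent to the record (or mmap) thread
    HalPatch,             // patch created directly by the audio HAL
    PlaybackThreadEvent,  // config event sent to the playback (or mmap) thread
};

ToyRoute classify(const ToyPatchRequest& req, bool halSupportsPatches) {
    // The sketch assumes validation already happened.
    assert(req.numSources == 1 || req.numSources == 2);

    // Step 6: a mix source is always handled by its playback thread.
    if (req.sourceType == ToyPortType::Mix) {
        return ToyRoute::PlaybackThreadEvent;
    }

    // Step 5.1: a bridge is needed for the two-source special case, or for
    // device-to-device patches the HAL cannot connect by itself.
    const bool deviceToDevice = (req.sinkType == ToyPortType::Device);
    const bool needsBridge =
            (req.numSources == 2) ||
            (deviceToDevice &&
             (req.sinkModule != req.sourceModule || !halSupportsPatches));
    if (needsBridge) {
        return ToyRoute::SoftwareBridge;
    }

    // Step 5.2: device -> mix goes to the record thread, anything else to the HAL.
    if (req.sinkType == ToyPortType::Mix) {
        return ToyRoute::RecordThreadEvent;
    }
    return ToyRoute::HalPatch;
}
```

Read this way, the software bridge is the fallback for everything the HAL or a single thread cannot connect alone.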

@ \src\frameworks\av\services\audioflinger\PatchPanel.cpp

/* Connect a patch between several source and sink ports */

status_t AudioFlinger::PatchPanel::createAudioPatch(const struct audio_patch *patch, audio_patch_handle_t *handle)

{

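// status is used throughout the function, so it has to be declared up front

status_t status = NO_ERROR;

audio_patch_handle_t halHandle = AUDIO_PATCH_HANDLE_NONE;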

// 1. Obtain the AudioFlinger object

sp<AudioFlinger> audioflinger = mAudioFlinger.promote();

ALOGV("createAudioPatch() num_sources %d num_sinks %d handle %d", patch->num_sources, patch->num_sinks, *handle);

// 2. Normally only one source is allowed; only AudioPolicyManager may create a patch with 2 sources

// limit number of sources to 1 for now or 2 sources for special cross hw module case.

// only the audio policy manager can request a patch creation with 2 sources.

if (patch->num_sources > 2) {

return INVALID_OPERATION;

}

// 3. If the handle refers to an existing patch, find it, release it and delete it (release the audio playback or capture threads and the audio hardware patch)

if (*handle != AUDIO_PATCH_HANDLE_NONE) {

for (size_t index = 0; *handle != 0 && index < mPatches.size(); index++) {

if (*handle == mPatches[index]->mHandle) {

ALOGV("createAudioPatch() removing patch handle %d", *handle);

halHandle = mPatches[index]->mHalHandle;

Patch *removedPatch = mPatches[index];

// free resources owned by the removed patch if applicable

// Release the audio playback or capture threads

// 1) if a software patch is present, release the playback and capture threads and

// tracks created. This will also release the corresponding audio HAL patches

if ((removedPatch->mRecordPatchHandle != AUDIO_PATCH_HANDLE_NONE) ||

(removedPatch->mPlaybackPatchHandle != AUDIO_PATCH_HANDLE_NONE)) {

clearPatchConnections(removedPatch);

}

// Release the patch on the audio hardware interface

// 2) if the new patch and old patch source or sink are devices from different

// hw modules, clear the audio HAL patches now because they will not be updated

// by call to create_audio_patch() below which will happen on a different HW module

if (halHandle != AUDIO_PATCH_HANDLE_NONE) {

audio_module_handle_t hwModule = AUDIO_MODULE_HANDLE_NONE;

if ((removedPatch->mAudioPatch.sources[0].type == AUDIO_PORT_TYPE_DEVICE) &&

((patch->sources[0].type != AUDIO_PORT_TYPE_DEVICE) ||

(removedPatch->mAudioPatch.sources[0].ext.device.hw_module !=

patch->sources[0].ext.device.hw_module))) {

hwModule = removedPatch->mAudioPatch.sources[0].ext.device.hw_module;

} else if ((patch->num_sinks == 0) ||

((removedPatch->mAudioPatch.sinks[0].type == AUDIO_PORT_TYPE_DEVICE) &&

((patch->sinks[0].type != AUDIO_PORT_TYPE_DEVICE) ||

(removedPatch->mAudioPatch.sinks[0].ext.device.hw_module !=

patch->sinks[0].ext.device.hw_module)))) {

// Note on (patch->num_sinks == 0): this situation should not happen as

// these special patches are only created by the policy manager but just

// in case, systematically clear the HAL patch.

// Note that removedPatch->mAudioPatch.num_sinks cannot be 0 here because

// halHandle would be AUDIO_PATCH_HANDLE_NONE in this case.

hwModule = removedPatch->mAudioPatch.sinks[0].ext.device.hw_module;

}

if (hwModule != AUDIO_MODULE_HANDLE_NONE) {

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(hwModule);

if (index >= 0) {

sp<DeviceHalInterface> hwDevice =

audioflinger->mAudioHwDevs.valueAt(index)->hwDevice();

hwDevice->releaseAudioPatch(halHandle);

}

}

}

mPatches.removeAt(index);

delete removedPatch;

break;

}

}

}

// 4. Create a new Patch object

Patch *newPatch = new Patch(patch);

==============>

| A Patch object mainly holds the audio_patch and the associated thread information:

|

| class Patch {

| public:

| explicit Patch(const struct audio_patch *patch) :

| mAudioPatch(*patch), mHandle(AUDIO_PATCH_HANDLE_NONE),

| mHalHandle(AUDIO_PATCH_HANDLE_NONE), mRecordPatchHandle(AUDIO_PATCH_HANDLE_NONE),

| mPlaybackPatchHandle(AUDIO_PATCH_HANDLE_NONE) {}

| ~Patch() {}

|

| struct audio_patch mAudioPatch;

| audio_patch_handle_t mHandle;

| // handle for audio HAL patch handle present only when the audio HAL version is >= 3.0

| audio_patch_handle_t mHalHandle;

| // below members are used by a software audio patch connecting a source device from a

| // given audio HW module to a sink device on an other audio HW module.

| sp<PlaybackThread> mPlaybackThread;

| sp<PlaybackThread::PatchTrack> mPatchTrack;

| sp<RecordThread> mRecordThread;

| sp<RecordThread::PatchRecord> mPatchRecord;

| // handle for audio patch connecting source device to record thread input.

| audio_patch_handle_t mRecordPatchHandle;

| // handle for audio patch connecting playback thread output to sink device

| audio_patch_handle_t mPlaybackPatchHandle;

| }

<==============

switch (patch->sources[0].type) {

// 5. The source port type is DEVICE

case AUDIO_PORT_TYPE_DEVICE: {

audio_module_handle_t srcModule = patch->sources[0].ext.device.hw_module;

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(srcModule);

AudioHwDevice *audioHwDevice = audioflinger->mAudioHwDevs.valueAt(index);

// manage patches requiring a software bridge

// - special patch request with 2 sources (reuse one existing output mix) OR Device to device AND

// - source HW module != destination HW module OR audio HAL does not support audio patches creation

if ((patch->num_sources == 2) || ((patch->sinks[0].type == AUDIO_PORT_TYPE_DEVICE) &&

((patch->sinks[0].ext.device.hw_module != srcModule) || !audioHwDevice->supportsAudioPatches()))) {

// (1) Two sources means the caller is AudioPolicyManager

// source 0: AUDIO_PORT_TYPE_DEVICE, source 1: AUDIO_PORT_TYPE_MIX

if (patch->num_sources == 2) {

if (patch->sources[1].type != AUDIO_PORT_TYPE_MIX ||

(patch->num_sinks != 0 && patch->sinks[0].ext.device.hw_module !=

patch->sources[1].ext.mix.hw_module)) {

ALOGW("createAudioPatch() invalid source combination");

status = INVALID_OPERATION;

goto exit;

}

// Look up the playback thread

sp<ThreadBase> thread = audioflinger->checkPlaybackThread_l(patch->sources[1].ext.mix.handle);

// Store the playback thread in mPlaybackThread.

newPatch->mPlaybackThread = (MixerThread *)thread.get();

} else {

// (2) With a single source, set config to AUDIO_CONFIG_INITIALIZER and output to AUDIO_IO_HANDLE_NONE.

audio_config_t config = AUDIO_CONFIG_INITIALIZER;

audio_devices_t device = patch->sinks[0].ext.device.type;

String8 address = String8(patch->sinks[0].ext.device.address);

audio_io_handle_t output = AUDIO_IO_HANDLE_NONE;

// Call openOutput_l() to create an output (playback) thread and store it in mPlaybackThread.

sp<ThreadBase> thread = audioflinger->openOutput_l(

patch->sinks[0].ext.device.hw_module,

&output,

&config,

device,

address,

AUDIO_OUTPUT_FLAG_NONE);

newPatch->mPlaybackThread = (PlaybackThread *)thread.get();

ALOGV("audioflinger->openOutput_l() returned %p", newPatch->mPlaybackThread.get());

}

// Get the input device type and the input device address

audio_devices_t device = patch->sources[0].ext.device.type;

String8 address = String8(patch->sources[0].ext.device.address);

audio_config_t config = AUDIO_CONFIG_INITIALIZER;

// Get the source's sample rate, channel mask and format

// open input stream with source device audio properties if provided or

// default to peer output stream properties otherwise.

if (patch->sources[0].config_mask & AUDIO_PORT_CONFIG_SAMPLE_RATE) {

config.sample_rate = patch->sources[0].sample_rate;

} else {

config.sample_rate = newPatch->mPlaybackThread->sampleRate();

}

if (patch->sources[0].config_mask & AUDIO_PORT_CONFIG_CHANNEL_MASK) {

config.channel_mask = patch->sources[0].channel_mask;

} else {

config.channel_mask =

audio_channel_in_mask_from_count(newPatch->mPlaybackThread->channelCount());

}

if (patch->sources[0].config_mask & AUDIO_PORT_CONFIG_FORMAT) {

config.format = patch->sources[0].format;

} else {

config.format = newPatch->mPlaybackThread->format();

}

audio_io_handle_t input = AUDIO_IO_HANDLE_NONE;

// Create the input (record) thread and store it in newPatch->mRecordThread

sp<ThreadBase> thread = audioflinger->openInput_l(srcModule,

&input,

&config,

device,

address,

AUDIO_SOURCE_MIC,

AUDIO_INPUT_FLAG_NONE);

newPatch->mRecordThread = (RecordThread *)thread.get();

ALOGV("audioflinger->openInput_l() returned %p inChannelMask %08x",

newPatch->mRecordThread.get(), config.channel_mask);

// Call createPatchConnections() to build the patch connections

status = createPatchConnections(newPatch, patch);

} else {

// If the sink is a MIX, get the record thread (or, failing that, the mmap thread)

if (patch->sinks[0].type == AUDIO_PORT_TYPE_MIX) {

sp<ThreadBase> thread = audioflinger->checkRecordThread_l( patch->sinks[0].ext.mix.handle);

if (thread == 0) {

thread = audioflinger->checkMmapThread_l(patch->sinks[0].ext.mix.handle);

}

// Send a create-audio-patch config event to the thread

status = thread->sendCreateAudioPatchConfigEvent(patch, &halHandle);

} else {

// Otherwise call the HAL's createAudioPatch() to create the audio patch

sp<DeviceHalInterface> hwDevice = audioHwDevice->hwDevice();

status = hwDevice->createAudioPatch(patch->num_sources,

patch->sources,

patch->num_sinks,

patch->sinks,

&halHandle);

}

}

} break;

// 6. The source port type is MIX

case AUDIO_PORT_TYPE_MIX: {

// Get the hardware module of the source mix

audio_module_handle_t srcModule = patch->sources[0].ext.mix.hw_module;

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(srcModule);

// limit to connections between devices and output streams

audio_devices_t type = AUDIO_DEVICE_NONE;

// Collect the device types of all sinks

for (unsigned int i = 0; i < patch->num_sinks; i++) {

type |= patch->sinks[i].ext.device.type;

}

// Look up the playback thread of the source mix

sp<ThreadBase> thread = audioflinger->checkPlaybackThread_l(patch->sources[0].ext.mix.handle);

if (thread == 0) {

thread = audioflinger->checkMmapThread_l(patch->sources[0].ext.mix.handle);

}

// If the thread is the primary playback thread, wrap the routing in an AudioParameter and broadcast it to the record threads.

if (thread == audioflinger->primaryPlaybackThread_l()) {

AudioParameter param = AudioParameter();

param.addInt(String8(AudioParameter::keyRouting), (int)type);

audioflinger->broacastParametersToRecordThreads_l(param.toString());

}

// Send a create-audio-patch config event to the thread

status = thread->sendCreateAudioPatchConfigEvent(patch, &halHandle);

} break;

}

exit:

// On success, allocate a unique ID for the new patch and add it to mPatches.

ALOGV("createAudioPatch() status %d", status);

if (status == NO_ERROR) {

*handle = (audio_patch_handle_t) audioflinger->nextUniqueId(AUDIO_UNIQUE_ID_USE_PATCH);

newPatch->mHandle = *handle;

newPatch->mHalHandle = halHandle;

mPatches.add(newPatch);

ALOGV("createAudioPatch() added new patch handle %d halHandle %d", *handle, halHandle);

} else {

clearPatchConnections(newPatch);

delete newPatch;

}

return status;

}

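4.6 Establishing patch connections: PatchPanel::createPatchConnections()

This function ties the input (record) thread and the output (playback) thread together.

1. Set both the source count and the sink count of a sub-patch to 1, and take the source device's audio_port_config, which carries all of its attributes.

2. Input (record) side:

- Get the record thread's audio_port_config and store it in subPatch.sinks[0].

- Set the source of that sink to AUDIO_SOURCE_MIC.

- Call createAudioPatch() to create the input-side audio patch.

- Get the playback thread's port configuration as the new source.

- Call createAudioPatch() to create the output-side audio patch.

- Create a special record track, RecordThread::PatchRecord, on the record thread and store it in mPatchRecord.

- Add the PatchRecord track to the record thread's mTracks.

3. Output (playback) side:

- Create a special playback track, PlaybackThread::PatchTrack, on the playback thread and store it in mPatchTrack.

- Add the playback track to the playback thread's mTracks.

4. Tie the playback and record tracks together.

5. Start capture on the record track and playback on the playback track.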
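One detail worth isolating is how the shared buffer size is chosen: the function computes what its own comment calls a "pseudo LCM" of the playback and record frame counts, dividing the product by the largest power of two common to both. The helper names below (trailingZeros, pseudoLcm) are mine; the arithmetic follows the function's use of __builtin_ctz, written as a portable loop.

```cpp
#include <cassert>
#include <cstddef>

// Number of trailing zero bits, i.e. the exponent of the largest power of two
// that divides n. Portable replacement for __builtin_ctz.
unsigned trailingZeros(std::size_t n) {
    assert(n != 0);
    unsigned count = 0;
    while ((n & 1u) == 0) {
        n >>= 1;
        ++count;
    }
    return count;
}

// Product of the two frame counts divided by their common power-of-two factor.
// This equals the true LCM only when the two counts share no odd factor.
std::size_t pseudoLcm(std::size_t playbackFrames, std::size_t recordFrames) {
    unsigned shift = trailingZeros(playbackFrames);
    unsigned recordShift = trailingZeros(recordFrames);
    if (recordShift < shift) {
        shift = recordShift;
    }
    return (playbackFrames * recordFrames) >> shift;
}
```

For 960 and 1024 frames the result is 15360, which is also the true LCM. For 480 and 480 the result is 7200 even though the true LCM is 480, because the shared odd factor 15 is not removed. That is why it is only a pseudo LCM: cheap to compute and always a common multiple, but not necessarily the smallest one.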

@ \src\frameworks\av\services\audioflinger\PatchPanel.cpp

status_t AudioFlinger::PatchPanel::createPatchConnections(Patch *patch, const struct audio_patch *audioPatch)

{

// Set the source and sink counts to 1 and take the source device's audio_port_config, which carries all of its attributes.

// create patch from source device to record thread input

struct audio_patch subPatch;

subPatch.num_sources = 1;

subPatch.sources[0] = audioPatch->sources[0];

subPatch.num_sinks = 1;

// Get the record thread's audio_port_config and store it in subPatch.sinks[0]

patch->mRecordThread->getAudioPortConfig(&subPatch.sinks[0]);

// Set the source of that sink to AUDIO_SOURCE_MIC

subPatch.sinks[0].ext.mix.usecase.source = AUDIO_SOURCE_MIC;

// Call createAudioPatch() to create the input-side audio patch

status_t status = createAudioPatch(&subPatch, &patch->mRecordPatchHandle);

// create patch from playback thread output to sink device

// Get the playback thread's port configuration as the source

patch->mPlaybackThread->getAudioPortConfig(&subPatch.sources[0]);

subPatch.sinks[0] = audioPatch->sinks[0];

// Call createAudioPatch() to create the output-side audio patch

status = createAudioPatch(&subPatch, &patch->mPlaybackPatchHandle);

// use a pseudo LCM between input and output framecount

size_t playbackFrameCount = patch->mPlaybackThread->frameCount();

int playbackShift = __builtin_ctz(playbackFrameCount);

size_t recordFramecount = patch->mRecordThread->frameCount();

int shift = __builtin_ctz(recordFramecount);

if (playbackShift < shift) {

shift = playbackShift;

}

// Compute the frame count of the shared buffer (the pseudo LCM of the two frame counts)

size_t frameCount = (playbackFrameCount * recordFramecount) >> shift;

ALOGV("createPatchConnections() playframeCount %zu recordFramecount %zu frameCount %zu",

playbackFrameCount, recordFramecount, frameCount);

// Create a special track to handle capture

// create a special record track to capture from record thread

uint32_t channelCount = patch->mPlaybackThread->channelCount();

audio_channel_mask_t inChannelMask = audio_channel_in_mask_from_count(channelCount);

audio_channel_mask_t outChannelMask = patch->mPlaybackThread->channelMask();

uint32_t sampleRate = patch->mPlaybackThread->sampleRate();

audio_format_t format = patch->mPlaybackThread->format();

// Create a RecordThread::PatchRecord track (a track, not a thread) on the record thread and store it in mPatchRecord.

patch->mPatchRecord = new RecordThread::PatchRecord(

patch->mRecordThread.get(),

sampleRate,

inChannelMask,

format,

frameCount,

NULL,

(size_t)0 /* bufferSize */,

AUDIO_INPUT_FLAG_NONE);

// Check that the PatchRecord initialized correctly

status = patch->mPatchRecord->initCheck();

// Add the PatchRecord track to the record thread's mTracks

patch->mRecordThread->addPatchRecord(patch->mPatchRecord);

// -----> mTracks.add(patch->mPatchRecord);

// Likewise, create a PlaybackThread::PatchTrack track on the playback thread and store it in mPatchTrack.

// create a special playback track to render to playback thread.

// this track is given the same buffer as the PatchRecord buffer

patch->mPatchTrack = new PlaybackThread::PatchTrack(

patch->mPlaybackThread.get(),

audioPatch->sources[1].ext.mix.usecase.stream,

sampleRate,

outChannelMask,

format,

frameCount,

patch->mPatchRecord->buffer(),

patch->mPatchRecord->bufferSize(),

AUDIO_OUTPUT_FLAG_NONE);

status = patch->mPatchTrack->initCheck();

// Add the playback track to the playback thread's mTracks.

patch->mPlaybackThread->addPatchTrack(patch->mPatchTrack);

// Tie the playback and record tracks together

// tie playback and record tracks together

patch->mPatchRecord->setPeerProxy(patch->mPatchTrack.get()); // record

patch->mPatchTrack->setPeerProxy(patch->mPatchRecord.get()); // playback

// Start capture on the record track and playback on the playback track

// start capture and playback

patch->mPatchRecord->start(AudioSystem::SYNC_EVENT_NONE, AUDIO_SESSION_NONE);

// ----> @\src\frameworks\av\services\audioflinger\PlaybackTracks.h

// ----> the playback track's start() ends up in Track::start(event, triggerSession);

patch->mPatchTrack->start();

return status;

}

- Track::start()

@ \src\frameworks\av\services\audioflinger\Tracks.cpp

status_t AudioFlinger::PlaybackThread::Track::start(AudioSystem::sync_event_t event __unused, audio_session_t triggerSession __unused){

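status_t status = NO_ERROR;

sp<ThreadBase> thread = mThread.promote();

if (thread != 0) {

// snapshot of the current state, tested below

track_state state = mState;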

// here the track could be either new, or restarted in both cases "unstop" the track

// initial state-stopping. next state-pausing.

// What if resume is called ?

if (state == PAUSED || state == PAUSING) {

if (mResumeToStopping) {

// happened we need to resume to STOPPING_1

mState = TrackBase::STOPPING_1;

ALOGV("PAUSED => STOPPING_1 (%d) on thread %p", mName, this);

} else {

mState = TrackBase::RESUMING;

ALOGV("PAUSED => RESUMING (%d) on thread %p", mName, this);

}

} else {

mState = TrackBase::ACTIVE;

ALOGV("? => ACTIVE (%d) on thread %p", mName, this);

}

// Get the playback thread

PlaybackThread *playbackThread = (PlaybackThread *)thread.get();

status = playbackThread->addTrack_l(this);

// -----------> inside addTrack_l():

// status = AudioSystem::startOutput(mId, track->streamType(), track->sessionId());

// track was already in the active list, not a problem

if (status == ALREADY_EXISTS) {

status = NO_ERROR;

} else {

// Acknowledge any pending flush(), so that subsequent new data isn't discarded.

// It is usually unsafe to access the server proxy from a binder thread.

// But in this case we know the mixer thread (whether normal mixer or fast mixer)

// isn't looking at this track yet: we still hold the normal mixer thread lock,

// and for fast tracks the track is not yet in the fast mixer thread's active set.

// For static tracks, this is used to acknowledge change in position or loop.

ServerProxy::Buffer buffer;

buffer.mFrameCount = 1;

(void) mAudioTrackServerProxy->obtainBuffer(&buffer, true /*ackFlush*/);

}

} else {

status = BAD_VALUE;

}

return status;

}

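4.7 Clearing audio patch connections: PatchPanel::clearPatchConnections()

- Obtain the AudioFlinger object.

- Call stop() on the patch record and the patch track.

- Call releaseAudioPatch() on the record and playback sub-patches and reset their handles to AUDIO_PATCH_HANDLE_NONE.

- Delete the patch record and patch track, and close the record and playback threads.

- Clear the patch's mPatchRecord and mPatchTrack references, along with the thread references.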

void AudioFlinger::PatchPanel::clearPatchConnections(Patch *patch)

{

// Obtain the AudioFlinger object

sp<AudioFlinger> audioflinger = mAudioFlinger.promote();

ALOGV("clearPatchConnections() patch->mRecordPatchHandle %d patch->mPlaybackPatchHandle %d",

patch->mRecordPatchHandle, patch->mPlaybackPatchHandle);

// Call stop() to halt capture and playback

if (patch->mPatchRecord != 0)

patch->mPatchRecord->stop();

if (patch->mPatchTrack != 0)

patch->mPatchTrack->stop();

// Call releaseAudioPatch() to release the sub-patches and reset their handles to AUDIO_PATCH_HANDLE_NONE

if (patch->mRecordPatchHandle != AUDIO_PATCH_HANDLE_NONE) {

releaseAudioPatch(patch->mRecordPatchHandle);

patch->mRecordPatchHandle = AUDIO_PATCH_HANDLE_NONE;

}

if (patch->mPlaybackPatchHandle != AUDIO_PATCH_HANDLE_NONE) {

releaseAudioPatch(patch->mPlaybackPatchHandle);

patch->mPlaybackPatchHandle = AUDIO_PATCH_HANDLE_NONE;

}

// Delete the patch record and patch track, and close the record and playback threads

if (patch->mRecordThread != 0) {

if (patch->mPatchRecord != 0) {

patch->mRecordThread->deletePatchRecord(patch->mPatchRecord);

}

audioflinger->closeInputInternal_l(patch->mRecordThread);

}

if (patch->mPlaybackThread != 0) {

if (patch->mPatchTrack != 0) {

patch->mPlaybackThread->deletePatchTrack(patch->mPatchTrack);

}

// if num sources == 2 we are reusing an existing playback thread so we do not close it

if (patch->mAudioPatch.num_sources != 2) {

audioflinger->closeOutputInternal_l(patch->mPlaybackThread);

}

}

// Clear the patch's references to the tracks and threads

if (patch->mRecordThread != 0) {

if (patch->mPatchRecord != 0) {

patch->mPatchRecord.clear();

}

patch->mRecordThread.clear();

}

if (patch->mPlaybackThread != 0) {

if (patch->mPatchTrack != 0) {

patch->mPatchTrack.clear();

}

patch->mPlaybackThread.clear();

}

}

4.8 Releasing an audio patch: PatchPanel::releaseAudioPatch()

@ \src\frameworks\av\services\audioflinger\PatchPanel.cpp

/* Disconnect a patch */

status_t AudioFlinger::PatchPanel::releaseAudioPatch(audio_patch_handle_t handle)

{

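// status and index are used below, so they have to be declared first

status_t status = NO_ERROR;

size_t index;

sp<AudioFlinger> audioflinger = mAudioFlinger.promote();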

for (index = 0; index < mPatches.size(); index++) {

if (handle == mPatches[index]->mHandle) {

break;

}

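}

// no patch with this handle: bail out instead of indexing past the end of mPatches

if (index == mPatches.size()) {

return BAD_VALUE;

}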

Patch *removedPatch = mPatches[index];

mPatches.removeAt(index);

struct audio_patch *patch = &removedPatch->mAudioPatch;

switch (patch->sources[0].type) {

case AUDIO_PORT_TYPE_DEVICE: {

audio_module_handle_t srcModule = patch->sources[0].ext.device.hw_module;

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(srcModule);

if (removedPatch->mRecordPatchHandle != AUDIO_PATCH_HANDLE_NONE ||

removedPatch->mPlaybackPatchHandle != AUDIO_PATCH_HANDLE_NONE) {

clearPatchConnections(removedPatch);

break;

}

if (patch->sinks[0].type == AUDIO_PORT_TYPE_MIX) {

sp<ThreadBase> thread = audioflinger->checkRecordThread_l(patch->sinks[0].ext.mix.handle);

if (thread == 0) {

thread = audioflinger->checkMmapThread_l(patch->sinks[0].ext.mix.handle);

}

status = thread->sendReleaseAudioPatchConfigEvent(removedPatch->mHalHandle);

} else {

AudioHwDevice *audioHwDevice = audioflinger->mAudioHwDevs.valueAt(index);

sp<DeviceHalInterface> hwDevice = audioHwDevice->hwDevice();

status = hwDevice->releaseAudioPatch(removedPatch->mHalHandle);

}

} break;

case AUDIO_PORT_TYPE_MIX: {

audio_module_handle_t srcModule = patch->sources[0].ext.mix.hw_module;

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(srcModule);

sp<ThreadBase> thread = audioflinger->checkPlaybackThread_l(patch->sources[0].ext.mix.handle);

if (thread == 0) {

thread = audioflinger->checkMmapThread_l(patch->sources[0].ext.mix.handle);

}

status = thread->sendReleaseAudioPatchConfigEvent(removedPatch->mHalHandle);

} break;

}

delete removedPatch;

return status;

}

4.9 Configuring an audio port: PatchPanel::setAudioPortConfig()

@ \src\frameworks\av\services\audioflinger\PatchPanel.cpp

/* Set audio port configuration */

status_t AudioFlinger::PatchPanel::setAudioPortConfig(const struct audio_port_config *config)

{

ALOGV("setAudioPortConfig");

sp<AudioFlinger> audioflinger = mAudioFlinger.promote();

// Find the hardware module that owns the port

audio_module_handle_t module;

if (config->type == AUDIO_PORT_TYPE_DEVICE) {

module = config->ext.device.hw_module;

} else {

module = config->ext.mix.hw_module;

}

ssize_t index = audioflinger->mAudioHwDevs.indexOfKey(module);

// Call the audio hardware's setAudioPortConfig() directly

AudioHwDevice *audioHwDevice = audioflinger->mAudioHwDevs.valueAt(index);

return audioHwDevice->hwDevice()->setAudioPortConfig(config);

}
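The excerpt above leaves out the error handling around the module lookup. As a minimal, self-contained sketch (the names `PortType`, `PortConfig`, `HwDevice`, `setPortConfig` and `kBadValue` are hypothetical stand-ins, not the AOSP definitions), the module handle is picked according to the port type, and a failed lookup is rejected before any device is touched:

```cpp
#include <cassert>
#include <map>

// Hypothetical stand-ins for audio_port_config and AudioHwDevice.
enum class PortType { Device, Mix };

struct PortConfig {
    PortType type;
    int deviceHwModule;  // models config->ext.device.hw_module
    int mixHwModule;     // models config->ext.mix.hw_module
};

struct HwDevice {
    int lastConfiguredModule = -1;
    int setAudioPortConfig(const PortConfig& cfg, int module) {
        (void)cfg;
        lastConfiguredModule = module;
        return 0;  // 0 models NO_ERROR
    }
};

constexpr int kBadValue = -22;  // models BAD_VALUE (-EINVAL)

// Pick the module handle by port type, then forward to the matching device.
int setPortConfig(std::map<int, HwDevice>& devices, const PortConfig& cfg) {
    const int module = (cfg.type == PortType::Device) ? cfg.deviceHwModule
                                                      : cfg.mixHwModule;
    auto it = devices.find(module);
    if (it == devices.end()) {
        return kBadValue;  // unknown module: do not dereference anything
    }
    return it->second.setAudioPortConfig(cfg, module);
}
```

The point of the sketch is only the ordering: choose the handle, validate the lookup result, then call into the device.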

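4.10 Call relationships between PatchPanel functions

Besides being called by AudioFlinger, the PatchPanel functions also call one another. Their call relationships are as follows.

@ \src\frameworks\av\services\audioflinger\PatchPanel.cpp

setAudioPortConfig is called by AudioFlinger to set a port's configuration: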

AudioFlinger::setAudioPortConfig

-----> AudioFlinger::PatchPanel::setAudioPortConfig

createAudioPatch makes the following calls, including the cleanup calls taken when a step fails:

AudioFlinger::PatchPanel::createAudioPatch

----> calls AudioFlinger::PatchPanel::createPatchConnections

----> calls sendCreateAudioPatchConfigEvent

----> on failure, calls AudioFlinger::PatchPanel::releaseAudioPatch

----> on failure, calls AudioFlinger::PatchPanel::clearPatchConnections

AudioFlinger::PatchPanel::clearPatchConnections

----> AudioFlinger::PatchPanel::releaseAudioPatch

AudioFlinger::PatchPanel::releaseAudioPatch

----> AudioFlinger::PatchPanel::clearPatchConnections

----> calls sendReleaseAudioPatchConfigEvent

4.11 Low-level implementation of create_audio_patch

@ \src\frameworks\av\services\audioflinger\Threads.cpp

status_t AudioFlinger::ThreadBase::sendCreateAudioPatchConfigEvent(const struct audio_patch *patch,audio_patch_handle_t *handle)

{

sp<ConfigEvent> configEvent = (ConfigEvent *)new CreateAudioPatchConfigEvent(*patch, *handle);

status_t status = sendConfigEvent_l(configEvent);

if (status == NO_ERROR) {

CreateAudioPatchConfigEventData *data = (CreateAudioPatchConfigEventData *)configEvent->mData.get();

*handle = data->mHandle;

}

return status;

}

// sendConfigEvent_l() must be called with ThreadBase::mLock held

// Can temporarily release the lock if waiting for a reply from processConfigEvents_l().

status_t AudioFlinger::ThreadBase::sendConfigEvent_l(sp<ConfigEvent>& event)

{

status_t status = NO_ERROR;

if (event->mRequiresSystemReady && !mSystemReady) {

event->mWaitStatus = false;

mPendingConfigEvents.add(event);

return status;

}

mConfigEvents.add(event);

ALOGV("sendConfigEvent_l() num events %zu event %d", mConfigEvents.size(), event->mType);

mWaitWorkCV.signal();

// -------> return mTrack->signal();  (annotation only; not part of sendConfigEvent_l())

mLock.unlock();

{

Mutex::Autolock _l(event->mLock);

while (event->mWaitStatus) {

if (event->mCond.waitRelative(event->mLock, kConfigEventTimeoutNs) != NO_ERROR) {

event->mStatus = TIMED_OUT;

event->mWaitStatus = false;

}

}

status = event->mStatus;

}

mLock.lock();

return status;

}

The function that processes these events:

@ \src\frameworks\av\services\audioflinger\Threads.cpp

// post condition: mConfigEvents.isEmpty()

void AudioFlinger::ThreadBase::processConfigEvents_l()

{

bool configChanged = false;

while (!mConfigEvents.isEmpty()) {

ALOGV("processConfigEvents_l() remaining events %zu", mConfigEvents.size());

sp<ConfigEvent> event = mConfigEvents[0];

mConfigEvents.removeAt(0);

switch (event->mType) {

case CFG_EVENT_PRIO: {

PrioConfigEventData *data = (PrioConfigEventData *)event->mData.get();

// FIXME Need to understand why this has to be done asynchronously

int err = requestPriority(data->mPid, data->mTid, data->mPrio, data->mForApp,

true /*asynchronous*/);

if (err != 0) {

ALOGW("Policy SCHED_FIFO priority %d is unavailable for pid %d tid %d; error %d",

data->mPrio, data->mPid, data->mTid, err);

}

} break;

case CFG_EVENT_IO: {

IoConfigEventData *data = (IoConfigEventData *)event->mData.get();

ioConfigChanged(data->mEvent, data->mPid);

} break;

case CFG_EVENT_SET_PARAMETER: {

SetParameterConfigEventData *data = (SetParameterConfigEventData *)event->mData.get();

if (checkForNewParameter_l(data->mKeyValuePairs, event->mStatus)) {

configChanged = true;

mLocalLog.log("CFG_EVENT_SET_PARAMETER: (%s) configuration changed",

data->mKeyValuePairs.string());

}

} break;

case CFG_EVENT_CREATE_AUDIO_PATCH: {

const audio_devices_t oldDevice = getDevice();

CreateAudioPatchConfigEventData *data =

(CreateAudioPatchConfigEventData *)event->mData.get();

event->mStatus = createAudioPatch_l(&data->mPatch, &data->mHandle);

const audio_devices_t newDevice = getDevice();

mLocalLog.log("CFG_EVENT_CREATE_AUDIO_PATCH: old device %#x (%s) new device %#x (%s)",

(unsigned)oldDevice, devicesToString(oldDevice).c_str(),

(unsigned)newDevice, devicesToString(newDevice).c_str());

} break;

case CFG_EVENT_RELEASE_AUDIO_PATCH: {

const audio_devices_t oldDevice = getDevice();

ReleaseAudioPatchConfigEventData *data =

(ReleaseAudioPatchConfigEventData *)event->mData.get();

event->mStatus = releaseAudioPatch_l(data->mHandle);

const audio_devices_t newDevice = getDevice();

mLocalLog.log("CFG_EVENT_RELEASE_AUDIO_PATCH: old device %#x (%s) new device %#x (%s)",

(unsigned)oldDevice, devicesToString(oldDevice).c_str(),

(unsigned)newDevice, devicesToString(newDevice).c_str());

} break;

default:

ALOG_ASSERT(false, "processConfigEvents_l() unknown event type %d", event->mType);

break;

}

{

Mutex::Autolock _l(event->mLock);

if (event->mWaitStatus) {

event->mWaitStatus = false;

event->mCond.signal();

}

}

ALOGV_IF(mConfigEvents.isEmpty(), "processConfigEvents_l() DONE thread %p", this);

}

if (configChanged) {

cacheParameters_l();

}

}
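The handshake between sendConfigEvent_l() and processConfigEvents_l() can be sketched outside AOSP with standard C++ primitives. This is a simplified model under stated assumptions, not the AudioFlinger code: `Event`, `EventQueue`, `post`, `waitReply` and `processAll` are hypothetical names, the handler is a placeholder, and (unlike the excerpt) the status is written under the event lock so that a late reply cannot overwrite a timeout.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <deque>
#include <memory>
#include <mutex>

constexpr int kNoError = 0;
constexpr int kTimedOut = -110;  // models TIMED_OUT (-ETIMEDOUT on Linux)

// Hypothetical, simplified stand-in for ConfigEvent.
struct Event {
    int type = 0;
    int status = kNoError;
    bool waitStatus = true;  // true while the sender still expects a reply
    std::mutex lock;
    std::condition_variable cond;
};

class EventQueue {
public:
    // Sender, step 1: queue the event for the worker.
    void post(const std::shared_ptr<Event>& ev) {
        std::lock_guard<std::mutex> g(mQueueLock);
        mEvents.push_back(ev);
    }

    // Sender, step 2: block until the worker replies or the timeout expires.
    int waitReply(const std::shared_ptr<Event>& ev, std::chrono::milliseconds timeout) {
        std::unique_lock<std::mutex> l(ev->lock);
        const bool replied = ev->cond.wait_for(l, timeout, [&] { return !ev->waitStatus; });
        if (!replied) {
            ev->status = kTimedOut;
            ev->waitStatus = false;  // a late worker must not overwrite the status
        }
        return ev->status;
    }

    // Worker: drain the queue; after each event, wake the waiting sender.
    void processAll() {
        for (;;) {
            std::shared_ptr<Event> ev;
            {
                std::lock_guard<std::mutex> g(mQueueLock);
                if (mEvents.empty()) return;
                ev = mEvents.front();
                mEvents.pop_front();
            }
            const int result = (ev->type == 1) ? kNoError : -1;  // placeholder handler
            std::lock_guard<std::mutex> g(ev->lock);
            if (ev->waitStatus) {
                ev->status = result;
                ev->waitStatus = false;
                ev->cond.notify_one();
            }
        }
    }

private:
    std::mutex mQueueLock;
    std::deque<std::shared_ptr<Event>> mEvents;
};
```

In a real worker thread, processAll() would run inside the thread loop after the thread is woken (the role mWaitWorkCV.signal() plays in the excerpt); the sketch leaves that wake-up out to stay short.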

@ \src\frameworks\av\services\audioflinger\Threads.cpp

status_t AudioFlinger::MixerThread::createAudioPatch_l(const struct audio_patch *patch,

audio_patch_handle_t *handle)

{

status_t status;

if (property_get_bool("af.patch_park", false /* default_value */)) {

// Park FastMixer to avoid potential DOS issues with writing to the HAL

// or if HAL does not properly lock against access.

AutoPark<FastMixer> park(mFastMixer);

status = PlaybackThread::createAudioPatch_l(patch, handle);

} else {

status = PlaybackThread::createAudioPatch_l(patch, handle);

}

return status;

}

@ \src\frameworks\av\services\audioflinger\Threads.cpp

status_t AudioFlinger::PlaybackThread::createAudioPatch_l(const struct audio_patch *patch,

audio_patch_handle_t *handle)

{

status_t status = NO_ERROR;

// store new device and send to effects

audio_devices_t type = AUDIO_DEVICE_NONE;

for (unsigned int i = 0; i < patch->num_sinks; i++) {

type |= patch->sinks[i].ext.device.type;

}

for (size_t i = 0; i < mEffectChains.size(); i++) {

mEffectChains[i]->setDevice_l(type);

}

// mPrevOutDevice is the latest device set by createAudioPatch_l(). It is not set when

// the thread is created so that the first patch creation triggers an ioConfigChanged callback

bool configChanged = mPrevOutDevice != type;

mOutDevice = type;

mPatch = *patch;

if (mOutput->audioHwDev->supportsAudioPatches()) {

sp<DeviceHalInterface> hwDevice = mOutput->audioHwDev->hwDevice();

status = hwDevice->createAudioPatch(patch->num_sources,

patch->sources,

patch->num_sinks,

patch->sinks,

handle);

} else {

char *address;

if (strcmp(patch->sinks[0].ext.device.address, "") != 0) {

//FIXME: we only support address on first sink with HAL version < 3.0

address = audio_device_address_to_parameter(

patch->sinks[0].ext.device.type,

patch->sinks[0].ext.device.address);

} else {

address = (char *)calloc(1, 1);

}

AudioParameter param = AudioParameter(String8(address));

free(address);

param.addInt(String8(AudioParameter::keyRouting), (int)type);

status = mOutput->stream->setParameters(param.toString());

*handle = AUDIO_PATCH_HANDLE_NONE;

}

if (configChanged) {

mPrevOutDevice = type;

sendIoConfigEvent_l(AUDIO_OUTPUT_CONFIG_CHANGED);

}

return status;

}
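Two details of PlaybackThread::createAudioPatch_l() are easy to miss: the sink device types are OR-ed into a single bit mask, and a HAL without audio patch support receives that mask as a `routing` key/value parameter instead. A small sketch of both, using hypothetical helper names (`combineSinkDevices`, `legacyRoutingParam`, `deviceChanged`) and plain integers in place of audio_devices_t:

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical helper: OR together the device type bits of all sinks,
// as the loop over patch->sinks does in createAudioPatch_l().
uint32_t combineSinkDevices(const std::vector<uint32_t>& sinkTypes) {
    uint32_t type = 0;  // models AUDIO_DEVICE_NONE
    for (uint32_t t : sinkTypes) {
        type |= t;
    }
    return type;
}

// Hypothetical helper: the key=value string a legacy HAL would receive
// through setParameters(). "routing" is the value of AudioParameter::keyRouting.
std::string legacyRoutingParam(uint32_t type) {
    return "routing=" + std::to_string(type);
}

// True when the combined device differs from the last one that was reported,
// which is the condition for sending AUDIO_OUTPUT_CONFIG_CHANGED.
bool deviceChanged(uint32_t prevDevice, uint32_t newDevice) {
    return prevDevice != newDevice;
}
```

For example, a patch whose sinks are the speaker (0x2) and a wired headset (0x4) yields the mask 0x6, so the legacy path would send `routing=6`.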

The call finally reaches the hardware layer:

@ \src\frameworks\av\media\libaudiohal\DeviceHalLocal.cpp

status_t DeviceHalLocal::createAudioPatch(

unsigned int num_sources,

const struct audio_port_config *sources,

unsigned int num_sinks,

const struct audio_port_config *sinks,

audio_patch_handle_t *patch) {

if (version() >= AUDIO_DEVICE_API_VERSION_3_0) {

return mDev->create_audio_patch(

mDev, num_sources, sources, num_sinks, sinks, patch);

} else {

return INVALID_OPERATION;

}

}

@ \src\hardware\qcom\audio\hal\audio_hw.c

adev->device.create_audio_patch = adev_create_audio_patch;

adev->device.release_audio_patch = adev_release_audio_patch;

int adev_create_audio_patch(struct audio_hw_device *dev,

unsigned int num_sources,

const struct audio_port_config *sources,

unsigned int num_sinks,

const struct audio_port_config *sinks,

audio_patch_handle_t *handle)

{

return audio_extn_hw_loopback_create_audio_patch(dev,

num_sources,

sources,

num_sinks,

sinks,

handle);

}

After this chain of calls, execution reaches the low-level API, where the audio patch is actually created.

@ \src\hardware\qcom\audio\hal\audio_extn\hw_loopback.c

/* API to create audio patch */

int audio_extn_hw_loopback_create_audio_patch(struct audio_hw_device *dev,

unsigned int num_sources,

const struct audio_port_config *sources,

unsigned int num_sinks,

const struct audio_port_config *sinks,

audio_patch_handle_t *handle)

{

int status = 0;

patch_handle_type_t loopback_patch_type=0x0;

loopback_patch_t loopback_patch, *active_loopback_patch = NULL;

ALOGV("%s : Create audio patch begin", __func__);

/* Use an empty patch from patch database and initialze */

active_loopback_patch = &(audio_loopback_mod->patch_db.loopback_patch[audio_loopback_mod->patch_db.num_patches]);

active_loopback_patch->patch_handle_id = PATCH_HANDLE_INVALID;

active_loopback_patch->patch_state = PATCH_INACTIVE;

active_loopback_patch->patch_stream.ip_hdlr_handle = NULL;

active_loopback_patch->patch_stream.adsp_hdlr_stream_handle = NULL;

memcpy(&active_loopback_patch->loopback_source, &sources[0], sizeof(struct audio_port_config));

memcpy(&active_loopback_patch->loopback_sink, &sinks[0], sizeof(struct audio_port_config));

/* Get loopback patch type based on source and sink ports configuration */

loopback_patch_type = get_loopback_patch_type(active_loopback_patch);

update_patch_stream_config(&active_loopback_patch->patch_stream.in_config, &active_loopback_patch->loopback_source);

update_patch_stream_config(&active_loopback_patch->patch_stream.out_config, &active_loopback_patch->loopback_sink);

// Lock patch database, create patch handle and add patch handle to the list

active_loopback_patch->patch_handle_id = (loopback_patch_type << 8 | audio_loopback_mod->patch_db.num_patches);

/* Is usecase transcode loopback? If yes, invoke loopback driver */

if ((active_loopback_patch->loopback_source.type == AUDIO_PORT_TYPE_DEVICE)&&

(active_loopback_patch->loopback_sink.type == AUDIO_PORT_TYPE_DEVICE)) {

status = create_loopback_session(active_loopback_patch);

}

// Create callback thread to listen to events from HW data path

/* Fill unique handle ID generated based on active loopback patch */

*handle = audio_loopback_mod->patch_db.loopback_patch[audio_loopback_mod->patch_db.num_patches].patch_handle_id;

audio_loopback_mod->patch_db.num_patches++;

exit_create_patch :

ALOGV("%s : Create audio patch end, status(%d)", __func__, status);

pthread_mutex_unlock(&audio_loopback_mod->lock);

return status;

}
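The handle returned to the framework is built by the expression `loopback_patch_type << 8 | num_patches`: the patch type sits above bit 8 and the database index occupies the low byte. A sketch of the encoding and its inverse follows; the helper names are hypothetical, and the `& 0xFF` mask on the index is a safeguard added here that the excerpt does not have.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helpers mirroring (loopback_patch_type << 8 | num_patches).
constexpr uint32_t makePatchHandle(uint32_t patchType, uint32_t index) {
    return (patchType << 8) | (index & 0xFFu);  // mask added in this sketch only
}

// Recover the patch type from the bits above the low byte.
constexpr uint32_t patchTypeOf(uint32_t handle) { return handle >> 8; }

// Recover the patch database index from the low byte.
constexpr uint32_t patchIndexOf(uint32_t handle) { return handle & 0xFFu; }
```

With this layout, type 2 and index 3 give the handle 0x203, and both fields can be read back from the handle alone.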

// \src\hardware\qcom\audio\hal\audio_extn\hw_loopback.c

/* Create a loopback session based on active loopback patch selected */

int create_loopback_session(loopback_patch_t *active_loopback_patch)

{

int32_t ret = 0, bits_per_sample;

struct audio_usecase *uc_info;

int32_t pcm_dev_asm_rx_id, pcm_dev_asm_tx_id;

char dummy_write_buf[64];

struct audio_device *adev = audio_loopback_mod->adev;

struct compr_config source_config, sink_config;

struct snd_codec codec;

struct audio_port_config *source_patch_config = &active_loopback_patch->loopback_source;

struct audio_port_config *sink_patch_config = &active_loopback_patch->loopback_sink;

struct stream_inout *inout = &active_loopback_patch->patch_stream;

struct adsp_hdlr_stream_cfg hdlr_stream_cfg;

struct stream_in loopback_source_stream;

ALOGD("%s: Create loopback session begin", __func__);

uc_info = (struct audio_usecase *)calloc(1, sizeof(struct audio_usecase));

uc_info->id = USECASE_AUDIO_TRANSCODE_LOOPBACK;

uc_info->type = audio_loopback_mod->uc_type;

uc_info->stream.inout = &active_loopback_patch->patch_stream;

uc_info->devices = active_loopback_patch->patch_stream.out_config.devices;

uc_info->in_snd_device = SND_DEVICE_NONE;

uc_info->out_snd_device = SND_DEVICE_NONE;

list_add_tail(&adev->usecase_list, &uc_info->list);

loopback_source_stream.source = AUDIO_SOURCE_UNPROCESSED;

loopback_source_stream.device = inout->in_config.devices;

loopback_source_stream.channel_mask = inout->in_config.channel_mask;

loopback_source_stream.bit_width = inout->in_config.bit_width;

loopback_source_stream.sample_rate = inout->in_config.sample_rate;

loopback_source_stream.format = inout->in_config.format;

memcpy(&loopback_source_stream.usecase, uc_info,sizeof(struct audio_usecase));

adev->active_input = &loopback_source_stream;

select_devices(adev, uc_info->id);

pcm_dev_asm_rx_id = platform_get_pcm_device_id(uc_info->id, PCM_PLAYBACK);

pcm_dev_asm_tx_id = platform_get_pcm_device_id(uc_info->id, PCM_CAPTURE);

ALOGD("%s: LOOPBACK PCM devices (rx: %d tx: %d) usecase(%d)",__func__, pcm_dev_asm_rx_id, pcm_dev_asm_tx_id, uc_info->id);

/* setup a channel for client <--> adsp communication for stream events */

inout->dev = adev;

inout->client_callback = loopback_stream_cb;

inout->client_cookie = active_loopback_patch;

hdlr_stream_cfg.pcm_device_id = pcm_dev_asm_rx_id;

hdlr_stream_cfg.flags = 0;

hdlr_stream_cfg.type = PCM_PLAYBACK;

ret = audio_extn_adsp_hdlr_stream_open(&inout->adsp_hdlr_stream_handle,&hdlr_stream_cfg);

if (audio_extn_ip_hdlr_intf_supported(source_patch_config->format)) {

ret = audio_extn_ip_hdlr_intf_init(&inout->ip_hdlr_handle, NULL, NULL);

}

if (source_patch_config->format == AUDIO_FORMAT_IEC61937) {

// This is needed to set a known format to DSP and handle

// any format change via ADSP event

codec.id = AUDIO_FORMAT_AC3;

}

/* Set config for compress stream open in capture path */

codec.id = get_snd_codec_id(source_patch_config->format);

codec.ch_in = audio_channel_count_from_out_mask(source_patch_config->

channel_mask);

codec.ch_out = 2; // Irrelevant for loopback case in this direction

codec.sample_rate = source_patch_config->sample_rate;

codec.format = hal_format_to_alsa(source_patch_config->format);

source_config.fragment_size = 1024;

source_config.fragments = 1;

source_config.codec = &codec;

/* Open compress stream in capture path */

active_loopback_patch->source_stream = compress_open(adev->snd_card,

pcm_dev_asm_tx_id, COMPRESS_OUT, &source_config);

if (active_loopback_patch->source_stream && !is_compress_ready(

active_loopback_patch->source_stream)) {

ALOGE("%s: %s", __func__, compress_get_error(active_loopback_patch->

source_stream));

active_loopback_patch->source_stream = NULL;

ret = -EIO;

goto exit;

} else if (active_loopback_patch->source_stream == NULL) {

ALOGE("%s: Failure to open loopback stream in capture path", __func__);

ret = -EINVAL;

goto exit;

}

/* Set config for compress stream open in playback path */

codec.id = get_snd_codec_id(sink_patch_config->format);

codec.ch_in = 2; // Irrelevant for loopback case in this direction

codec.ch_out = audio_channel_count_from_out_mask(sink_patch_config->

channel_mask);

codec.sample_rate = sink_patch_config->sample_rate;

codec.format = hal_format_to_alsa(sink_patch_config->format);

sink_config.fragment_size = 1024;

sink_config.fragments = 1;

sink_config.codec = &codec;

/* Open compress stream in playback path */

active_loopback_patch->sink_stream = compress_open(adev->snd_card,

pcm_dev_asm_rx_id, COMPRESS_IN, &sink_config);

if (active_loopback_patch->sink_stream && !is_compress_ready(

active_loopback_patch->sink_stream)) {

ALOGE("%s: %s", __func__, compress_get_error(active_loopback_patch->

sink_stream));

active_loopback_patch->sink_stream = NULL;

ret = -EIO;

goto exit;

} else if (active_loopback_patch->sink_stream == NULL) {

ALOGE("%s: Failure to open loopback stream in playback path", __func__);

ret = -EINVAL;

goto exit;

}

active_loopback_patch->patch_state = PATCH_CREATED;

if (compress_start(active_loopback_patch->source_stream) < 0) {

ALOGE("%s: Failure to start loopback stream in capture path",

__func__);

ret = -EINVAL;

goto exit;

}

/* Dummy compress_write to ensure compress_start does not fail */

compress_write(active_loopback_patch->sink_stream, dummy_write_buf, 64);

if (compress_start(active_loopback_patch->sink_stream) < 0) {

ALOGE("%s: Cannot start loopback stream in playback path",

__func__);

ret = -EINVAL;

goto exit;

}

if (audio_extn_ip_hdlr_intf_supported(source_patch_config->format) && inout->ip_hdlr_handle) {

ret = audio_extn_ip_hdlr_intf_open(inout->ip_hdlr_handle, true, inout,

USECASE_AUDIO_TRANSCODE_LOOPBACK);

}

/* Move patch state to running, now that session is set up */

active_loopback_patch->patch_state = PATCH_RUNNING;

ALOGD("%s: Create loopback session end: status(%d)", __func__, ret);

return ret;

exit:

ALOGE("%s: Problem in Loopback session creation: \

status(%d), releasing session ", __func__, ret);

release_loopback_session(active_loopback_patch);

return ret;

}

5. Call-flow analysis of Audio Patch usage scenarios

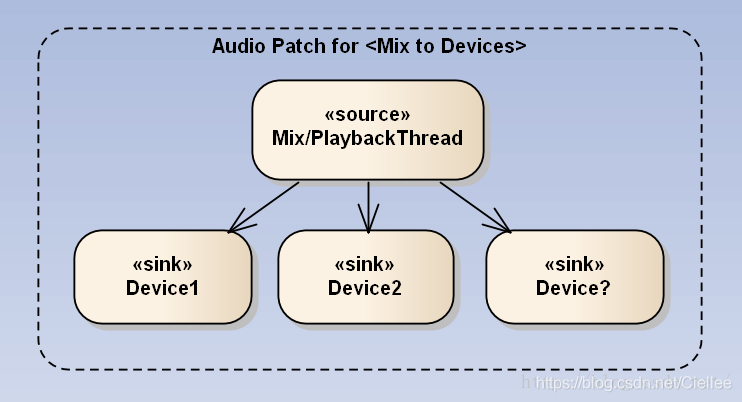

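5.1 Mix to Devices (playing a stream to multiple devices through a mix)

The figure for this scenario is from another blogger; see the end of this article for the original.

As the figure shows, the source is the mixed audio and the sinks are multiple devices.

To change this routing, it is usually enough to call setParameters when setOutputDevice runs.

The code flow is as follows: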

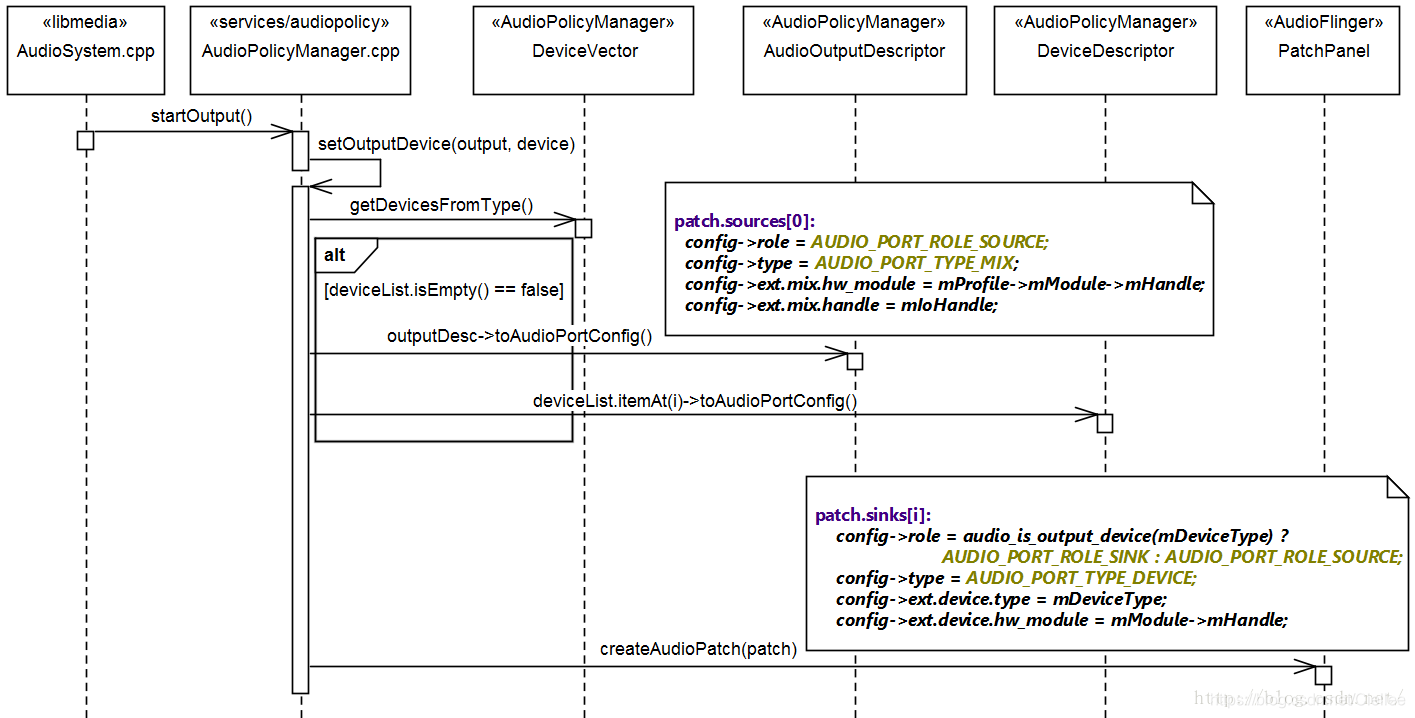

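5.1.1 Code call sequence

- AudioSystem.cpp calls the startOutput() function in IAudioPolicyService.cpp.

- The startOutput() function in IAudioPolicyService.cpp goes through Binder and ends up in the startOutput() function of AudioPolicyManager.cpp.

- In startOutput() of AudioPolicyManager.cpp:

Get the output descriptor, outputDesc.

Get the device type of the output.

Call startSource() to tie outputDesc, stream, and newDevice together.

- In startSource(), if the stream is music, the music-specific settings are applied; setOutputDevice() is then called to configure the output device, and finally the matching volume is applied.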

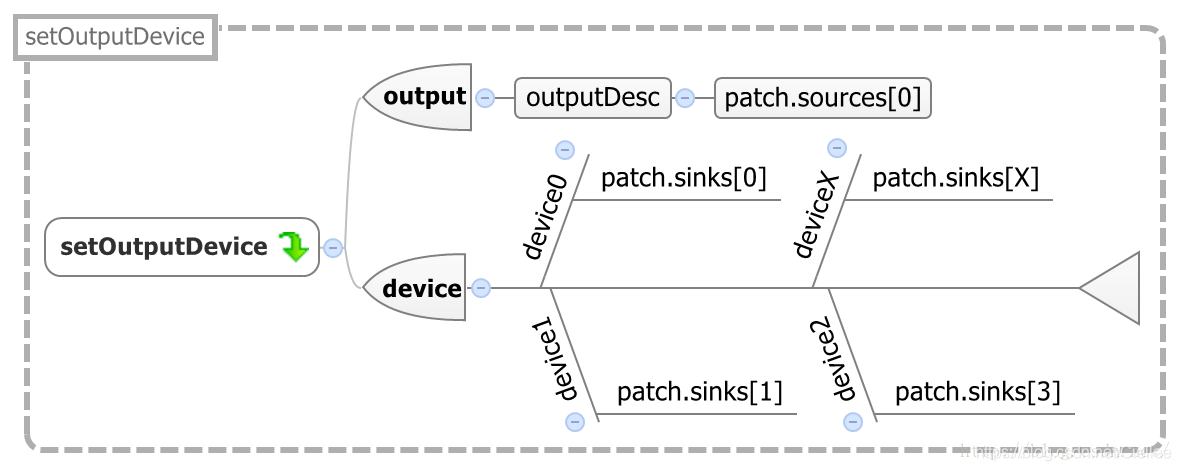

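- In setOutputDevice() of AudioPolicyManager.cpp:

Filter the requested devices down to those the output supports, save the output device type, and start changing the output device.

If a patch already exists, reuse its index; otherwise a new one is created.

Call createAudioPatch() to create the new AudioPatch and set the patch on the output descriptor,

then update the listeners, call setParameters() to configure the audio parameters, and update the volume.

As this shows, the output devices are changed in setOutputDevice() in AudioPolicyManager.cpp.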

@ \src\frameworks\av\media\libaudioclient\AudioSystem.cpp

status_t AudioSystem::startOutput(audio_io_handle_t output,

audio_stream_type_t stream,

audio_session_t session)

{

const sp<IAudioPolicyService>& aps = AudioSystem::get_audio_policy_service();

// 1. AudioSystem calls startOutput() of AudioPolicyService

return aps->startOutput(output, stream, session);

}

-------------------------------------------------------------------------------

// 2. startOutput() in the AudioPolicyService proxy goes through Binder and ends up in AudioPolicyManager's startOutput().

@ \src\frameworks\av\media\libaudioclient\IAudioPolicyService.cpp

virtual status_t startOutput(audio_io_handle_t output,

audio_stream_type_t stream,

audio_session_t session)

{

Parcel data, reply;

data.writeInterfaceToken(IAudioPolicyService::getInterfaceDescriptor());

data.writeInt32(output);

data.writeInt32((int32_t) stream);

data.writeInt32((int32_t) session);

remote()->transact(START_OUTPUT, data, &reply);

return static_cast <status_t> (reply.readInt32());

}

-------------------------------------------------------------------------------

@ \src\frameworks\av\services\audiopolicy\managerdefault\AudioPolicyManager.cpp

// 3. In AudioPolicyManager.cpp

status_t AudioPolicyManager::startOutput(audio_io_handle_t output,

audio_stream_type_t stream,

audio_session_t session)

{

ALOGV("startOutput() output %d, stream %d, session %d",output, stream, session);

ssize_t index = mOutputs.indexOfKey(output);

// Get the output descriptor, outputDesc

sp<SwAudioOutputDescriptor> outputDesc = mOutputs.valueAt(index);

// Routing?

mOutputRoutes.incRouteActivity(session);

// Get the device type of the output

audio_devices_t newDevice;

AudioMix *policyMix = NULL;

const char *address = NULL;

if (outputDesc->mPolicyMix != NULL) {

policyMix = outputDesc->mPolicyMix;

address = policyMix->mDeviceAddress.string();

if ((policyMix->mRouteFlags & MIX_ROUTE_FLAG_RENDER) == MIX_ROUTE_FLAG_RENDER) {

newDevice = policyMix->mDeviceType;

} else {

newDevice = AUDIO_DEVICE_OUT_REMOTE_SUBMIX;

}

} else if (mOutputRoutes.hasRouteChanged(session)) {

newDevice = getNewOutputDevice(outputDesc, false /*fromCache*/);

checkStrategyRoute(getStrategy(stream), output);

} else {

newDevice = AUDIO_DEVICE_NONE;

}

uint32_t delayMs = 0;

// Call startSource() to tie outputDesc, stream, and newDevice together

status_t status = startSource(outputDesc, stream, newDevice, address, &delayMs);

return status;

}
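The device choice in startOutput() has three branches: a policy mix takes priority, then a changed route, and otherwise no device change is requested. A compact sketch of that decision, with hypothetical names (`PolicyMix`, `pickNewDevice`) and constants that only model the AOSP values:

```cpp
#include <cassert>
#include <cstdint>

constexpr uint32_t kDeviceNone = 0;         // models AUDIO_DEVICE_NONE
constexpr uint32_t kRemoteSubmix = 0x8000;  // models AUDIO_DEVICE_OUT_REMOTE_SUBMIX
constexpr uint32_t kRouteFlagRender = 0x1;  // models MIX_ROUTE_FLAG_RENDER

// Hypothetical stand-in for the fields of AudioMix used here.
struct PolicyMix {
    uint32_t routeFlags;
    uint32_t deviceType;
};

// Mirrors the three branches of startOutput(). routedDevice stands in for
// the result of getNewOutputDevice().
uint32_t pickNewDevice(const PolicyMix* mix, bool routeChanged, uint32_t routedDevice) {
    if (mix != nullptr) {
        return ((mix->routeFlags & kRouteFlagRender) == kRouteFlagRender)
                   ? mix->deviceType
                   : kRemoteSubmix;
    }
    if (routeChanged) {
        return routedDevice;
    }
    return kDeviceNone;
}
```

Returning kDeviceNone does not mean "no output"; it tells startSource() that no new device was requested, so routing is only reconsidered when the stream's usage count makes it necessary.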

-------------------------------------------------------------------------------

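@ \src\frameworks\av\services\audiopolicy\managerdefault\AudioPolicyManager.cpp

// 4. In startSource(), if the stream is music, the music-specific settings are applied; setOutputDevice() is then called to configure the output device, and finally the matching volume is applied.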

status_t AudioPolicyManager::startSource(const sp<AudioOutputDescriptor>& outputDesc,

audio_stream_type_t stream,

audio_devices_t device,

const char *address,

uint32_t *delayMs)

{

// cannot start playback of STREAM_TTS if any other output is being used

uint32_t beaconMuteLatency = 0;

*delayMs = 0;

// force device change if the output is inactive and no audio patch is already present.

// check active before incrementing usage count

bool force = !outputDesc->isActive() &&

(outputDesc->getPatchHandle() == AUDIO_PATCH_HANDLE_NONE);

// increment usage count for this stream on the requested output:

// NOTE that the usage count is the same for duplicated output and hardware output which is

// necessary for a correct control of hardware output routing by startOutput() and stopOutput()

outputDesc->changeRefCount(stream, 1);

// If the stream is music, apply the music-specific settings

if (stream == AUDIO_STREAM_MUSIC) {

selectOutputForMusicEffects();

}

if (outputDesc->mRefCount[stream] == 1 || device != AUDIO_DEVICE_NONE) {

// starting an output being rerouted?

uint32_t muteWaitMs = setOutputDevice(outputDesc, device, force, 0, NULL, address);

// handle special case for sonification while in call

if (isInCall()) {

handleIncallSonification(stream, true, false);

}

// apply volume rules for current stream and device if necessary

checkAndSetVolume(stream,

mVolumeCurves->getVolumeIndex(stream, outputDesc->device()),

outputDesc,

outputDesc->device());

// update the outputs if starting an output with a stream that can affect notification routing

handleNotificationRoutingForStream(stream);

}

return NO_ERROR;

}
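The ordering inside startSource() matters: `force` is computed before the usage count is incremented, and setOutputDevice() is reached only for the first user of the stream or when an explicit device was requested. A sketch of just that logic, with a hypothetical `OutputState` and a free function that models, but is not, the AOSP method:

```cpp
#include <cassert>
#include <cstdint>

constexpr uint32_t kDeviceNone = 0;   // models AUDIO_DEVICE_NONE
constexpr int kPatchHandleNone = 0;   // models AUDIO_PATCH_HANDLE_NONE

// Hypothetical stand-in for the parts of the output descriptor used here.
struct OutputState {
    bool active;
    int patchHandle;
    int refCount;  // usage count of the stream on this output
};

struct StartResult {
    bool force;   // device change is forced
    bool routed;  // setOutputDevice() would be called
};

StartResult startSourceModel(OutputState& s, uint32_t device) {
    // Evaluated before the usage count changes, as in the excerpt.
    const bool force = !s.active && s.patchHandle == kPatchHandleNone;
    s.refCount += 1;
    const bool routed = (s.refCount == 1) || (device != kDeviceNone);
    return {force, routed};
}
```

A second client starting the same stream on an already active output, without requesting a device, therefore skips the routing step entirely.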

----------------------------------------------------------------------------------------

@ \src\frameworks\av\services\audiopolicy\managerdefault\AudioPolicyManager.cpp

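// 5. In setOutputDevice() of AudioPolicyManager.cpp

// Filter the requested devices down to those the output supports, save the output device type, and start changing the output device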

uint32_t AudioPolicyManager::setOutputDevice(const sp<AudioOutputDescriptor>& outputDesc,

audio_devices_t device,

bool force,

int delayMs,

audio_patch_handle_t *patchHandle,

const char* address)

{

ALOGV("setOutputDevice() device %04x delayMs %d", device, delayMs);

// Filter the requested devices down to those the output supports

// filter devices according to output selected

device = (audio_devices_t)(device & outputDesc->supportedDevices());

audio_devices_t prevDevice = outputDesc->mDevice;

ALOGV("setOutputDevice() prevDevice 0x%04x", prevDevice);

// Save the output device type

if (device != AUDIO_DEVICE_NONE) {

outputDesc->mDevice = device;

}

uint32_t muteWaitMs = checkDeviceMuteStrategies(outputDesc, prevDevice, delayMs);

ALOGV("setOutputDevice() changing device");

// Start changing the output device

// Get the device list

DeviceVector deviceList;

if ((address == NULL) || (strlen(address) == 0)) {

deviceList = mAvailableOutputDevices.getDevicesFromType(device);

} else {

deviceList = mAvailableOutputDevices.getDevicesFromTypeAddr(device, String8(address));

}

if (!deviceList.isEmpty()) {

struct audio_patch patch;

outputDesc->toAudioPortConfig(&patch.sources[0]); // fill in the source port configuration

patch.num_sources = 1; // exactly one source (the mix)

patch.num_sinks = 0; // sink count starts at 0 and grows in the loop below

for (size_t i = 0; i < deviceList.size() && i < AUDIO_PATCH_PORTS_MAX; i++) {

deviceList.itemAt(i)->toAudioPortConfig(&patch.sinks[i]); // 配置 输出 sinks

patch.num_sinks++;

}

ssize_t index;

// If a patch already exists, look up its index; otherwise a new patch is created

if (patchHandle && *patchHandle != AUDIO_PATCH_HANDLE_NONE) {

index = mAudioPatches.indexOfKey(*patchHandle);

} else {

index = mAudioPatches.indexOfKey(outputDesc->getPatchHandle());

}

sp< AudioPatch> patchDesc;

audio_patch_handle_t afPatchHandle = AUDIO_PATCH_HANDLE_NONE;

if (index >= 0) {

patchDesc = mAudioPatches.valueAt(index);

afPatchHandle = patchDesc->mAfPatchHandle;

}

// Call createAudioPatch() to create the new AudioPatch

status_t status = mpClientInterface->createAudioPatch(&patch,

&afPatchHandle,

delayMs);

ALOGV("setOutputDevice() createAudioPatch returned %d patchHandle %d"

"num_sources %d num_sinks %d", status, afPatchHandle, patch.num_sources, patch.num_sinks);

if (status == NO_ERROR) {

// (excerpt: the index < 0 branch, which allocates a new AudioPatch, is not shown)

patchDesc->mPatch = patch;

patchDesc->mAfPatchHandle = afPatchHandle;

if (patchHandle) {

*patchHandle = patchDesc->mHandle;

}

// Set the patch on the output descriptor and update the listeners

outputDesc->setPatchHandle(patchDesc->mHandle);

nextAudioPortGeneration();

mpClientInterface->onAudioPatchListUpdate();

}

// Call setParameters() to configure the audio parameters

// inform all input as well

for (size_t i = 0; i < mInputs.size(); i++) {

const sp<AudioInputDescriptor> inputDescriptor = mInputs.valueAt(i);

if (!is_virtual_input_device(inputDescriptor->mDevice)) {

AudioParameter inputCmd = AudioParameter();

ALOGV("%s: inform input %d of device:%d", __func__,

inputDescriptor->mIoHandle, device);

inputCmd.addInt(String8(AudioParameter::keyRouting),device);

mpClientInterface->setParameters(inputDescriptor->mIoHandle,

inputCmd.toString(),

delayMs);

}

}

}

// Update the volume

// update stream volumes according to new device

applyStreamVolumes(outputDesc, device, delayMs);

return muteWaitMs;

}
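The heart of setOutputDevice() in the Mix to Devices case is how the patch is assembled: one mix source, and one sink per matching device, capped at AUDIO_PATCH_PORTS_MAX. The sketch below models that with hypothetical types (`PortConfig`, `Patch`) and helper names; it is an illustration of the shape of the data, not the AOSP structures.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr std::size_t kPatchPortsMax = 16;  // models AUDIO_PATCH_PORTS_MAX

// Hypothetical stand-in for struct audio_port_config.
struct PortConfig {
    uint32_t id;
};

// Hypothetical stand-in for struct audio_patch.
struct Patch {
    unsigned numSources = 0;
    PortConfig sources[kPatchPortsMax];
    unsigned numSinks = 0;
    PortConfig sinks[kPatchPortsMax];
};

// One mix source, one sink per device, never more than kPatchPortsMax sinks.
Patch buildMixToDevicesPatch(PortConfig mixSource, const std::vector<PortConfig>& devices) {
    Patch patch{};
    patch.sources[0] = mixSource;
    patch.numSources = 1;
    for (std::size_t i = 0; i < devices.size() && i < kPatchPortsMax; ++i) {
        patch.sinks[i] = devices[i];
        patch.numSinks++;
    }
    return patch;
}

// Keep only the device bits that the selected output supports.
uint32_t filterDevices(uint32_t requested, uint32_t supported) {
    return requested & supported;
}
```

Devices beyond the cap are silently dropped, which matches the loop bound in the excerpt.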

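That completes the walkthrough of the patch flow in AudioFlinger; AudioFlinger initialization is mainly the initialization of AudioPatch.

This chapter has grown rather long. The next chapter is an interlude that looks in detail at the other modules and interfaces in AudioFlinger,

in order to understand the relationship between AudioPolicy and AudioFlinger and the role each one plays.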

The reference articles are listed below, with thanks!