Refs: https://docs.opencv.org/4.3.0/d3/dc1/tutorial_basic_linear_transform.html


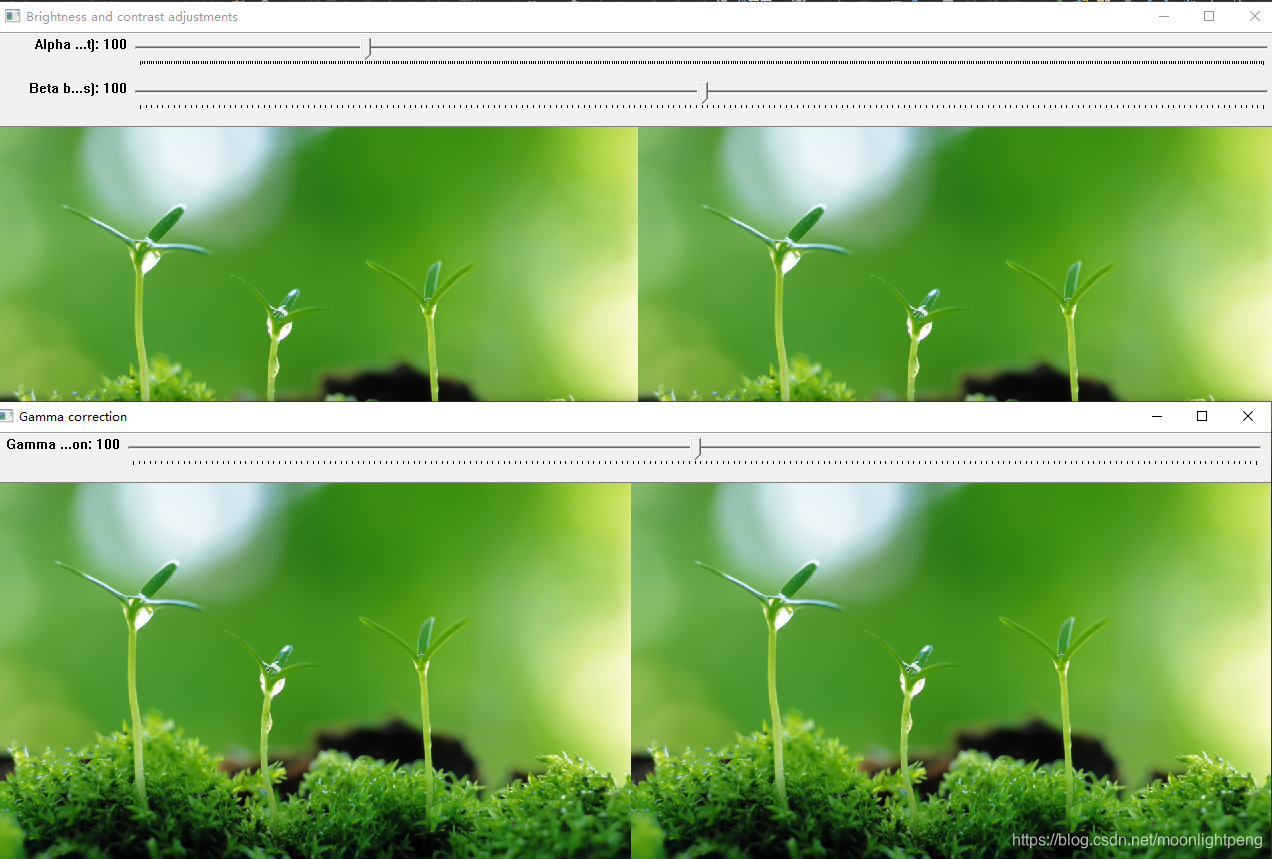

Brightness and contrast adjustments


Goal

In this tutorial you will learn how to:

- Access pixel values

- Initialize a matrix with zeros

- Learn what cv::saturate_cast does and why it is useful

- Learn some basic theory behind pixel transformations

- Improve the brightness of an image with a practical example

Brightness and contrast adjustments


Two commonly used point processes are multiplication and addition with a constant:

g(x)=αf(x)+β

The parameters α>0 and β are often called the gain and bias parameters; sometimes these parameters are said to control contrast and brightness respectively.

You can think of f(x) as the source image pixels and g(x) as the output image pixels. Then, more conveniently, we can write the expression as:

g(i,j)=α⋅f(i,j)+β

where i and j indicate that the pixel is located in the i-th row and j-th column.
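To see why α is associated with contrast and β with brightness, here is a small numeric sketch in plain Python (the helper name `point_transform` and the pixel values are made up for illustration). α scales the difference between two pixel values, while β shifts both by the same amount. Saturation is ignored here because all results stay inside [0, 255].

```python
def point_transform(f, alpha, beta):
    """Apply g = alpha * f + beta to a single pixel value (no clamping)."""
    return alpha * f + beta

# Two neighbouring pixel intensities, 20 levels apart
p, q = 100, 120

# Gain: alpha = 2 doubles the difference between them (more contrast)
g_p, g_q = point_transform(p, 2.0, 0), point_transform(q, 2.0, 0)
print(g_p, g_q, g_q - g_p)   # 200.0 240.0 40.0

# Bias: beta = 50 shifts both values up, leaving the difference unchanged (brighter)
b_p, b_q = point_transform(p, 1.0, 50), point_transform(q, 1.0, 50)
print(b_p, b_q, b_q - b_p)   # 150.0 170.0 20.0
```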

Note

Instead of using for loops to access each pixel, we could simply use this command:

```cpp
image.convertTo(new_image, -1, alpha, beta);
```

where cv::Mat::convertTo effectively performs `new_image = alpha*image + beta` with saturation (the `-1` keeps the output type the same as the input). However, we wanted to show you how to access each pixel. Both methods give the same result, but convertTo is more optimized and much faster.
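The following is a minimal numpy sketch (not OpenCV code) of the point made in this note: a per-pixel triple loop and a single whole-array expression compute the same result, and the whole-array form is the analogue of calling one optimized routine such as convertTo. Assumptions: both hypothetical helpers round to the nearest integer and then clamp to [0, 255], which approximates the saturating conversion; the random test image is arbitrary.

```python
import numpy as np

def linear_transform_loop(image, alpha, beta):
    """Per-pixel loop, mirroring the nested for loops in the tutorial."""
    out = np.zeros(image.shape, image.dtype)
    rows, cols, channels = image.shape
    for y in range(rows):
        for x in range(cols):
            for c in range(channels):
                v = alpha * float(image[y, x, c]) + beta
                # round to nearest, then clamp to the uint8 range
                out[y, x, c] = min(max(round(v), 0), 255)
    return out

def linear_transform_vectorized(image, alpha, beta):
    """Whole-array version: one expression instead of three loops."""
    v = alpha * image.astype(np.float64) + beta
    return np.clip(np.rint(v), 0, 255).astype(np.uint8)

rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(4, 5, 3), dtype=np.uint8)
a = linear_transform_loop(img, 1.7, 30)
b = linear_transform_vectorized(img, 1.7, 30)
print(np.array_equal(a, b))  # True
```

On real images the vectorized form is typically much faster because the arithmetic runs in compiled code instead of the Python interpreter, which is the same reason the note recommends convertTo over hand-written loops.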

To perform the operation g(i,j)=α⋅f(i,j)+β, we access each pixel in the image. Since we are operating on BGR images, each pixel has three values (B, G and R), so we also access them separately. Here is the piece of code:

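```cpp
// new_image must already exist with the same size and type as image, e.g.
// Mat new_image = Mat::zeros( image.size(), image.type() );
for( int y = 0; y < image.rows; y++ ) {
    for( int x = 0; x < image.cols; x++ ) {
        for( int c = 0; c < image.channels(); c++ ) {
            new_image.at<Vec3b>(y,x)[c] =
              saturate_cast<uchar>( alpha*image.at<Vec3b>(y,x)[c] + beta );
        }
    }
}
```

Notice the following (C++ code only):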

- To access each pixel in the images we are using this syntax: image.at<Vec3b>(y,x)[c] where y is the row, x is the column and c is B, G or R (0, 1 or 2).

- Since the operation α⋅f(i,j)+β can produce values that are out of range or not integers (if α is a float), we use cv::saturate_cast to make sure the values are valid: for uchar it rounds to the nearest integer and clamps the result to 0..255.
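As a rough model of what cv::saturate_cast<uchar> does with a double, here is a plain Python sketch: round to the nearest integer, then clamp to [0, 255]. The helper name `saturate_uchar` is hypothetical, and exact .5 ties are avoided because OpenCV's rounding of ties can depend on the platform. The sketch also shows a subtle difference from the tutorial's Python loop: assigning an `np.clip` result into a uint8 array truncates the fractional part instead of rounding, so the C++ and Python versions can differ by 1.

```python
import numpy as np

def saturate_uchar(value):
    """Approximate cv::saturate_cast<uchar>(double): round, then clamp to 0..255."""
    return int(min(max(round(value), 0), 255))

print(saturate_uchar(300.2))   # 255  (too large, clamped)
print(saturate_uchar(-12.7))   # 0    (negative, clamped)
print(saturate_uchar(127.6))   # 128  (rounded, not truncated)

# For comparison: storing a clipped float in a uint8 array truncates instead
arr = np.zeros(1, np.uint8)
arr[0] = np.clip(127.6, 0, 255)
print(arr[0])                  # 127
```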

C++

```cpp
#include <cmath>      // std::pow
#include <iostream>
#include <opencv2/opencv.hpp>

using namespace cv;

// we're NOT "using namespace std;" here, to avoid collisions between the beta variable and std::beta in c++17
using std::cin;
using std::cout;
using std::endl;

namespace
{
/** Global Variables */
int alpha = 100;      // trackbar position; gain = alpha / 100.0
int beta = 100;       // trackbar position; bias = beta - 100
int gamma_cor = 100;  // trackbar position; gamma = gamma_cor / 100.0
Mat img_original, img_corrected, img_gamma_corrected;

// Linear transform using the built-in Mat::convertTo (fast path)
void basicLinearTransform(const Mat &img, const double alpha_, const int beta_)
{
    Mat res;
    img.convertTo(res, -1, alpha_, beta_);
    hconcat(img, res, img_corrected);
    imshow("Brightness and contrast adjustments", img_corrected);
}

void gammaCorrection(const Mat &img, const double gamma_)
{
    CV_Assert(gamma_ >= 0);
    //! [changing-contrast-brightness-gamma-correction]
    // Precompute the 256 possible output values once, then map every pixel through the table
    Mat lookUpTable(1, 256, CV_8U);
    uchar* p = lookUpTable.ptr();
    for (int i = 0; i < 256; ++i)
        p[i] = saturate_cast<uchar>(std::pow(i / 255.0, gamma_) * 255.0);

    Mat res = img.clone();
    LUT(img, lookUpTable, res);
    //! [changing-contrast-brightness-gamma-correction]

    hconcat(img, res, img_gamma_corrected);
    imshow("Gamma correction", img_gamma_corrected);
}

// Same linear transform with explicit per-pixel loops (expects an 8-bit, 3-channel image)
void alpha_beta_value(const Mat &image, const double alpha_, const int beta_)
{
    Mat new_image = Mat::zeros(image.size(), image.type());
    for (int y = 0; y < image.rows; y++) {
        for (int x = 0; x < image.cols; x++) {
            for (int c = 0; c < image.channels(); c++) {
                new_image.at<Vec3b>(y, x)[c] =
                    saturate_cast<uchar>(alpha_*image.at<Vec3b>(y, x)[c] + beta_);
            }
        }
    }
    hconcat(image, new_image, img_corrected);
    imshow("Brightness and contrast adjustments", img_corrected);
}

void on_linear_transform_alpha_trackbar(int, void *)
{
    double alpha_value = alpha / 100.0;
    int beta_value = beta - 100;
    //basicLinearTransform(img_original, alpha_value, beta_value);
    alpha_beta_value(img_original, alpha_value, beta_value);
}

void on_linear_transform_beta_trackbar(int, void *)
{
    double alpha_value = alpha / 100.0;
    int beta_value = beta - 100;
    //basicLinearTransform(img_original, alpha_value, beta_value);
    alpha_beta_value(img_original, alpha_value, beta_value);
}

void on_gamma_correction_trackbar(int, void *)
{
    double gamma_value = gamma_cor / 100.0;
    gammaCorrection(img_original, gamma_value);
}
}

int main(int argc, char** argv)
{
    img_original = imread("Grass.jpg", IMREAD_COLOR);
    //Mat image_grass = imread("Grass.jpg", IMREAD_GRAYSCALE);
    if (img_original.empty())
    {
        cout << "No data" << endl;
        return -1;
    }

    //pyrDown(img_original, img_original, Size(img_original.cols / 3, img_original.rows / 3));
    // Shrink the image: pyrDown halves each dimension, then resize scales by a further 1/1.5
    pyrDown(img_original, img_original);
    resize(img_original, img_original,
           Size(static_cast<int>(img_original.cols / 1.5), static_cast<int>(img_original.rows / 1.5)),
           0, 0, INTER_NEAREST);

    img_corrected = Mat(img_original.rows, img_original.cols * 2, img_original.type());
    img_gamma_corrected = Mat(img_original.rows, img_original.cols * 2, img_original.type());
    hconcat(img_original, img_original, img_corrected);
    hconcat(img_original, img_original, img_gamma_corrected);

    // Just as hconcat() joins two images horizontally, vconcat() joins them vertically
    //Mat img_vtest = Mat(img_original.rows * 2, img_original.cols, img_original.type());
    //vconcat(img_original, img_original, img_vtest);
    //imshow("image1", img_corrected);
    //imshow("image2", img_vtest);
    //waitKey();

    namedWindow("Brightness and contrast adjustments");
    namedWindow("Gamma correction");
    createTrackbar("Alpha gain (contrast)", "Brightness and contrast adjustments", &alpha, 500, on_linear_transform_alpha_trackbar);
    createTrackbar("Beta bias (brightness)", "Brightness and contrast adjustments", &beta, 200, on_linear_transform_beta_trackbar);
    createTrackbar("Gamma correction", "Gamma correction", &gamma_cor, 200, on_gamma_correction_trackbar);

    on_linear_transform_alpha_trackbar(0, 0);
    on_gamma_correction_trackbar(0, 0);

    waitKey();

    imwrite("linear_transform_correction.png", img_corrected);
    imwrite("gamma_correction.png", img_gamma_corrected);
    return 0;
}
```
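The gammaCorrection function in the program above is not explained in the text. It builds a 256-entry lookup table, output = (i / 255)^γ · 255, and maps every pixel through it, so γ < 1 brightens dark and mid tones while γ > 1 darkens them. Below is a numpy sketch of the same idea; the helper names are hypothetical, and indexing the table with the uint8 image stands in for what cv::LUT does with 8-bit input.

```python
import numpy as np

def gamma_lut(gamma):
    """256-entry table: round((i / 255) ** gamma * 255), clamped to 0..255."""
    if gamma < 0:
        raise ValueError("gamma must be >= 0")
    i = np.arange(256, dtype=np.float64)
    table = np.power(i / 255.0, gamma) * 255.0
    return np.clip(np.rint(table), 0, 255).astype(np.uint8)

def apply_gamma(image, gamma):
    """Map every uint8 pixel through the table (same table for every channel)."""
    return gamma_lut(gamma)[image]

img = np.array([[0, 64, 128, 255]], dtype=np.uint8)
print(apply_gamma(img, 0.5))  # gamma < 1 brightens mid-tones
print(apply_gamma(img, 2.0))  # gamma > 1 darkens them
```

Precomputing the table means the expensive `pow` is evaluated only 256 times, no matter how many pixels the image has; this is the design choice behind using a lookup table in the C++ version.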

Python

```python
from __future__ import print_function
from builtins import input
import cv2 as cv
import numpy as np
import argparse

# Read image given by user
## [basic-linear-transform-load]
parser = argparse.ArgumentParser(description='Code for Changing the contrast and brightness of an image! tutorial.')
parser.add_argument('--input', help='Path to input image.', default='lena.jpg')
args = parser.parse_args()

image = cv.imread(cv.samples.findFile(args.input))
if image is None:
    print('Could not open or find the image: ', args.input)
    exit(0)
## [basic-linear-transform-load]

## [basic-linear-transform-output]
new_image = np.zeros(image.shape, image.dtype)
## [basic-linear-transform-output]

## [basic-linear-transform-parameters]
alpha = 1.0 # Simple contrast control
beta = 0    # Simple brightness control

# Initialize values
print(' Basic Linear Transforms ')
print('-------------------------')
try:
    alpha = float(input('* Enter the alpha value [1.0-3.0]: '))
    beta = int(input('* Enter the beta value [0-100]: '))
except ValueError:
    print('Error, not a number')
## [basic-linear-transform-parameters]

# Do the operation new_image(i,j) = alpha*image(i,j) + beta
# Instead of these 'for' loops we could have used simply:
# new_image = cv.convertScaleAbs(image, alpha=alpha, beta=beta)
# but we wanted to show you how to access the pixels :)
# (convertScaleAbs rounds and takes the absolute value before saturating, whereas this
# loop truncates and clips negatives to 0, so results can differ slightly)
## [basic-linear-transform-operation]
for y in range(image.shape[0]):
    for x in range(image.shape[1]):
        for c in range(image.shape[2]):
            new_image[y,x,c] = np.clip(alpha*image[y,x,c] + beta, 0, 255)
## [basic-linear-transform-operation]

## [basic-linear-transform-display]
# Show stuff
cv.imshow('Original Image', image)
cv.imshow('New Image', new_image)

# Wait until the user presses a key
cv.waitKey()
```

Practical example

https://docs.opencv.org/4.3.0/d3/dc1/tutorial_basic_linear_transform.html