I previously wrote a blog post about crawling the 3GPP R13 specifications with a single-process Python 3.5 script:

https://blog.csdn.net/m0_37509180/article/details/74800553

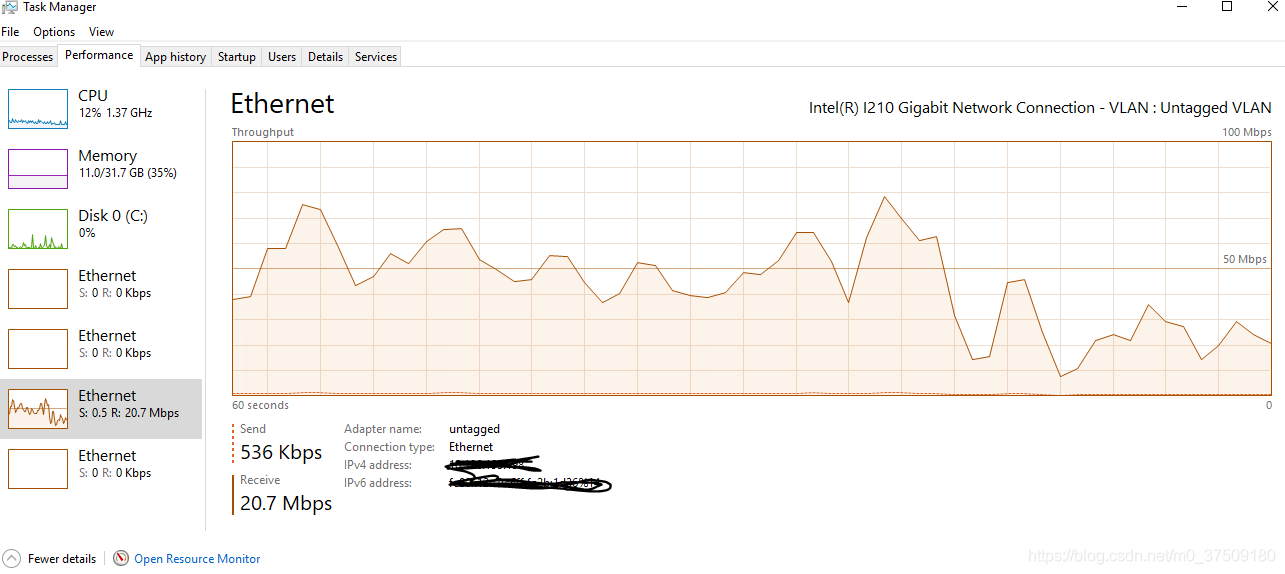

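That script does download the specs, but it is very slow: every update takes half a day. I have now written a multiprocessing-based crawler for Python 3.7. It downloads each spec series in its own worker process, so the series are fetched in parallel, and it is much faster!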
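
Most of a crawl like this is spent waiting on the network, so the speedup comes from having many downloads in flight at once. The sketch below shows the same fan-out pattern using the standard library's `concurrent.futures.ThreadPoolExecutor`. This is a swapped-in alternative to the `multiprocessing.Pool` used in this post, because threads are usually sufficient for I/O-bound work. `download_all` and `fetch` are hypothetical names. A bare `apply_async` call whose result is never read loses any exception raised in the worker; this sketch instead collects each task's exception so the failure gets reported.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def download_all(links, fetch, max_workers=8):
    """Call fetch(link) for every link on a thread pool (hypothetical helper).

    Returns (results, errors), two dicts keyed by link, so a failed
    download is reported instead of disappearing silently.
    """
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Submit every task up front, then collect them as they finish
        futures = {pool.submit(fetch, link): link for link in links}
        for future in as_completed(futures):
            link = futures[future]
            try:
                results[link] = future.result()
            except Exception as exc:
                # Record the failure and keep collecting the other tasks
                errors[link] = exc
    return results, errors


if __name__ == "__main__":
    def fake_fetch(link):
        # Hypothetical stand-in for the real per-series download
        if link.endswith("bad/"):
            raise OSError("simulated network error")
        return link.split("/")[-2]

    ok, failed = download_all(
        ["http://example.invalid/21_series/", "http://example.invalid/bad/"],
        fake_fetch,
        max_workers=2,
    )
    print(ok)
    print(failed)
```

To reproduce the one-task-per-series layout, you would pass the script's `download` function as `fetch` and `series_url` as `links`.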

The source code is as follows:

    from urllib.request import urlopen, urlretrieve
    import os
    import re
    from multiprocessing import Pool

    from bs4 import BeautifulSoup

    start_url = "http://www.3gpp.org/ftp/Specs//archive/"
    out_dir = os.path.join(".", "3gpp")


    def get_soup(url):
        # Fetch a directory listing page and parse it
        with urlopen(url) as f:
            return BeautifulSoup(f.read().decode('utf-8'), 'html.parser')


    def download(seriesLink):
        seriesName = seriesLink.split('/')[-2]
        print("seriesName:" + seriesName)
        soupSeries = get_soup(seriesLink)
        for fileLink in soupSeries.find_all('a'):
            # .string is None for tags with nested markup, so fall back to ''
            res1 = re.search(r'\d\d\.[0-9]*[\s\S]*', fileLink.string or '')
            if not res1:
                continue
            specLink = seriesLink + res1.group() + "/"
            print("fileLink:" + specLink)
            try:
                links = get_soup(specLink).find_all('a')
                # The first link is the parent directory; the last one is
                # taken to be the newest version of the spec
                if len(links) < 2 or not links[-1].string:
                    continue
                fileName = str(links[-1].string)
                target = os.path.join(out_dir, seriesName, fileName)
                if os.path.exists(target):
                    continue  # already downloaded in an earlier run
                downloadLink = specLink + fileName
                print("downloadLink:" + downloadLink)
                urlretrieve(downloadLink, target)
            except Exception as e:
                # Log the failure and move on to the next spec
                print("failed:", specLink, e)


    if __name__ == "__main__":
        # Build the task list only in the parent process: on Windows each
        # worker re-imports this module, so top-level code would rerun there
        series_url = []
        for link in get_soup(start_url).find_all('a'):
            res = re.search(r'\d\d_series', link.string or '')
            if res:
                seriesName = res.group()
                os.makedirs(os.path.join(out_dir, seriesName), exist_ok=True)
                seriesLink = start_url + seriesName + "/"
                series_url.append(seriesLink)
                print("seriesLink:" + seriesLink)
        print("series_url length:" + str(len(series_url)))

        # One worker process per series
        p = Pool(len(series_url))
        results = [p.apply_async(download, args=(s,)) for s in series_url]
        p.close()
        for r in results:
            r.get()  # re-raises any exception that escaped a worker
        p.join()
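
You can drop the BeautifulSoup dependency, or check the link-selection rules offline without touching 3gpp.org, by expressing the same rules with the standard library's `html.parser`. The following is only a sketch. `LinkCollector`, `link_texts`, `series_names` and `latest_file` are hypothetical names. It also inherits the script's assumption that the last link on a spec page is the newest version.

```python
import re
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the visible text of every <a> tag in a page (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.texts = []
        self._in_link = False
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._in_link = True
            self._buf = []

    def handle_data(self, data):
        # Only keep text that sits inside an <a> element
        if self._in_link:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._in_link:
            self.texts.append("".join(self._buf).strip())
            self._in_link = False


def link_texts(html):
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.texts


def series_names(html):
    """Series folder names such as '21_series' on the archive index page."""
    names = []
    for text in link_texts(html):
        m = re.search(r"\d\d_series", text)
        if m:
            names.append(m.group())
    return names


def latest_file(html):
    """Last link text on a spec page, or None if there is only one link.

    Assumes, like the script, that the first link is the parent
    directory and the last one is the newest version.
    """
    texts = link_texts(html)
    return texts[-1] if len(texts) >= 2 else None
```

Because these helpers take the page HTML as a string, you can test them against saved copies of the listing pages before running a full crawl.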