Copyright notice: This is an original article by the author and may not be reproduced without the author's permission. https://blog.csdn.net/ZZZJX7/article/details/52860161

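There has been a lot going on at work lately and my mind has been a bit scattered, so it feels like time to settle down and focus.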

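I had been wanting a script to crawl the vendor list on Butian (补天) for a while, and I happened to come across a forum post that did this. I modified it, so call this a derivative work: I added multiprocessing and automatic detection of the last page number.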

#coding=utf-8
# Note: this is a Python 2 script (print statement, reload(sys)).
import sys
reload(sys)
sys.setdefaultencoding("utf-8")
import multiprocessing
import time
import requests as req
import re
import lxml
from bs4 import BeautifulSoup

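def Spide(url):
    # Fetch one listing page and append every vendor name found to 360.txt.
    try:
        html=req.get(url,timeout=60).text
        print url
        html=html.encode('utf-8')
        # Each vendor name sits in a left-aligned <td> cell.
        pat='<td align="left" style="padding-left:20px;">.*</td>'
        u=re.compile(pat)
        ress=u.findall(html)
        res=[]
        for i in ress:
            # Keep only the part between the tags, e.g. ">name<".
            u=re.compile('>.*<')
            res+=u.findall(i)
        for i in res:
            a=i.strip('<>')
            #print a
            with open('360.txt','a+') as f:
                f.write(a+'\n')
    except Exception,e:
        # Errors (timeouts, bad responses) are ignored, so a failed page is skipped silently.
        pass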

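def get_page(url):
    # Read the last page number from the final pagination link.
    # Returns None if the request does not succeed.
    a = req.get(url)
    if a.status_code == 200:
        soup = BeautifulSoup(a.text,"lxml")
        pages = soup.select("div.pages > a")[-1].get('href').split('/')[-1]
        return pages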

if __name__ == "__main__":
    pool = multiprocessing.Pool(processes=4)
    url_list=[]
    url='https://butian.360.cn/company/lists/page/1'
    page=int(get_page(url))
    # range() excludes its end value, so add 1 to include the last page.
    for i in range(1,page+1):
        url='https://butian.360.cn/company/lists/page/'+str(i)
        url_list.append(url)
    # Crawl the pages in parallel with 4 worker processes.
    pool.map(Spide,url_list)
    pool.close()
    pool.join()

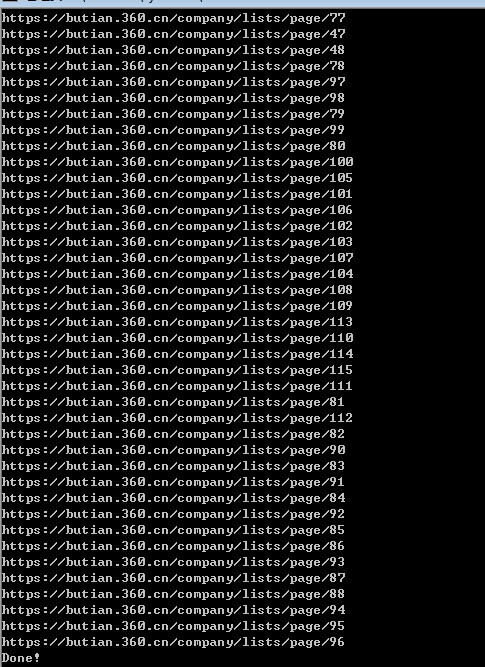

print("Done!")效果如下,感觉还可以:
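
The two pieces of logic the script relies on, building the page list from the last page number and pulling names out of table cells, can be tried without touching the network. Below is a minimal Python 3 sketch (the script itself is Python 2). The function names `build_page_urls` and `extract_names`, the sample HTML, and the non-greedy pattern are assumptions made for illustration; they are not the author's code, and the site's real markup may differ.

```python
import re

# URL prefix taken from the script.
BASE_URL = "https://butian.360.cn/company/lists/page/"

# Non-greedy variant of the script's pattern: the capture group returns the
# cell text directly, and it still works if several cells share one line.
CELL_RE = re.compile(r'<td align="left" style="padding-left:20px;">(.*?)</td>')


def build_page_urls(last_page):
    """Return listing URLs for pages 1..last_page, inclusive."""
    return [BASE_URL + str(n) for n in range(1, last_page + 1)]


def extract_names(html):
    """Return the stripped text of every matching table cell."""
    return [name.strip() for name in CELL_RE.findall(html)]


# Hypothetical sample markup, only for demonstration.
SAMPLE_HTML = (
    '<tr><td align="left" style="padding-left:20px;">Example Vendor A</td>'
    '<td align="left" style="padding-left:20px;">Example Vendor B</td></tr>'
)

if __name__ == "__main__":
    print(build_page_urls(3))
    print(extract_names(SAMPLE_HTML))
```

With a capture group, the second `>.*<` pass and the `strip('<>')` call are no longer needed, and the inclusive range makes sure the last page is not skipped.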