Normally, GPU memory is released as soon as the process that was using it stops.

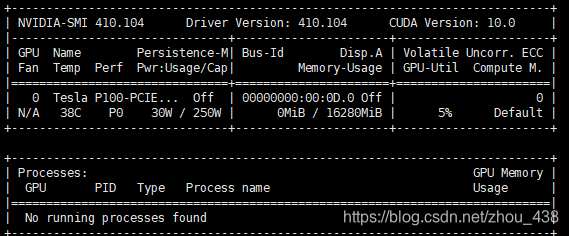

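However, if a process is terminated abnormally, its GPU memory may not be released. In that case `nvidia-smi` reports the memory as almost fully used even though its process list is empty: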

    Mon Oct 19 16:00:00 2020
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 410.104      Driver Version: 410.104      CUDA Version: 10.0     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |===============================+======================+======================|
    |   0  Tesla P100-PCIE...  Off  | 00000000:00:0D.0 Off |                    0 |
    | N/A   38C    P0    35W / 250W |  16239MiB / 16280MiB |      0%      Default |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                       GPU Memory |
    |  GPU       PID   Type   Process name                             Usage      |
    |=============================================================================|
    +-----------------------------------------------------------------------------+
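The same symptom can be checked from a script rather than by eye. The following is only a minimal sketch, assuming a single-GPU machine and a driver whose `nvidia-smi` supports the `--query-gpu` and `--query-compute-apps` options; the function names `looks_orphaned` and `check_gpu_memory` are hypothetical and not part of any tool.

```shell
# Hypothetical helper: succeeds when memory is in use but no compute
# process is listed.
# Arguments: used memory in MiB, number of listed compute processes.
looks_orphaned() {
    [ "$1" -gt 0 ] && [ "$2" -eq 0 ]
}

# Hypothetical wrapper: query the first GPU and report what was found.
# Assumption: one GPU, and only compute processes matter here.
check_gpu_memory() {
    local used apps
    # Used memory of the first GPU as a bare number of MiB.
    used=$(nvidia-smi --query-gpu=memory.used --format=csv,noheader,nounits | head -n 1)
    # One output line per compute process; no lines means none are listed.
    apps=$(nvidia-smi --query-compute-apps=pid --format=csv,noheader | wc -l)
    if looks_orphaned "$used" "$apps"; then
        echo "GPU memory in use (${used} MiB) but no compute process listed"
    else
        echo "GPU memory usage looks consistent"
    fi
}
```

Calling `check_gpu_memory` on the machine above would be expected to report the mismatch. Some setups show a few MiB in use even when idle, so the zero threshold may need adjusting.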

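The fix, of course, is to kill the processes that are still holding the GPU memory. Before you can kill them you have to find them, which `fuser` does by listing every process that has the NVIDIA device files open: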

    fuser -v /dev/nvidia*
                         USER        PID ACCESS COMMAND
    /dev/nvidia0:        root      26031 F...m python
                         root      26035 F...m python
                         root      26041 F...m python
                         root      26050 F...m python
                         root      32512 F...m ZMQbg/1
    /dev/nvidiactl:      root      26031 F...m python
                         root      26035 F...m python
                         root      26041 F...m python
                         root      26050 F...m python
                         root      32512 F.... ZMQbg/1
    /dev/nvidia-uvm:     root      26031 F.... python
                         root      26035 F.... python
                         root      26041 F.... python
                         root      26050 F.... python
                         root      32512 F.... ZMQbg/1

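Then kill a process with `kill -9 26031` so that it releases its resources. Every process found by the query above has to be killed this way, one after another.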
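Killing the processes one by one can be wrapped in a small loop. This is a sketch, not a definitive script: the helper names `extract_gpu_pids` and `kill_gpu_pids` are hypothetical, and it assumes the `fuser -v` output has the column layout shown above (the first purely numeric field on each line is the PID). Standard error is merged into standard output because the verbose table may be written there.

```shell
# Hypothetical helper: read `fuser -v` style output on stdin and print
# each distinct PID once, in ascending order.
extract_gpu_pids() {
    # Take the first purely numeric field of every line; the header
    # line has none and is therefore skipped.
    awk '{ for (i = 1; i <= NF; i++) if ($i ~ /^[0-9]+$/) { print $i; break } }' | sort -un
}

# Hypothetical helper: kill every process that holds an NVIDIA device file.
# Needs enough privileges to see and signal those processes.
kill_gpu_pids() {
    local pid
    for pid in $(fuser -v /dev/nvidia* 2>&1 | extract_gpu_pids); do
        echo "killing $pid"
        kill -9 "$pid"
    done
}
```

Running `fuser -v /dev/nvidia* 2>&1 | extract_gpu_pids` first prints the PIDs without killing anything, which is a reasonable check before calling `kill_gpu_pids`, since the loop also terminates GPU processes that are still doing useful work.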

If nothing else goes wrong, everything is back to normal: