实例1:中国大学排名爬虫

链接:

http://www.zuihaodaxue.cn/zuihaodaxuepaiming2016.html

功能描述:

输入:大学排名url链接

输出:大学排名信息的屏幕输出

技术路线:requests-bs4

定向爬虫:仅对输入url进行爬取,不扩展爬取

验证可行性

程序的结构设计

步骤1:从网络上获取大学排名网页内容

步骤2:提取网页内容中信息到合适的数据结构

步骤3:利用数据结构展示并输出结果

代码实现

#CrawUnivRankingB.py

import requests

from bs4 import BeautifulSoup

import bs4

def getHTMLText(url):

try:

r = requests.get(url, timeout=30)

r.raise_for_status()

r.encoding = r.apparent_encoding

return r.text

except:

return ""

def fillUnivList(ulist, html):

soup = BeautifulSoup(html, "html.parser")

for tr in soup.find('tbody').children:

if isinstance(tr, bs4.element.Tag):

tds = tr('td')

ulist.append([tds[0].string, tds[1].string, tds[3].string])

def printUnivList(ulist, num):

tplt = "{0:^10}\t{1:{3}^10}\t{2:^10}"

print(tplt.format("排名","学校名称","总分",chr(12288)))

for i in range(num):

u=ulist[i]

print(tplt.format(u[0],u[1],u[2],chr(12288)))

def main():

uinfo = []

url = 'http://www.zuihaodaxue.cn/zuihaodaxuepaiming2016.html'

html = getHTMLText(url)

fillUnivList(uinfo, html)

printUnivList(uinfo, 20) # 20 univs

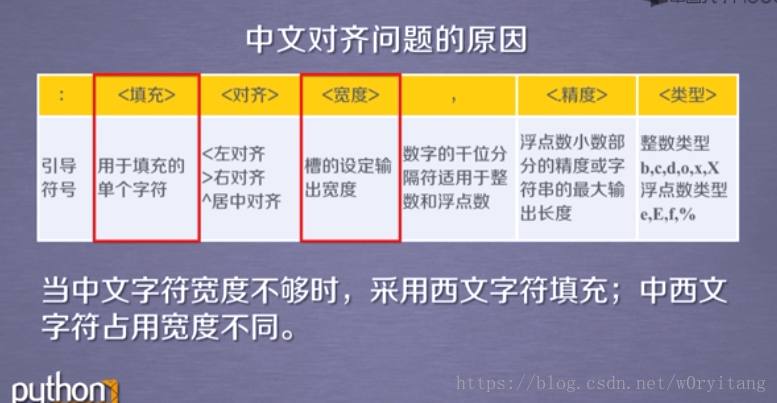

main()中文对齐问题:

解决:

采用中文空格填充 chr(12288)

format格式化介绍:

http://www.runoob.com/python/att-string-format.html

实例2:淘宝商品比价定向爬虫

目标:获取淘宝搜索页面的信息,提取其中的商品名称与价格

理解:淘宝的搜索接口翻页的处理

技术路线: requests-re

链接分析:

可行性分析:

- robots.txt

- 不要不加限制地访问页面

程序结构设计

代码

#CrowTaobaoPrice.py

import requests

import re

def getHTMLText(url):

try:

r = requests.get(url, timeout=30)

r.raise_for_status()

r.encoding = r.apparent_encoding

return r.text

except:

return ""

def parsePage(ilt, html):

try:

plt = re.findall(r'\"view_price\"\:\"[\d\.]*\"',html)

tlt = re.findall(r'\"raw_title\"\:\".*?\"',html)

for i in range(len(plt)):

price = eval(plt[i].split(':')[1])

title = eval(tlt[i].split(':')[1])

ilt.append([price , title])

except:

print("")

def printGoodsList(ilt):

tplt = "{:4}\t{:8}\t{:16}"

print(tplt.format("序号", "价格", "商品名称"))

count = 0

for g in ilt:

count = count + 1

print(tplt.format(count, g[0], g[1]))

def main():

goods = '书包'

depth = 3

start_url = 'http://s.taobao.com/search?q=' + goods

infoList = []

for i in range(depth):

try:

url = start_url + '&s=' + str(44*i)

html = getHTMLText(url)

parsePage(infoList, html)

except:

continue

printGoodsList(infoList)

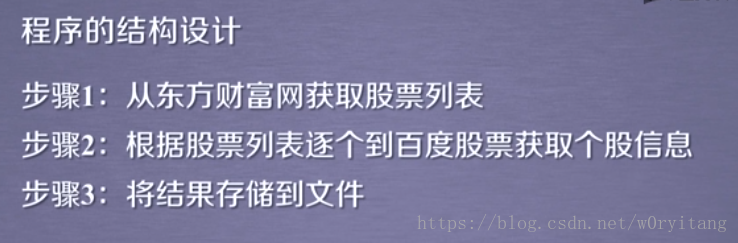

main()实例3:股票数据定向爬虫

功能描述:获取上交所和深交所所有股票的名称和交易信息

输出:保存到文件

技术路线:requests-bs4-re

选取原则:股票信息静态存在于html页面中,非js代码生成,没有robots协议限制

程序设计

代码

#CrawBaiduStocksB.py

import requests

from bs4 import BeautifulSoup

import traceback

import re

def getHTMLText(url, code="utf-8"):

try:

r = requests.get(url)

r.raise_for_status()

r.encoding = code

return r.text

except:

return ""

def getStockList(lst, stockURL):

html = getHTMLText(stockURL, "GB2312")

soup = BeautifulSoup(html, 'html.parser')

a = soup.find_all('a')

for i in a:

try:

href = i.attrs['href']

lst.append(re.findall(r"[s][hz]\d{6}", href)[0])

except:

continue

def getStockInfo(lst, stockURL, fpath):

count = 0

for stock in lst:

url = stockURL + stock + ".html"

html = getHTMLText(url)

try:

if html=="":

continue

infoDict = {}

soup = BeautifulSoup(html, 'html.parser')

stockInfo = soup.find('div',attrs={'class':'stock-bets'})

name = stockInfo.find_all(attrs={'class':'bets-name'})[0]

infoDict.update({'股票名称': name.text.split()[0]})

keyList = stockInfo.find_all('dt')

valueList = stockInfo.find_all('dd')

for i in range(len(keyList)):

key = keyList[i].text

val = valueList[i].text

infoDict[key] = val

with open(fpath, 'a', encoding='utf-8') as f:

f.write( str(infoDict) + '\n' )

count = count + 1

print("\r当前进度: {:.2f}%".format(count*100/len(lst)),end="")

except:

count = count + 1

print("\r当前进度: {:.2f}%".format(count*100/len(lst)),end="")

continue

def main():

stock_list_url = 'http://quote.eastmoney.com/stocklist.html'

stock_info_url = 'http://gupiao.baidu.com/stock/'

output_file = 'C:/Users/horyit/Desktop/杂项'

slist=[]

getStockList(slist, stock_list_url)

getStockInfo(slist, stock_info_url, output_file)

main()