AI Practical Training Camp & MMPretrain Hands-On Coding

1. Installing MMPretrain

I assume you already have mmcv and mim installed. Installing through mim is more convenient and avoids odd errors.

- Install openmim, which is used for the build step below.

pip3 install openmim

- Clone the source directly from the official repository.

git clone https://github.com/open-mmlab/mmpretrain.git

- Step two: build the cloned code. First cd into the mmpretrain directory. Note: **there is a . after -e**, which stands for the current directory.

cd mmpretrain

mim install -e .

If you need multimodal support, run the following command instead:

mim install -e ".[multimodal]"

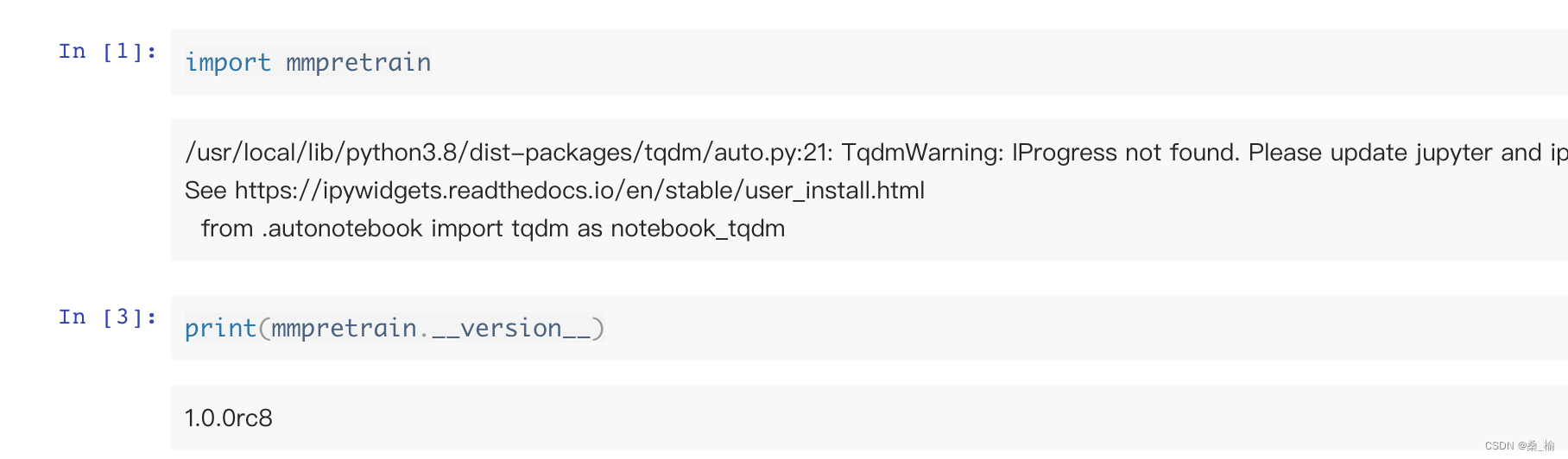

The last step is to verify the installation. If you see the output shown in the screenshot above, the installation succeeded.
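If you want to double-check from Python as well, you can look up the installed package versions. This is a minimal sketch using only the standard library. The helper name `installed_versions` is my own, and the distribution names in the list are assumptions about what pip recorded on your machine.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package: version string, or None if it is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


# Distribution names are assumptions; adjust them to your environment.
for name, version in installed_versions(
        ["openmim", "mmengine", "mmcv", "mmpretrain"]).items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
```

Any line that prints NOT INSTALLED points at the step that needs to be repeated.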

2. Exploring MMPretrain

First, import the following functions from mmpretrain.

from mmpretrain import get_model, list_models, inference_model

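Next, list_models shows which models are available. Taking resnet18 as an example:

list_models(task='Image Classification', pattern='resnet18')

# The task argument restricts the search to image classification models.

Output:

['resnet18_8xb16_cifar10', 'resnet18_8xb32_in1k']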
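The `pattern` argument works like a wildcard filter over model names. The sketch below imitates that with the standard `fnmatch` module, so you can see why `'resnet18'` returns both entries. It is only an illustration: the name list is a hand-picked sample (the two names from the output above plus `resnet50_8xb32_in1k`, which is used later), and appending `*` to the pattern is my assumption about how the matching works, not a copy of the library code.

```python
import fnmatch

# A small hand-picked sample of model names, not the full model zoo.
SAMPLE_NAMES = [
    "resnet18_8xb16_cifar10",
    "resnet18_8xb32_in1k",
    "resnet50_8xb32_in1k",
]


def filter_names(names, pattern):
    """Keep names that start with `pattern` (shell-style wildcards allowed)."""
    # Assumption: a trailing '*' lets the pattern match any suffix.
    return sorted(fnmatch.filter(names, pattern + "*"))


print(filter_names(SAMPLE_NAMES, "resnet18"))
print(filter_names(SAMPLE_NAMES, "resnet*in1k"))
```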

Then we try get_model to build a model that we will use for inference.

model = get_model('resnet18_8xb16_cifar10')

type(model)

Output:

mmpretrain.models.classifiers.image.ImageClassifier

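inference_model runs inference with the model, but the model has to be loaded with pretrained weights first, otherwise the predictions are not accurate.

inference_model(model, './mmpretrain/demo/bird.JPEG', show=True)

As you can see, without pretrained weights the prediction is way off.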

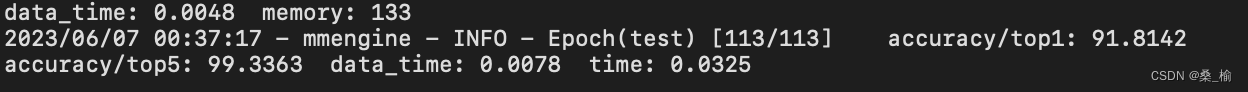
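The fix is to let `get_model` load the released weights. As far as I know, `get_model` accepts a `pretrained` argument: `True` fetches the checkpoint that belongs to the model name, and a string is treated as a checkpoint path. The sketch below wraps this in a helper. The function name `classify_image` is my own, and the import sits inside the function only so that the file can be loaded on a machine without mmpretrain.

```python
def classify_image(image_path, model_name="resnet18_8xb16_cifar10"):
    """Run inference with pretrained weights instead of random ones."""
    # Imported lazily so this file can be loaded without mmpretrain installed.
    from mmpretrain import get_model, inference_model

    # pretrained=True asks mmpretrain to fetch the released checkpoint.
    model = get_model(model_name, pretrained=True)
    return inference_model(model, image_path)
```

Calling `classify_image('./mmpretrain/demo/bird.JPEG')` should return a dictionary with the predicted class and its score, and this time the result should look sensible.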

3. Assignment

The assignment is a classification task with ResNet50. We start from the file resnet50_8xb32_in1k.py, found under configs/resnet in the mmpretrain folder.

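Based on the file above, we write our own configuration. Below is the configuration I wrote.

- resnet50.py defines the model, including the backbone. Note that the number of classes must be changed to 30, because this is a 30-class task, and be sure to pick the right pretrained checkpoint.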

# model settings
model = dict(
    type='ImageClassifier',
    backbone=dict(
        type='ResNet',
        depth=50,
        num_stages=4,
        out_indices=(3, ),
        style='pytorch'),
    neck=dict(type='GlobalAveragePooling'),
    head=dict(
        type='LinearClsHead',
        num_classes=30,
        in_channels=2048,
        loss=dict(type='CrossEntropyLoss', loss_weight=1.0),
        topk=(1, 5),
    ),
    # Load a pretrained model here to make training easier; the checkpoint can be downloaded directly from the official site.
    init_cfg=dict(
        type='Pretrained',
        checkpoint='https://download.openmmlab.com/mmclassification/v0/resnet/resnet50_8xb32_in1k_20210831-ea4938fc.pth'),
)
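The checkpoint above was trained for the 1000 ImageNet classes, so its 1000-way head does not match our 30-class head. A common alternative is to load only the backbone weights by moving `init_cfg` into the backbone and adding `prefix='backbone'`. This is a sketch of that variant, not the config I trained with, and I have not measured whether it changes accuracy on this dataset.

```python
# Variant of the model settings: initialize only the backbone from the checkpoint.
model = dict(
    type='ImageClassifier',
    backbone=dict(
        type='ResNet',
        depth=50,
        num_stages=4,
        out_indices=(3, ),
        style='pytorch',
        init_cfg=dict(
            type='Pretrained',
            checkpoint='https://download.openmmlab.com/mmclassification/v0/resnet/resnet50_8xb32_in1k_20210831-ea4938fc.pth',
            # Only load parameters whose names start with "backbone".
            prefix='backbone')),
    neck=dict(type='GlobalAveragePooling'),
    head=dict(
        type='LinearClsHead',
        num_classes=30,  # the head is trained from scratch for 30 classes
        in_channels=2048,
        loss=dict(type='CrossEntropyLoss', loss_weight=1.0),
        topk=(1, 5)),
)
```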

- imagenet_bs32.py defines the image processing pipeline. Note: the dataset type must be changed to 'CustomDataset', because our dataset is organized as train/class/image.

# dataset settings
dataset_type = 'CustomDataset'
data_preprocessor = dict(
    num_classes=30,
    # RGB format normalization parameters
    mean=[123.675, 116.28, 103.53],
    std=[58.395, 57.12, 57.375],
    # convert image from BGR to RGB
    to_rgb=True,
)

train_pipeline = [
    dict(type='LoadImageFromFile'),
    dict(type='RandomResizedCrop', scale=224),
    dict(type='RandomFlip', prob=0.5, direction='horizontal'),
    dict(type='PackInputs'),
]

test_pipeline = [
    dict(type='LoadImageFromFile'),
    dict(type='ResizeEdge', scale=256, edge='short'),
    dict(type='CenterCrop', crop_size=224),
    dict(type='PackInputs'),
]

train_dataloader = dict(
    batch_size=32,
    num_workers=4,
    dataset=dict(
        type=dataset_type,
        data_root='/gemini/code/mmpretrain/data/train',
        pipeline=train_pipeline),
    sampler=dict(type='DefaultSampler', shuffle=True),
)

val_dataloader = dict(
    batch_size=32,
    num_workers=2,
    dataset=dict(
        type=dataset_type,
        data_root='/gemini/code/mmpretrain/data/val',
        pipeline=test_pipeline),
    sampler=dict(type='DefaultSampler', shuffle=False),
)
val_evaluator = dict(type='Accuracy', topk=(1, 5))

# If you want standard test, please manually configure the test dataset
test_dataloader = val_dataloader
test_evaluator = val_evaluator
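Since `num_classes` has to agree with the folder layout, it helps to count the class folders before training. The following is a small standard-library sketch. `count_classes` and the list of image extensions are my own choices, and the path is the `data_root` from the config above.

```python
import os

IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".bmp")  # assumed set of image types


def count_classes(data_root):
    """Map each class folder under data_root to its number of image files."""
    counts = {}
    for class_name in sorted(os.listdir(data_root)):
        class_dir = os.path.join(data_root, class_name)
        if not os.path.isdir(class_dir):
            continue  # skip stray files such as classes.txt
        counts[class_name] = sum(
            1 for file_name in os.listdir(class_dir)
            if file_name.lower().endswith(IMAGE_EXTENSIONS))
    return counts


if __name__ == "__main__":
    root = "/gemini/code/mmpretrain/data/train"
    if os.path.isdir(root):
        counts = count_classes(root)
        print(f"{len(counts)} classes, {sum(counts.values())} images")
```

If the first number is not 30, fix the dataset or `num_classes` before starting a run.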

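- imagenet_bs256.py holds the training settings. auto_scale_lr = dict(base_batch_size=256) is intended for multi-GPU training; we do not need it here, so it can be commented out, and the learning rate is lowered a little.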

# optimizer
optim_wrapper = dict(
    optimizer=dict(type='SGD', lr=0.01, momentum=0.9, weight_decay=0.0001))

# learning policy
param_scheduler = dict(
    type='MultiStepLR', by_epoch=True, milestones=[30, 60, 90], gamma=0.1)

# train, val, test setting
train_cfg = dict(by_epoch=True, max_epochs=50, val_interval=1)
val_cfg = dict()
test_cfg = dict()

# NOTE: `auto_scale_lr` is for automatically scaling LR,
# based on the actual training batch size.
# auto_scale_lr = dict(base_batch_size=256)
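Two details of this schedule are easy to check by hand. First, the linear scaling rule behind `auto_scale_lr` multiplies the learning rate by `batch_size / base_batch_size`. Second, `MultiStepLR` multiplies the rate by `gamma` at each milestone, and with `max_epochs=50` only the milestone at epoch 30 is ever reached. The sketch below works both out in plain Python. The function names are my own, and the starting value `0.1` is what I believe the upstream schedule uses, so treat it as an assumption.

```python
def scaled_lr(base_lr, batch_size, base_batch_size=256):
    """Linear scaling rule: the LR grows in proportion to the total batch size."""
    return base_lr * batch_size / base_batch_size


def multistep_lr(base_lr, epoch, milestones=(30, 60, 90), gamma=0.1):
    """LR in a given 0-based epoch for a schedule that decays at each milestone."""
    passed = sum(1 for m in milestones if epoch >= m)
    return base_lr * gamma ** passed


# One GPU with batch_size=32, starting from an assumed upstream LR of 0.1.
print(scaled_lr(0.1, batch_size=32))  # 0.0125, close to the 0.01 used above

# LR at a few epochs of the 50-epoch run configured above.
for epoch in (0, 29, 30, 49):
    print(epoch, multistep_lr(0.01, epoch))
```

If you want the later decay steps to take effect in a 50-epoch run, the milestones would have to be moved below 50.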

- default_runtime.py holds the runtime settings, such as logging during training.

# defaults to use registries in mmpretrain
default_scope = 'mmpretrain'

# configure default hooks
default_hooks = dict(
    # record the time of every iteration.
    timer=dict(type='IterTimerHook'),
    # print log every 100 iterations.
    logger=dict(type='LoggerHook', interval=100),
    # enable the parameter scheduler.
    param_scheduler=dict(type='ParamSchedulerHook'),
    # save checkpoint per epoch.
    checkpoint=dict(type='CheckpointHook', interval=1, max_keep_ckpts=10, save_best='auto'),
    # set sampler seed in distributed environment.
    sampler_seed=dict(type='DistSamplerSeedHook'),
    # validation results visualization, set True to enable it.
    visualization=dict(type='VisualizationHook', enable=False),
)

# configure environment
env_cfg = dict(
    # whether to enable cudnn benchmark
    cudnn_benchmark=False,
    # set multi process parameters
    mp_cfg=dict(mp_start_method='fork', opencv_num_threads=0),
    # set distributed parameters
    dist_cfg=dict(backend='nccl'),
)

# set visualizer
vis_backends = [dict(type='LocalVisBackend')]
visualizer = dict(type='UniversalVisualizer', vis_backends=vis_backends)

# set log level
log_level = 'INFO'

# load from which checkpoint
load_from = None

# whether to resume training from the loaded checkpoint
resume = False

# Defaults to use random seed and disable `deterministic`
randomness = dict(seed=None, deterministic=False)
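With `interval=1` and `max_keep_ckpts=10`, a 50-epoch run does not leave 50 checkpoint files behind. As I understand the hook, only the most recent ones are kept, and the best checkpoint chosen through `save_best` is stored separately. Here is a toy simulation of that retention rule. It is my own simplification rather than the hook's code, and the `epoch_N.pth` naming is an assumption.

```python
def kept_checkpoints(max_epochs, interval=1, max_keep_ckpts=10):
    """Return the epoch checkpoints still on disk after training ends."""
    kept = []
    for epoch in range(1, max_epochs + 1):
        if epoch % interval != 0:
            continue  # no checkpoint is written in this epoch
        kept.append(f"epoch_{epoch}.pth")
        if len(kept) > max_keep_ckpts:
            kept.pop(0)  # drop the oldest checkpoint
    return kept


print(kept_checkpoints(50))
```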

That is my full configuration.

Then simply run:

mim train mmpretrain resnet50.py --work-dir=./work

Appendix: code that converts the dataset into the CustomDataset layout

# Usage: pass the source folder and the target folder as the two command-line arguments.
import os
import shutil
import sys

import numpy as np


def load_data(data_path):
    """Collect image paths per class from a folder laid out as data_path/class/image."""
    count = 0
    data = {}
    for dir_name in os.listdir(data_path):
        dir_path = os.path.join(data_path, dir_name)
        if not os.path.isdir(dir_path):
            continue
        data[dir_name] = []
        for file_name in os.listdir(dir_path):
            file_path = os.path.join(dir_path, file_name)
            if not os.path.isfile(file_path):
                continue
            data[dir_name].append(file_path)
        count += len(data[dir_name])
        print('{}:{}'.format(dir_name, len(data[dir_name])))
    print('total of image:{}'.format(count))
    return data


def copy_dataset(src_img_list, data_index, target_path):
    """Copy the images selected by data_index into target_path and return the new paths."""
    target_img_list = []
    for index in data_index:
        src_img = src_img_list[index]
        img_name = os.path.split(src_img)[-1]
        shutil.copy(src_img, target_path)
        target_img_list.append(os.path.join(target_path, img_name))
    return target_img_list


def write_file(data, file_name):
    """Write a list of lines, or a {label: [image, ...]} dict as "image label" lines."""
    if isinstance(data, dict):
        write_data = []
        for lab, img_list in data.items():
            for img in img_list:
                write_data.append("{} {}".format(img, lab))
    else:
        write_data = data
    with open(file_name, "w") as f:
        for line in write_data:
            f.write(line + "\n")
    print("{} write over!".format(file_name))


def split_data(src_data_path, target_data_path, train_rate=0.8):
    """Randomly split every class into train/ and val/ subfolders under target_data_path."""
    src_data_dict = load_data(src_data_path)
    classes = []
    train_dataset, val_dataset = {}, {}
    train_count, val_count = 0, 0
    for i, (cls_name, img_list) in enumerate(src_data_dict.items()):
        img_data_size = len(img_list)
        # Shuffle the indices, then cut them at the train/val boundary.
        random_index = np.random.choice(img_data_size, img_data_size, replace=False)
        train_data_size = int(img_data_size * train_rate)
        train_data_index = random_index[:train_data_size]
        val_data_index = random_index[train_data_size:]
        train_data_path = os.path.join(target_data_path, "train", cls_name)
        val_data_path = os.path.join(target_data_path, "val", cls_name)
        os.makedirs(train_data_path, exist_ok=True)
        os.makedirs(val_data_path, exist_ok=True)
        classes.append(cls_name)
        train_dataset[i] = copy_dataset(img_list, train_data_index, train_data_path)
        val_dataset[i] = copy_dataset(img_list, val_data_index, val_data_path)
        print("target {} train:{}, val:{}".format(cls_name, len(train_dataset[i]), len(val_dataset[i])))
        train_count += len(train_dataset[i])
        val_count += len(val_dataset[i])
    print("train size:{}, val size:{}, total:{}".format(train_count, val_count, train_count + val_count))
    write_file(classes, os.path.join(target_data_path, "classes.txt"))
    write_file(train_dataset, os.path.join(target_data_path, "train.txt"))
    write_file(val_dataset, os.path.join(target_data_path, "val.txt"))


def main():
    src_data_path = sys.argv[1]
    target_data_path = sys.argv[2]
    split_data(src_data_path, target_data_path, train_rate=0.9)


# The original defined main() but never called it.
if __name__ == "__main__":
    main()