I. Installing the JDK and ZooKeeper (omitted here)

II. Installing and running Kafka

Download:

http://kafka.apache.org/downloads.html

After downloading, extract the archive to any directory. Mine is D:\Java\Tool\kafka_2.11-0.10.0.1.

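1. Go to the Kafka configuration directory, D:\Java\Tool\kafka_2.11-0.10.0.1\config.

2. Open the file "server.properties" for editing.

3. Find and edit log.dirs=D:/Java/Tool/kafka_2.11-0.10.0.1/kafka-log, changing the directory to one you prefer. Use forward slashes (or doubled backslashes): server.properties is read as a Java properties file, in which a single backslash is an escape character.

4. Find and edit zookeeper.connect=localhost:2181, which tells Kafka to use a ZooKeeper instance running on the local machine.

5. By default, Kafka listens on port 9092 and connects to ZooKeeper on its default port, 2181.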
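Why forward slashes in log.dirs? As far as I know, Kafka loads server.properties with `java.util.Properties`, which drops a backslash in front of any character that is not a recognized escape. The JDK-only sketch below (the class name `PropsPathDemo` is hypothetical) parses three variants of the log.dirs line and prints what Java actually sees.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

public class PropsPathDemo {

    /** Parses one properties-file line and returns the value of log.dirs. */
    static String parseLogDirs(String line) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(line));
        } catch (IOException e) {
            // StringReader does no real I/O, so this is not expected to happen
            throw new UncheckedIOException(e);
        }
        return props.getProperty("log.dirs");
    }

    public static void main(String[] args) {
        // File text: log.dirs=D:\Java\Tool\... ; \J, \T and \k lose their backslash
        System.out.println(parseLogDirs("log.dirs=D:\\Java\\Tool\\kafka_2.11-0.10.0.1\\kafka-log"));
        // prints D:JavaToolkafka_2.11-0.10.0.1kafka-log

        // File text: forward slashes are kept unchanged
        System.out.println(parseLogDirs("log.dirs=D:/Java/Tool/kafka_2.11-0.10.0.1/kafka-log"));
        // prints D:/Java/Tool/kafka_2.11-0.10.0.1/kafka-log

        // File text: doubled backslashes collapse to single ones
        System.out.println(parseLogDirs("log.dirs=D:\\\\Java\\\\Tool\\\\kafka_2.11-0.10.0.1\\\\kafka-log"));
        // prints D:\Java\Tool\kafka_2.11-0.10.0.1\kafka-log
    }
}
```

The first result is a drive-relative path, so Kafka would likely write its logs somewhere unexpected rather than fail loudly.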

Running Kafka:

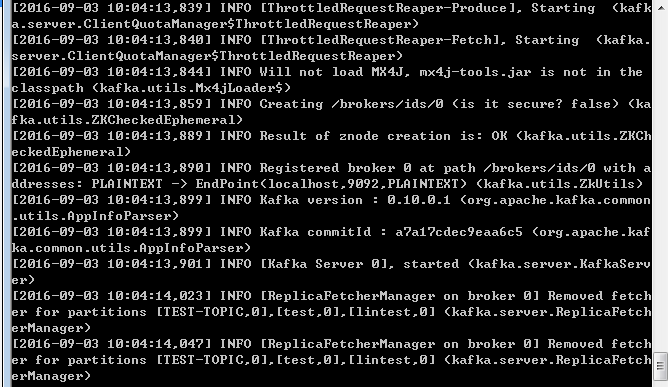

Important: make sure your ZooKeeper instance is up and running before you start the Kafka server.
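One way to check this from Java is to try a TCP connection to ZooKeeper's port before launching Kafka. This is a minimal JDK-only sketch (`PortCheck` and `isListening` are hypothetical names); an open port only shows that something is listening, not that it is actually ZooKeeper.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class PortCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs milliseconds. */
    static boolean isListening(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host could not be resolved
            return false;
        }
    }

    public static void main(String[] args) {
        // 2181 is ZooKeeper's default client port, 9092 is Kafka's default port
        System.out.println("ZooKeeper (2181) listening: " + isListening("localhost", 2181, 1000));
        System.out.println("Kafka (9092) listening: " + isListening("localhost", 9092, 1000));
    }
}
```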

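1. Go to the Kafka installation directory, D:\Java\Tool\kafka_2.11-0.10.0.1.

2. Hold Shift, right-click inside the folder, and choose "Open command window here" to open a command prompt.

3. Enter the following command and press Enter:

```bat
.\bin\windows\kafka-server-start.bat .\config\server.properties
```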

III. Testing

Keep the ZooKeeper and Kafka processes started above running.

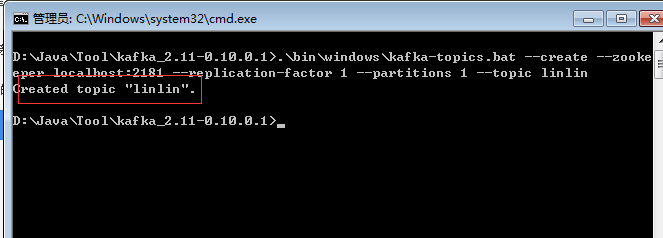

(1) Create a topic

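1. Go to the Kafka installation directory, D:\Java\Tool\kafka_2.11-0.10.0.1.

2. Hold Shift, right-click, and choose "Open command window here" to open a command prompt.

3. Enter:

```bat
.\bin\windows\kafka-topics.bat --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic linlin
```

This creates a topic named linlin with one partition and one replica. The command exits once the topic has been created.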

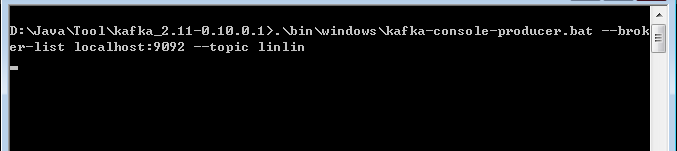
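Topic names such as linlin (and TEST-TOPIC, used by the Java demo later) have to follow Kafka's naming rules. As far as I know, recent Kafka versions accept only ASCII letters, digits, '.', '_' and '-', allow at most 249 characters, and reject the names "." and "..". The sketch below re-implements that check for illustration only (`TopicNames` and `isValid` are hypothetical; the broker's own validation is authoritative).

```java
import java.util.regex.Pattern;

public class TopicNames {

    // Allowed characters and maximum length, assumed from Kafka's topic naming rules
    private static final Pattern LEGAL = Pattern.compile("[a-zA-Z0-9._-]+");
    private static final int MAX_LENGTH = 249;

    /** Returns true if the name passes the (assumed) Kafka topic naming rules. */
    static boolean isValid(String topic) {
        if (topic == null || topic.isEmpty() || topic.length() > MAX_LENGTH) {
            return false;
        }
        if (topic.equals(".") || topic.equals("..")) {
            return false;
        }
        return LEGAL.matcher(topic).matches();
    }

    public static void main(String[] args) {
        for (String t : new String[] {"linlin", "TEST-TOPIC", "my topic", ".."}) {
            System.out.println(t + " -> " + isValid(t));
        }
    }
}
```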

(2) Create a producer

1.进入Kafka安装目录D:\Java\Tool\kafka_2.11-0.10.0.1

2.按下Shift+右键,选择“打开命令窗口”选项,打开命令行。

3.现在输入

.\binwindows\kafka-console-producer.bat --broker-list localhost:9092 --topic linlin(3)创建消费者

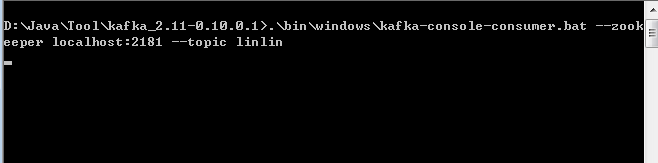

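1. Go to the Kafka installation directory, D:\Java\Tool\kafka_2.11-0.10.0.1.

2. Hold Shift, right-click, and choose "Open command window here" to open a command prompt.

3. Enter the following command, and do not close this window either:

```bat
.\bin\windows\kafka-console-consumer.bat --zookeeper localhost:2181 --topic linlin
```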

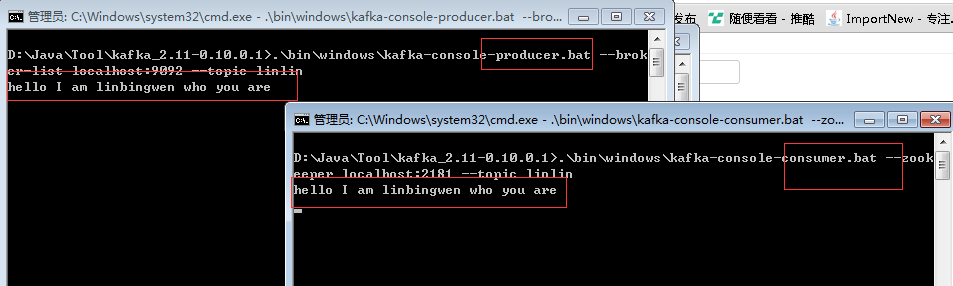

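Then type some text into the producer window (the second command window opened in this section) and press Enter at the end. Each line you send should appear in the consumer window.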

IV. A getting-started Kafka example

1. The overall project structure is as follows:

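2. The pom file (pom.xml). Note that the demo uses the old Scala client API from kafka_2.10 version 0.8.2.0, not the 0.10.0.1 release of the broker installed above.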

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
    xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.lin</groupId>
    <artifactId>Kafka-Demo</artifactId>
    <version>0.0.1-SNAPSHOT</version>
    <dependencies>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka_2.10</artifactId>
            <version>0.8.2.0</version>
        </dependency>
        <dependency>
            <groupId>com.101tec</groupId>
            <artifactId>zkclient</artifactId>
            <version>0.10</version>
        </dependency>
        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>1.2.4</version>
        </dependency>
    </dependencies>
</project>
```

3. Producer: KafkaProducer

```java
package com.lin.demo.producer;

import java.util.Properties;

import kafka.javaapi.producer.Producer;
import kafka.producer.KeyedMessage;
import kafka.producer.ProducerConfig;

public class KafkaProducer {

    private final Producer<String, String> producer;

    public final static String TOPIC = "TEST-TOPIC";

    private KafkaProducer() {
        Properties props = new Properties();
        // Address (host:port) of the Kafka broker
        props.put("metadata.broker.list", "localhost:9092");
        // Serializer class for the message value
        props.put("serializer.class", "kafka.serializer.StringEncoder");
        // Serializer class for the message key
        props.put("key.serializer.class", "kafka.serializer.StringEncoder");
        // -1: wait until all in-sync replicas have acknowledged each write
        props.put("request.required.acks", "-1");
        producer = new Producer<String, String>(new ProducerConfig(props));
    }

    void produce() {
        int messageNo = 1000;
        final int COUNT = 10000;
        // Sends 9000 messages with keys "1000" to "9999"
        while (messageNo < COUNT) {
            String key = String.valueOf(messageNo);
            String data = "hello kafka message " + key;
            producer.send(new KeyedMessage<String, String>(TOPIC, key, data));
            System.out.println(data);
            messageNo++;
        }
        // Release the producer's network resources once everything has been sent
        producer.close();
    }

    public static void main(String[] args) {
        new KafkaProducer().produce();
    }
}
```
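The producer sends keyed messages, and the key decides which partition a message lands in. As I understand it, the old Scala producer's default partitioner takes the key's hashCode, makes it non-negative, and reduces it modulo the number of partitions. The sketch below imitates that idea in plain Java (`KeyPartitioning` and `partitionFor` are hypothetical names, and masking the sign bit is an assumption; the real partitioner may treat negative hashes differently). With a one-partition topic, every key maps to partition 0.

```java
public class KeyPartitioning {

    /** Hash-mod partitioning: non-negative hashCode of the key modulo the partition count. */
    static int partitionFor(Object key, int numPartitions) {
        if (numPartitions <= 0) {
            throw new IllegalArgumentException("numPartitions must be positive");
        }
        // Clear the sign bit so the result is never negative (assumed detail)
        return (key.hashCode() & 0x7fffffff) % numPartitions;
    }

    public static void main(String[] args) {
        for (int no = 1000; no < 1005; no++) {
            String key = String.valueOf(no);
            System.out.println(key + " -> 1 partition: " + partitionFor(key, 1)
                    + ", 3 partitions: " + partitionFor(key, 3));
        }
    }
}
```

Because the mapping depends only on the key, all messages with the same key go to the same partition and therefore keep their relative order.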

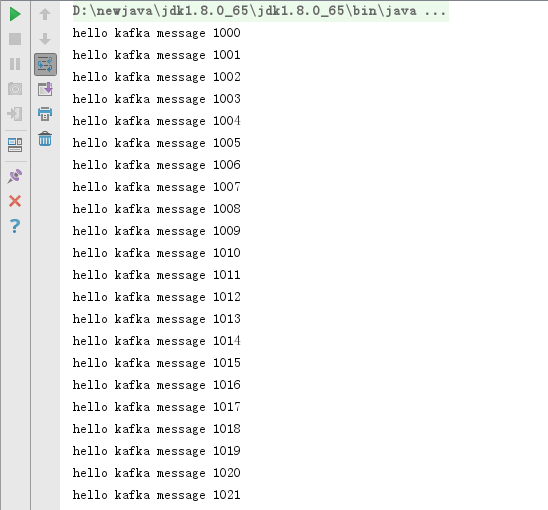

运行,结果:
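The producer configures `kafka.serializer.StringEncoder`, and the consumer below decodes with `kafka.serializer.StringDecoder`. As far as I know, both convert between String and bytes using the `serializer.encoding` setting, which defaults to UTF-8. This JDK-only sketch (`StringCodecDemo` is a hypothetical name) shows the equivalent round trip, which matters once messages contain non-ASCII text such as Chinese.

```java
import java.nio.charset.StandardCharsets;

public class StringCodecDemo {

    /** Equivalent of encoding a message as UTF-8 bytes on the producer side. */
    static byte[] encode(String value) {
        return value.getBytes(StandardCharsets.UTF_8);
    }

    /** Equivalent of decoding UTF-8 bytes back into a String on the consumer side. */
    static String decode(byte[] bytes) {
        return new String(bytes, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // "\u4f60\u597d" is the Chinese greeting "ni hao"; each character needs 3 bytes in UTF-8
        String original = "hello kafka message 1000 \u4f60\u597d";
        byte[] bytes = encode(original);
        System.out.println(bytes.length + " bytes, round trip ok: " + decode(bytes).equals(original));
    }
}
```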

4. Consumer: KafkaConsumer

```java
package com.lin.demo.consumer;

import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Properties;

import com.lin.demo.producer.KafkaProducer;

import kafka.consumer.ConsumerConfig;
import kafka.consumer.ConsumerIterator;
import kafka.consumer.KafkaStream;
import kafka.javaapi.consumer.ConsumerConnector;
import kafka.serializer.StringDecoder;
import kafka.utils.VerifiableProperties;

/**
 * Created by yz.shi on 2018/4/11.
 */
public class KafkaConsumer {

    private final ConsumerConnector consumer;

    private KafkaConsumer() {
        Properties props = new Properties();
        // ZooKeeper connection string
        props.put("zookeeper.connect", "localhost:2181");
        // group.id identifies the consumer group this consumer belongs to
        props.put("group.id", "jwd-group");
        // ZooKeeper session timeout
        props.put("zookeeper.session.timeout.ms", "4000");
        props.put("zookeeper.sync.time.ms", "200");
        props.put("rebalance.max.retries", "5");
        props.put("rebalance.backoff.ms", "1200");
        props.put("auto.commit.interval.ms", "1000");
        // Without a committed offset for this group, start from the earliest available message
        props.put("auto.offset.reset", "smallest");
        // (serializer.class is a producer setting and is not needed here;
        // deserialization is done by the decoders passed to createMessageStreams)
        ConsumerConfig config = new ConsumerConfig(props);
        consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);
    }

    void consume() {
        // Request one stream for the topic
        Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
        topicCountMap.put(KafkaProducer.TOPIC, 1);

        // Decode keys and values as strings
        StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());
        StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());

        Map<String, List<KafkaStream<String, String>>> consumerMap =
                consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);
        KafkaStream<String, String> stream = consumerMap.get(KafkaProducer.TOPIC).get(0);
        ConsumerIterator<String, String> it = stream.iterator();

        // hasNext() blocks until a message arrives, so this loop runs until the process is stopped
        while (it.hasNext()) {
            System.out.println("<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<" + it.next().message() + "<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<");
        }
    }

    public static void main(String[] args) {
        new KafkaConsumer().consume();
    }
}
```
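group.id places consumers into a consumer group, and a topic's partitions are divided among the group's consumer streams. With a one-partition topic, only one stream receives data no matter how many you start. As far as I know, the old high-level consumer divides partitions by range assignment by default; the sketch below reproduces that arithmetic for a single topic (`RangeAssignment` and `assign` are hypothetical names). Partitions are split into contiguous ranges, and the first streams get one extra partition when the division is uneven.

```java
import java.util.ArrayList;
import java.util.List;

public class RangeAssignment {

    /** Returns, for each consumer stream index, the partitions it would own. */
    static List<List<Integer>> assign(int numPartitions, int numStreams) {
        if (numStreams <= 0) {
            throw new IllegalArgumentException("numStreams must be positive");
        }
        List<List<Integer>> result = new ArrayList<>();
        int perStream = numPartitions / numStreams;
        int extra = numPartitions % numStreams;
        for (int i = 0; i < numStreams; i++) {
            // The first `extra` streams each take one additional partition
            int start = perStream * i + Math.min(i, extra);
            int count = perStream + (i < extra ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int p = start; p < start + count; p++) {
                owned.add(p);
            }
            result.add(owned);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(assign(1, 2)); // prints [[0], []]: the second stream stays idle
        System.out.println(assign(5, 2)); // prints [[0, 1, 2], [3, 4]]
    }
}
```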

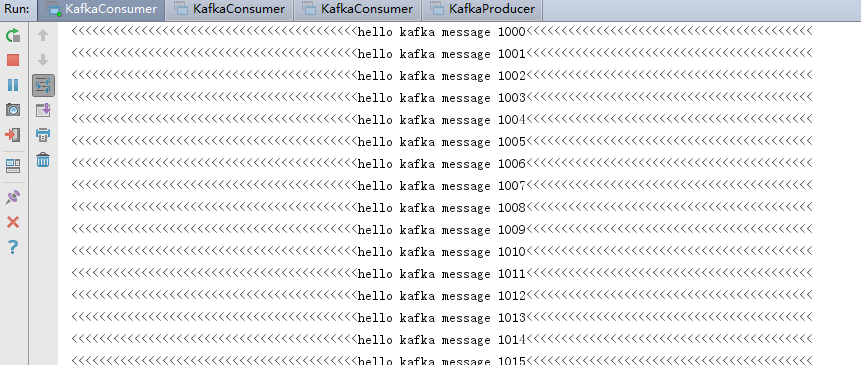

运行结果:
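The consumer above sets auto.offset.reset to smallest. That setting only matters when the group has no committed offset, or when the committed offset is no longer inside the log. The simplified sketch below shows that decision (`OffsetReset` and `startOffset` are hypothetical names, the offsets are illustrative, and the real consumer handles more cases than this). With smallest, a brand-new group such as jwd-group starts at the beginning of the log, so it also prints messages the producer sent before the consumer was started.

```java
import java.util.OptionalLong;

public class OffsetReset {

    /**
     * Chooses the starting offset from the group's committed offset (if any),
     * the earliest available offset logStart, the next offset to be written logEnd,
     * and the auto.offset.reset value.
     */
    static long startOffset(OptionalLong committed, long logStart, long logEnd, String autoOffsetReset) {
        if (committed.isPresent()) {
            long c = committed.getAsLong();
            // A committed offset that is still inside the log is used as-is
            if (c >= logStart && c <= logEnd) {
                return c;
            }
        }
        switch (autoOffsetReset) {
            case "smallest":
                return logStart; // earliest message still in the log
            case "largest":
                return logEnd; // only messages produced from now on
            default:
                throw new IllegalArgumentException("unsupported auto.offset.reset: " + autoOffsetReset);
        }
    }

    public static void main(String[] args) {
        System.out.println(startOffset(OptionalLong.empty(), 0, 9000, "smallest")); // prints 0
        System.out.println(startOffset(OptionalLong.empty(), 0, 9000, "largest"));  // prints 9000
        System.out.println(startOffset(OptionalLong.of(4200), 0, 9000, "smallest")); // prints 4200
    }
}
```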