Upgrading Python 2.4 to Python 2.7.10

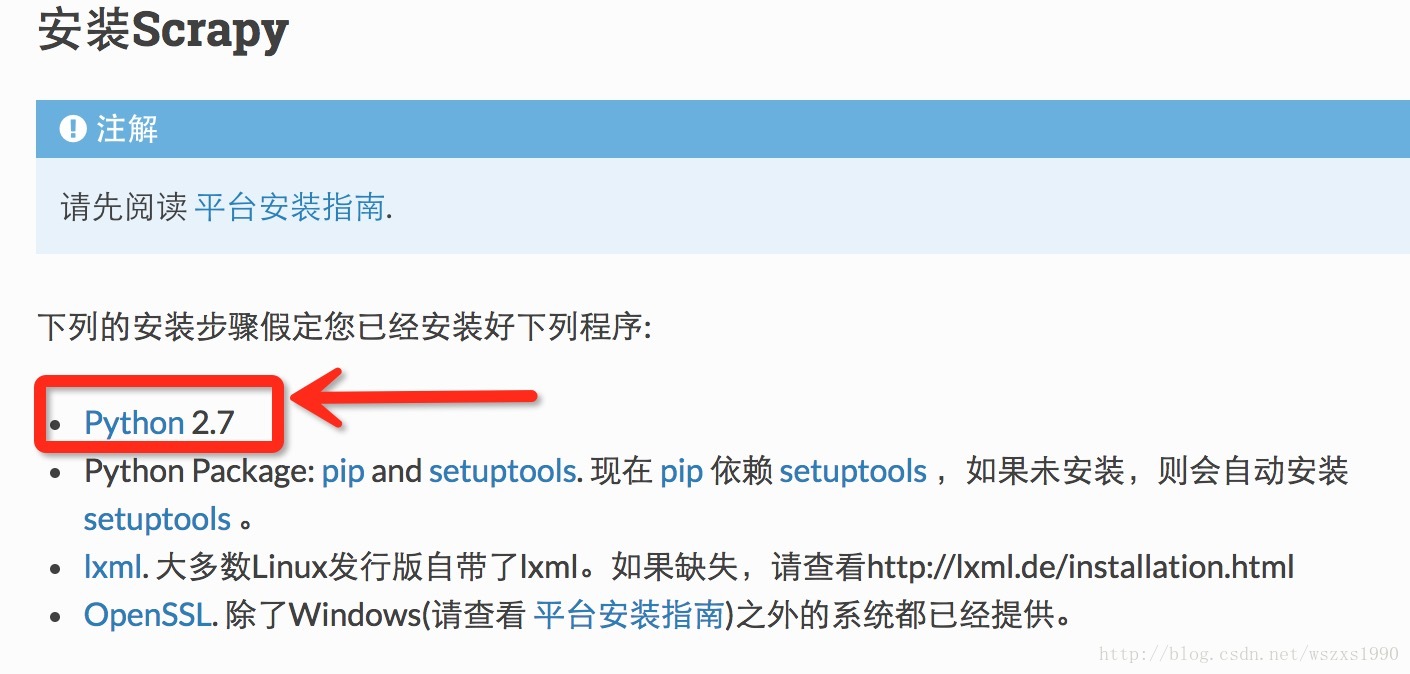

The official requirement is Python 2.7, but both my Mac and the remote server were running Python 2.4, so I had to upgrade first. The upgrade steps:

- yum install gcc gcc-c++.x86_64 compat-gcc-34-c++.x86_64 openssl-devel.x86_64 zlib*.x86_64

- wget http://www.python.org/ftp/python/2.7.10/Python-2.7.10.tar.bz2

- tar -xvjf Python-2.7.10.tar.bz2

- cd Python-2.7.10

- ./configure --prefix=/opt/python27

- make

- make install

- mv /usr/bin/python /usr/bin/python_backup

- ln -s /opt/python27/bin/python /usr/bin/

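Running python -V now reports Python 2.7.10. yum was written for the system's Python 2.4, so point it back at the old interpreter:

- vim /usr/bin/yum

- Change #!/usr/bin/python to #!/usr/bin/python2.4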
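After swapping the symlink it can be worth confirming which interpreter a script really runs under. A minimal sketch of such a check; the helper name meets_minimum is made up for illustration and is not part of any tool mentioned here:

```python
import sys


def meets_minimum(version_info, minimum=(2, 7)):
    """Return True if the (major, minor) pair is at least `minimum`."""
    return tuple(version_info[:2]) >= tuple(minimum)


if __name__ == "__main__":
    # Report the interpreter that is actually executing this script.
    print("Running under Python %d.%d.%d" % tuple(sys.version_info[:3]))
    if not meets_minimum(sys.version_info):
        sys.exit("Python 2.7 or newer is required")
```

Running it with plain python (rather than an explicit path) exercises the new /usr/bin/python link.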

Installing easy_install and pip

- curl -O http://pypi.python.org/packages/2.7/s/setuptools/setuptools-0.6c11-py2.7.egg

- chmod 775 setuptools-0.6c11-py2.7.egg

- sh setuptools-0.6c11-py2.7.egg

- curl -O http://pypi.python.org/packages/source/p/pip/pip-1.0.tar.gz

- tar xvfz pip-1.0.tar.gz

- cd pip-1.0

- python setup.py install
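To see whether setuptools and pip ended up in the new interpreter rather than the old one, the modules can simply be imported. A small sketch, assuming it is run with the freshly installed python; is_importable is a hypothetical helper name:

```python
def is_importable(module_name):
    """Return True if `module_name` can be imported by this interpreter."""
    try:
        __import__(module_name)
    except ImportError:
        return False
    return True


if __name__ == "__main__":
    # Both packages should be visible to the Python 2.7 install.
    for name in ("setuptools", "pip"):
        status = "ok" if is_importable(name) else "missing"
        print("%s: %s" % (name, status))
```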

Installing Scrapy

pip install Scrapy

Using Scrapy

The official tutorial example is used here for illustration.

- Create a project

scrapy startproject tutorial

- Define the Item

import scrapy

class DmozItem(scrapy.Item):
    # One Field per piece of data to scrape from each entry.
    title = scrapy.Field()
    link = scrapy.Field()
    desc = scrapy.Field()

- Write the first spider

import scrapy

from tutorial.items import DmozItem

class DmozSpider(scrapy.Spider):
    name = "dmoz"
    allowed_domains = ["dmoz.org"]
    start_urls = [
        "http://www.dmoz.org/Computers/Programming/Languages/Python/Books/",
        "http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/"
    ]

    def parse(self, response):
        # Each <li> inside a <ul> is treated as one entry.
        for sel in response.xpath('//ul/li'):
            item = DmozItem()
            item['title'] = sel.xpath('a/text()').extract()
            item['link'] = sel.xpath('a/@href').extract()
            item['desc'] = sel.xpath('text()').extract()
            yield item

- Save the scraped data

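scrapy crawl dmoz -o items.json

Installing scrapyd

pip install scrapyd

Deploying with scrapyd

- Install scrapyd-client

URL: https://github.com/scrapy/scrapyd-client. I recommend downloading the latest source from GitHub and installing it with python setup.py install, because the version available through pip may not be the latest.

- Use the upload tool scrapyd-deploy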

scrapyd-deploy <target> -p <project>

target is the name of your server (the deploy target), and project is the name of your project.
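The target name comes from a [deploy:<target>] section in the project's scrapy.cfg. A rough sketch for listing the targets a given file defines; the function name list_deploy_targets is made up for illustration, and treating a bare [deploy] section as "default" is my assumption:

```python
try:
    from configparser import ConfigParser
except ImportError:  # Python 2.7
    from ConfigParser import SafeConfigParser as ConfigParser


def list_deploy_targets(cfg_path):
    """Map each deploy target name in a scrapy.cfg to its url (or None)."""
    parser = ConfigParser()
    parser.read(cfg_path)
    targets = {}
    for section in parser.sections():
        if section == "deploy":
            name = "default"  # assumption: unnamed section acts as the default
        elif section.startswith("deploy:"):
            name = section[len("deploy:"):]
        else:
            continue  # not a deploy section, e.g. [settings]
        if parser.has_option(section, "url"):
            targets[name] = parser.get(section, "url")
        else:
            targets[name] = None
    return targets
```

Calling list_deploy_targets("scrapy.cfg") from the project root returns a dict whose keys are the names that can be passed as <target>.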

First, edit the scrapy.cfg file of the crawler project you want to deploy. Mine looks like this:

[deploy:scrapyd1]

url = http://localhost:6800/

project = baidu

- Start the spider on the remote server

curl http://localhost:6800/schedule.json -d project=PROJECT_NAME -d spider=SPIDER_NAME
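The same request can be sent from Python instead of curl. A minimal sketch, assuming scrapyd is listening on the URL you pass in; build_schedule_request and schedule_spider are hypothetical helper names, and the project and spider values are placeholders just like in the curl command:

```python
import json

try:
    from urllib.parse import urlencode
    from urllib.request import urlopen
except ImportError:  # Python 2.7
    from urllib import urlencode
    from urllib2 import urlopen


def build_schedule_request(base_url, project, spider):
    """Return the schedule.json URL and the form-encoded POST body."""
    url = base_url.rstrip("/") + "/schedule.json"
    body = urlencode([("project", project), ("spider", spider)])
    return url, body.encode("ascii")


def schedule_spider(base_url, project, spider):
    """POST to scrapyd and return its JSON reply as a dict."""
    url, body = build_schedule_request(base_url, project, spider)
    response = urlopen(url, body)  # passing a body makes this a POST
    try:
        return json.loads(response.read().decode("utf-8"))
    finally:
        response.close()
```

For example, schedule_spider("http://localhost:6800/", "PROJECT_NAME", "SPIDER_NAME") sends the same form fields as the curl call.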

Replace PROJECT_NAME with the name of your crawler project and SPIDER_NAME with the name of your spider.

Pitfalls encountered during deployment

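Error: command 'gcc' failed with exit status 1

Solution: look at the details of your own error output. As the screenshot shows, mine was mainly an openssl error. After several rounds of troubleshooting, upgrading openssl to the latest version fixed it. Uploading from my local machine to the remote server also fails with an openssl error, so I am upgrading openssl locally as well.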
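When chasing openssl problems it may help to know which OpenSSL the interpreter itself was built against. A small sketch using the standard ssl module; note that this reports only what Python is linked to, which can differ from the headers gcc picks up when compiling extensions, and openssl_version is a made-up helper name:

```python
def openssl_version():
    """Return the OpenSSL version string Python was built with, or None."""
    try:
        import ssl
    except ImportError:
        # Python was compiled without SSL support (openssl-devel missing).
        return None
    return getattr(ssl, "OPENSSL_VERSION", None)


if __name__ == "__main__":
    version = openssl_version()
    print(version if version else "ssl module not available")
```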