Copyright notice: This article is the blogger's original work and may not be reproduced without the blogger's permission. https://blog.csdn.net/q__y__L/article/details/72614661

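SVM is a classic machine learning algorithm, and there are plenty of blog posts introducing it, along with source code in many languages. Here I provide MATLAB implementations of a few versions of SVM. The main goal is to get familiar with using CVX to solve convex optimization problems.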

Basic SVM

I'll skip the derivation and go straight to the final formulation:

$$
\min_{w,b}\ \frac{1}{2}\|w\|_2^2
\quad \text{s.t.}\quad y_i(w^T x_i + b) \ge 1,\ i = 1,\dots,L
$$
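Two short facts link this formulation to the code below. The distance between the hyperplanes $w^Tx+b=1$ and $w^Tx+b=-1$ is $2/\|w\|_2$, so maximizing the margin means minimizing $\|w\|_2$. And because squaring is monotone on nonnegative numbers, minimizing $\|w\|_2$ and minimizing $\frac{1}{2}\|w\|_2^2$ under the same constraints give the same $(w, b)$, which is why the code can simply use `minimize(norm(w))`:

$$
\text{margin} = \frac{2}{\|w\|_2},
\qquad
\arg\min_{w,b}\ \|w\|_2 \;=\; \arg\min_{w,b}\ \tfrac{1}{2}\|w\|_2^2
\quad \text{s.t.}\quad y_i(w^T x_i + b) \ge 1,\ i = 1,\dots,L
$$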

然后是代码:`

function [ w,b ] = svm_prim_sep( data,labels )

%UNTITLED2 此处显示有关此函数的摘要

% Input:

% data: num-by-dim matrix .mun is the number of data points,

% dim is the the dimension of a point

% labels: num-by-1 vector, specifying the class that each point belongs

% to +1 or -1

% output:

% w: dim-by -1 vector ,the mormal dimension of hyperpalne

% b: a scalar, the bias

[num,dim]=size(data);

cvx_begin

variables w(dim) b;

minimize (norm(w));

subject to

labels.*(data*w+b)>=1;

cvx_end

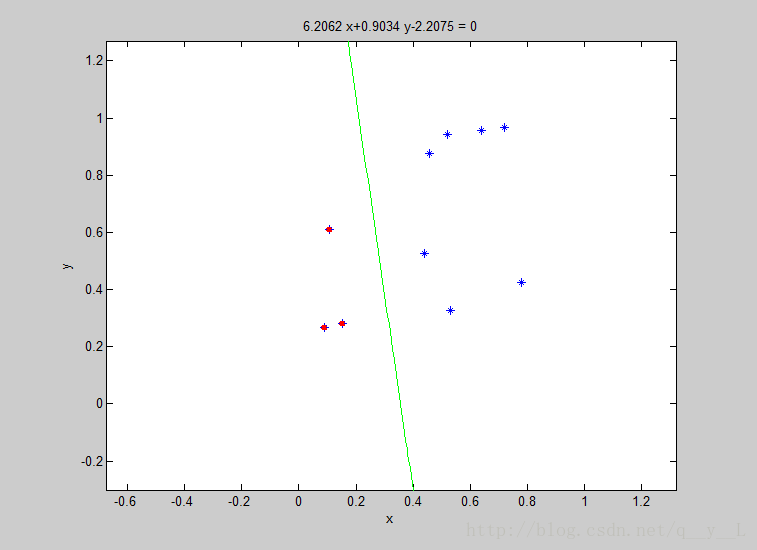

end然后随便随机生成了10个2维样本,运行结果如下:


Soft Margin SVM

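When the data are not linearly separable, no $(w, b)$ satisfies every constraint. The fix is to introduce slack variables $\xi_i$ and add a penalty function on them to the objective, so that the classification error is kept as small as possible. The formulation is as follows: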
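Reconstructed to match the objective and constraints in the code below (which penalizes the squared slacks $C\sum_i \xi_i^2$; many textbook presentations use the linear penalty $C\sum_i \xi_i$ instead), the problem reads:

$$
\min_{w,b,\xi}\ \frac{1}{2}\|w\|_2^2 + C\sum_{i=1}^{L}\xi_i^2
\quad \text{s.t.}\quad y_i(w^T x_i + b) \ge 1 - \xi_i,\ \ \xi_i \ge 0,\ \ i = 1,\dots,L
$$

Here $C > 0$ sets the trade-off between a wide margin and a small training error.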

The code is still very simple:

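```matlab
function [ w,b ] = svm_prim_nonsep( data,labels,C )
%SVM_PRIM_NONSEP Soft-margin linear SVM solved in the primal form with CVX.
% Renamed from svm_prim_sep so it can coexist with the hard-margin version;
% C is now an input because the original body used it without defining it.
% Input:
%   data:   num-by-dim matrix; num is the number of data points,
%           dim is the dimension of a point
%   labels: num-by-1 vector specifying the class (+1 or -1)
%           that each point belongs to
%   C:      positive scalar weighting the slack penalty
% Output:
%   w: dim-by-1 vector, the normal vector of the separating hyperplane
%   b: a scalar, the bias
[num,dim]=size(data);
cvx_begin
    % CVX declares multiple variables separated by spaces, not commas
    variables w(dim) b xi(num);
    % squared-slack penalty on the margin violations
    minimize (sum(w.^2)/2 + C*sum(xi.^2));
    subject to
        labels.*(data*w+b) >= 1-xi;
        xi >= 0;
cvx_end
end
```

Pretty simple, right? I'll give an example later.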
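As a short worked derivation, assuming the squared-slack objective used in the code above: for fixed $(w, b)$ the smallest feasible slack is $\xi_i = \max\bigl(0,\ 1 - y_i(w^T x_i + b)\bigr)$, so the constrained problem is equivalent to an unconstrained squared-hinge objective. For this squared penalty the constraint $\xi_i \ge 0$ is actually redundant: a negative $\xi_i$ can be raised to $0$ without violating $y_i(w^T x_i + b) \ge 1 - \xi_i$, and doing so lowers $\xi_i^2$.

$$
\min_{w,b}\ \frac{1}{2}\|w\|_2^2 + C\sum_{i=1}^{L}\max\bigl(0,\ 1 - y_i(w^T x_i + b)\bigr)^2
$$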

To be continued.