1. One-hot encoding

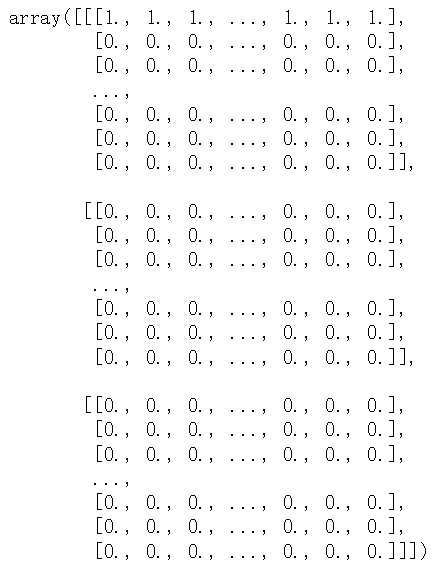

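```python
# Character-level one-hot encoding
import string

import numpy as np

samples = ['zzh is a pig', 'he loves himself very much', 'pig pig han']
characters = string.printable  # all printable ASCII characters

# Map each character to an integer index starting at 1 (index 0 is unused).
# The keys must be the characters, otherwise token_index.get(character) returns None.
token_index = dict(zip(characters, range(1, len(characters) + 1)))

max_length = 20  # only the first 20 characters of each sample are encoded
results = np.zeros((len(samples), max_length, max(token_index.values()) + 1))
for i, sample in enumerate(samples):
    # Truncate to max_length so that j never runs past the second axis
    for j, character in enumerate(sample[:max_length]):
        index = token_index.get(character)
        results[i, j, index] = 1
# results.shape --> (3, 20, 101)
```

For reference, the value of `characters` is:

```
'0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ!"#$%&\'()*+,-./:;<=>?@[\\]^_`{|}~ \t\n\r\x0b\x0c'
```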
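As a quick sanity check on the character-level encoding, each sample can be decoded back to text by reading off the position of the 1 at every time step. The following is only a minimal sketch: the helper names `encode_chars` and `decode_chars` are hypothetical (they do not appear in these notes), and it assumes the character-to-index mapping starts at 1 so that index 0 is never set.

```python
import string

import numpy as np

characters = string.printable
# Assumed mapping: character -> index, starting at 1 (index 0 is never set).
token_index = {ch: i for i, ch in enumerate(characters, start=1)}
index_token = {i: ch for ch, i in token_index.items()}


def encode_chars(samples, max_length=20):
    """One-hot encode the first max_length characters of each sample."""
    results = np.zeros((len(samples), max_length, len(characters) + 1))
    for i, sample in enumerate(samples):
        for j, ch in enumerate(sample[:max_length]):
            results[i, j, token_index[ch]] = 1
    return results


def decode_chars(encoded):
    """Invert encode_chars; all-zero rows (padding) are skipped."""
    texts = []
    for sample in encoded:
        chars = [index_token[int(row.argmax())] for row in sample if row.any()]
        texts.append(''.join(chars))
    return texts


samples = ['zzh is a pig', 'he loves himself very much', 'pig pig han']
encoded = encode_chars(samples)
decoded = decode_chars(encoded)
```

Samples longer than `max_length` come back truncated, which makes the information loss from the fixed-length tensor easy to see.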


```python
# Word-level one-hot encoding with Keras
from keras.preprocessing.text import Tokenizer

samples = ['zzh is a pig', 'he loves himself very much', 'pig pig han']

# Create a tokenizer configured to consider only the most common words (num_words=100)
tokenizer = Tokenizer(num_words=100)
tokenizer.fit_on_texts(samples)  # build the word index

# Turn each string into a list of integer word indices
sequences = tokenizer.texts_to_sequences(samples)

# Binary matrix representation; one_hot_results.shape --> (3, 100)
one_hot_results = tokenizer.texts_to_matrix(samples, mode='binary')

word_index = tokenizer.word_index  # mapping from word to index
print('Found %s unique tokens.' % len(word_index))
```

Output (truncated in the source):

```
sequences = [[2, 3, 4, 1], ...
Found 10 unique tokens.
{'pig': 1, 'zzh': 2, 'is': 3, 'a': 4, 'he': 5, ...
```
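To make clear what the binary matrix contains, the word-level encoding can be reproduced by hand with NumPy. This is a simplified sketch, not the Keras implementation: it assumes lowercase whitespace tokenization with no punctuation filtering, ranks words by frequency with ties broken by first appearance, and the names `build_word_index` and `to_binary_matrix` are made up for illustration.

```python
from collections import Counter

import numpy as np


def build_word_index(samples):
    """Rank words by frequency; index 1 is the most frequent word."""
    counts = Counter(word for s in samples for word in s.lower().split())
    # most_common() keeps first-seen order among words with equal counts.
    return {word: i for i, (word, _) in enumerate(counts.most_common(), start=1)}


def to_binary_matrix(samples, word_index, num_words=100):
    """Row i has a 1 in column k if the word with index k occurs in sample i."""
    matrix = np.zeros((len(samples), num_words))
    for i, sample in enumerate(samples):
        for word in sample.lower().split():
            index = word_index.get(word)
            # Skip unknown words and words whose index does not fit in num_words columns.
            if index is not None and index < num_words:
                matrix[i, index] = 1
    return matrix


samples = ['zzh is a pig', 'he loves himself very much', 'pig pig han']
word_index = build_word_index(samples)
sequences = [[word_index[w] for w in s.lower().split()] for s in samples]
one_hot_results = to_binary_matrix(samples, word_index)
```

A repeated word such as `pig` in the third sample still produces a single 1 in that row, so both word order and word counts are lost in this binary representation.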

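A variant of one-hot encoding is the one-hot hashing trick, which is useful when the number of unique tokens in the vocabulary is too large to handle directly. Instead of explicitly assigning an index to each word and keeping those indices in a dictionary, this approach hashes each word into a vector of fixed size, usually with a very simple hash function.

Advantage: it saves memory and allows online encoding of the data (token vectors can be generated right away, before all of the data has been read).

Drawback: hash collisions can occur, meaning that two different words may be mapped to the same index. When the dimensionality of the hashing space is much larger than the number of unique tokens being hashed, collisions become less likely.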


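```python
# Word-level one-hot encoding with the hashing trick
import numpy as np

samples = ['the cat sat on the mat the cat sat on the mat the cat sat on the mat',
           'the dog ate my homework']

dimensionality = 1000  # store words as vectors of size 1000
max_length = 10        # only the first 10 words of each sample are encoded

results = np.zeros((len(samples), max_length, dimensionality))
for i, sample in enumerate(samples):
    for j, word in list(enumerate(sample.split()))[:max_length]:
        # Hash the word into an index between 0 and dimensionality - 1.
        # Note: str hashes are salted per process in Python 3, so the index
        # of a given word can change between runs.
        index = abs(hash(word)) % dimensionality
        results[i, j, index] = 1
```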
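One practical caveat with the code above: Python's built-in `hash` is salted per process for strings, so the resulting indices can differ between runs unless `PYTHONHASHSEED` is fixed. One possible workaround, sketched here as an assumption rather than as part of the original notes, is to derive the index from a stable digest via `hashlib`. The helper names `stable_index` and `count_collisions` and the synthetic vocabulary are illustrative only. The sketch also counts collisions for a small and a large hashing space, to illustrate the remark that a larger space makes collisions less likely.

```python
import hashlib


def stable_index(word, dimensionality):
    """Map a word to a bucket in [0, dimensionality) using a SHA-256 digest."""
    digest = hashlib.sha256(word.encode('utf-8')).hexdigest()
    return int(digest, 16) % dimensionality


def count_collisions(words, dimensionality):
    """Number of unique words that share a bucket with an earlier word."""
    unique_words = set(words)
    buckets = {stable_index(w, dimensionality) for w in unique_words}
    return len(unique_words) - len(buckets)


# Synthetic vocabulary of 500 distinct tokens, for illustration only.
vocabulary = ['word%d' % i for i in range(500)]
small = count_collisions(vocabulary, 100)     # 500 words into 100 buckets
large = count_collisions(vocabulary, 100000)  # same words, far more buckets
```

With only 100 buckets at least 400 of the 500 words must collide, while the 100000-bucket space leaves far fewer collisions. The trade-off is that the encoded vectors become correspondingly larger.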


2. Word embeddings