Create a new file named httpd.conf in the /etc/logstash/conf.d/ directory:

root@b4bb675920c7:/# vi /etc/logstash/conf.d/httpd.conf

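    input {
      file {
        # Apache access log to read
        path => "/data/access_log"
        # On the first read, start at the beginning of the file instead of the end
        start_position => "beginning"
      }
    }

    filter {
      grok {
        # Split each log line into named fields (clientip, timestamp, verb, request, ...)
        match => { "message" => "%{IPORHOST:clientip} - - \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})\" %{NUMBER:response} (?:%{NUMBER:bytes}) %{QS:referrer} %{QS:agent}" }
      }
    }

    output {
      # Send the parsed events to Elasticsearch
      elasticsearch {
        hosts => ["localhost:9200"]
      }
      # Also print every event to the console for debugging
      stdout { codec => rubydebug }
    }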

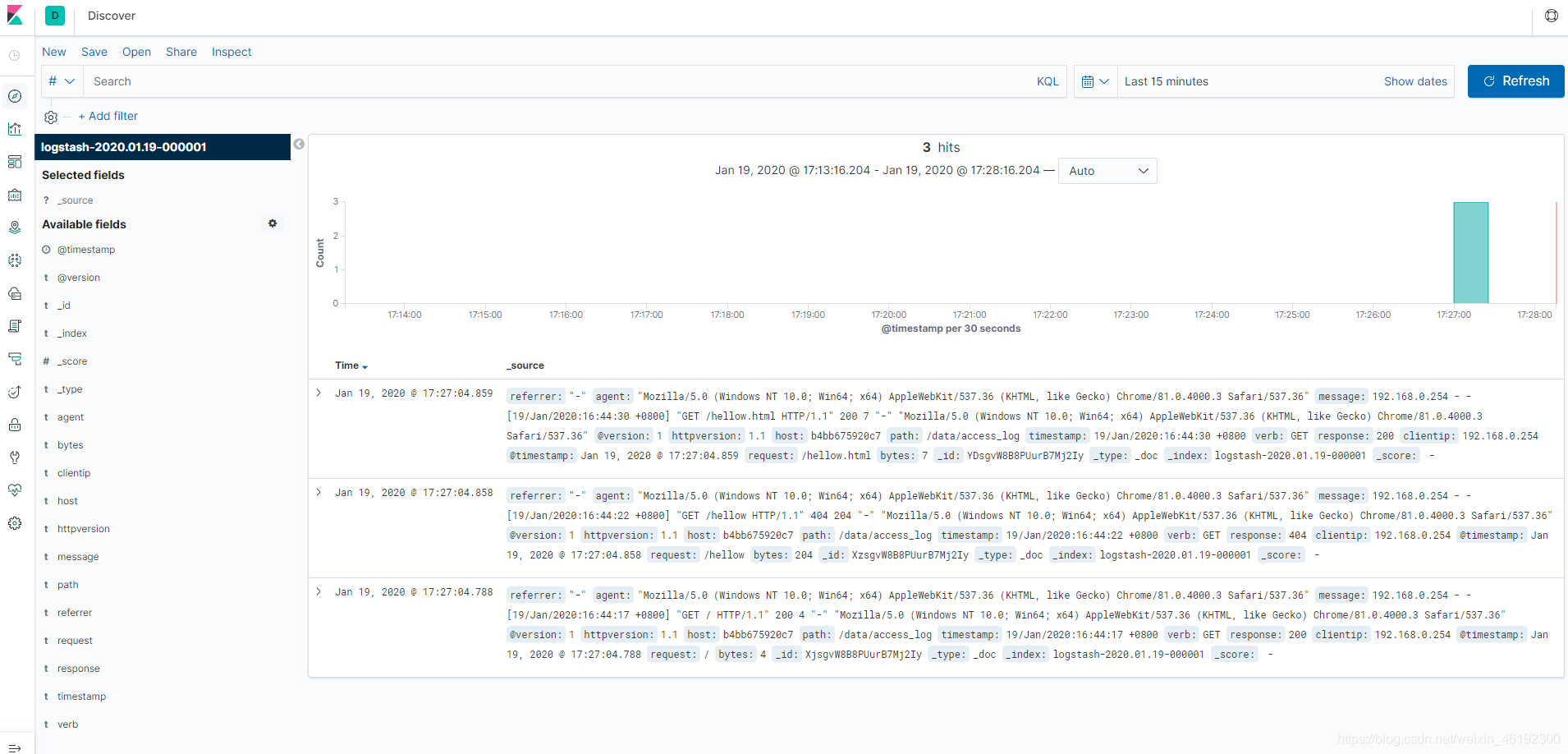
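To make the grok expression easier to read, here is a rough Python sketch of what it extracts from one log line. The regular expression is a hand-written approximation, not the actual grok pattern library: IPORHOST and HTTPDATE are simplified to "anything without spaces" and "anything up to the closing bracket". The sample log line and the name `parse_line` are made up for illustration.

```python
import re

# Simplified stand-in for the grok expression in httpd.conf.
# Assumption: looser sub-patterns than the real grok definitions.
APACHE_LINE = re.compile(
    r'(?P<clientip>\S+) - - '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?:(?P<verb>\w+) (?P<request>\S+)'
    r'(?: HTTP/(?P<httpversion>\d+(?:\.\d+)?))?|(?P<rawrequest>.*?))" '
    r'(?P<response>\d+) '
    r'(?P<bytes>\d+) '
    r'(?P<referrer>"(?:[^"\\]|\\.)*") '
    r'(?P<agent>"(?:[^"\\]|\\.)*")'
)


def parse_line(line):
    """Return the named fields of one access-log line, or None if it does not match."""
    match = APACHE_LINE.search(line)
    if match is None:
        return None
    # Like grok, leave out fields that did not take part in the match.
    return {name: value for name, value in match.groupdict().items() if value is not None}


# Hypothetical sample line in Apache combined log format.
sample = (
    '127.0.0.1 - - [10/Oct/2000:13:55:36 -0700] '
    '"GET /index.html HTTP/1.0" 200 2326 '
    '"http://www.example.com/start.html" "Mozilla/4.08 [en] (Win98; I ;Nav)"'
)
fields = parse_line(sample)
```

Here `fields` holds string values such as `clientip`, `verb`, `request`, `response`, and `bytes`; the quoted `referrer` and `agent` values keep their surrounding double quotes, as with `%{QS}`. Note that a line whose byte count is logged as `-` matches neither this sketch nor the grok expression above, because both require a number in that position.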

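Collecting the data source

The Apache access log is the data source. Logstash filters each entry and sends it to Elasticsearch for storage, and Kibana is then used to visualize and analyze the data.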

Receiving the data source

root@b4bb675920c7:/# /opt/logstash/bin/logstash --path.data /root/ -f /etc/logstash/conf.d/httpd.conf &

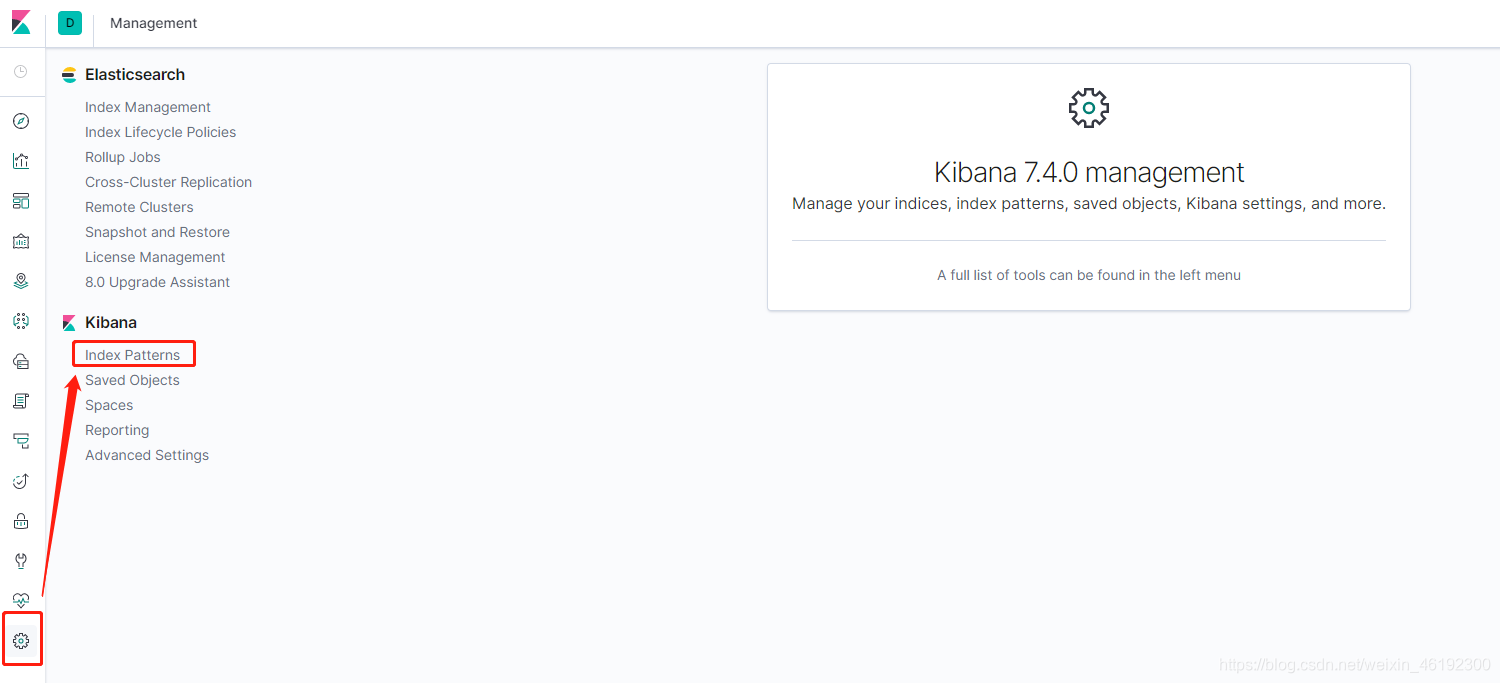
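Once Logstash is running, one way to confirm that events reached Elasticsearch before opening Kibana is to ask for a sample document. The sketch below is only an illustration under assumptions: it assumes the events land in indices matching `logstash-*` (the output section above does not set an index name, so this depends on the plugin's default in your version) and that Elasticsearch answers over plain HTTP without authentication. The function names are hypothetical.

```python
import json
import urllib.request


def build_search_request(host="localhost:9200", index_pattern="logstash-*", size=1):
    """Build a _search request that returns up to `size` arbitrary documents."""
    url = f"http://{host}/{index_pattern}/_search"
    body = json.dumps({"size": size, "query": {"match_all": {}}}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_sample_documents(host="localhost:9200"):
    """Send the request and return the stored events (the _source of each hit)."""
    with urllib.request.urlopen(build_search_request(host), timeout=10) as resp:
        result = json.load(resp)
    return [hit["_source"] for hit in result["hits"]["hits"]]
```

Calling `fetch_sample_documents()` on the Elasticsearch host should return a list of events carrying the grok fields if the pipeline is working. An empty list or an HTTP error suggests that nothing has been indexed yet or that the index name differs from the assumed one.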

Open http://ip:5601 in a browser, where ip is the address of the host running Kibana.

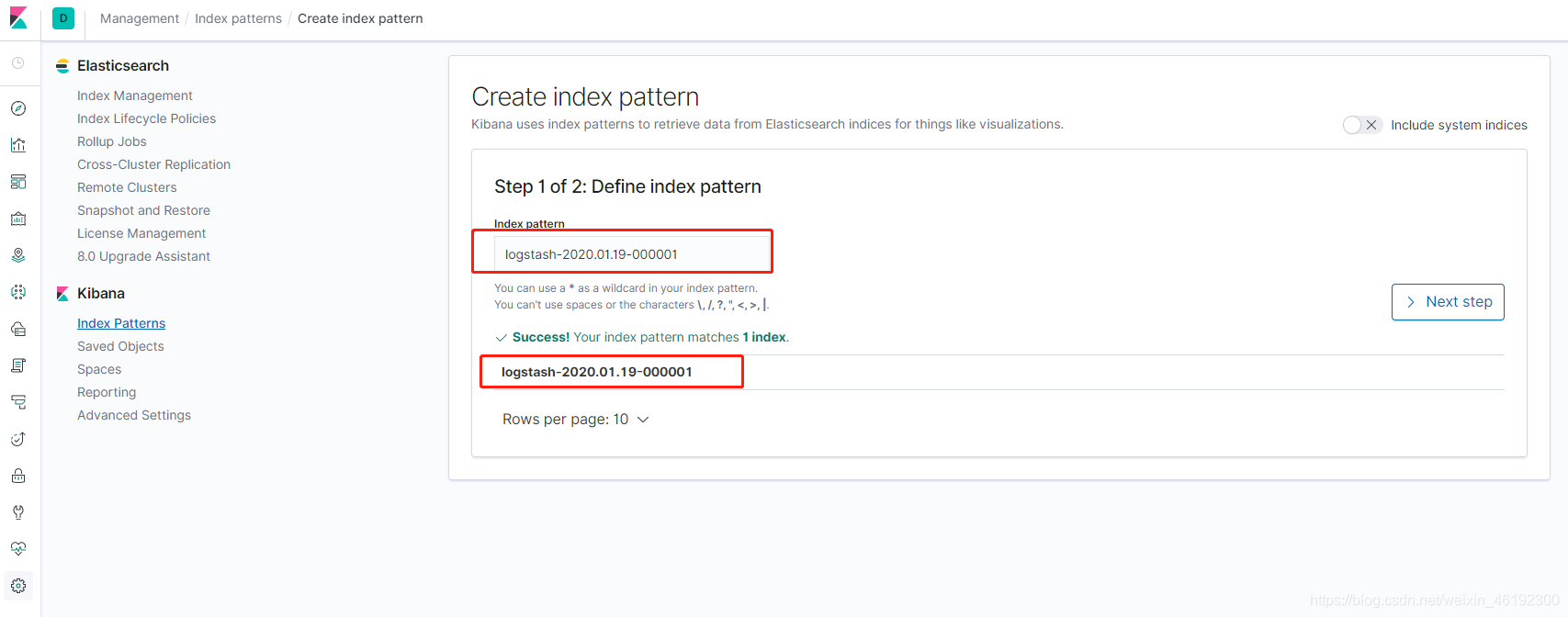

Add the index